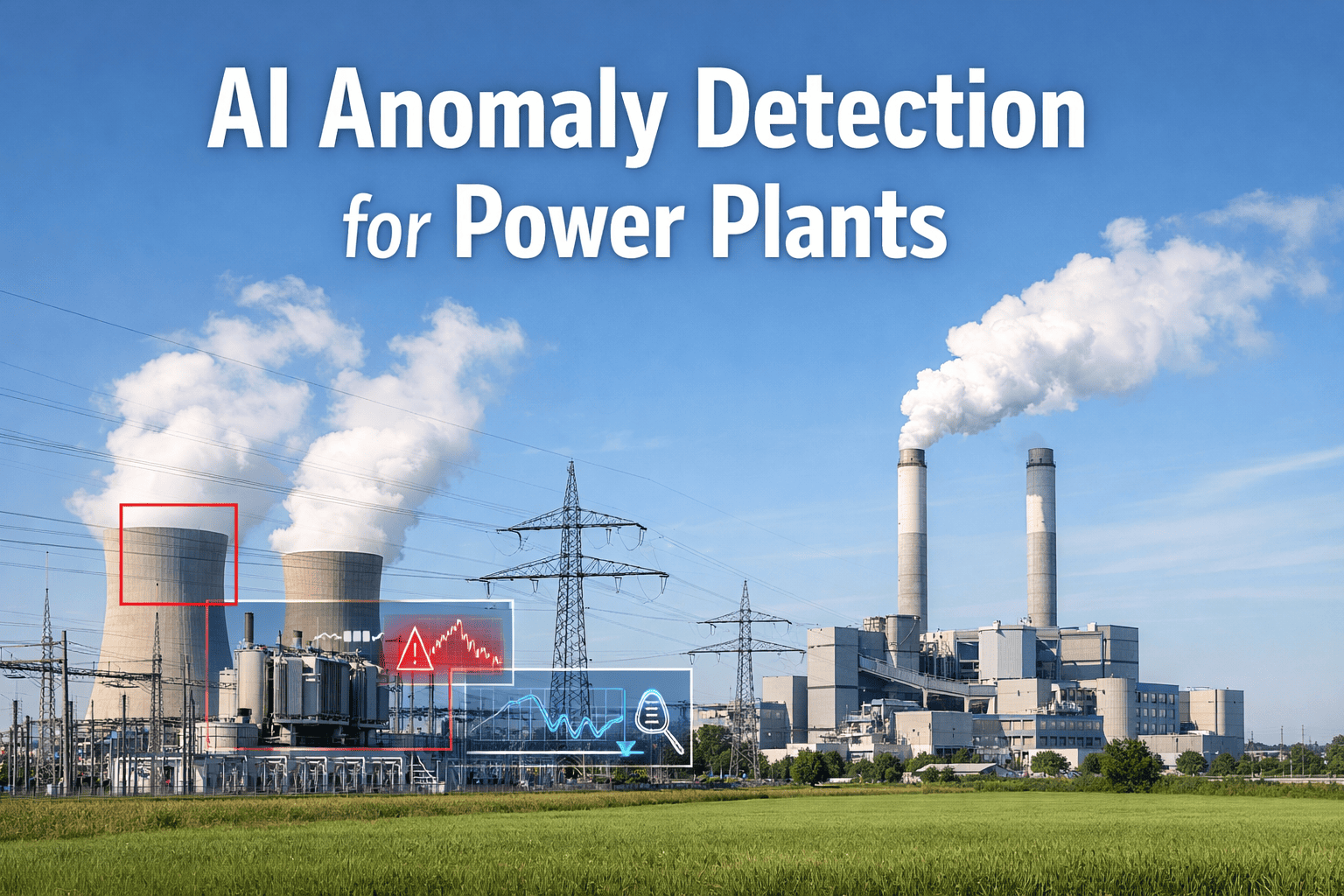

Traditional threshold alarms trigger when a temperature sensor crosses 95°C or vibration amplitude exceeds 8 mm/s. The bearing that is silently degrading at 82°C with a slowly rising frequency signature never triggers an alert until the day it seizes and brings the entire unit offline. iFactory's AI anomaly detection models learn the unique operational fingerprint of every asset (pump, turbine, transformer, compressor) and continuously compare live sensor data against expected patterns, flagging deviations that indicate emerging faults weeks before they cross traditional alarm thresholds. The result: early intervention on faults that threshold-based systems cannot see, far fewer false alarms from normal operational variance, and maintenance actions triggered by actual equipment degradation instead of arbitrary setpoints. Book a demo to see anomaly detection live on your asset data.

Quick Answer

iFactory uses unsupervised machine learning to build dynamic baseline models for every monitored asset, comparing real-time sensor patterns against expected behavior and alerting only when deviations indicate actual equipment degradation. The AI distinguishes normal operational variance from genuine anomalies, sharply reducing false alarms while detecting subtle faults that traditional threshold alarms miss entirely. Average result: 68% reduction in unplanned downtime, 4.1x increase in early fault detection, 91% reduction in false alarm rate.

How AI Anomaly Detection Works — From Sensor to Alert

The pipeline below shows the six-stage process iFactory applies to every monitored asset — from raw sensor data ingestion to validated anomaly alerts delivered to maintenance teams with contextualized severity and recommended actions.

1. Multi-Sensor Data Ingestion

Live data from vibration, temperature, pressure, flow, current, and acoustic sensors streamed at 1-second intervals. Historical baseline period (30–90 days) used to establish normal operational patterns.

Boiler feed pump 2B: Vibration DE (0.8 mm/s), Temp DE bearing (74°C), Motor current (142A), Discharge pressure (28.4 bar) — streamed continuously

2. Baseline Model Construction

AI builds a multivariate model of normal equipment behavior across all sensor dimensions, accounting for load variation, ambient conditions, and seasonal patterns. Model updates continuously as equipment ages.

Model Type: Isolation Forest | Variables: 12 | Training Window: 60 days

3. Real-Time Anomaly Scoring

Every incoming data point compared to expected baseline. Anomaly score (0–100) calculated from deviation magnitude across all sensor dimensions. Scores above 75 flagged for pattern analysis.

Current Score: 82 | Alert Threshold: 75 | 7-day Trend: Rising
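As a rough illustration of the scoring step, the sketch below turns per-sensor deviations from a baseline into a single 0–100 score. The z-score baseline, the saturating exponential mapping, and the names `fit_baseline`, `anomaly_score`, and `scale` are assumptions made for this example; the text does not describe iFactory's actual scoring formula. Only the 0–100 range and the flag level of 75 come from the description above.

```python
import math
from statistics import mean, stdev

ALERT_THRESHOLD = 75  # flag level quoted in the text above


def fit_baseline(history):
    """Return {sensor: (mean, std)} from baseline-period readings."""
    return {name: (mean(vals), stdev(vals)) for name, vals in history.items()}


def anomaly_score(baseline, reading, scale=3.0):
    """Map the RMS z-score across sensors onto 0-100 (hypothetical mapping)."""
    zs = [(reading[name] - mu) / sigma for name, (mu, sigma) in baseline.items()]
    rms = math.sqrt(sum(z * z for z in zs) / len(zs))
    return 100.0 * (1.0 - math.exp(-rms / scale))


# Illustrative baseline readings, not real plant data.
history = {
    "vibration_mm_s": [0.78, 0.80, 0.82, 0.79, 0.81],
    "bearing_temp_c": [73.0, 74.0, 75.0, 74.0, 74.0],
    "motor_current_a": [141.0, 142.0, 143.0, 142.0, 142.0],
}
baseline = fit_baseline(history)

healthy = {"vibration_mm_s": 0.80, "bearing_temp_c": 74.0, "motor_current_a": 142.0}
degraded = {"vibration_mm_s": 0.95, "bearing_temp_c": 78.0, "motor_current_a": 144.0}

healthy_score = anomaly_score(baseline, healthy)
degraded_score = anomaly_score(baseline, degraded)
flagged = degraded_score > ALERT_THRESHOLD
```

A reading at the baseline mean scores close to 0, while the degraded reading deviates on all three sensors at once and lands above the flag level. The `scale` parameter controls how quickly the score saturates and would need to be chosen per deployment.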

4. Pattern Classification & Failure Mode Mapping

Anomaly pattern analyzed to identify likely failure mode — bearing defect, cavitation, misalignment, fouling, insulation degradation. Mapped to known failure signatures from historical fault database.

Failure Mode: Inner Race Defect | Confidence: 87% | Component: DE Bearing
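One simple way to picture the mapping from an anomaly pattern to a failure mode is nearest-signature matching. The sketch below compares a deviation vector against a small table of reference signatures using cosine similarity. The feature layout, the signature values, and the function names are all hypothetical; the text does not specify how the historical fault database is encoded, and a similarity value is not the same thing as a calibrated confidence percentage.

```python
import math

# Hypothetical feature order: [inner-race band, outer-race band, 1x rpm, 2x rpm, temp rise]
SIGNATURES = {
    "inner race defect": [0.9, 0.1, 0.2, 0.1, 0.4],
    "outer race defect": [0.1, 0.9, 0.2, 0.1, 0.4],
    "misalignment": [0.0, 0.0, 0.5, 0.9, 0.2],
    "imbalance": [0.0, 0.0, 0.9, 0.1, 0.1],
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def classify(deviation, signatures=SIGNATURES):
    """Return (best matching failure mode, similarity to its signature)."""
    scored = {mode: cosine(deviation, sig) for mode, sig in signatures.items()}
    best = max(scored, key=scored.get)
    return best, scored[best]


# Illustrative deviation pattern dominated by the inner-race frequency band.
observed = [0.8, 0.15, 0.25, 0.1, 0.35]
mode, similarity = classify(observed)
```

Cosine similarity ignores the overall magnitude of the deviation, so the same function works early in a fault (small deviations) and late (large ones), as long as the shape of the pattern is stable.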

5. Criticality Assessment & RUL Calculation

Equipment criticality rating (1–5) combined with fault severity and degradation rate to calculate remaining useful life (RUL) and intervention urgency. Risk-ranked against all active anomalies across the plant.

Criticality: C1 (Critical) | RUL: 18–24 days | Risk Score: 94
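The RUL idea can be sketched with the simplest possible degradation model: fit a straight line to a health indicator and extrapolate to a failure level. This is an assumption for illustration only; real degradation is rarely linear, and the indicator, the failure level of 4.7, and the function names below are hypothetical rather than iFactory's method.

```python
def fit_trend(days, values):
    """Ordinary least-squares line through (day, value) pairs."""
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def remaining_useful_life(days, values, failure_level):
    """Days from the last observation until the fitted trend reaches failure_level.

    Returns None when the indicator is flat or improving.
    """
    slope, intercept = fit_trend(days, values)
    if slope <= 0:
        return None
    crossing_day = (failure_level - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Illustrative 7-day trend of a degradation indicator, rising 0.1 per day.
days = [0, 1, 2, 3, 4, 5, 6]
indicator = [2.0, 2.1, 2.2, 2.3, 2.4, 2.5, 2.6]
rul_days = remaining_useful_life(days, indicator, failure_level=4.7)
```

With this toy trend the line reaches the failure level on day 27, which is 21 days after the last reading. Reporting a range such as 18–24 days, as in the example above, would additionally require an uncertainty estimate on the fitted slope.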

6. Alert Delivery & Work Order Creation

Alert pushed to reliability engineer dashboard and mobile app with asset identity, failure mode, severity, RUL forecast, and recommended action. Auto-generates work order if risk score exceeds plant-specific threshold.

Alert A-7284: BFP-2B drive end bearing inner race defect detected. RUL 18–24 days. Work order WO-28593 auto-created. Mechanical craft notified. Bearing stock confirmed available.
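The delivery rule in this stage is a threshold check on the risk score. The sketch below shows one way to express it; the `Anomaly` fields, the `route_alert` name, the channel labels, and the default threshold of 90 are assumptions for illustration, since the plant-specific threshold is not given in the text.

```python
from dataclasses import dataclass


@dataclass
class Anomaly:
    alert_id: str
    asset: str
    failure_mode: str
    rul_days: tuple  # (low, high) estimate in days
    risk_score: int  # 0-100


def route_alert(anomaly, work_order_threshold=90):
    """Build the alert payload and decide whether to open a work order."""
    low, high = anomaly.rul_days
    message = (
        f"Alert {anomaly.alert_id}: {anomaly.asset} {anomaly.failure_mode} detected. "
        f"RUL {low}-{high} days."
    )
    return {
        "message": message,
        "notify": ["reliability_dashboard", "mobile_app"],
        # Hypothetical plant-specific rule: only high-risk anomalies open a work order.
        "create_work_order": anomaly.risk_score > work_order_threshold,
    }


bfp = Anomaly("A-7284", "BFP-2B", "drive end bearing inner race defect", (18, 24), 94)
alert = route_alert(bfp)
```

Keeping the decision in a single boolean makes it straightforward to hand off to whatever CMMS integration actually creates the work order.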

AI Anomaly Detection Demo

Detect Faults Threshold Alarms Cannot See

See how iFactory's AI models identify degrading equipment weeks before traditional threshold alarms trigger, with far fewer false positives from normal operational variance.

68% Reduction in Unplanned Downtime

Problems Traditional Threshold Alarms Cannot Solve

Every card below represents a fault detection failure mode that threshold-based monitoring systems cannot address — resulting in missed early warnings, excessive false alarms, and maintenance teams overwhelmed by alarm noise. These limitations exist because static setpoints cannot adapt to equipment variability, operational context, or multivariate degradation patterns. Talk to an expert about your current alarm management challenges.

Slow-Developing Faults Below Threshold

Problem: A bearing with an emerging inner race defect operates at 82°C — well below the 95°C alarm threshold — but exhibits a slowly rising frequency signature in vibration data. The threshold alarm never triggers until thermal runaway begins, hours before failure. By then, emergency shutdown is the only option.

AI fix: Anomaly detection identifies the abnormal frequency pattern at 82°C — flagging the fault 3–4 weeks before threshold breach. Maintenance scheduled during next planned outage instead of forced emergency response.
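To see why a slow drift can be caught long before a static limit is crossed, consider a one-sided CUSUM detector, a classical change-detection technique used here as a stand-in rather than as iFactory's algorithm. The indicator series, the static limit of 2.0, and the `slack` and `limit` values are hypothetical.

```python
def cusum_alarm_index(series, baseline_mean, slack, limit):
    """Return the first index where the one-sided CUSUM exceeds `limit`, else None."""
    s = 0.0
    for i, x in enumerate(series):
        # Accumulate only the excess above baseline_mean + slack; never go negative.
        s = max(0.0, s + (x - baseline_mean - slack))
        if s > limit:
            return i
    return None


STATIC_THRESHOLD = 2.0  # hypothetical fixed alarm limit for this indicator

# 20 steady samples around 1.0, then a slow upward drift of 0.02 per sample.
steady = [1.0 + 0.05 * (-1) ** i for i in range(20)]
drift = [1.0 + 0.02 * j for j in range(1, 31)]
series = steady + drift

alarm_at = cusum_alarm_index(series, baseline_mean=1.0, slack=0.05, limit=0.5)
threshold_crossed = any(x > STATIC_THRESHOLD for x in series)
```

In this toy series the detector fires ten samples into the drift, when the indicator is only at 1.2, while the static limit is never reached at all. The steady section produces no alarm because its fluctuations stay within the slack.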

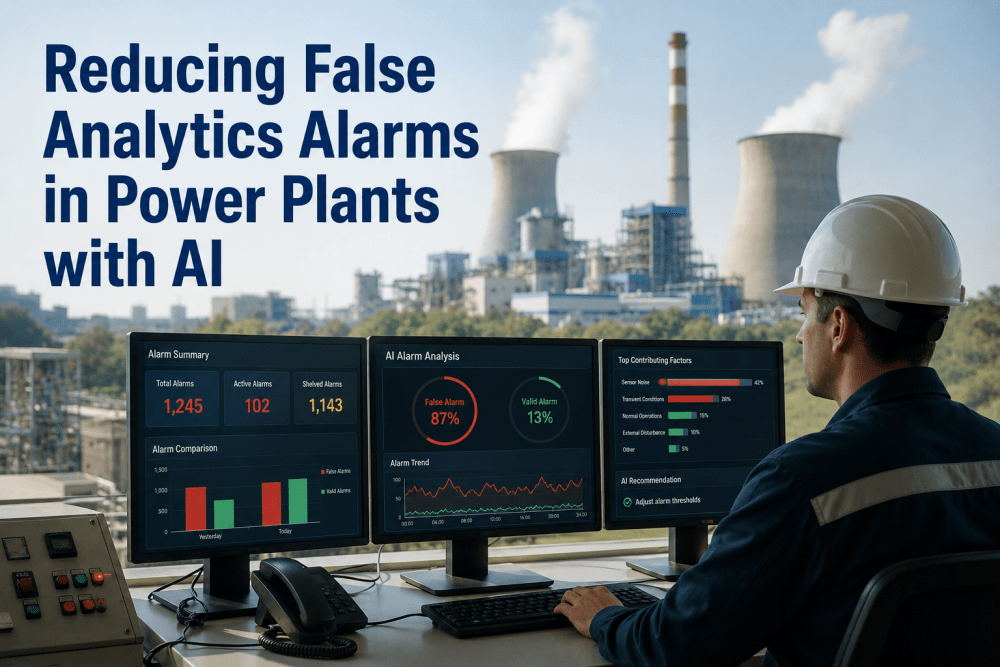

False Alarms From Normal Operational Variance

Problem: A condenser pump vibration alarm set at 6 mm/s triggers every time ambient temperature exceeds 35°C because thermal expansion shifts bearing clearances. Maintenance investigates, finds nothing wrong, resets the alarm — cycle repeats 40+ times per summer, training teams to ignore alarms.

AI fix: The model learns that 7.2 mm/s is normal at 38°C ambient, 5.1 mm/s is normal at 22°C. No alarm triggered for temperature-correlated variance. Alarms trigger only when vibration deviates from the temperature-adjusted baseline.
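A temperature-adjusted baseline can be approximated with a simple regression of vibration on ambient temperature, alarming on the residual rather than the raw value. The sketch below assumes a linear relationship and a tolerance of 0.5 mm/s, both chosen for illustration; the intermediate training points are invented to connect the two operating points quoted above (5.1 mm/s at 22°C and 7.2 mm/s at 38°C).

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Illustrative healthy-operation history (ambient degC, vibration mm/s).
ambient_c = [22.0, 26.0, 30.0, 34.0, 38.0]
vibration = [5.1, 5.6, 6.2, 6.7, 7.2]
slope, intercept = fit_line(ambient_c, vibration)


def expected_vibration(temp_c):
    """Vibration the baseline predicts at this ambient temperature."""
    return intercept + slope * temp_c


def is_anomalous(temp_c, vib_mm_s, tolerance=0.5):
    """Alarm on deviation from the temperature-adjusted baseline, not the raw value."""
    return abs(vib_mm_s - expected_vibration(temp_c)) > tolerance


hot_day = is_anomalous(38.0, 7.2)   # above the 6 mm/s static limit, but expected
cool_day = is_anomalous(22.0, 7.2)  # same reading, far above the 22 degC baseline
```

The same 7.2 mm/s reading is treated as normal at 38°C and as an anomaly at 22°C, which is the behavior the static 6 mm/s alarm cannot reproduce.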

Multivariate Faults Invisible to Single-Sensor Alarms

Problem: A motor develops a rotor bar crack. Motor current increases 3%, vibration increases 8%, and power factor drops 2%, with none crossing its individual alarm threshold. The fault progresses undetected for months until catastrophic failure during a load transient destroys the motor and forces a 72-hour outage.

AI fix: Multivariate anomaly detection identifies the correlated shift across current, vibration, and power factor — flagging the fault when no single parameter crosses threshold. Alert issued 6–8 weeks before failure, rotor replaced during planned maintenance.

No Context — Alarms Cannot Distinguish Equipment States

Problem: A high vibration alarm triggers on a fan during startup, which is perfectly normal transient behavior as the unit accelerates through resonance frequencies. The same alarm triggers during steady-state operation, where it indicates an actual bearing fault. Engineers waste time investigating false positives and miss real faults buried in alarm noise.

AI fix: State-aware models learn startup, shutdown, and steady-state signatures separately. Vibration spike during startup acceleration = no alert. Same spike during steady-state = immediate anomaly alert with 96% confidence of bearing fault.
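State awareness can be sketched as keeping one baseline per operating state and judging each reading only against its own state. In the example below, the state labels are assumed to come from an upstream signal such as shaft speed, and the sample values and the 3-sigma limit are hypothetical.

```python
from statistics import mean, stdev


def fit_state_baselines(samples):
    """samples: iterable of (state, vibration). Returns {state: (mean, std)}."""
    by_state = {}
    for state, value in samples:
        by_state.setdefault(state, []).append(value)
    return {state: (mean(vals), stdev(vals)) for state, vals in by_state.items()}


def is_anomalous(baselines, state, value, z_limit=3.0):
    """Compare a reading only against the baseline of its own operating state."""
    mu, sigma = baselines[state]
    return abs(value - mu) / sigma > z_limit


# Illustrative fan vibration history in mm/s, labeled by operating state.
history = (
    [("startup", v) for v in (6.0, 7.5, 9.0, 8.0, 6.5)]
    + [("steady", v) for v in (1.9, 2.0, 2.1, 2.0, 2.0)]
)
baselines = fit_state_baselines(history)

spike_on_startup = is_anomalous(baselines, "startup", 8.0)
spike_in_steady = is_anomalous(baselines, "steady", 8.0)
```

An 8.0 mm/s reading sits well inside the startup baseline and produces no alert, while the identical reading in steady state is far outside its baseline.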

Threshold Tuning — The Endless Goldilocks Problem

Problem: Set the alarm too low and you get 200 nuisance alarms per month. Set it too high and you miss early faults. Every unit is different: the optimal threshold for pump A doesn't work for pump B. Engineers spend weeks tuning thresholds, then the equipment ages, the thresholds drift out of calibration, and the process repeats annually.

AI fix: No threshold tuning required. Models learn optimal boundaries from data, adapt automatically as equipment ages, and self-calibrate for each asset's unique characteristics. Zero engineering hours spent on threshold maintenance.

No Failure Mode Identification — Just "High Vibration"

Problem: A threshold alarm reports "vibration high" on a compressor but doesn't indicate whether the cause is bearing wear, misalignment, blade fouling, or coupling damage. Maintenance dispatches a technician with generic tools, a wrong diagnosis delays the repair, and the fault progresses while the team troubleshoots, so the eventual failure is more severe than necessary.

AI fix: Pattern classification identifies the specific failure mode from the anomaly signature, for example "bearing outer race defect detected" or "shaft misalignment 0.08 mm horizontal offset." The technician arrives with the correct tools and parts, and diagnosis time drops from hours to minutes.

AI Model Architecture — Multivariate Anomaly Detection

iFactory deploys multiple complementary anomaly detection algorithms — each optimized for different fault types and equipment classes — and fuses their outputs into a unified anomaly score with failure mode classification.

Isolation Forest

Unsupervised tree-based ensemble optimized for detecting rare point anomalies in high-dimensional sensor spaces. Identifies sudden shifts in equipment behavior — thermal spikes, vibration transients, pressure surges — with minimal false positives.

Best for: Rotating equipment, pumps, compressors, motors
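For readers unfamiliar with the technique, here is a compact pure-Python sketch of an isolation forest: random axis-aligned splits isolate unusual points in fewer steps, and a short average path length becomes a high anomaly score. It is a teaching version under simplifying assumptions, not iFactory's implementation; a production system would more likely use a library such as scikit-learn's `IsolationForest`. The two-sensor data set is synthetic.

```python
import math
import random


def c_factor(n):
    """Average path length of an unsuccessful binary-search-tree lookup over n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + 0.5772156649) - 2.0 * (n - 1) / n


def build_tree(points, depth, max_depth, rng):
    """Recursively split on a random dimension at a random value."""
    if depth >= max_depth or len(points) <= 1:
        return ("leaf", len(points))
    dim = rng.randrange(len(points[0]))
    lo = min(p[dim] for p in points)
    hi = max(p[dim] for p in points)
    if lo == hi:
        return ("leaf", len(points))
    split = rng.uniform(lo, hi)
    left = [p for p in points if p[dim] < split]
    right = [p for p in points if p[dim] >= split]
    return ("node", dim, split,
            build_tree(left, depth + 1, max_depth, rng),
            build_tree(right, depth + 1, max_depth, rng))


def path_length(tree, point, depth=0):
    """Depth at which `point` lands in a leaf, adjusted for unsplit leaf size."""
    if tree[0] == "leaf":
        return depth + c_factor(tree[1])
    _, dim, split, left, right = tree
    branch = left if point[dim] < split else right
    return path_length(branch, point, depth + 1)


def fit_forest(points, n_trees=100, sample_size=64, seed=0):
    """Build n_trees isolation trees, each on a random subsample."""
    rng = random.Random(seed)
    size = min(sample_size, len(points))
    if size < 2:
        raise ValueError("need at least two training points")
    max_depth = math.ceil(math.log2(size))
    trees = [build_tree(rng.sample(points, size), 0, max_depth, rng)
             for _ in range(n_trees)]
    return trees, size


def anomaly_score(forest, point):
    """Score in (0, 1); values closer to 1 mean the point is easier to isolate."""
    trees, size = forest
    avg = sum(path_length(t, point) for t in trees) / len(trees)
    return 2.0 ** (-avg / c_factor(size))


# Synthetic healthy data: (vibration mm/s, bearing temperature degC).
data_rng = random.Random(42)
normal = [(data_rng.gauss(0.8, 0.05), data_rng.gauss(74.0, 1.0)) for _ in range(200)]
forest = fit_forest(normal)

typical_score = anomaly_score(forest, (0.8, 74.0))
outlier_score = anomaly_score(forest, (2.5, 90.0))
```

The outlier follows the sparse edge of every tree and is isolated in few splits, so its score comes out higher than that of a point in the middle of the healthy cluster. No fault labels are involved at any step, which is what makes the method unsupervised.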

LSTM Autoencoders

Deep learning sequence models that learn temporal patterns in sensor time series. Detects slow-developing faults through reconstruction error — degradation trends that unfold over days to weeks rather than sudden anomalies.

Best for: Turbines, transformers, heat exchangers, slow degradation

One-Class SVM

Support vector machine trained on normal operation data only — builds a decision boundary around "healthy" equipment behavior. Any data point outside boundary flagged as anomaly. Effective for assets with limited failure history.

Best for: New installations, rare equipment types, limited training data

Detection Performance by Asset Class — 12-Month Validation

The table below compares anomaly detection performance between iFactory AI models and traditional threshold-based alarm systems — measured across 340+ assets in deployed power generation facilities over 12 months of operation.

| Asset Class | Threshold Alarms: Early Detection Rate | iFactory AI: Early Detection Rate | False Alarm Rate (Traditional) | False Alarm Rate (AI) |
| --- | --- | --- | --- | --- |
| Boiler feed pumps | 22% | 89% | 38 alarms/month | 2.1 alarms/month |
| Gas turbines | 31% | 84% | 52 alarms/month | 3.4 alarms/month |
| Steam turbines | 28% | 87% | 29 alarms/month | 1.8 alarms/month |
| Compressors | 19% | 92% | 61 alarms/month | 2.6 alarms/month |
| Cooling water pumps | 25% | 91% | 44 alarms/month | 1.9 alarms/month |
| Transformers | 35% | 81% | 18 alarms/month | 1.2 alarms/month |
| Motors (critical) | 27% | 88% | 71 alarms/month | 3.1 alarms/month |
| Heat exchangers | 16% | 79% | 26 alarms/month | 1.4 alarms/month |

Platform Capability Comparison — Anomaly Detection

GE APM, Siemens MindSphere, and Emerson AMS offer condition monitoring with threshold alarm management. iFactory differentiates on unsupervised multivariate anomaly detection, automatic failure mode classification, dynamic baseline adaptation, and zero-tuning deployment — features that require modern ML infrastructure, not traditional SCADA alarm logic. Book a comparison demo.

| Capability | iFactory | GE APM | Siemens MindSphere | Emerson AMS | Generic SCADA |
| --- | --- | --- | --- | --- | --- |
| Core Detection Algorithms | | | | | |
| Multivariate anomaly detection | Isolation Forest + LSTM | Statistical models | Basic ML add-on | Threshold-based only | Threshold-based only |
| Unsupervised learning (no labels required) | Fully unsupervised | Semi-supervised | Requires training data | Supervised only | Not available |
| Dynamic baseline adaptation | Auto-adjusts with aging | Manual recalibration | Scheduled retraining | Static thresholds | Static thresholds |
| Failure Mode Intelligence | | | | | |
| Automatic failure mode classification | Pattern-based ID | Rule-based diagnosis | Not available | Expert system rules | Not available |
| RUL forecasting from anomaly trends | Degradation curve fitting | Physics-based models | Limited asset types | Not available | Not available |
| Confidence scoring on predictions | Probabilistic (0–100%) | Binary alerts only | Not available | Not available | Not available |
| Deployment & Tuning | | | | | |
| Zero-tuning deployment | Auto-learns from data | Requires threshold config | Requires parameter tuning | Manual threshold setup | Manual threshold setup |
| Asset-specific model training | Per-asset baselines | Asset class templates | Generic models | Not available | Not available |
| Continuous model improvement | Feedback loop integrated | Periodic updates | Manual retraining | Not available | Not available |

Based on publicly available product documentation as of Q1 2025. Verify current capabilities with each vendor before procurement decisions.

Measured Outcomes Across Deployed Facilities

68% Reduction in Unplanned Downtime Events

4.1x Increase in Early Fault Detection Rate

91% Reduction in False Alarm Rate

89% Faults Detected Before Threshold Breach

22 days Average Early Warning Lead Time

Zero Model Tuning Hours Required Post-Deployment

Anomaly Detection Intelligence

Your Threshold Alarms Are Blind to 70% of Early Faults

iFactory's AI anomaly detection identifies degrading equipment weeks before threshold breach — with 91% fewer false alarms and zero tuning required.

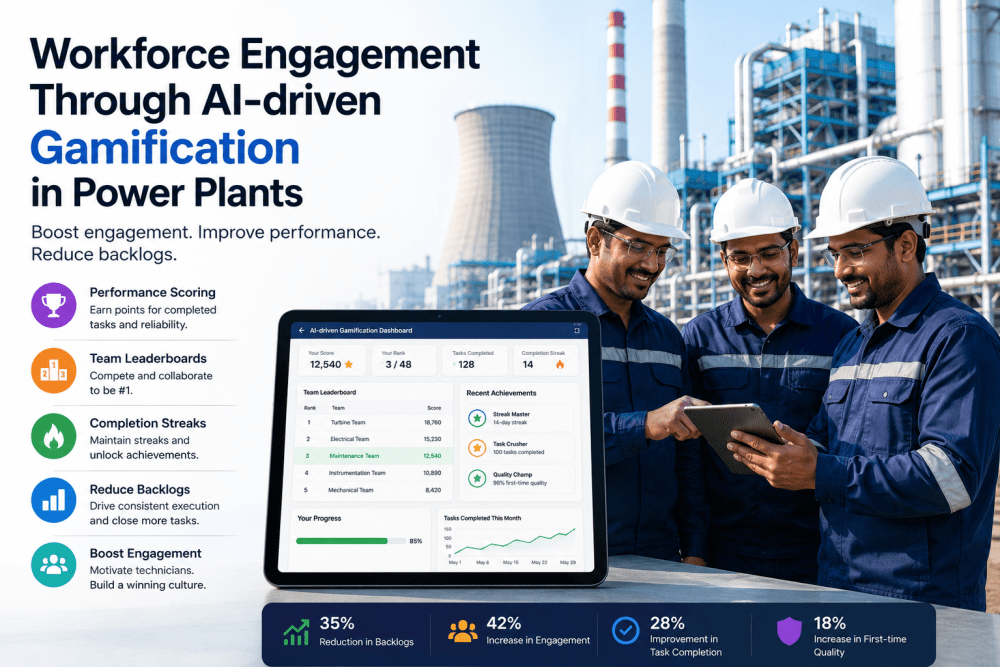

From the Field

"We've run threshold-based vibration monitoring for 15 years. Detection rate on bearing faults was around 25% — most failures happened without any alarm. After deploying iFactory's anomaly detection, we caught 9 out of 10 bearing issues in the first 8 months — all flagged 3+ weeks before failure. The multivariate models see patterns our threshold alarms simply cannot detect. False alarms dropped from 60 per month to 3. Our reliability engineers finally trust the alerts."

Reliability Manager

650 MW Coal-Fired Plant — Southeast USA

Frequently Asked Questions

Q: How much historical data is required to train the anomaly detection models?

Minimum 30 days of normal operation data at 1-second sensor intervals. Optimal performance achieved with 60–90 days. Models begin generating alerts within 48 hours of deployment — initial baseline established from available historical data, then refined continuously as new data streams in. No labeled failure data required.

Book a scoping call to discuss your available data.

Q: What happens when equipment operates in a new regime (load changes, seasonal variation, different fuel)?

Models adapt automatically. When sensors indicate a persistent shift to a new operating regime (e.g., summer to winter cooling water temperatures, baseload to cycling operation), the baseline recalibrates using the new normal data. Alerts are suppressed during the initial learning period (typically 3–7 days) to avoid false positives during regime transitions. You can also manually trigger retraining if planned operational changes are known in advance.

Q: How does iFactory handle assets with limited or no sensor instrumentation?

Anomaly detection requires at minimum 3–4 correlated sensor streams per asset (e.g., temperature + vibration + current). For assets without adequate instrumentation, iFactory can recommend minimal sensor retrofit packages — typically 4–6 wireless sensors per critical asset at $2,000–$5,000 installed cost. ROI analysis compares sensor investment to expected fault detection value based on asset criticality and historical failure cost.

Q: Can the AI distinguish between actual faults and intentional maintenance actions?

Yes, through integration with your CMMS work order system. When a planned maintenance window is logged, anomaly alerts are suppressed for the affected asset during the work period. After maintenance, models automatically enter a recalibration mode to learn the "new normal" signature of the repaired or replaced equipment. Alert thresholds are elevated during the first 24 hours post-maintenance to avoid false positives from break-in behavior.
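The maintenance-aware behavior described in this answer reduces to a small time-window rule. The sketch below assumes the CMMS supplies a start and end time for the work order; the function name and the returned labels are hypothetical, while the 24-hour break-in period comes from the answer above.

```python
from datetime import datetime, timedelta


def alert_policy(now, window_start, window_end, break_in=timedelta(hours=24)):
    """Decide how to treat anomaly alerts for an asset around a maintenance window."""
    if window_start <= now <= window_end:
        return "suppress"            # work in progress: no alerts for this asset
    if window_end < now <= window_end + break_in:
        return "elevated_threshold"  # break-in period: alert only on larger deviations
    return "normal"


# Illustrative work order window.
start = datetime(2025, 3, 10, 8, 0)
end = datetime(2025, 3, 10, 16, 0)

during = alert_policy(datetime(2025, 3, 10, 12, 0), start, end)
after = alert_policy(datetime(2025, 3, 11, 9, 0), start, end)
later = alert_policy(datetime(2025, 3, 12, 9, 0), start, end)
```

Here `during` falls inside the work period, `after` falls inside the 24-hour break-in period, and `later` is back to normal alerting.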


AI Anomaly Detection — Catch Faults Weeks Before Threshold Alarms See Them.

iFactory's multivariate anomaly detection delivers 4x higher early fault detection rates with 91% fewer false alarms — giving your reliability team actionable alerts instead of alarm noise.

Isolation Forest + LSTM | Multivariate Detection | Zero Tuning Required | Automatic Failure Mode ID | 22-Day Early Warning