Every cloud-based analytics platform for power generation carries three architectural risks that regulated operators cannot accept: sensor-to-alert latency measured in seconds while bearing failures develop in milliseconds; data egress that violates NERC CIP Electronic Security Perimeter requirements the moment it leaves the plant boundary; and internet dependency that creates a single point of failure for operational intelligence during grid events, when that intelligence is needed most. iFactory eliminates all three by running every AI inference workload on NVIDIA edge infrastructure deployed inside your facility, with zero cloud dependency, zero data egress, and sub-10ms anomaly detection latency that threshold-based DCS alarms are architecturally incapable of matching. Book a free plant assessment to see on-premise AI deployed on your infrastructure and inside your perimeter.

On-premise AI-driven power plant operations means running every predictive maintenance model, heat rate optimisation algorithm, vision inspection inference, and digital twin simulation on servers physically located inside your facility — with no cloud dependency, no data leaving your Electronic Security Perimeter, and no internet connectivity required for operational AI. iFactory delivers this on NVIDIA DGX and EGX edge infrastructure, achieving sub-10ms inference latency and full air-gap capability for NERC CIP, IEC 62443, and equivalent regulatory environments.

Why On-Premise Architecture Is Not a Preference — It Is a Regulatory Requirement

For bulk electric system operators, nuclear-adjacent facilities, and critical infrastructure generators, on-premise AI is not a deployment preference. It is the only architecture that satisfies NERC CIP, IEC 62443, and equivalent national cybersecurity frameworks without a multi-month compliance middleware project. Book a demo to see on-premise compliance architecture configured for your regulatory environment.

CIP-011 requires protection of BES Cyber System Information. Every cloud AI platform that transmits operational technology data outside your Electronic Security Perimeter creates a documented compliance exposure — regardless of encryption. On-premise deployment eliminates this risk by architecture, not by configuration workaround.

Cloud-dependent AI platforms lose operational intelligence during internet disruptions — precisely the conditions when grid events, demand response activations, and equipment stress peak simultaneously. iFactory on NVIDIA edge operates with full AI capability during network isolation, intentional black-start conditions, and cyberattack scenarios.

A bearing failure signature develops over 72+ hours, but the window in which each early indication can be acted on is measured in minutes. Cloud round-trip latency of 200ms to 2 seconds is not a performance inconvenience. It is the architectural reason cloud platforms detect failures at threshold crossing rather than at signature emergence, which eliminates the 72-hour prediction window entirely.
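The difference between threshold-crossing and signature-emergence detection can be sketched in a few lines. This is a minimal illustration, not iFactory's model: the signal is synthetic, and the function names, the 7.1 mm/s alarm limit, and the window sizes are all assumptions chosen for the demonstration.

```python
from statistics import mean, stdev

def threshold_alarm_index(samples, limit):
    """Index of the first sample that crosses a fixed alarm limit, or None."""
    for i, value in enumerate(samples):
        if value >= limit:
            return i
    return None

def trend_alarm_index(samples, baseline_len=50, window=10, z_limit=4.0):
    """Index where the rolling mean first departs from the healthy baseline.

    The first `baseline_len` samples are assumed healthy. An alarm fires when
    the mean of the latest `window` samples sits more than `z_limit` standard
    errors above the baseline mean.
    """
    base = samples[:baseline_len]
    mu, sigma = mean(base), stdev(base)
    std_err = sigma / window ** 0.5
    for i in range(baseline_len + window, len(samples) + 1):
        recent = mean(samples[i - window:i])
        if (recent - mu) / std_err > z_limit:
            return i - 1
    return None

def synthetic_vibration(n=400, drift_start=100):
    """Deterministic synthetic RMS vibration (mm/s): small ripple, then slow drift."""
    out = []
    for i in range(n):
        ripple = 0.05 * ((i * 7) % 11 - 5) / 5   # bounded pseudo-noise, +/- 0.05
        drift = 0.02 * max(0, i - drift_start)   # slow upward drift after onset
        out.append(2.0 + ripple + drift)
    return out

signal = synthetic_vibration()
trend_at = trend_alarm_index(signal)
threshold_at = threshold_alarm_index(signal, limit=7.1)
print(f"trend alarm at sample {trend_at}, threshold alarm at sample {threshold_at}")
```

On this synthetic signal the trend detector fires a few samples after the drift begins at sample 100, while the fixed limit is not crossed until roughly 250 samples later. Real vibration data is noisier, so the window and z-limit would need tuning per asset.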

Your combustion parameters, heat rate deviation history, and failure pattern library represent decades of operational intellectual property. Cloud AI platforms co-mingle this data in shared training environments. On-premise deployment keeps your operational IP inside your facility, on your hardware, under your access controls — permanently.

Cloud AI platforms are built for enterprise IT. iFactory is built for power generation OT — on your servers, inside your perimeter, with zero internet dependency from day one. NERC CIP compliant by architecture.

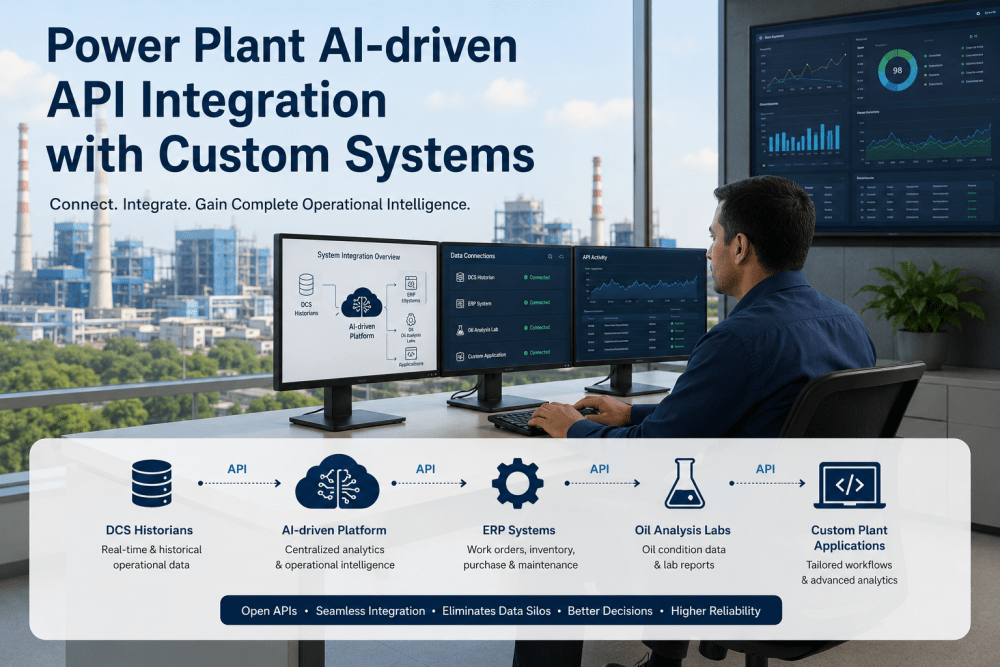

The iFactory On-Premise Architecture — Three Infrastructure Tiers

iFactory's NVIDIA-powered on-premise deployment operates across three infrastructure tiers — from central AI processing to field-level edge nodes to point-of-capture sensor integration — each fully autonomous and collectively delivering complete plant AI coverage without a single cloud dependency.

The central NVIDIA DGX system is deployed in your server room within the Electronic Security Perimeter. It runs all primary AI workloads simultaneously: multi-sensor failure prediction across every asset class, heat rate optimisation models, digital twin simulation, fleet-wide analytics, and continuous model retraining in background compute partitions. Its 320GB of GPU memory processes your entire plant's sensor stream without queuing or batching delays.

EGX nodes are deployed at turbine skids, HRSG bays, switchyard enclosures, and balance-of-plant (BOP) equipment locations, processing local sensor feeds and vision data with sub-10ms inference latency at the asset level. When network connectivity to the central DGX is disrupted, intentionally or otherwise, EGX nodes continue full AI inference autonomously. No single point of failure, no degraded-mode operation, no alarm storm during network isolation.
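The autonomous behaviour described above is commonly built as a store-and-forward pattern: inference runs locally on every reading, and findings queue until the uplink returns. The sketch below assumes that pattern; `EdgeNode`, `FakeUplink`, and `simple_model` are hypothetical names for illustration, not iFactory or NVIDIA APIs.

```python
from collections import deque

class EdgeNode:
    """Hypothetical edge node: always infers locally, forwards when the uplink is up."""

    def __init__(self, model, uplink, max_buffer=10_000):
        self.model = model      # callable: reading -> finding or None
        self.uplink = uplink    # callable: finding -> None, raises ConnectionError when down
        self.pending = deque(maxlen=max_buffer)  # oldest findings are dropped when full

    def process(self, reading):
        finding = self.model(reading)   # local inference never touches the network
        if finding is not None:
            self.pending.append(finding)
        self.flush()
        return finding

    def flush(self):
        while self.pending:
            try:
                self.uplink(self.pending[0])
            except ConnectionError:
                return                  # uplink down: keep findings for later
            self.pending.popleft()      # remove only after a successful send

class FakeUplink:
    """Stand-in for the link to the central server (assumption for the demo)."""

    def __init__(self):
        self.connected = True
        self.received = []

    def __call__(self, finding):
        if not self.connected:
            raise ConnectionError("central server unreachable")
        self.received.append(finding)

def simple_model(reading):
    # Placeholder detector: flags any value above an arbitrary limit of 5.0.
    if reading["value"] > 5.0:
        return {"asset": reading["asset"], "value": reading["value"]}
    return None

uplink = FakeUplink()
node = EdgeNode(simple_model, uplink)
uplink.connected = False
node.process({"asset": "GT1-bearing-2", "value": 6.3})  # detected locally, queued
uplink.connected = True
node.process({"asset": "GT1-bearing-2", "value": 1.0})  # nothing new, queue drains
print(len(uplink.received), len(node.pending))
```

A bounded queue is a deliberate choice here: during a long isolation the node keeps the most recent findings rather than exhausting memory.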

Jetson modules embedded in inspection robots, thermal camera arrays, and vibration sensor networks run computer vision inference at the moment of image or signal capture. No buffering, no transmission delay, no cloud round-trip. Anomaly data flows directly to EGX and DGX with timestamp correlation across the asset hierarchy. Every finding is preserved inside your facility boundary with full chain of custody.

Book a 30-minute demo and we will demonstrate multi-sensor correlation on your asset class — showing exactly what your current DCS alarm configuration cannot detect and iFactory can.

What On-Premise AI Delivers That Cloud Platforms Cannot

The comparison between on-premise and cloud AI for power generation is not a cost or convenience discussion. It is a physical constraint imposed by latency physics, regulatory compliance architecture, and operational continuity requirements.

Implementation Roadmap — On-Premise AI Deployment in 6 to 8 Weeks

iFactory's on-premise deployment follows a structured commissioning sequence — validated at each phase before proceeding, with zero generation impact at every stage. First live predictive alert within 8 weeks of hardware delivery. Book a demo to receive your plant-specific on-premise deployment plan.

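iFactory engineers assess server room, control building, and field enclosure capacity. NVIDIA DGX and EGX placement is confirmed within your Electronic Security Perimeter. The network isolation architecture is designed with zero firewall rule changes to production control networks. The NERC CIP asset inventory is updated with the NVIDIA servers documented under the applicable cyber asset classification; analytics servers inside the perimeter are typically Protected Cyber Assets, whereas Electronic Access Control or Monitoring Systems are the devices that control or monitor access to the perimeter itself.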

NVIDIA DGX deployed in control building server room. EGX edge nodes installed at turbine, HRSG, and switchyard field locations. DCS and SCADA feeds connected via OPC-UA, Modbus, or MQTT — read-only connections only, no write-back to control systems at any stage. PI Historian sync configured. iFactory platform installed and validated against your specific control system stack. Book a demo to confirm compatibility with your DCS configuration.
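One way to make the read-only rule explicit in integration code is a thin wrapper that exposes reads and refuses writes. The sketch below is an assumption about how such a guard could look; `ReadOnlyTagSource` and `FakeDcsClient` are hypothetical, and the fake client stands in for a real OPC-UA, Modbus, or MQTT client without reproducing any real library's API.

```python
class ReadOnlyTagSource:
    """Wraps a hypothetical control-system client and exposes reads only."""

    def __init__(self, client):
        self._client = client

    def read(self, tag):
        return self._client.read(tag)

    def write(self, tag, value):
        # Refuse every write so analytics code cannot alter a setpoint by mistake.
        raise PermissionError(f"write-back to control system is disabled (tag={tag!r})")

class FakeDcsClient:
    """Stand-in for a real protocol client (assumption for the demo)."""

    def __init__(self, tags):
        self.tags = dict(tags)

    def read(self, tag):
        return self.tags[tag]

    def write(self, tag, value):
        self.tags[tag] = value

source = ReadOnlyTagSource(FakeDcsClient({"GT1.BRG2.VIB": 2.4}))
print(source.read("GT1.BRG2.VIB"))
try:
    source.write("GT1.BRG2.VIB", 0.0)
except PermissionError as exc:
    print(f"refused: {exc}")
```

A software guard like this is only one layer. Read-only enforcement is stronger when it is also applied outside the analytics code, for example through read-only server accounts or unidirectional network paths.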

GPU-accelerated training runs against 18 to 36 months of your historian data and documented failure events — calibrating models specifically to your equipment configuration, operating profile, fuel mix, and seasonal load pattern. Models calibrated per asset class: turbines, HRSGs, generators, transformers, and BOP systems. Heat rate baseline established per generating unit. Vision models calibrated to your equipment surfaces and thermal signatures.

Predictive alerts activate across turbines, HRSGs, generators, and BOP equipment. First condition-based work orders generate automatically from AI findings. NERC CIP audit trail active and logging every maintenance decision with immutable timestamps. Operator mobile workflows live. Digital twin simulation activated for planned outage optimisation modelling.
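An audit trail with tamper-evident timestamps is often implemented as a hash chain, where each record carries the hash of the one before it. The sketch below assumes that approach and is not a description of iFactory's internal format; the record fields and function names are illustrative.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first record in the chain

def _digest(body):
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_record(log, event, timestamp=None):
    """Append an event, linking it to the previous record by hash."""
    ts = timestamp or datetime.now(timezone.utc).isoformat()
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"timestamp": ts, "event": event, "prev_hash": prev_hash}
    record = dict(body, hash=_digest(body))
    log.append(record)
    return record

def verify_chain(log):
    """Return True only if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for record in log:
        body = {key: record[key] for key in ("timestamp", "event", "prev_hash")}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True

log = []
append_record(log, {"work_order": "WO-1001", "decision": "inspect bearing 2"})
append_record(log, {"work_order": "WO-1001", "decision": "closed, bearing replaced"})
print(verify_chain(log))           # chain intact
log[0]["event"]["decision"] = "no action"
print(verify_chain(log))           # edit to an earlier record is detected
```

A hash chain makes edits detectable rather than impossible, so it is usually paired with write-once storage or periodic external anchoring of the latest hash.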

Every closed work order outcome feeds GPU model retraining in background compute partitions — improving detection accuracy continuously without interrupting live inference. Fleet-wide expansion as ROI is confirmed across additional sites. Ninety-day on-site support included. Multi-site unified dashboard configured across all on-premise deployments. Book a demo to see fleet-wide multi-site dashboard architecture.

Cloud platforms require months of middleware, network segmentation projects, and compliance documentation to approximate what iFactory delivers on NVIDIA edge infrastructure from day one. First live alert in 6 to 8 weeks.

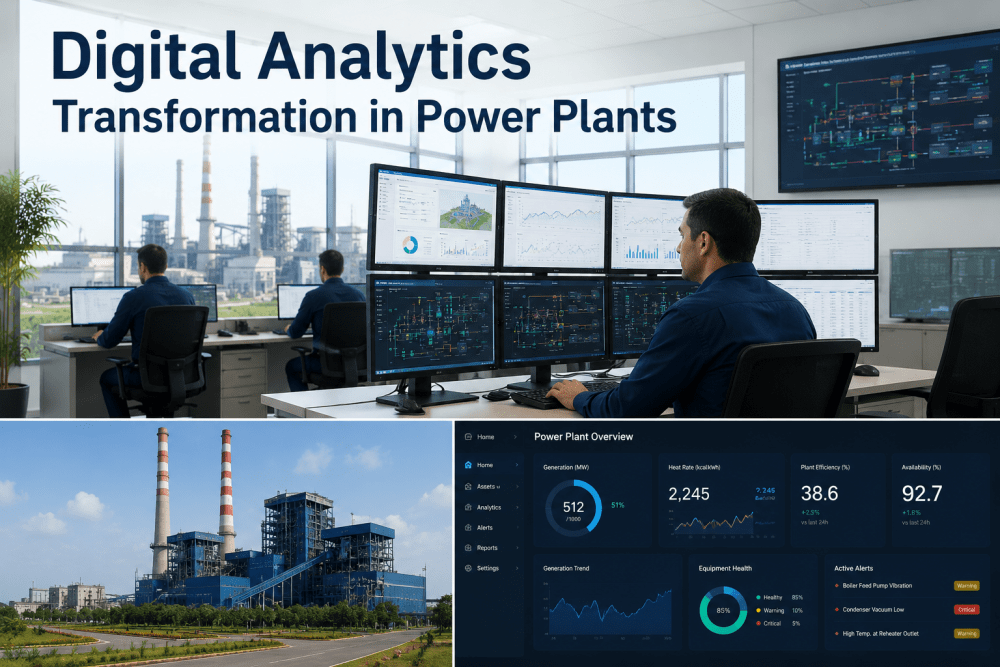

Value iFactory On-Premise Delivers — Quantified by Asset Class

Every figure below is measured from plants running the full on-premise NVIDIA stack for a minimum 12-month operational period.

Sub-10ms anomaly detection catches failure signatures 72+ hours before a forced trip — compared to threshold alarms that trigger at the moment of failure. $4.8M in avoided production losses per 500MW CCGT annually.

GPU-accelerated heat rate optimisation continuously models combustion parameters against design efficiency. Real-time corrections applied through operator advisory — recovering 5 to 8% heat rate deviation that DCS setpoint control cannot address.
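Heat rate deviation is simple arithmetic once fuel energy input and net output are known. The sketch below shows the calculation with invented numbers: the 6,500 kJ/kWh design value, the 250 MW load, and the fuel price are assumptions for illustration, not measured plant data.

```python
def heat_rate_kj_per_kwh(fuel_energy_kj_per_h, net_output_kw):
    """Heat rate = fuel energy input per hour / net electrical output."""
    if net_output_kw <= 0:
        raise ValueError("net output must be positive")
    return fuel_energy_kj_per_h / net_output_kw

def deviation_percent(actual, design):
    """Percentage by which the actual heat rate exceeds the design heat rate."""
    return (actual - design) / design * 100.0

def excess_fuel_cost_per_hour(actual, design, net_output_kw, fuel_price_per_gj):
    """Cost of the extra fuel burned per hour because of the deviation."""
    excess_kj_per_h = (actual - design) * net_output_kw
    return excess_kj_per_h / 1e6 * fuel_price_per_gj   # 1 GJ = 1e6 kJ

# Illustrative values only.
design = 6500.0                                   # kJ/kWh
actual = heat_rate_kj_per_kwh(1.70e9, 250_000)    # 1,700 GJ/h of fuel at 250 MW net
print(f"actual heat rate: {actual:.0f} kJ/kWh")
print(f"deviation: {deviation_percent(actual, design):.2f}%")
print(f"excess fuel cost: {excess_fuel_cost_per_hour(actual, design, 250_000, 4.0):.0f} per hour")
```

With these inputs the unit runs at 6,800 kJ/kWh, about 4.6% above design, which is the kind of gap an operator advisory would aim to close.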

Multi-sensor correlation at plant data rates is only achievable with GPU parallelism processing 3,000+ parameters simultaneously. Cloud platforms achieve 60 to 70% accuracy at a 24-hour prediction horizon. iFactory on NVIDIA achieves 93% at 72 hours.
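Why multi-sensor correlation catches what single-sensor alarms miss can be shown with a toy example. The sketch below combines per-sensor z-scores into one joint score; it assumes independent sensors (a diagonal-covariance simplification), uses three invented sensors, and is not iFactory's detection model.

```python
from statistics import mean, stdev

def fit_baseline(history):
    """Per-sensor mean and standard deviation from healthy history.

    history: dict mapping sensor name to a list of readings.
    """
    return {name: (mean(vals), stdev(vals)) for name, vals in history.items()}

def max_single_z(baseline, reading):
    """Largest absolute z-score of any one sensor, as a per-sensor alarm would see it."""
    return max(abs((reading[name] - mu) / sigma) for name, (mu, sigma) in baseline.items())

def joint_score(baseline, reading):
    """Square root of the summed squared z-scores across all sensors."""
    total = 0.0
    for name, (mu, sigma) in baseline.items():
        z = (reading[name] - mu) / sigma
        total += z * z
    return total ** 0.5

# Invented healthy history for three sensors on one bearing.
history = {
    "vibration_mm_s": [2.0, 2.1, 1.9, 2.0, 2.2, 1.8, 2.0, 2.1, 1.9, 2.0],
    "bearing_temp_c": [71.0, 70.5, 71.5, 70.0, 72.0, 71.0, 70.5, 71.5, 71.0, 71.0],
    "lube_oil_bar":   [3.0, 3.1, 2.9, 3.0, 3.0, 3.1, 2.9, 3.0, 3.1, 2.9],
}
baseline = fit_baseline(history)

# A reading where every sensor sits 2.5 standard deviations above its mean.
reading = {name: mu + 2.5 * sigma for name, (mu, sigma) in baseline.items()}

print(f"largest single-sensor z: {max_single_z(baseline, reading):.2f}")
print(f"joint score:             {joint_score(baseline, reading):.2f}")
```

Each sensor stays under a typical 3-sigma alarm limit, yet the joint score is well above it because all three moved together. A production model would also account for correlation between sensors, which this simplification ignores.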

On-premise architecture satisfies CIP-005 through CIP-013 by design. No data egress, no external network dependency, full air-gap available. NERC audit trail generated automatically from every AI-informed maintenance decision.

NERC CIP, OSHA, and EPA audit trail assembled automatically from iFactory on-premise records. What previously required 14 days of manual record compilation is now a 2-hour automated export — formatted for regulator submission without additional documentation.

Every AI inference, every model training cycle, every anomaly alert, and every compliance record generated inside your facility boundary. No external service agreements for data processing. No cloud vendor access to your operational technology data — ever.

Regional Compliance Coverage — On-Premise Architecture Satisfies Every Major Framework

iFactory's on-premise NVIDIA deployment satisfies the data sovereignty, OT cybersecurity, and operational continuity requirements of every major power generation regulatory regime — by architectural design, not compliance workaround.

| Region | Applicable Frameworks | On-Premise Architecture Compliance | iFactory Coverage |
|---|---|---|---|
| USA / Canada | NERC CIP-005 to CIP-013, FERC reliability orders, NIST SP 800-82 ICS security, CISA critical infrastructure directives, NRC 10 CFR 50 (nuclear-adjacent facilities) | All AI inside Electronic Security Perimeter: CIP-005 network diagram updated, CIP-011 data protection satisfied by zero egress, CIP-013 supply chain risk managed by on-premise architecture | NERC CIP audit trail auto-generated per maintenance decision. AES-256 at rest, TLS 1.3 in transit. Full air-gap capability for high-impact BES. CISA ICS-CERT incident reporting records structured in iFactory |
| United Kingdom / EU | EU NIS2 Directive (OT cybersecurity for critical infrastructure), IEC 62443 industrial security zones, GDPR data sovereignty, UK Grid Code reliability, EU ETS carbon data integrity requirements | IEC 62443 security zones enforced at NVIDIA edge level. GDPR Article 44 data transfer restrictions satisfied, with no data processed outside facility. NIS2 OT network segmentation maintained by on-premise architecture | IEC 62443 zone documentation, GDPR data processing records, NIS2 OT incident reporting trail, EU ETS carbon monitoring data integrity certificates, all generated from on-premise iFactory instance |
| Australia | Security of Critical Infrastructure (SOCI) Act 2018, ASD Essential Eight OT controls, AEMO NEM operational reliability standards, Privacy Act data residency requirements, ISO 27001 OT alignment | SOCI Act critical infrastructure protection satisfied by on-premise deployment, with no foreign cloud processing. ASD Essential Eight application control and patching implemented at NVIDIA server level. All operational data remains onshore | SOCI Act critical asset register documentation, ASD Essential Eight compliance evidence, AEMO NEM reliability reporting records, onshore data residency certification, all maintained within iFactory on-premise instance |
| Germany | BSI IT-Grundschutz (KRITIS cybersecurity), BSIG critical infrastructure law, EU NIS2 OT security obligations, VGB power plant cybersecurity guidelines, BDSG data protection requirements | BSI IT-Grundschutz compliant: on-premise deployment satisfies KRITIS IT-Sicherheitskatalog. BDSG data protection satisfied with zero cloud data transfer. VGB cybersecurity guidelines met by IEC 62443-aligned NVIDIA edge architecture | BSI Grundschutz audit trail, KRITIS incident reporting records, BDSG data processing documentation, VGB-aligned OT security zone records, all generated within iFactory on-premise instance without external data exposure |
| Saudi Arabia / UAE | NCA ECC-1 (Saudi national OT cybersecurity controls), CITC data localisation requirements, UAE NESA Critical Infrastructure Protection, Saudi Aramco OT cybersecurity standards, IEC 62443 mandatory for Aramco-adjacent operations | NCA ECC-1 OT technical controls met by on-premise NVIDIA architecture. CITC data localisation satisfied: all AI processing occurs within the facility. NESA critical infrastructure protection requirements met without cloud dependency | NCA ECC-1 compliance documentation, CITC localisation certification, NESA incident reporting records, Saudi Aramco OT security audit trail, multilingual Arabic platform outputs for Saudi and UAE operator teams |
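The USA / Canada row lists TLS 1.3 in transit. For links between on-premise tiers, one way to enforce that floor in client code is shown below using Python's standard `ssl` module. This is a configuration sketch under the assumption that the services involved speak TLS; the function name is illustrative and nothing here describes iFactory's actual transport setup.

```python
import ssl

def make_tls13_client_context():
    """Client-side TLS context that refuses anything older than TLS 1.3."""
    ctx = ssl.create_default_context()             # certificate and hostname checks enabled
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3   # reject TLS 1.2 and earlier
    return ctx

context = make_tls13_client_context()
print(context.minimum_version.name)
```

In an air-gapped deployment the trust store would normally be replaced with the plant's own certificate authority, for example by calling `load_verify_locations` on the context with a locally issued CA file.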

iFactory On-Premise vs Cloud-Based Analytics Platforms

The architectural comparison below reflects the fundamental design difference between iFactory's on-premise NVIDIA deployment and every major cloud-dependent CMMS and analytics platform in the power generation market.

| Capability | iFactory | MaintainX | UpKeep | Fiix | Limble | IBM Maximo | Hippo CMMS |
|---|---|---|---|---|---|---|---|
| On-premise NVIDIA edge deployment | Full, native | Cloud only | Cloud only | Hybrid only | Cloud only | On-prem available | Cloud only |
| Full air-gap operation, zero internet required | Full air-gap | Not available | Not available | Not available | Not available | Requires config | Not available |
| AI inference latency, sensor to alert | Under 10ms | 200ms–2s | 200ms–2s | 200ms–2s | 200ms–2s | Varies by config | 200ms–2s |
| Zero data egress from plant boundary | By architecture | Data leaves plant | Data leaves plant | Data leaves plant | Data leaves plant | Config dependent | Data leaves plant |
| NERC CIP-005 to CIP-013 native compliance | CIP-005–013 | Not supported | Not supported | Not supported | Not supported | Customer managed | Not supported |
| GPU-accelerated multi-sensor AI, 3,000+ parameters | NVIDIA native | Rule-based only | Rule-based only | Basic ML | Rule-based only | Add-on required | Not available |
| 72-hour failure prediction accuracy | 93% accuracy | Not available | Not available | Limited | Not available | Add-on required | Not available |
| Autonomous operation during network disruption | Full autonomy | Offline, no AI | Offline, no AI | Offline, no AI | Offline, no AI | Partial only | Offline, no AI |
| Data sovereignty, operational IP stays on-site | Guaranteed | Not guaranteed | Not guaranteed | Not guaranteed | Not guaranteed | Config dependent | Not guaranteed |
| Time to first live predictive alert | 6–8 weeks | Days (CMMS only) | Days (CMMS only) | Weeks (CMMS only) | Days (CMMS only) | 12–24 months | Weeks (CMMS only) |

Results — Plants Running iFactory On-Premise NVIDIA Infrastructure

Production outcomes measured from plants that completed a minimum 12-month operational period on the full iFactory on-premise NVIDIA platform stack.

Combined value from forced outage avoidance ($4.8M), fuel cost recovery ($1M to $3M), scaffolding elimination ($200K+ per inspection cycle), and compliance preparation time reduction ($1M+ in avoided violations) — measured over 12-month deployment periods at plants running the full on-premise NVIDIA stack.

Deploy GPU-Accelerated AI Across Your Plant in 6 to 8 Weeks — On Your Infrastructure, Inside Your Perimeter

iFactory on NVIDIA connects to your existing DCS, SCADA, and historian. No rip-and-replace. No cloud dependency. NERC CIP compliant from day one — first live predictive alert within 8 weeks of hardware delivery.
