In the 400 milliseconds it takes a production line camera to capture a single frame, a trained computer vision model can analyze 2 million pixels, identify 47 different defect categories, cross-reference historical failure patterns, assign a severity score, and trigger a reject signal — all before the next part arrives. Human inspectors, at their sharpest, catch somewhere between 70% and 85% of defects on a fast-moving line. AI vision models now routinely exceed 99%. This is not incremental automation; it is a fundamental rewrite of what quality inspection can be. And for manufacturers facing tighter margins, stricter customer specifications, and thinner labor markets, it has stopped being optional.

99.7%

Defect Detection Accuracy

400ms

Frame Analysis Speed

24/7

Zero-Fatigue Operation

50%

Drop in Scrap & Rework

Why Human Inspection Alone Can't Keep Up Anymore

Modern manufacturing lines run at speeds that exceed the biological limits of visual attention. A human inspector staring at a conveyor moving at 400 parts per minute is being asked to make roughly 6.7 quality decisions per second (400 ÷ 60), every second, for an 8-hour shift. Fatigue sets in at hour 2. Detection accuracy falls off a cliff at hour 4. By hour 6, inspectors are pattern-matching from memory, not actually seeing. AI computer vision scales with the speed, consistency, and precision that modern quality standards demand in a way manual inspection cannot.
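The arithmetic behind that workload is easy to check. Here is a minimal sketch using the 400 parts per minute and 8-hour shift quoted above; the function name `inspection_load` is illustrative, not part of any product.

```python
def inspection_load(parts_per_minute: float, shift_hours: float) -> dict:
    """Return the decision rate and per-part time budget for one inspector."""
    decisions_per_second = parts_per_minute / 60
    return {
        "decisions_per_second": decisions_per_second,
        "ms_per_part": 1000 / decisions_per_second,
        "decisions_per_shift": parts_per_minute * 60 * shift_hours,
    }


load = inspection_load(parts_per_minute=400, shift_hours=8)
print(f"{load['decisions_per_second']:.2f} decisions per second")  # 6.67
print(f"{load['ms_per_part']:.0f} ms available per part")          # 150
print(f"{load['decisions_per_shift']:,.0f} decisions per shift")   # 192,000
```

That is close to 192,000 individual judgments in a single shift, each made in about 150 milliseconds.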

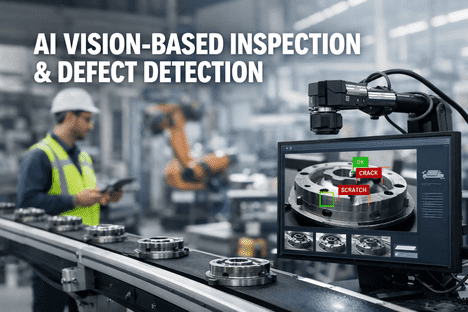

How AI Vision Sees a Part

01

High-resolution cameras capture each part from multiple angles — sub-millimeter detail across every surface.

02

Trained neural networks scan every pixel against learned defect signatures — cracks, porosity, dimensional deviation, surface flaws.

03

Bounding boxes locate defects with confidence scores. The part is classified, logged, and routed automatically.

04

Every inspection builds the training dataset. The model gets smarter with every shift — something no human team can match.

Curious how this looks running on your actual parts? Book a 30-minute demo and we'll show live AI vision inspection on real production samples — no slides, just your defects and our detection accuracy.

The Neural Pipeline Behind Every Detection

AI vision inspection is not magic — it's a deliberately engineered pipeline of image capture, pre-processing, deep learning inference, and decision routing. Understanding each stage matters because it determines where deployment succeeds and where it struggles. Here is the actual flow of data from physical part to plant action, in under half a second.

Stage 1

Image Capture

Area-scan / line-scan cameras · multi-spectral lighting

Industrial cameras synchronized with conveyor triggers capture the part under controlled lighting — standardizing shadows, reflections, and focal depth for every frame.

Stage 2

Pre-Processing

Normalization · denoising · ROI extraction

Raw images are color-corrected, noise-filtered, and cropped to the region of interest. This removes environmental variability before the model even sees the data.

Stage 3

CNN Inference

Convolutional networks · anomaly detection · segmentation

Deep learning models trained on thousands of labeled defect images analyze every pixel, identifying and localizing flaws with bounding boxes and confidence scores.

Stage 4

Decision & Action

Rule engine · PLC integration · work order API

Classified defects trigger physical actions — reject arms, line stops, alerts — and simultaneously create digital records, work orders, and training data feedback.
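To make the four stages concrete, here is a rough sketch of how Stages 2 through 4 could hand data to each other. Everything in it is an assumption for illustration: the `Detection` fields, the `ACTIONS` rule table, and especially `detect`, which is a brightness-threshold placeholder standing in for a trained CNN. A production system would also use an array library rather than nested lists.

```python
from dataclasses import dataclass

Image = list[list[float]]  # grayscale pixel rows; stand-in for a camera frame


@dataclass
class Detection:
    label: str                       # defect category, e.g. "crack"
    confidence: float                # model score in [0, 1]
    box: tuple[int, int, int, int]   # (x, y, width, height) in ROI pixels


def preprocess(frame: Image, roi: tuple[int, int, int, int]) -> Image:
    """Stage 2: crop to the region of interest and rescale pixels to [0, 1]."""
    x, y, w, h = roi
    cropped = [row[x:x + w] for row in frame[y:y + h]]
    lo = min(min(row) for row in cropped)
    hi = max(max(row) for row in cropped)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a flat image
    return [[(p - lo) / span for p in row] for row in cropped]


def detect(image: Image) -> list[Detection]:
    """Stage 3 placeholder: flag every pixel brighter than 0.9 as a hot spot.

    A real system would run a trained model here; this stub only keeps
    the example runnable.
    """
    return [
        Detection("hot_spot", confidence=p, box=(cx, cy, 1, 1))
        for cy, row in enumerate(image)
        for cx, p in enumerate(row)
        if p > 0.9
    ]


# Stage 4: hypothetical rule table mapping defect labels to plant actions.
ACTIONS = {"hot_spot": "reject", "crack": "line_stop"}
SEVERITY = ["pass", "alert", "reject", "line_stop"]  # least to most severe


def decide(detections: list[Detection], min_confidence: float = 0.5) -> str:
    """Return the most severe action triggered by any confident detection."""
    triggered = [
        ACTIONS.get(d.label, "alert")
        for d in detections
        if d.confidence >= min_confidence
    ]
    return max(triggered, key=SEVERITY.index, default="pass")


frame = [
    [10, 10, 10, 10],
    [10, 20, 30, 10],
    [10, 40, 250, 10],
    [10, 10, 10, 10],
]
roi_image = preprocess(frame, roi=(1, 1, 2, 2))
action = decide(detect(roi_image))
print(action)  # reject
```

Keeping the rule table separate from the model is a deliberate choice in this sketch: quality engineers can change what a defect triggers without retraining anything.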

The Defect Taxonomy AI Can Detect

Modern vision models are not limited to simple pass/fail checks. They classify defects by category, severity, location, and even probable root cause — giving quality engineers the granular data they need to fix problems, not just catch them.

Surface Defects

Scratches & abrasions

Dents & deformations

Pitting & corrosion

Coating voids

Contamination & stains

Structural Defects

Cracks & fractures

Porosity & voids

Weld undercut

Incomplete fusion

Delamination

Dimensional Issues

Out-of-tolerance dimensions

Warpage & bow

Edge burrs

Hole size deviations

Profile mismatches

Assembly Errors

Missing components

Wrong orientation

Incorrect part variant

Misalignment & offset

Fastener issues

Packaging & Labeling

Label misprint / missing

Seal integrity flaws

Fill-level deviation

Foreign objects

Barcode unreadable

Print & Surface Quality

Color deviation

Print alignment errors

Gloss / texture variance

Ink splatter & smear

Missing text elements

Trained on Your Defects, Not Generic Demos

The AI Doesn't Learn From Stock Photos. It Learns From Your Line.

iFactory's vision models are fine-tuned on your specific parts, materials, lighting conditions, and defect library — not trained once in a lab and sold off the shelf. The result: accuracy that matches your reality, not someone else's demo.

Human vs AI Vision: The Head-to-Head Numbers

Nothing makes the case for AI inspection as clearly as placing human capability and AI capability side by side across the metrics that actually matter to quality leaders. This is not about replacing inspectors — it's about being honest about where each performs.

[Chart: Human Inspection vs. AI Vision Inspection, compared on inspection speed (parts/min), consistency across shifts, and fatigue-related error rate]

These aren't vendor projections — they're field-validated results from live deployments across automotive, aerospace, electronics, and heavy industry. Book a demo to see the same metrics benchmarked on a sample run of your own parts.

The 4-Stage Maturity Ladder to Autonomous Inspection

Nobody deploys autonomous AI inspection on day one. The journey from manual inspection to self-learning vision systems moves through four distinct stages. Knowing where you are — and where the next win is — turns a vague AI ambition into a concrete roadmap.

Starting Point

Manual / Rule-Based

Human inspectors with paper checklists, or basic machine vision doing threshold-based pass/fail. No learning, no adaptation, no systematic data capture. Most plants start here.

Paper checklists · Simple PLC logic · Limited traceability

Early Adoption

AI-Augmented

Deep learning models deployed on critical inspection stations. AI flags defects, humans validate. Detection accuracy climbs. Data collection becomes systematic and digital.

CNN models · Shop-floor deployment · Human-in-loop

iFactory Target Zone

Integrated & Closed-Loop

Vision inspection ties directly to PLCs, MES, and CMMS. Detected defects trigger real-time line adjustments, automatic work orders, and root cause dashboards. Quality data flows into every decision.

PLC sync · Auto work orders · Root cause analytics

Future State

Autonomous & Self-Learning

AI models retrain themselves on new defects, auto-calibrate to material changes, and propose process improvements. The human role shifts entirely to exception management and strategic quality decisions.

Continuous learning · Zero drift · Predictive quality

Where AI Vision Is Already Delivering Measurable ROI

These aren't aspirational numbers from whitepapers — they are results documented across operating AI inspection deployments over the past three years. The value is not evenly distributed. It shows up biggest in high-volume, high-cost-of-failure environments where even a small accuracy gain flows straight to the bottom line.

50%

Scrap & Rework Reduction

Catching defects at the station where they occur — not three processes later — eliminates downstream value-add on already-defective parts.

75%

Faster Inspection Throughput

Vision systems operate at line speed without slowing production. The inspection station stops being the bottleneck it used to be.

40%

Warranty Claim Reduction

Defects caught in-plant never reach customers. Warranty exposure, recalls, and brand damage drop in direct proportion to detection accuracy.

90%

Inspection Data Coverage

Every part gets inspected, every defect gets logged. Manual sampling of 1 in 100 is replaced with 100% inspection — building a quality dataset that compounds forever.

3.2x

ROI Within 18 Months

Documented payback across manufacturing AI vision deployments — often faster in high-volume environments where scrap savings alone justify the investment.

65%

Faster Root Cause Resolution

Every detected defect is tagged by type, location, shift, and asset. Root cause investigations that used to take days of guesswork close in hours with hard data.
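As a rough illustration of why tagged defects speed up root cause work, the sketch below groups records by the tags named above (type, location, shift, asset) and surfaces the most frequent combination. The `DefectRecord` fields and the sample data are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class DefectRecord:
    defect_type: str
    location: str  # zone on the part
    shift: str
    asset: str     # machine or station that produced the part


def top_patterns(records, fields, n=3):
    """Count records grouped by the chosen tag fields, most frequent first."""
    counts = Counter(tuple(getattr(r, f) for f in fields) for r in records)
    return counts.most_common(n)


records = [
    DefectRecord("porosity", "flange", "night", "press-2"),
    DefectRecord("porosity", "flange", "night", "press-2"),
    DefectRecord("scratch", "face", "day", "press-1"),
    DefectRecord("porosity", "flange", "day", "press-2"),
]
print(top_patterns(records, fields=("defect_type", "asset"), n=1))
# [(('porosity', 'press-2'), 3)]
```

Swapping the `fields` tuple for `("defect_type", "shift")` asks a different question of the same data, which is the practical benefit of logging every tag on every part.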

Common Concerns, Straight Answers

AI inspection is a serious capital and operational commitment. Quality leaders evaluating it deserve direct answers to the concerns that actually keep deployments from closing — not marketing bullet points.

Our parts are too complex for AI to learn

Modern vision models handle geometry most humans can't process — multi-angle captures, 3D reconstructions, and transfer learning from similar part families accelerate training even for highly specialized components.

We don't have labeled defect data to train on

You don't need it. Unsupervised anomaly detection learns what "normal" looks like and flags deviations without pre-labeled defects — then becomes more accurate as findings are validated over time.
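The idea of learning "normal" from good parts alone can be shown with a deliberately simple stand-in: a per-feature z-score check. This is not how production anomaly models work internally, and the two features per part (mean brightness and edge density) and the threshold of 3 are assumptions chosen only for illustration.

```python
from statistics import mean, stdev


def fit_normal(good_parts):
    """Learn per-feature mean and standard deviation from good parts only."""
    columns = list(zip(*good_parts))
    return [(mean(c), stdev(c)) for c in columns]


def anomaly_score(model, features):
    """Largest absolute z-score across features; higher means less normal."""
    return max(abs(x - mu) / sigma for x, (mu, sigma) in zip(features, model))


# Hypothetical features per part: (mean brightness, edge density)
good_parts = [(0.50, 0.10), (0.52, 0.11), (0.48, 0.09), (0.51, 0.10), (0.49, 0.10)]
model = fit_normal(good_parts)

THRESHOLD = 3.0  # flag anything more than 3 standard deviations from normal
for part in [(0.50, 0.10), (0.50, 0.18)]:
    score = anomaly_score(model, part)
    print(part, "anomaly" if score > THRESHOLD else "normal")
```

No defective part was needed to fit the model. The second part is flagged purely because its edge density sits far outside what the good parts showed.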

Our line conditions are too inconsistent

Controlled lighting stations and data augmentation during training deliberately expose models to the variability they'll see in production. The worse your conditions today, the bigger the AI gain tomorrow.
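Data augmentation is simple to picture in code. The sketch below takes one image and produces several variants with random brightness shifts and pixel noise, so a model sees lighting variability during training. The parameter values are assumptions, and real pipelines usually add more transforms than this.

```python
import random


def augment(image, rng, max_brightness_shift=0.1, noise_sigma=0.02):
    """Return a copy with a random brightness shift and Gaussian pixel noise.

    Pixel values are assumed to be in [0, 1] and are clipped back to it.
    """
    shift = rng.uniform(-max_brightness_shift, max_brightness_shift)
    return [
        [min(1.0, max(0.0, p + shift + rng.gauss(0.0, noise_sigma))) for p in row]
        for row in image
    ]


rng = random.Random(42)  # seeded so the variants are reproducible
image = [[0.2, 0.8], [0.5, 0.5]]
variants = [augment(image, rng) for _ in range(4)]
print(len(variants), "augmented variants from one source image")
```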

Integration with our PLCs and MES will take forever

Standard OPC-UA, EtherNet/IP, and REST integrations mean most vision systems connect to existing automation layers in days, not months — with full closed-loop control from detection to reject arm.

What happens if the AI is wrong?

Confidence scoring, human-in-the-loop validation for uncertain cases, and continuous feedback loops mean false positives and false negatives both trend downward over time — not upward.
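One common way to combine confidence scoring with human-in-the-loop validation is a pair of thresholds: confident calls are automated, and everything in between goes to a person. The sketch below assumes a single defect-confidence score per part; the threshold values and the `review_queue` list are illustrative, not recommended settings.

```python
def route(defect_confidence: float,
          reject_above: float = 0.90,
          accept_below: float = 0.10) -> str:
    """Automate confident calls; send uncertain ones to a human inspector."""
    if defect_confidence >= reject_above:
        return "reject"
    if defect_confidence <= accept_below:
        return "accept"
    return "human_review"


review_queue = []  # parts awaiting validation; their labels can feed retraining


def inspect(part_id: str, defect_confidence: float) -> str:
    decision = route(defect_confidence)
    if decision == "human_review":
        review_queue.append(part_id)
    return decision


results = {pid: inspect(pid, score)
           for pid, score in [("A1", 0.97), ("A2", 0.03), ("A3", 0.55)]}
print(results)       # {'A1': 'reject', 'A2': 'accept', 'A3': 'human_review'}
print(review_queue)  # ['A3']
```

Widening the gap between the two thresholds sends more parts to review and lowers the risk of an automated mistake; narrowing it does the opposite.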

This sounds expensive

Typical payback is 12 to 18 months in high-volume manufacturing. One avoided recall, one prevented scrap batch, or one avoided warranty claim can offset the entire initial deployment cost.
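A payback estimate is straightforward to run with your own figures. In the sketch below, the deployment cost and monthly savings are hypothetical placeholders chosen only so the result lands in the range quoted above; substitute real scrap, rework, and warranty numbers.

```python
def payback_months(deployment_cost: float, monthly_savings: float) -> float:
    """Months until cumulative savings equal the up-front deployment cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return deployment_cost / monthly_savings


def net_gain(deployment_cost: float, monthly_savings: float, months: int) -> float:
    """Cumulative savings minus deployment cost after a given number of months."""
    return monthly_savings * months - deployment_cost


cost = 300_000    # hypothetical deployment cost
savings = 20_000  # hypothetical monthly scrap and rework savings

print(payback_months(cost, savings))       # 15.0
print(net_gain(cost, savings, months=36))  # 420000
```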

Live Vision Demo on Your Parts

Stop Estimating Quality. Start Measuring Every Single Part.

Bring a dozen production samples — good parts and defective ones — to the demo. We'll run iFactory's vision models against them in real time, show you the detection accuracy, and map exactly what a 4-to-6 week deployment looks like for your line.

Frequently Asked Questions

What is AI-powered defect detection?

AI-powered defect detection uses computer vision and deep learning to automatically identify product defects from camera images. Convolutional neural networks trained on thousands of defect images learn to spot cracks, scratches, dimensional errors, and assembly issues with accuracy that typically exceeds 99% — far beyond what manual inspection can achieve at production speed.

How does computer vision inspection work in manufacturing?

Industrial cameras capture images of each part under controlled lighting. A trained AI model analyzes the pixels against learned defect patterns in milliseconds, localizes any flaws with bounding boxes, assigns confidence scores, and triggers automated actions — rejecting parts, alerting operators, or adjusting upstream processes. The entire cycle typically completes in under 500 milliseconds.

Can AI vision be retrained for new products or defect types?

Yes. Modern AI vision platforms support continuous retraining as new products launch or new defect types emerge. Transfer learning accelerates training by leveraging knowledge from similar part families, and incremental updates can be pushed to production models without downtime. The longer a vision system runs, the smarter it becomes.

How much data does AI vision need to start working?

It depends on the approach. Supervised detection typically needs 500 to 2,000 labeled defect images per category, while unsupervised anomaly detection can start with just hundreds of good-part images. Transfer learning from pre-trained models dramatically reduces data requirements, especially for common defect types like cracks, scratches, or weld issues.

Will AI vision replace our quality inspectors?

No — it repositions them. AI handles the high-volume, repetitive detection work that causes human fatigue. Skilled inspectors focus on edge cases, root cause investigations, supplier quality, and process improvement — higher-value work with direct impact on quality outcomes. Teams consistently report higher job satisfaction after AI deployment.

What is the typical ROI timeline for AI inspection?

Most manufacturing deployments see positive ROI within 12 to 18 months. In high-volume operations, payback can be faster — 6 to 9 months is common when scrap reduction alone offsets the investment. Over 3 to 5 years, documented ROI of 300%+ is typical across automotive, electronics, and food & beverage deployments.