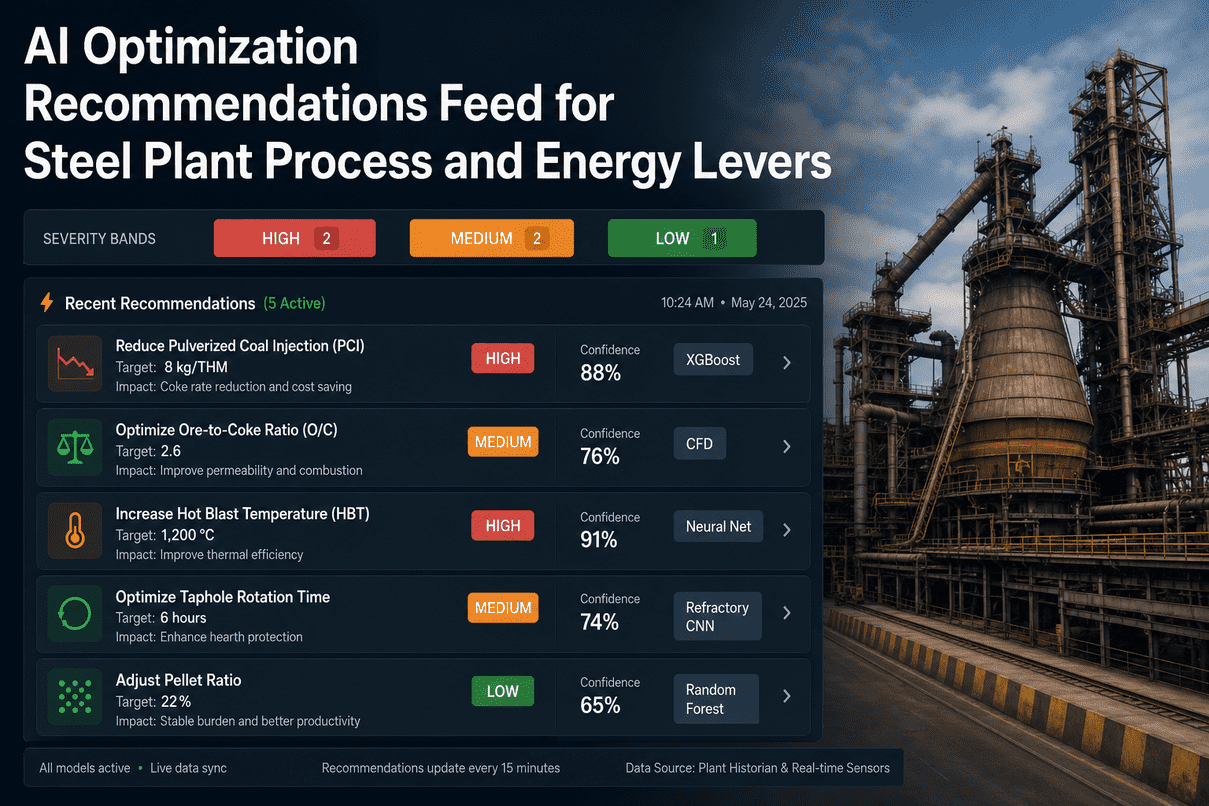

A modern blast furnace streams several thousand tag readings a minute: chemistry, temperatures, pressures, raceway data, burden profiles, hearth thermocouples, stove cycle status. The hard part is not collecting them. The hard part is deciding which two or three signals matter right now, and what to do about them. The iFactory AI Optimization Recommendations Feed sits on top of every model running on your on-site appliance (the digital twin, the SPC quality predictor, the hearth wear model, the burden CFD) and turns their output into a short, ranked feed of operational levers a shift operator can read in thirty seconds. Every card carries a severity band, a confidence percentage, the model badge, and the change it suggests in the units the operator already works in. It is decision support, not autopilot: operators approve, defer, or reject, and every action is logged. Walk through the feed with us in a live webinar on May 13, 2026. Register here.

A Live Recommendations Feed, Ranked By Severity And Confidence

Coke saving, burden distribution, hot blast temperature, hearth protection, pellet ratio: every active recommendation is tagged HIGH / MEDIUM / LOW, scored for confidence, and badged with the model that produced it. Operators approve, defer, or reject. Every action is audit-logged.

Three Severity Bands. One Way Of Reading Each One.

A recommendation is more than a number. The severity band tells the operator how urgently to look at it; the confidence tells them how much weight to put on it; the model badge tells them where it came from. Together those three pieces are enough to triage the feed without opening every card.

HIGH. Process drift that, if left uncorrected, lands inside a quality-hold zone or a safety threshold within hours. Operator action is expected unless explicitly deferred with a reason.

MEDIUM. An optimisation lever the model can defend with material expected savings or risk reduction over the next 8–24 hours. The operator owns the timing; the model owns the rationale.

LOW. A directional suggestion the model has identified, but whose effect is small, slow-moving, or low-confidence. Often surfaced for shift-handover discussion rather than immediate action.
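To make the triage idea concrete, here is a minimal Python sketch of how a feed could be ordered by severity band and then by confidence. The `Recommendation` fields, the badge labels, and the tie-breaking rule (severity first, confidence second) are assumptions for illustration, not the product's actual schema or ranking logic.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Recommendation:
    lever: str           # the operational lever, e.g. "hot blast temperature"
    severity: Severity   # how urgently to look at it
    confidence: float    # 0.0 to 1.0, how much weight to put on it
    model_badge: str     # hypothetical label for the model that produced it


def rank_feed(items):
    """Sort by severity band first, then by confidence, both descending."""
    return sorted(items, key=lambda r: (r.severity, r.confidence), reverse=True)


# Illustrative values only, not real furnace data.
feed = [
    Recommendation("pellet ratio", Severity.LOW, 0.55, "boosted-trees"),
    Recommendation("hearth protection", Severity.HIGH, 0.81, "hearth-cnn"),
    Recommendation("coke saving", Severity.MEDIUM, 0.90, "boosted-trees"),
    Recommendation("hot blast temperature", Severity.MEDIUM, 0.72, "stove-nn"),
]
ranked = rank_feed(feed)
```

Under this assumed rule, a HIGH card always sits above a MEDIUM card, even when the MEDIUM card has the higher confidence.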

Five Active Recommendations · Ranked, Badged, Scored

An illustrative snapshot of what the feed looks like at the start of a 14:00 shift on a 5,000 m³ blast furnace. Numbers and severities are representative — your feed is built against your furnace, your historian, and your operating envelope. We can walk through your data in a discovery session.

Every Recommendation Carries A Badge — So Operators Know Where It Came From

There is no single model behind the feed. Different parts of the furnace have different physics, different timescales, and different best-fit techniques. Each recommendation surfaces the badge of the model that produced it, so the operator and the metallurgist can drill into the reasoning rather than treating the feed as a black box.

Gradient-boosted regressors trained on your historian. Strong on tabular relationships between chemistry, blast conditions, burden composition, and fuel rate. Used for PCI, pellet ratio, and slag-basicity recommendations.

3D fluid + particle simulation of the shaft and bosh. Reads radar profilometer data to reconstruct burden layers, predicts gas-flow centring and pressure-drop response. Slow but rigorous — used for burden distribution recommendations.

Convolutional neural network trained on hearth thermocouple time series. Identifies wall-washing patterns and tap-hole-driven wear signatures. Coupled to the inverse heat-transfer solver for hearth-protection recommendations.

Feed-forward neural network on stove cycle data — dome temperatures, BFG calorific value, oxygen enrichment, on-gas/on-blast timing. Outputs a recommended HBT setpoint inside the envelope the stoves can sustain.

Anatomy Of A Recommendation Card

Every card carries the same eight elements. Once an operator has read three of these in a week, they are reading them all the same way — at a glance.

Approve, Defer, Reject — Every Action Logged

A recommendation is not an instruction. The operator owns the call, and the audit trail preserves the reasoning either way. The feed is designed around the workflow operators already run, not against it.

Approve. Operator accepts. The setpoint change is sent to the relevant DCS / HMI on a write-confirm pattern (no autopilot). The outcome is captured automatically over the next 1–24 hours and stored against the recommendation.

Defer. Operator wants more data, or the timing is wrong. The recommendation parks in the feed with a "deferred until" timestamp and re-surfaces when that time arrives. The model can re-rank the recommendation if conditions change.

Reject. Operator disagrees, with a reason. The reason is high-value training data: the next monthly retraining cycle uses it to refine when this kind of recommendation should and should not be raised.
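The three operator actions can be sketched as a small state model. This is an illustrative Python sketch under stated assumptions: the `Card` class, the status strings, and the `visible` helper are hypothetical names, and the rule that a deferred card reappears once its "deferred until" time has passed is one plausible reading of the behaviour described above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Card:
    lever: str
    status: str = "active"  # active | approved | deferred | rejected
    deferred_until: Optional[datetime] = None
    reason: Optional[str] = None


def approve(card: Card) -> None:
    card.status = "approved"


def defer(card: Card, until: datetime, reason: Optional[str] = None) -> None:
    card.status, card.deferred_until, card.reason = "deferred", until, reason


def reject(card: Card, reason: str) -> None:
    # A rejection comes with a reason, so an empty one is refused here.
    if not reason.strip():
        raise ValueError("a rejection needs a reason")
    card.status, card.reason = "rejected", reason


def visible(cards: List[Card], now: datetime) -> List[Card]:
    """Active cards, plus deferred cards whose 'deferred until' time has passed."""
    return [
        c for c in cards
        if c.status == "active"
        or (c.status == "deferred" and c.deferred_until <= now)
    ]


# Illustrative usage: one card deferred for two hours at the start of a shift.
shift_start = datetime(2026, 5, 13, 14, 0)
pci = Card("PCI rate")
hbt = Card("hot blast temperature")
defer(hbt, until=shift_start + timedelta(hours=2), reason="waiting on stove changeover")
```

With these values, `visible([pci, hbt], shift_start)` returns only the PCI card, and the deferred card returns to the list once the two hours have elapsed.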

The audit trail. Every recommendation, every action, every override, every outcome is logged with timestamp, operator ID, model version, and the inputs the model saw. Exportable for ISO 9001 / IATF 16949 reviews and internal post-mortems.
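As a rough picture of what one exported audit entry might contain, the sketch below serialises a record to a single JSON line. The field names, the `AuditRecord` class, and the JSON-lines export format are assumptions for illustration; only the kinds of information (timestamp, operator ID, model version, model inputs, action, reason) come from the description above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Dict, Optional


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str             # ISO 8601, UTC
    operator_id: str
    model_version: str
    recommendation_id: str     # hypothetical identifier
    action: str                # "approve", "defer", or "reject"
    reason: Optional[str]
    inputs: Dict[str, Any]     # the inputs the model saw


def to_json_line(record: AuditRecord) -> str:
    """Serialise one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


# Illustrative values only.
record = AuditRecord(
    timestamp=datetime(2026, 5, 13, 14, 5, tzinfo=timezone.utc).isoformat(),
    operator_id="op-17",
    model_version="hbt-model-1.4.2",
    recommendation_id="rec-0042",
    action="reject",
    reason="stove changeover in progress",
    inputs={"dome_temp_c": 1340.0, "bfg_cv_mj_per_nm3": 3.4},
)
line = to_json_line(record)
```

One line per event keeps the export append-only and easy to filter by operator, model version, or recommendation during a review.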

Decision Support, Not Autopilot

What It Is

- A ranked, time-stamped, model-badged feed of operational levers

- Built on your historian, your envelope, your operating data

- Honest about confidence, including when confidence is low

- An aid to shift handover, not a replacement for the handover meeting

- Audit-logged, versioned, exportable

What It Is Not

- An autopilot that moves setpoints without operator approval

- A claim that every recommendation is right: some will be wrong

- A replacement for metallurgist judgement on edge-case decisions

- A static dashboard: recommendations re-rank as conditions change

- A black box: every model has a badge and an explanation path

What Operators & Plant Heads Ask First

Three to seven active items at any moment, roughly. The feed is tuned to surface what matters now — not to maximise the number of suggestions. If the feed shows fifteen items, something is wrong with the tuning, not the furnace.

When two recommendations conflict, the feed flags it explicitly. For example, a HIGH hearth-protection recommendation that asks for tap-hole rotation may conflict with a MEDIUM coke-saving recommendation that assumed steady tap rotation. The conflict is shown on both cards and the higher-severity item takes precedence in ranking.
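One way to picture conflict flagging is sketched below in Python. The mechanism is an assumption for illustration, not the product's actual logic: each recommendation declares what it would change and what it assumes stays steady, and two cards conflict when one changes something the other assumed was steady. The `Rec` class and its field names are hypothetical.

```python
from dataclasses import dataclass, field
from itertools import combinations
from typing import List


@dataclass
class Rec:
    name: str
    severity: int                           # 3 = HIGH, 2 = MEDIUM, 1 = LOW
    changes: frozenset = frozenset()        # what this card would alter
    assumes_steady: frozenset = frozenset() # what this card assumes is unchanged
    conflicts: List[str] = field(default_factory=list)


def flag_conflicts(recs: List[Rec]) -> List[Rec]:
    """Mark conflicts on both cards, then order by severity (highest first)."""
    for a, b in combinations(recs, 2):
        if a.changes & b.assumes_steady or b.changes & a.assumes_steady:
            a.conflicts.append(b.name)
            b.conflicts.append(a.name)
    return sorted(recs, key=lambda r: r.severity, reverse=True)


# The example from the text: tap-hole rotation versus steady tap rotation.
hearth = Rec("hearth protection", 3, changes=frozenset({"tap-hole rotation"}))
coke = Rec("coke saving", 2, assumes_steady=frozenset({"tap-hole rotation"}))
ordered = flag_conflicts([coke, hearth])
```

Both cards end up carrying the other's name in `conflicts`, and the HIGH hearth-protection card is listed first.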

Operators can override any recommendation, with a logged reason. The system does not block operator authority. What it does is preserve the trail: the recommendation was raised at this time, with this confidence, the operator overrode for this reason, and the outcome was X. That trail is useful for post-mortems and for refining future recommendations.

How quickly a recommendation can be validated depends on the lever. A recommendation with a fast, observable outcome (PCI rate change, hot blast step) is validated within hours. A slow-effect recommendation (pellet ratio, burden distribution) takes shifts to validate. Wrong recommendations that are not caught at the time surface in the monthly retraining review against actual outcomes.

The feed can push changes to the DCS, with deliberate friction. We integrate with major DCS systems (Siemens, ABB, Rockwell, Honeywell) on a write-confirm pattern: the recommendation can populate a setpoint candidate, but the operator's confirmation is what actually moves it. Talk to a deployment engineer for the integration scope on your stack.
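The write-confirm idea can be sketched in a few lines of Python. This is not a real DCS or vendor API: `SetpointCandidate`, the tag name, and the `write` callback are hypothetical stand-ins, and the sketch only shows the principle that nothing is written until an operator confirms.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class SetpointCandidate:
    tag: str                            # hypothetical tag name
    proposed: float                     # value suggested by the recommendation
    confirmed_by: Optional[str] = None  # operator ID once confirmed

    def confirm(self, operator_id: str, write: Callable[[str, float], None]) -> None:
        """Write the proposed value only after an operator confirms it."""
        if not operator_id:
            raise PermissionError("operator confirmation required")
        if self.confirmed_by is not None:
            raise RuntimeError("candidate already written")
        write(self.tag, self.proposed)
        self.confirmed_by = operator_id


# Stand-in for the control system: a dict that records written values.
written: Dict[str, float] = {}
candidate = SetpointCandidate(tag="HBT_SP", proposed=1215.0)
staged_only = dict(written)  # still empty: staging a candidate writes nothing
candidate.confirm("op-17", written.__setitem__)
```

Keeping the write behind `confirm` means the recommendation can pre-fill a value, while the operator's action remains the only path to the setpoint.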

When the model and the metallurgist disagree, the metallurgist wins. The feed is decision support, not authority. The disagreement gets logged, the metallurgist's reasoning is captured, and the model retrains on it. Most of our highest-value model improvements over the first six months come from exactly these disagreements.

See The Feed Running On Your Furnace's Data

A working session, not a pitch: bring 90 days of historian data — chemistry, blast conditions, burden, hearth thermocouples — and our team walks through what the recommendations feed would have surfaced at three or four real moments in your last campaign quarter. No commitment, no slides, just the model output on your data.