A power plant operator's hardest decisions are not the ones made every shift. They are the ones that come up two or three times a year. Should we accept the up-rate the dispatcher just offered, or hold at current load? Should we switch to the cheaper coal blend the procurement team negotiated, or stay on the certified fuel through the next outage? Should we trim the condenser cooling water flow now that the river is at its summer minimum? Each of those decisions has a number behind it that your existing physics models can compute. But a full thermodynamic simulation of the plant takes hours, the engineer who runs it is in the middle of three other things, and by the time the answer comes back the dispatcher has moved on.

The iFactory Scenario Evaluator is the layer that closes that gap. A surrogate ML model, calibrated against your physics-based digital twin, runs each what-if scenario in seconds rather than hours, orders of magnitude faster than the underlying simulation it learned from. That is fast enough for an operator to ask the question and for the engineer to have a defensible answer back inside the same conversation. The Evaluator runs on an on-site NVIDIA RTX PRO 6000 Blackwell workstation, whose 96 GB of GDDR7 memory holds the full surrogate plus a year of historian context. Walk through the model stack and a live scenario at our webinar on May 13, 2026. Register here.

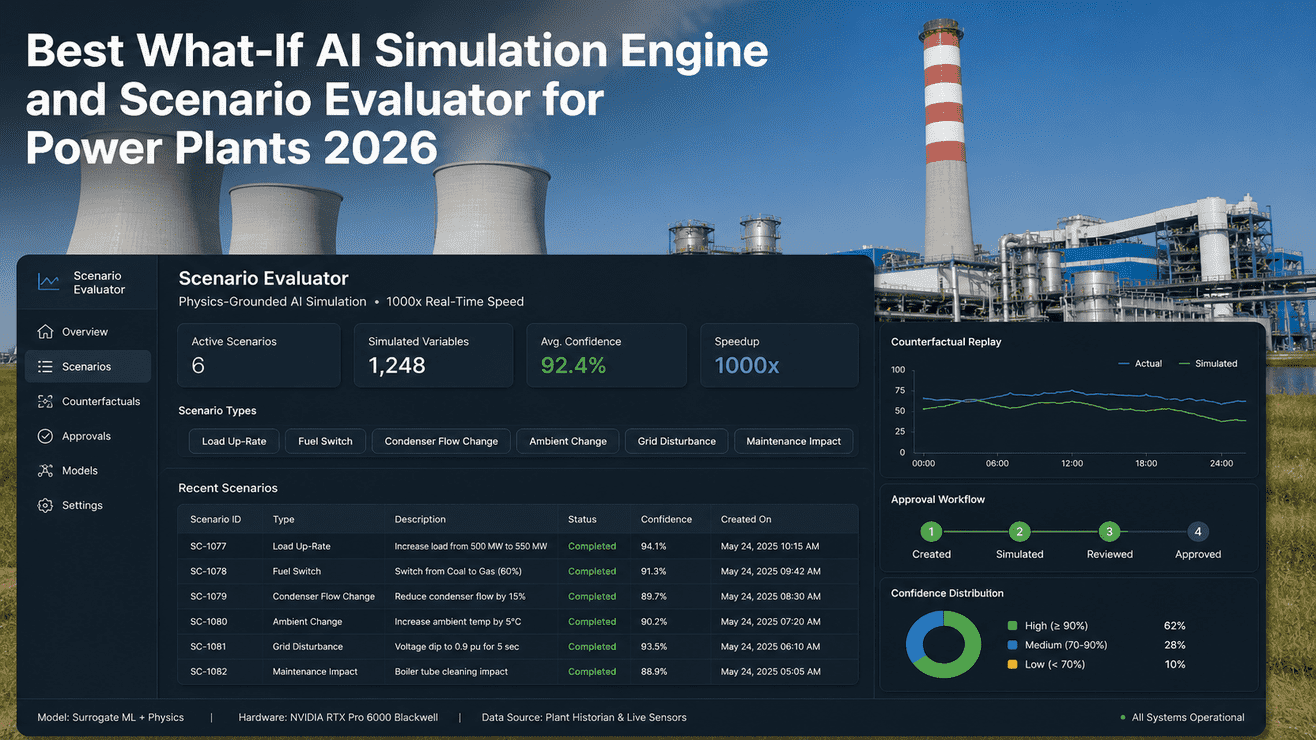

Scenario Evaluator: What-If Simulation For The Decisions That Don't Wait

Load up-rate, fuel switch, condenser flow change, ambient swing, partial shutdown — each what-if evaluated by a physics-grounded surrogate ML in seconds, not hours. Answers in the units engineers already work in: heat rate, MW, °C, ppm. Honest about confidence. Owned by you outright.

Five Kinds Of What-If, One Engine

Most operating decisions an engineer faces fall into one of five scenario classes. The Evaluator ships with a surrogate trained on each, calibrated against your plant's physics model and your last 18–36 months of historian data. Custom scenarios extend the same framework.

Load up-rate: Move generation up or down by 5–25% over a defined ramp. Returns expected heat rate, fuel consumption, condenser duty, and which equipment hits its design margin first.

Fuel switch: Substitute a different coal blend, gas calorific value, or biomass co-firing ratio. Returns expected boiler heat absorption, NOx / SOx, slag tendency, and ash chemistry effects.

Condenser flow change: Step condenser cooling water flow, cooling tower fan staging, or air-cooled condenser pitch. Returns expected back-pressure, vacuum, and the back-pressure-to-output sensitivity at current load.

Ambient swing: Project plant performance under a forecast ambient profile: temperature, humidity, river temperature, dust loading. Useful for de-rating decisions and dispatch planning across heat waves and cold snaps.

Partial shutdown: Take a mill, fan, pump, or feedwater train offline. Returns expected MW capability, redundancy margin, and the constraints that bind first as the remaining equipment picks up the load.
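For readers who think in code, the five classes can be pictured as one request shape with a class tag and a parameter set in engineering units. This is a minimal sketch only: `ScenarioClass`, `ScenarioRequest`, and the parameter keys are hypothetical names chosen for illustration, not the Evaluator's actual interface.

```python
from dataclasses import dataclass, field
from enum import Enum


class ScenarioClass(Enum):
    # Hypothetical labels for the five what-if classes described above.
    LOAD_CHANGE = "load_change"
    FUEL_SWITCH = "fuel_switch"
    COOLING_CHANGE = "cooling_change"
    AMBIENT_SWING = "ambient_swing"
    PARTIAL_SHUTDOWN = "partial_shutdown"


@dataclass(frozen=True)
class ScenarioRequest:
    scenario_class: ScenarioClass
    # Parameters in engineering units, e.g. {"load_delta_pct": 10.0}.
    parameters: dict = field(default_factory=dict)

    def validate(self) -> None:
        # Only the load-change range (5-25%) is stated in the text above;
        # the other classes are left unchecked in this sketch.
        if self.scenario_class is ScenarioClass.LOAD_CHANGE:
            delta = abs(self.parameters["load_delta_pct"])
            if not 5.0 <= delta <= 25.0:
                raise ValueError("load change must be 5-25% of current generation")


# Assumed example: a 10% up-rate over a 30-minute ramp.
request = ScenarioRequest(
    ScenarioClass.LOAD_CHANGE,
    {"load_delta_pct": 10.0, "ramp_minutes": 30.0},
)
request.validate()
```

Keeping every class behind one request type is one way custom scenarios could extend the same framework without a new code path per class.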

"What Would Have Happened If We Had Done X Instead?"

Counterfactual replay points the surrogate at a real moment in your historian and asks the alternative-history question. The plant's actual trajectory is the baseline. The surrogate runs the same period under the alternative decision and shows the divergence — heat rate, MW, fuel, emissions — over the hours that followed. Useful for post-event reviews, incentive-scheme tuning, and operator coaching.

Read it as evidence, not gospel. A counterfactual is a model's best estimate of an unrun history. It carries a confidence band, and the band is wider for scenarios further from observed operation. Useful for review meetings; less suited for live decisions where the actual physics never ran.
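The comparison behind a replay can be sketched in a few lines: subtract the recorded baseline from the surrogate's alternative history, hour by hour, and only treat a difference as meaningful when it exceeds the confidence band. The function name, the numbers, and the way the band widens over time are all assumptions made for illustration.

```python
def divergence(baseline, counterfactual, band):
    """Per-hour delta between the surrogate's alternative history and the
    recorded baseline, flagged when the delta exceeds the confidence band."""
    rows = []
    for hour, (actual, alt, half_width) in enumerate(zip(baseline, counterfactual, band)):
        delta = alt - actual
        rows.append({
            "hour": hour,
            "delta": delta,
            # A delta smaller than the band is indistinguishable from model error.
            "significant": abs(delta) > half_width,
        })
    return rows


# Illustrative (made-up) heat-rate values in kJ/kWh, not plant data.
baseline_heat_rate = [9800.0, 9820.0, 9850.0]
counterfactual_heat_rate = [9800.0, 9790.0, 9760.0]
# Assumed band half-widths, growing as the replay drifts from recorded operation.
band_half_width = [20.0, 40.0, 60.0]

for row in divergence(baseline_heat_rate, counterfactual_heat_rate, band_half_width):
    print(row)
```

In this made-up case only the last hour clears its band, which is the kind of result worth raising in a review meeting rather than acting on live.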

Six Recent Scenarios — How They Look In The System

An illustrative snapshot of the scenario inbox at the start of an engineering shift. Numbers and outcomes are representative — your evaluator is calibrated against your plant. Engineers can walk through your data in a discovery session.

From Proposal To Execution — Every Step Logged

A scenario is not an instruction. The Evaluator produces evidence; the engineer and the plant manager own the call. The workflow preserves the full chain of reasoning — proposal, model output, reviewer, decision, and post-execution outcome — for audit and for learning.

Engineer or operator opens a scenario, sets the parameters in the units they work in, runs the surrogate. The model returns the projected outcome, the confidence band, and the binding constraints expected.

Reviewer (shift super, plant manager, or technical authority depending on scope) sees the surrogate output, the historian baseline, and any cross-references to similar past scenarios. They approve, defer, or reject — with a reason captured.

If approved, the scenario can populate setpoint candidates on the DCS / HMI on a write-confirm pattern (no autopilot). Operator confirmation is what actually moves the setpoint. Monitoring triggers are populated automatically.

After the scenario period closes, the actual outcome is captured from the historian and compared to the surrogate's prediction. The delta is logged. Periodic retraining uses these deltas to sharpen the surrogate.
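The four steps above behave like a small state machine in which every transition is logged and an approved scenario cannot reach the plant without operator confirmation. The sketch below assumes hypothetical state names, a hypothetical `Scenario` class, and a made-up scenario ID; it shows the pattern, not the product's code.

```python
from dataclasses import dataclass, field

# Allowed transitions, following the four steps described above.
TRANSITIONS = {
    "proposed": {"approved", "deferred", "rejected"},
    "deferred": {"approved", "rejected"},
    "approved": {"confirmed"},   # operator confirms the setpoint candidate
    "confirmed": {"closed"},     # actual outcome compared with the prediction
}


@dataclass
class Scenario:
    scenario_id: str
    predicted: float              # surrogate prediction, engineering units
    state: str = "proposed"
    log: list = field(default_factory=list)

    def advance(self, new_state: str, actor: str, reason: str = "") -> None:
        if new_state not in TRANSITIONS.get(self.state, set()):
            raise ValueError(f"{self.state} -> {new_state} is not allowed")
        # Every transition is appended to the log with who made it and why.
        self.log.append((self.state, new_state, actor, reason))
        self.state = new_state

    def close(self, actual: float, actor: str) -> float:
        # Delta between historian outcome and prediction, logged for retraining.
        delta = actual - self.predicted
        self.advance("closed", actor, f"delta={delta:+.1f}")
        return delta


s = Scenario("SCN-0001", predicted=9750.0)   # hypothetical ID and value
s.advance("approved", "shift_super", "within margin")
s.advance("confirmed", "operator")
print(s.close(actual=9762.5, actor="historian"))
```

Because `approved` can only lead to `confirmed`, there is no path from a reviewer's decision to a moved setpoint that skips the operator.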

Surrogate ML, Calibrated Against The Physics That Built It

The Evaluator is not a black-box neural network running guesses. It is a surrogate trained on the output of your physics-based digital twin — thermodynamic cycle models, rotor dynamics, electromagnetic models — across a structured envelope of operating points. The physics is the source of truth. The surrogate is the speed.

Thermodynamic cycle for boilers and HRSGs, rotor dynamics for turbines, electromagnetic models for generators, hydraulics for cooling. Calibrated against your last 18–36 months of historian data, with deviation baselines per parameter. This is the slow, rigorous engine.

Neural network trained on tens of thousands of physics simulations across the plant's operating envelope, with physics-informed loss terms that enforce conservation laws. Returns the same answers as the underlying twin — within a known confidence band — at a fraction of the runtime.

Every scenario, every reviewer decision, every post-execution outcome captured with a stable scenario ID. Exportable for ISO 50001 reviews, regulatory dispatch audits, and internal post-event analysis. The audit trail is what makes the Evaluator defensible to a regulator.
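To make "physics-informed loss terms that enforce conservation laws" concrete, here is a minimal sketch of the idea: the training loss is the usual fit error against the twin's output, plus a penalty whenever the prediction violates an energy balance. The single-term balance, the dictionary keys, and the weight are simplifying assumptions; a real plant balance has more terms, and the source does not specify the loss actually used.

```python
def physics_informed_loss(pred, target, energy_in, weight=0.1):
    """Data-fit error plus a penalty on energy-balance violation.

    pred / target: dicts with 'power_mw' and 'heat_rejected_mw' (hypothetical keys).
    energy_in: fuel heat input in MW. In this simplified first-law balance,
    heat in minus (power out + heat rejected) should be close to zero.
    """
    # Mean squared error against the physics twin's output.
    data_term = sum((pred[k] - target[k]) ** 2 for k in target) / len(target)
    # Conservation residual: non-zero means the prediction creates or loses energy.
    residual = energy_in - (pred["power_mw"] + pred["heat_rejected_mw"])
    return data_term + weight * residual ** 2


# Made-up numbers for illustration only.
twin_output = {"power_mw": 400.0, "heat_rejected_mw": 600.0}
consistent = {"power_mw": 400.0, "heat_rejected_mw": 600.0}
violating = {"power_mw": 410.0, "heat_rejected_mw": 600.0}  # 10 MW from nowhere

print(physics_informed_loss(consistent, twin_output, energy_in=1000.0))
print(physics_informed_loss(violating, twin_output, energy_in=1000.0))
```

The second prediction is penalised twice: once for missing the twin's answer, and again for breaking the energy balance, which is what steers the network toward physically plausible outputs.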

A Confidence Band That Means Something

Every scenario carries a confidence percentage. It is not a vibe. It is computed from the surrogate's training-data density around the requested operating point — high where the surrogate has seen many similar conditions, low where the surrogate is extrapolating outside the envelope it was trained on.
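One simple way to turn training-data density into a percentage is to measure how far the requested operating point sits from its nearest training points. The text above does not say how density is measured, so the k-nearest-neighbour distance, the exponential mapping, the scales, and the 50% threshold in this sketch are all assumptions chosen for illustration.

```python
import math


def density_confidence(query, training_points, scales, k=3):
    """Map the mean distance to the k nearest training points to a 0-100% score.

    Distances are scaled per parameter so MW and °C are comparable.
    The exp(-d) mapping is an illustrative choice, not a calibrated one.
    """
    def dist(p):
        return math.sqrt(sum(((q - x) / s) ** 2 for q, x, s in zip(query, p, scales)))

    nearest = sorted(dist(p) for p in training_points)[:k]
    mean_dist = sum(nearest) / len(nearest)
    return 100.0 * math.exp(-mean_dist)


# Made-up training envelope: (load in MW, cooling water inlet in °C).
train = [(400, 20), (420, 22), (440, 24), (460, 26), (480, 28)]
scales = (50.0, 5.0)

inside = density_confidence((440, 24), train, scales)
outside = density_confidence((600, 40), train, scales)
print(f"inside envelope: {inside:.0f}%, outside: {outside:.0f}%")

# Hypothetical policy: below 50%, recommend running the full physics twin.
needs_full_twin = outside < 50.0
```

A point in the middle of the training data scores high, while a point far outside the envelope scores near zero and would be flagged for the full simulation.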

A Workstation, Not A Data Centre

A scenario evaluator works best where engineers work — in the engineering office, near the control room, accessible without VPN. A workstation-class GPU with serious memory beats a far-off rack of inference servers for this job. The RTX PRO 6000 Blackwell happens to be the right card for it.

Enough headroom to hold the full plant surrogate in memory plus a year of historian context plus several scenario runs in flight. Means an engineer running a sweep doesn't have to wait for memory paging.

The compute that turns a multi-hour physics simulation into a multi-second surrogate evaluation. Fifth-generation Tensor Cores accelerate the physics-informed neural network at FP4 / FP8 precision where the surrogate can use it.

Sits in a tower or deskside chassis in the engineering office. Power and Ethernet are the only on-site requirements. No data centre, no rack, no remote VPN — engineers run scenarios where they think.

What Engineers & Plant Managers Ask First

It doesn't replace it. The full-physics simulator is the source of truth — that's what the surrogate is trained against. The Evaluator gives you a faster way to ask the simulator's questions, and an audit trail around the answers. For unusual or safety-critical scenarios you still run the full simulator.

For scenarios inside the trained envelope, surrogate evaluations typically return in 2–10 seconds. The underlying full-physics simulation those answers were trained from would take 30 minutes to several hours depending on scope. The speedup is real but problem-dependent — we share specific benchmarks against your physics model in the proposal.

Yes, it can. Surrogates extrapolate poorly outside their training envelope, and a confident-looking answer in a sparse region of the operating space is exactly the kind of failure mode to watch for. That's why every scenario carries a confidence band tied to training-data density, and why low-confidence scenarios are flagged with a recommendation to run the full physics twin instead.

Yes, on a write-confirm pattern with deliberate friction. An approved scenario can populate setpoint candidates on the DCS, but only the operator's confirmation moves the setpoint. We integrate with the major DCS platforms using read-only access plus a write-back queue. Talk to a deployment engineer for the integration scope on your stack.

Quarterly is the default cadence: the surrogate retrains on the latest physics model and the latest 90 days of historian data. Major plant changes (new turbine internals, fuel mix shifts, control philosophy changes) trigger an out-of-cycle retraining. The audit log preserves which surrogate version produced each historical scenario.

The workstation keeps running. You own the GPU, the surrogate weights, the full audit log, and the integration code. Renew support and quarterly retraining annually, run it in-house with our handover docs, or mix both. The Evaluator does not call out to any cloud service, so there is no kill switch.

Bring A Real Scenario From Your Last Quarter. We'll Run It.

A working session, not a pitch: pick a real what-if from the last quarter — a load swing you wished you had numbers for, a fuel switch you debated, a derate decision your team disagreed on. We pre-build a scenario template on a representative plant model, walk through the surrogate output, and show what the audit trail and confidence band would have looked like in your context.