An operator on a tablet press at 2:14 AM sees the compression force trending toward the upper validated limit. They have a question, and three possible places the answer lives: the current SOP, revision 14, somewhere in the QMS document portal; the eBR for this batch, mid-execution; and the deviation log from a similar event eleven months ago, written up by a colleague on a different shift. None of those answers can wait until the morning Quality meeting. None of them should be guessed at. The Operator Copilot is the intermediary: a voice and chat interface that takes the operator's question, retrieves the relevant SOP paragraph, the matching eBR step, and the prior deviation citation, and answers in plain language with every source shown. It does not adjust the press. It does not auto-close the deviation. It does not sign the batch record. On any GxP-affecting action, the copilot drafts and the qualified person decides. That line is hard, audited, and never crossed. The copilot is built on Llama 3.1 70B with mandatory citation grounding, runs on an on-site RTX PRO 6000 Blackwell appliance inside your validated environment, and goes live in 6–12 weeks from PO. We're walking through the full architecture, including the citation guardrails and the GxP human-in-loop boundary, on a live webinar. Register here.

Voice & Chat AI For Pharma Operators, With Mandatory Citations & No Auto-Action On GxP

Operators ask in voice or chat. The copilot answers from your SOPs, eBR, deviations and OOS records — every answer carries the citation it came from. It drafts work orders, deviation entries, and CAPA suggestions. It never signs, closes, or auto-executes anything that affects GxP. The qualified person owns the click. Turnkey RTX PRO 6000 Blackwell appliance, your data stays inside your validated zone, you own the platform outright.

The Question An Operator Asks At 2 AM Has A Real Cost

Industry studies put average deviation cost in a range of €22,000 to €48,000, with product-loss events crossing €880,000 per deviation. A meaningful share of those events trace back to a moment where an operator made a judgement call without ready access to the SOP step, the prior deviation history, or the eBR context they needed. The copilot doesn't replace the operator's judgement — it shortens the distance between the question and the citation. Talk to our pharma lead about your shift-floor workflow.

Operator can't reach quality. SOP is on a shared drive, revision unclear. Decision goes the wrong way. Deviation gets written up next shift. Investigation runs 30 days. Root cause: documentation access, not skill.

Voice query at the press: "what's the action limit on compression force for this product?" Copilot returns the SOP § with revision number, the eBR step, and the relevant prior deviation. Operator decides. Audit trail captured.

An AI that auto-closes a deviation, auto-signs an eBR step, or auto-adjusts a setpoint on a GxP-affecting parameter is not a copilot — it's an unvalidated controller. The copilot drafts. The QP signs. Always.

What The Copilot Sounds Like On The Tablet Press Line

An illustrative dialogue showing how the copilot handles a real-time question. Notice three things: every answer carries a citation, work-order drafting is offered but execution is held for the QP, and the copilot refuses to auto-act on a GxP-affecting setpoint adjustment. This is a representative example, not a transcript from a real plant.

"Compression force is trending high on Press 3, batch P-44218. What's the action limit for this product?"

For Product 44 Tablet — main compression force action limit is 18.5 ± 1.2 kN. Upper action threshold = 19.7 kN. You're currently at 19.2 kN.

"Has anyone hit this on Product 44 before?"

Yes — three prior events on this product across the last 18 months. Two closed as minor (granulation moisture +0.4%). One closed as major (turret bearing wear, replaced under PM). Most recent: Dev-2025-0871, similar trend, root cause was high LOD on incoming granulation.

"Lower the main compression force by 0.5 kN."

I cannot adjust a GxP-affecting setpoint. Compression force on Press 3 is a critical process parameter under SOP-MFG-044 § 7.1.

I can do this instead:

- Draft a deviation entry with the trend data and prior-event references

- Draft a work order for the night supervisor and shift QP

- Page the on-call quality lead with the citations attached

Choose one and the qualified person handles the action.

"Page the QP and draft the deviation."

Done. QP paged at 02:16, deviation draft Dev-2026-DRAFT-0418 created and attached. Awaiting QP review and electronic signature before submission.
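The limit arithmetic behind the copilot's first answer in the dialogue can be sketched as follows. This is a minimal illustration using only the example figures above (18.5 ± 1.2 kN, current reading 19.2 kN); the function names `upper_action_limit` and `headroom` are hypothetical and are not part of the product. In the copilot itself the figures would come from the cited SOP, not from code.

```python
from decimal import Decimal

def upper_action_limit(nominal_kn: Decimal, tolerance_kn: Decimal) -> Decimal:
    """Upper action threshold = nominal value + tolerance."""
    return nominal_kn + tolerance_kn

def headroom(current_kn: Decimal, limit_kn: Decimal) -> Decimal:
    """Distance left before the reading reaches the upper action limit."""
    return limit_kn - current_kn

# Example figures from the illustrative dialogue (not real plant data).
limit = upper_action_limit(Decimal("18.5"), Decimal("1.2"))
margin = headroom(Decimal("19.2"), limit)
print(f"Upper action limit: {limit} kN, headroom: {margin} kN")
```

`Decimal` is used so that the sum comes out as exactly 19.7 rather than a binary floating-point approximation.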

Where The Copilot Earns Its Place On The Floor

Six recurring shift-floor situations where a citation-backed answer in 4 seconds beats a 40-minute search across QMS, MES and email. None of these involve the copilot taking action on its own — every output is either a read-aloud answer with citation, or a draft handed to a qualified person.

"What's the next step after granulation in the eBR for batch P-44218?" — answer pulled from the live eBR, with the SOP step shown alongside, revision number visible.

"Has this trend been seen on this product before?" — copilot returns prior deviation IDs, root causes, and CAPA closure status, each with a clickable citation back to the source record.

Operator asks "what's the cleaning verification limit for Product 44 to Product 51 changeover?" — copilot pulls the validated cleaning matrix and the relevant SOP, no math required from the operator.

Voice describes the issue, copilot drafts the deviation with timestamp, batch, equipment, observed values, and prior-event references pre-populated. Quality reviews and signs — the draft is a starting point, not a final record.

"What's the gowning sequence for Grade B entry on this product?" — answer with SOP citation, useful in training and useful at 3 AM when the regular gowning supervisor is off shift.

For aseptic core operators, the copilot runs through Vuzix or RealWear AR glasses — voice in, voice out, hands stay inside the gowning. The same citation discipline. The same GxP boundary.

Mandatory Citations — Every Answer, Every Time, Or No Answer

In a regulated environment, an LLM that occasionally fabricates is worse than no LLM at all. The copilot enforces citation discipline at three layers: retrieval-only generation, response-time citation verification, and pre-display blocking on uncited claims. Independent benchmarks of Llama 3.1 70B in unconstrained Q&A show high grounding scores paired with a known fabrication risk on harder questions — which is exactly why we constrain it. We walk through the full citation pipeline on the live webinar.

The model is prompted to answer only from the retrieved chunks of your SOPs, eBR, deviations, OOS, CAPA, and validated guidance documents. If retrieval returns nothing relevant, the copilot says so — it does not fall back to its base-model knowledge for a regulated answer. This is the first and most important guardrail.
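As a rough sketch of this first guardrail: the prompt is built only from retrieved chunks, and if nothing relevant comes back, no prompt is built at all. The `Chunk` type, the `min_score` threshold, and the prompt wording are all assumptions made for illustration; the actual retrieval pipeline and prompt are not described here.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Chunk:
    doc_id: str     # hypothetical: identifier of the source record
    revision: str   # hypothetical: revision of that record
    text: str
    score: float    # hypothetical retrieval relevance, higher is better

def build_grounded_prompt(
    question: str, chunks: list[Chunk], min_score: float = 0.5
) -> Optional[str]:
    """Return a sources-only prompt, or None when nothing relevant was retrieved."""
    relevant = [c for c in chunks if c.score >= min_score]
    if not relevant:
        # No fallback to base-model knowledge: the caller must report "no source".
        return None
    sources = "\n".join(f"[{c.doc_id} rev {c.revision}] {c.text}" for c in relevant)
    return (
        "Answer ONLY from the sources below and cite a source ID for every claim.\n"
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )
```

Returning `None` instead of a weaker prompt makes the "no source" path explicit, so the caller cannot accidentally send an ungrounded question to the model.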

Every claim in the generated answer is checked against the retrieved chunks at response time using a separate small model. Claims that don't trace to a retrieved source are flagged. Citation IDs are required to overlap retrieved document IDs — enforced mechanically, not by hope. Uncited or unsupported claims do not reach the operator.
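The mechanical part of this check, comparing citation IDs against retrieved document IDs, might look something like the sketch below. It assumes citations appear in the answer as bracketed IDs such as `[SOP-MFG-044]`, which is an illustrative convention and not a documented format. It also applies a stricter rule than simple overlap: every cited ID must be among the retrieved IDs. The claim-level check by the separate small model is not shown.

```python
import re

# Assumed citation format: an ID in square brackets, e.g. [SOP-MFG-044].
CITATION_PATTERN = re.compile(r"\[([A-Za-z0-9-]+)\]")

def extract_citations(answer: str) -> set[str]:
    """Collect every bracketed citation ID found in the answer text."""
    return set(CITATION_PATTERN.findall(answer))

def citations_are_grounded(answer: str, retrieved_ids: set[str]) -> bool:
    """True only if the answer cites something and cites nothing that was not retrieved."""
    cited = extract_citations(answer)
    return bool(cited) and cited <= retrieved_ids
```

An answer with no citations at all fails the check, which matches the rule that uncited claims do not reach the operator.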

If verification fails, the copilot doesn't render an unsupported answer — it tells the operator the question can't be answered from the available sources and offers to page Quality. Every interaction (operator turn, retrieved chunks, generated turn, verification result, what was shown) is written to an immutable audit trail aligned with 21 CFR Part 11 and EU Annex 11.
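How the audit trail is made immutable is not specified here. One common way to make a log tamper-evident is hash chaining, where each entry's hash covers the previous entry's hash. The sketch below shows that technique purely as an assumption about how such a trail could work; the record fields are hypothetical, and hash chaining on its own does not make a system compliant with 21 CFR Part 11 or Annex 11.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record's canonical JSON."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def append_entry(log: list[dict], record: dict) -> dict:
    """Append a record, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    }
    log.append(entry)
    return entry

def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

For example, after logging an operator turn and a verification result, changing any field of the first record makes `verify_chain` return `False`.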

What The Copilot Will Never Do — A Hard List

Pharmaceutical Engineering's published guidance on GenAI in deviation management is direct on this point: AI serves as an assistant, not an autonomous decision-maker. All AI-generated content must be reviewed, approved, and archived by qualified personnel before becoming part of official GMP documentation. We've encoded that principle into the copilot at the API level. The actions below are not just disallowed by policy — the copilot has no tool surface to perform them at all.

Electronic signatures under 21 CFR 11.50 require an identified individual with appropriate training and authority. The copilot has no signature capability — it can prepare a draft, never bind one.

Closure decisions belong to the qualified person and the cross-functional review (Quality, Production, Engineering). The copilot drafts, attaches citations, and routes — it does not approve.

Critical process parameters — temperature, pressure, compression force, fill weight — are not in the copilot's action surface. It can advise, recall prior events, suggest the right SOP — never write to the PLC.

The copilot's job: answer with citation, draft when asked, route to the qualified person, and log everything. The human-in-loop discipline is what keeps AI usable in a GxP environment.
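The idea of "no tool surface" can be illustrated with a tool registry that simply contains no signing, closing, or setpoint-writing entry. This is a hypothetical sketch: the tool names, return shapes, and the `call_tool` dispatcher are invented for illustration and do not describe the product's actual API.

```python
from typing import Callable

def draft_deviation(summary: str) -> dict:
    """Create a draft only; submission still needs QP review and signature."""
    return {"type": "deviation_draft", "summary": summary, "status": "awaiting_qp_review"}

def draft_work_order(summary: str) -> dict:
    """Create a work-order draft routed to the qualified person."""
    return {"type": "work_order_draft", "summary": summary, "status": "awaiting_qp_review"}

def page_quality(message: str) -> dict:
    """Notify the on-call quality lead."""
    return {"type": "page", "message": message, "status": "sent"}

# The complete action surface. There is deliberately no entry for signing a
# record, closing a deviation, or writing a setpoint.
TOOLS: dict[str, Callable[[str], dict]] = {
    "draft_deviation": draft_deviation,
    "draft_work_order": draft_work_order,
    "page_quality": page_quality,
}

def call_tool(name: str, argument: str) -> dict:
    """Dispatch to a registered tool; anything unregistered is refused."""
    tool = TOOLS.get(name)
    if tool is None:
        return {"type": "refusal", "reason": f"No such tool: {name}"}
    return tool(argument)
```

With this shape, a request such as `call_tool("write_setpoint", "-0.5 kN")` cannot succeed regardless of how the model is prompted, because no such function exists to call.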

From PO To Live Copilot, In Three Phases

A pharmaceutical site is not a greenfield. There is a QMS, an eBR system, a historian, an ERP, and a validation team that has seen every kind of vendor promise. The deployment is staged so that each phase produces a validation deliverable — not just running software. Live in 6 to 12 weeks from PO, with global dispatch on the appliance and field engineers on the floor for cabling, integration, and operator training.

RTX PRO 6000 Blackwell appliance ships pre-configured, racks at your site. Field tech handles power, network, and air-gap zoning to your validated environment. Your SOPs, eBR templates, deviation history, and OOS records are pulled into the on-prem vector store under read-only credentials. URS/FS document drafted for the validation team.

Copilot enabled on one production line, voice and chat, in advisory-only mode (no draft routing yet). IQ and OQ test scripts run against the citation engine — every answer must trace to a retrieved chunk, fabrication rate measured, deviations flagged. Quality observes, edge cases logged, citation patterns reviewed.

PQ test scripts cover real operator workflows. Drafting and routing modes enabled — copilot creates deviation drafts, work orders, CAPA suggestions, all routed to the QP. Operator and supervisor training (3 days, on-site). 24×7 remote monitoring active. Rollout to additional lines on a schedule the validation team controls.

SOP revisions, new deviations, and updated CAPAs flow into the corpus on a controlled cadence. The quarterly review with our pharma lead covers citation accuracy, fabrication rate, operator satisfaction, and audit-trail completeness. This ongoing support is optional after year one. The copilot keeps running either way; you own the appliance and the indexed corpus.

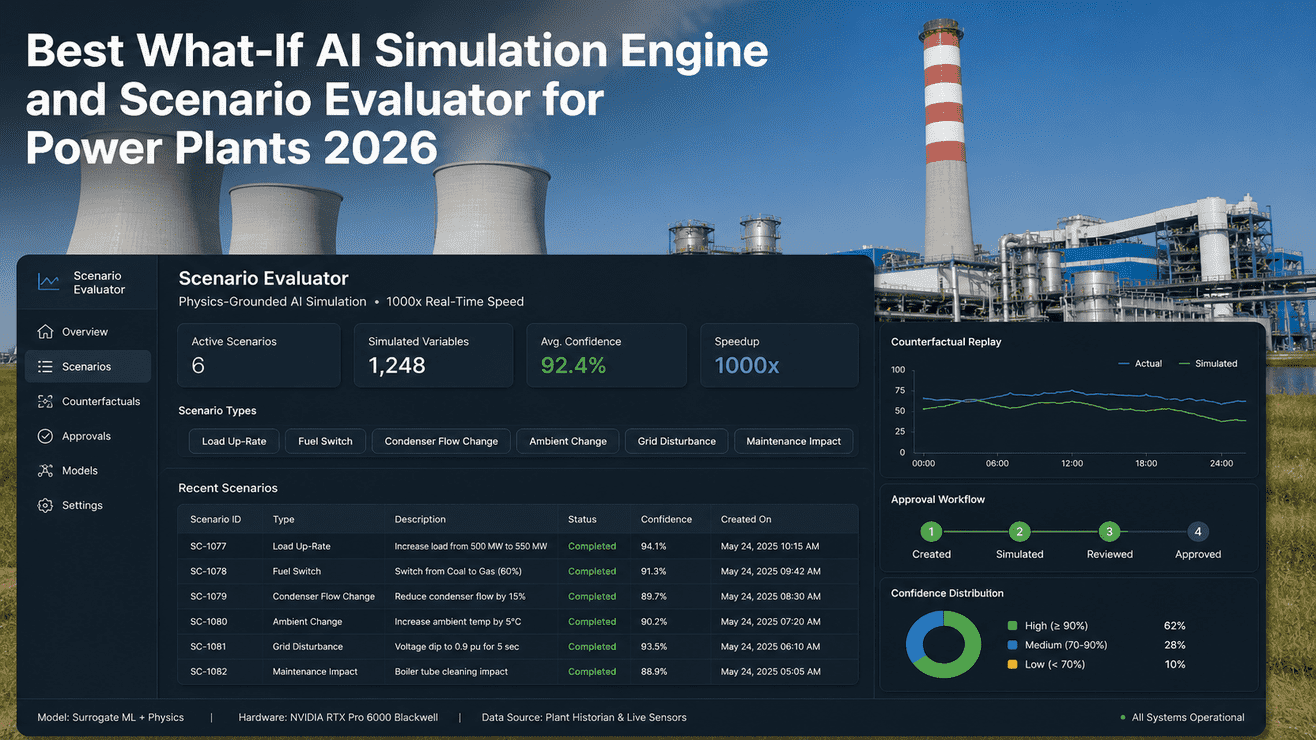

What The Verification Layer Actually Catches

Below is an illustrative breakdown of what the citation verification layer flags during typical pilot operation on a pharma corpus. Real numbers depend on your document quality, retrieval tuning, and the breadth of operator questions — these are representative, not a guarantee. The point is that the verification layer's job is to not show the unsafe answer, not to make every answer perfect on first generation.

| Outcome | Share of operator turns | What happens |
|---|---|---|
| Cited & verified | ~84% | Answer rendered to operator with citations attached. Logged. Standard path. |
| Cited but partially supported | ~9% | Verifier finds claim drift. Copilot rewrites and re-verifies, then renders; if the rewrite still fails, the turn is handled as "Verification failed". |
| No retrieval match | ~5% | Copilot tells operator "no source for this question, paging Quality" and routes. No fallback to base-model guess. |
| Verification failed | ~2% | Generated answer doesn't trace to retrieved chunks. Blocked pre-display. Operator gets "I can't answer this safely, paging Quality." |

What this means for the operator: they won't get a confident, hallucinated answer. They'll either get a cited one, or a clear "ask Quality." Both are acceptable in a regulated environment. A confident-sounding wrong answer is not.
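The four outcomes in the table can be read as a small decision procedure. The sketch below is one possible rendering under stated assumptions: `generate` and `verify` are stand-in callables for the model and the verifier, the verdict strings `"supported"`, `"partial"`, and `"unsupported"` are invented labels, and a single rewrite attempt is an arbitrary choice.

```python
from enum import Enum

class Outcome(Enum):
    VERIFIED = "cited_and_verified"
    NO_MATCH = "no_retrieval_match"
    BLOCKED = "verification_failed"

NO_SOURCE_MSG = "No source for this question. Paging Quality."
BLOCKED_MSG = "I can't answer this safely. Paging Quality."

def route_turn(chunks, generate, verify, max_rewrites=1):
    """Return (outcome, text shown to the operator) for one operator turn."""
    if not chunks:
        # Nothing retrieved: never ask the model to guess.
        return Outcome.NO_MATCH, NO_SOURCE_MSG
    answer = generate(chunks)
    rewrites = 0
    while True:
        verdict = verify(answer, chunks)
        if verdict == "supported":
            return Outcome.VERIFIED, answer
        if verdict == "partial" and rewrites < max_rewrites:
            # Claim drift: regenerate and verify again.
            answer = generate(chunks)
            rewrites += 1
            continue
        # Unsupported, or still partial after the allowed rewrites: block pre-display.
        return Outcome.BLOCKED, BLOCKED_MSG
```

The only path that returns model-generated text is the one where the verifier reports full support; every other path returns a fixed message.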

Hardware, Copilot Software, Integration, Validation Support — One PO

The Operator Copilot is delivered as a turnkey appliance: an on-site RTX PRO 6000 Blackwell server, pre-configured and ready to rack, the copilot software stack pre-loaded, and our pharma + AI engineering team on the floor for corpus ingestion, validation evidence, and training. 6–12 weeks from PO to a live, validated copilot. Owned by you outright.

Pre-racked, burn-in tested, IEC 62443 zoned. Llama 3.1 70B + verification model loaded. Air-gapped from public internet. One-time CapEx, no recurring license. Global shipping included.

Voice + chat + AR interfaces, RAG pipeline, citation verifier, audit-log writer, draft-and-route APIs to your QMS. Pre-loaded; calibrated to your document taxonomy and SOP structure during weeks 1–4.

Read-only connectors to Veeva Vault, MasterControl, Documentum, Werum PAS-X, Körber MES, OSIsoft PI / Aveva Historian. PLC and ERP read access where required. We handle cabling, network, and integration on-site.

URS, FS, IQ, OQ, PQ test scripts, traceability matrix, citation accuracy reports, audit-trail samples. Drafted by us, reviewed by your validation team, owned by you. Aligned with GAMP 5 Second Edition and Annex 11.

3-day on-site rollout: operators (voice/chat/AR), supervisors (drafting + routing), QA (audit-log review and citation accuracy). Plus a 1-day session for the validation team on the citation engine internals.

24×7 remote monitoring, monthly corpus refresh on SOP/deviation/CAPA updates, quarterly review with our pharma lead. Optional after year one. Copilot keeps running either way.

What Pharma QA & Manufacturing IT Ask First

No. The appliance (RTX PRO 6000 Blackwell, pre-configured with Llama 3.1 70B and the citation engine) ships fully loaded as part of the PO. Field engineers rack it, connect power and Ethernet, and bring the copilot up for corpus ingestion and validation. You don't source NVIDIA hardware, you don't tune CUDA, you don't manage the model weights.

It stays inside your validated zone. The corpus is indexed on the on-site appliance. Nothing leaves your perimeter. The model is not trained on your data — it's used at inference time only against your retrieved chunks. Air-gapped from the public internet by default.

The deployment is structured to produce validation evidence as a phase deliverable. URS, FS, IQ, OQ, PQ are drafted by us, reviewed by your validation team, signed off by your QA. The citation engine has its own test scripts measuring fabrication rate, citation accuracy, and verification-layer block rate. Every operator interaction is logged in an immutable audit trail. The system is designed against GAMP 5 Second Edition and the GAMP AI guidance.

Correct, by design. The copilot has read-only connectors to your QMS, eBR, deviation system, and historian. It has draft-and-route APIs — meaning it can create a draft deviation entry or work order and route it to the QP — but no signature, no closure, no setpoint write. The action surface for GxP-affecting operations doesn't exist in the copilot's tool set. This isn't a policy that could be turned off; it's an architectural constraint.

The corpus refreshes on a controlled cadence — typically nightly for deviations and OOS, weekly or on-event for SOP and eBR template revisions. Old revisions are retained but flagged; the copilot always cites the effective revision number and date in its answer. Your QA controls when a new revision becomes the citable version.
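The rule that QA controls which revision is citable can be sketched as a simple selection over revision records. The `Revision` fields, in particular the `qa_released` flag, and the dates in the usage example are assumptions made for illustration, not the product's data model.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass(frozen=True)
class Revision:
    doc_id: str
    number: int
    effective_from: date
    qa_released: bool  # hypothetical flag: QA has made this revision citable

def citable_revision(
    revisions: list[Revision], doc_id: str, today: date
) -> Optional[Revision]:
    """Pick the latest QA-released revision already in effect; older ones are retained but not cited."""
    candidates = [
        r for r in revisions
        if r.doc_id == doc_id and r.qa_released and r.effective_from <= today
    ]
    return max(candidates, key=lambda r: r.effective_from, default=None)

# Illustrative data only: revision 15 is indexed but not yet released by QA.
revisions = [
    Revision("SOP-MFG-044", 13, date(2025, 1, 10), True),
    Revision("SOP-MFG-044", 14, date(2025, 9, 1), True),
    Revision("SOP-MFG-044", 15, date(2026, 3, 1), False),
]
current = citable_revision(revisions, "SOP-MFG-044", date(2026, 4, 1))
print(current.number, current.effective_from)
```

In this example the copilot would keep citing revision 14 until QA releases revision 15, even though revision 15 is already in the corpus.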

Copilot keeps running. You own the appliance, the model weights, the indexed corpus, the validation evidence, and the audit logs it has produced. Renew support and monthly recalibration annually, run it in-house with our handover docs, or mix both. No kill switch, no recurring license fee.

Bring Your SOP Sample & A Recent Deviation. We'll Show You What The Copilot Would Cite.

A working session, not a pitch: bring 5–10 SOPs, an eBR template, and a recent deviation report (sanitised is fine). Our pharma and AI team will index them on a sandbox copilot and walk you through the same operator workflows your shift floor would use — citation accuracy, GxP boundary behaviour, validation evidence pattern, the whole stack. No commitment.