Global enterprises invested $252 billion in AI last year. Yet 80-95% of manufacturing AI projects fail within 18 months. The paradox? Companies are building on cloud APIs designed for chatbots and consumer apps—not the millisecond-precision, offline-resilient, data-sovereign requirements of factory floors.

Indian factories face this harder than most. A textile mill in Coimbatore loses ₹30 lakhs when cloud AI goes offline during a power cut. An auto supplier in Pune can't deploy quality inspection because AWS latency is 300ms while their line runs at 400 units/minute, one unit every 150ms. Meanwhile, manufacturers who deploy local LLMs achieve 95%+ success rates. The difference? They own their AI instead of renting it.

The $252 Billion AI Paradox: Why Indian Factories Need Local LLMs, Not Cloud APIs

Massive Investment, Massive Failure—Until You Go Local

The Brutal Truth Nobody Talks About

For every dollar invested in cloud-based manufacturing AI, 80 cents is wasted on failed projects that never make it past pilot. The issue isn't AI capability—it's deployment architecture. Cloud APIs optimized for Silicon Valley chatbots break under factory floor realities: millisecond latency requirements, intermittent connectivity, data sovereignty mandates, and costs that explode at production scale.

Why Cloud APIs Fail in Indian Factories: 6 Fatal Flaws

The Latency Killer

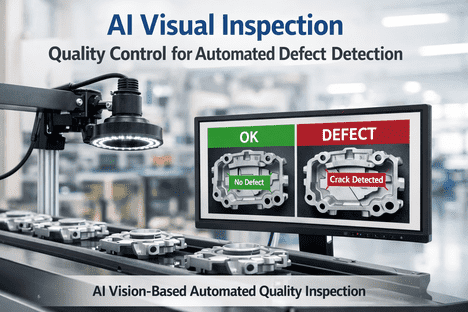

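Cloud API calls: 200-500ms minimum. Production lines: 200-400 units/minute, so a new unit reaches the inspection point every 150-300ms. By the time the GPT-4 API returns a quality verdict, the inspected unit and up to three more behind it have already moved past the reject point. Real-time rejection is impossible.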
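The latency arithmetic is easy to check for your own line. The sketch below is illustrative only: the function name `units_passed` is made up for this example, and the latencies and line speeds are the ones quoted in this article.

```python
def units_passed(latency_ms: float, units_per_minute: float) -> float:
    """Units that reach the inspection point while one request is in flight."""
    units_per_ms = units_per_minute / 60_000  # 60,000 ms in a minute
    return latency_ms * units_per_ms


# Compare a local-style latency (5 ms) with cloud round trips (200-500 ms)
# at the two line speeds mentioned in the article.
for latency_ms in (5, 200, 300, 500):
    for rate in (200, 400):
        passed = units_passed(latency_ms, rate)
        print(f"{latency_ms:>3} ms at {rate} units/min -> {passed:.2f} units")
```

Anything at or above 1.0 means the verdict arrives after the next unit is already at the camera.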

The Cost Explosion

OpenAI API: $0.03 per 1K tokens. 10 production lines generating 50TB/month = ₹45 lakhs in API costs alone. Adding latency workarounds (caching, edge proxies) doubles costs to ₹90 lakhs/month.
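Per-token pricing can be turned into a rough monthly bill with a few lines of code. This is a sketch under stated assumptions: the $0.03 per 1K tokens rate comes from the text above, while the exchange rate of ₹83 per dollar and both function names are illustrative placeholders you should replace with your own figures.

```python
LAKH = 100_000  # 1 lakh = 100,000 rupees


def monthly_api_cost_inr(tokens_per_month: float,
                         usd_per_1k_tokens: float = 0.03,
                         inr_per_usd: float = 83.0) -> float:
    """Monthly API bill in rupees for a given token volume."""
    return tokens_per_month / 1_000 * usd_per_1k_tokens * inr_per_usd


def tokens_for_budget(budget_inr: float,
                      usd_per_1k_tokens: float = 0.03,
                      inr_per_usd: float = 83.0) -> float:
    """Token volume that a given monthly rupee budget buys."""
    return budget_inr / inr_per_usd / usd_per_1k_tokens * 1_000


# How many tokens does a ₹45 lakh monthly bill correspond to?
tokens = tokens_for_budget(45 * LAKH)
print(f"{tokens / 1e9:.2f} billion tokens/month")
```

Running the numbers in both directions is a useful sanity check before signing a usage-based contract.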

The Internet Dependency

Indian factory internet: 95-98% uptime (optimistic). Cloud APIs need 99.99%. Every power cut, ISP failure, or network hiccup = complete AI shutdown. Production stops.
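Uptime percentages are easier to judge as hours of lost AI per month. A minimal sketch, assuming a 30-day month of round-the-clock production (the `downtime_hours` helper is a name invented for this example):

```python
HOURS_PER_MONTH = 30 * 24  # assumes a 30-day month running 24/7


def downtime_hours(uptime_percent: float,
                   hours: float = HOURS_PER_MONTH) -> float:
    """Expected hours per month without connectivity at a given uptime."""
    return (1 - uptime_percent / 100) * hours


for uptime in (95.0, 98.0, 99.99):
    print(f"{uptime}% uptime -> {downtime_hours(uptime):.2f} hours down/month")
```

At 95% uptime that is 36 hours a month with no cloud AI; at 99.99% it is under five minutes.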

Data Sovereignty Violation

Automotive OEMs mandate that data stays in India. Defense suppliers can't use foreign clouds. Cloud APIs that send data to overseas servers fail these requirements by design. Many projects are canceled post-pilot due to audit findings.

Model Drift & No Control

OpenAI updates GPT-4 → your prompts break overnight. No control over model behavior, no fine-tuning on your data, no domain expertise. Generic consumer AI trying to understand factory operations fails.

Vendor Lock-In Trap

Build on OpenAI API → entire AI stack depends on one vendor. Pricing changes, API deprecations, geopolitical restrictions = you're hostage. Migration to alternatives takes 6-12 months.

Tired of Cloud API Bills?

Calculate how much you're wasting on cloud APIs vs. local LLM deployment. Get cost comparison for your specific use case.

Cloud APIs vs. Local LLMs: The Real Comparison

☁️ Cloud APIs (OpenAI, Anthropic, Google)

- 200-500ms latency (unusable for real-time)

- ₹30-90 lakhs/month at production scale

- Requires constant internet (fails during outages)

- Data leaves India (compliance nightmare)

- Zero control over model updates

- Vendor lock-in (migration takes 6-12 months)

- Generic models (no factory domain knowledge)

- Per-token pricing (costs explode with scale)

Local LLMs (Llama, Mistral, Gemma)

- <5ms latency (true real-time capable)

- ₹3-8 lakhs/month (amortized hardware + electricity)

- Works offline 24/7 (zero internet dependency)

- 100% data sovereignty (never leaves premises)

- Full control (fine-tune on your factory data)

- Vendor neutral (switch models anytime)

- Domain-specific (trained on your operations)

- Fixed costs (scale linearly, not exponentially)

Want to see the difference in your factory? Book a live demonstration comparing cloud API vs. local LLM performance on your use case, or talk to our deployment team about architecture options.

Why Local LLMs Win: 3 Killer Advantages

Real-Time Performance

Process 1,000+ inference requests per second at <5ms latency. Enable use cases impossible with cloud: instant defect rejection, live process control, real-time optimization.
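Latency claims are worth verifying on your own hardware before committing to a use case. The harness below is a sketch, not a benchmark standard: `stub_infer` is a hypothetical stand-in for your real local model call, and the 5ms budget and 99th percentile are assumptions taken from the target quoted above.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_latency_ms(infer, inputs):
    """Time each call to infer() and return the latencies in milliseconds."""
    latencies = []
    for item in inputs:
        start = time.perf_counter()
        infer(item)
        latencies.append((time.perf_counter() - start) * 1_000)
    return latencies


def meets_budget(latencies_ms, budget_ms=5.0, pct=99):
    """True if the chosen percentile latency fits inside the budget."""
    return percentile(latencies_ms, pct) <= budget_ms


def stub_infer(x):
    """Hypothetical placeholder; swap in the real model call."""
    return x * 2


latencies = measure_latency_ms(stub_infer, range(1_000))
print("p99 (ms):", percentile(latencies, 99))
print("within 5 ms budget:", meets_budget(latencies))
```

Judging by a high percentile rather than the average matters on a production line, because a single slow verdict is enough to let a defect through.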

Predictable Economics

One-time GPU server investment (₹15-25 lakhs) + electricity (₹50K/month). No per-token costs that explode. 3-year TCO: 70-85% lower than cloud APIs.
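The same comparison can be run with your own numbers. In this sketch the hardware and electricity figures come from the paragraph above, while the ₹5 lakhs/month cloud bill is a purely hypothetical input and all function names are illustrative.

```python
LAKH = 100_000  # 1 lakh = 100,000 rupees


def local_tco_inr(hardware_inr, electricity_inr_per_month, months=36):
    """One-time hardware plus running electricity over the period."""
    return hardware_inr + electricity_inr_per_month * months


def cloud_tco_inr(api_inr_per_month, months=36):
    """Usage-based API spend over the period."""
    return api_inr_per_month * months


def savings_fraction(local_inr, cloud_inr):
    """Share of the cloud bill saved by going local (0.75 = 75% lower)."""
    return 1 - local_inr / cloud_inr


def payback_months(hardware_inr, cloud_inr_per_month, electricity_inr_per_month):
    """Months until avoided cloud spend covers the hardware purchase."""
    monthly_saving = cloud_inr_per_month - electricity_inr_per_month
    if monthly_saving <= 0:
        return float("inf")  # hardware never pays for itself
    return hardware_inr / monthly_saving


local = local_tco_inr(25 * LAKH, 50_000)  # ₹43 lakhs over 3 years
cloud = cloud_tco_inr(5 * LAKH)           # hypothetical ₹5 lakhs/month bill
print(f"local: ₹{local / LAKH:.0f}L, cloud: ₹{cloud / LAKH:.0f}L")
print(f"savings: {savings_fraction(local, cloud):.0%}")
print(f"payback: {payback_months(25 * LAKH, 5 * LAKH, 50_000):.1f} months")
```

The model leaves out staffing, maintenance, and hardware refresh, so treat the result as an upper bound on savings.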

Total Control

Own your models. Fine-tune on your data. Optimize for your use cases. No vendor can change pricing, deprecate APIs, or restrict access. True AI independence.

3-Year Cost Reality: Cloud APIs vs. Local LLMs

Cloud API Costs (Mid-Size Plant)

Local LLM Costs (Same Plant)

Cloud APIs cost 5.4x more over 3 years—with worse performance, no data sovereignty, and complete internet dependency.

See Your Exact Savings

Get customized 3-year TCO comparison for your factory. Understand true cloud API costs vs. local LLM investment.

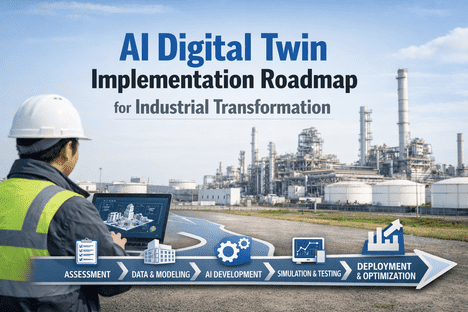

How to Deploy Local LLMs: 4-Step Practical Guide

Choose Your Model

Select an open-source LLM based on your use case: Llama 3 (70B) for complex reasoning, Mistral (7B) for speed, Gemma (7B) for efficiency. All can be deployed on-premises with no API costs, subject to each model's license terms.

Set Up Infrastructure

Deploy on industrial GPU servers (NVIDIA A40/A100) or edge devices (Jetson AGX). 8-16 GPUs handle 1,000+ inferences/sec. One-time investment of ₹15-25 lakhs for a mid-size plant.
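Before ordering hardware, it helps to estimate how much GPU memory a model's weights need. The sketch below is a first-pass estimate only: it counts weights and ignores the KV cache, activations, and runtime overhead, so real deployments need headroom on top. The function names are illustrative; the 48 GB figure is the A40's memory capacity.

```python
import math


def weight_memory_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Memory for weights alone: 2 bytes/param for FP16, 0.5 for 4-bit."""
    return params_billion * bytes_per_param  # 1e9 params * bytes / 1e9


def min_gpus_for_weights(params_billion: float,
                         gpu_memory_gb: float,
                         bytes_per_param: float = 2.0) -> int:
    """Smallest GPU count whose combined memory holds the weights."""
    needed = weight_memory_gb(params_billion, bytes_per_param)
    return math.ceil(needed / gpu_memory_gb)


A40_MEMORY_GB = 48

for name, size_b in (("70B model", 70), ("7B model", 7)):
    fp16 = min_gpus_for_weights(size_b, A40_MEMORY_GB, 2.0)
    int4 = min_gpus_for_weights(size_b, A40_MEMORY_GB, 0.5)
    print(f"{name}: {fp16} x A40 at FP16, {int4} x A40 at 4-bit (weights only)")
```

A 70B model at FP16 needs about 140 GB for weights, which is why it spans several GPUs while a 7B model fits on one.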

Fine-Tune on Your Data

Train on your specific use cases: quality inspection images, process parameters, maintenance logs. Model learns your factory's unique patterns—achieving 95%+ accuracy on your operations.

Deploy & Scale

Start with one production line, validate ROI in 60 days. Then scale across facility. No cloud costs multiplying—just electricity. Offline-first design means zero internet dependency.

Ready to deploy local LLMs? Our implementation team has deployed 40+ local LLM systems across Indian manufacturing. Schedule a technical consultation for your specific requirements.

Real Results: Bangalore Electronics Manufacturer

Problem: Using GPT-4 API for PCB defect detection. ₹38 lakhs/month costs, 300ms latency made real-time impossible, frequent API outages disrupted production.

Solution: Deployed Llama 3 70B locally on 8x A40 GPUs

Hardware paid back in 4 months through eliminated cloud costs. Now processing 2,000 inspections/sec without internet dependency.

Ready to Go Local?

Stop burning money on cloud APIs. Deploy local LLMs that deliver better performance at 1/5th the cost. Free architecture review.

Key Takeaways: Break Free from Cloud API Trap

- $252B invested, 80-95% fails—because cloud APIs aren't built for factory requirements

- Local LLMs deliver about 5x better economics: cloud APIs cost 5.4x more over 3 years for the same capability

- Real-time is only possible locally—<5ms latency vs 200-500ms cloud roundtrip

- Offline-first = production resilience—works 24/7 regardless of internet status

- Data sovereignty built-in—100% compliance, zero foreign cloud exposure

- 4-month payback typical—hardware investment recovered through eliminated cloud costs

Stop Renting AI. Own It.

Deploy local LLMs that deliver real-time performance, data sovereignty, and 70-85% cost savings vs. cloud APIs.

Free consultation: assess your cloud API waste and local LLM opportunity.