Your factory's SCADA system just flagged a temperature anomaly on Line 3. In a traditional factory, an operator checks the dashboard, calls maintenance, waits for a diagnosis, and schedules a repair. Total response time: 4-8 hours.

In an agentic AI factory, the AI agent detects the anomaly, correlates it with vibration and acoustic data, identifies a bearing degradation pattern, adjusts machine parameters to prevent immediate failure, generates a repair plan with a parts list, and creates a maintenance work order, all within 90 seconds and without a single human touch.

That's the shift from passive dashboards to active agents. Not AI that tells you what happened. AI that decides what to do, and does it.

Deloitte predicts a 4x increase in agentic AI adoption in manufacturing by 2026 (from 6% to 24%). Samsung just committed to converting every factory to AI-driven autonomous production by 2030. The question isn't whether this is coming. It's whether your factory is architecturally ready. Book a free architecture consultation to assess your readiness with iFactory.

The Autonomy Spectrum: Where Does Your Factory Sit?

Not all AI is created equal. Most factories claiming "AI" are still stuck at Level 1 or 2: dashboards and alerts. Agentic AI operates at Levels 4 and 5, where AI doesn't just inform, it acts. Here's the full spectrum, and where iFactory positions your operations.

Level 1: Monitoring

Dashboards show what's happening. Humans interpret and decide.

Examples: SCADA screens, OEE dashboards

Level 2: Alerting

System detects anomalies and notifies humans. Humans still act.

Examples: Threshold alarms, email alerts

Level 3: Recommending

AI analyzes and suggests actions. Humans approve and execute.

Examples: Predictive maintenance suggestions, GenAI copilots

Level 4: Acting (Bounded Autonomy)

AI decides and acts within guardrails. Humans oversee exceptions.

Examples: Auto-adjusted parameters, auto-generated work orders

Level 5: Orchestrating (Multi-Agent Autonomy)

Multiple AI agents coordinate across production, logistics, and energy. Humans set goals.

Examples: Samsung's 2030 vision, iFactory's agentic architecture

How Agentic AI Actually Works on the Factory Floor

An AI agent isn't a chatbot. It's a closed-loop system that perceives, reasons, plans, and acts — autonomously, in real-time, within boundaries you define. Here's the decision loop that iFactory's agents execute thousands of times per shift.

Perceive

Ingest real-time sensor data — vibration, temperature, pressure, acoustic, vision — through iFactory's Unified Namespace at sub-10ms latency.

Reason

Correlate patterns across multiple data streams. Is this vibration spike + temperature rise consistent with bearing degradation? Cross-reference with historical maintenance logs.

Plan

Generate an action plan: adjust machine parameters to reduce load, schedule maintenance in next planned downtime window, identify replacement parts from inventory.

Act

Execute autonomously: adjust set points, create CMMS work order, notify maintenance team, log all decisions for audit trail. Human escalation only for safety-critical exceptions.

Learn

Outcome fed back into the model. Was the prediction correct? Did the adjustment prevent failure? The agent improves continuously with every decision cycle.
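To make the loop concrete, here is a minimal Python sketch of one perceive, reason, plan, act, learn cycle with a single guardrail. It is an illustration only, not iFactory's implementation: the class names, thresholds, load formula, and action strings are all assumptions chosen for the example, and the "learn" step merely records outcomes that a real system would feed into model retraining.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Every name and number here is a hypothetical placeholder for illustration.

@dataclass
class Reading:
    machine: str
    temperature_c: float
    vibration_mm_s: float

@dataclass
class Plan:
    machine: str
    diagnosis: str
    load_setpoint_pct: float  # requested machine load, percent of rated load
    needs_human: bool         # True when the plan would exceed the guardrail

@dataclass
class Agent:
    temp_limit_c: float = 80.0    # assumed temperature threshold
    vib_limit_mm_s: float = 7.0   # assumed vibration threshold
    min_load_pct: float = 60.0    # guardrail: never drop load below this
    audit_log: List[Tuple] = field(default_factory=list)
    outcomes: List[Tuple] = field(default_factory=list)

    def reason(self, r: Reading) -> Optional[str]:
        # Correlate two streams: both must be elevated to suspect a bearing.
        if r.temperature_c > self.temp_limit_c and r.vibration_mm_s > self.vib_limit_mm_s:
            return "suspected bearing degradation"
        return None

    def plan(self, r: Reading, diagnosis: str) -> Plan:
        # Reduce load in proportion to excess vibration, clamped to the guardrail.
        requested = 100.0 - 10.0 * (r.vibration_mm_s - self.vib_limit_mm_s)
        needs_human = requested < self.min_load_pct
        return Plan(r.machine, diagnosis, max(requested, self.min_load_pct), needs_human)

    def act(self, p: Plan) -> str:
        # Act autonomously inside the guardrail, escalate otherwise.
        action = "escalate_to_human" if p.needs_human else "apply_setpoint_and_create_work_order"
        self.audit_log.append((p.machine, p.diagnosis, p.load_setpoint_pct, action))
        return action

    def learn(self, p: Plan, failure_prevented: bool) -> None:
        # Record the outcome; a real system would use this to retrain or retune.
        self.outcomes.append((p.diagnosis, failure_prevented))

    def step(self, r: Reading) -> str:
        diagnosis = self.reason(r)
        if diagnosis is None:
            return "no_action"
        return self.act(self.plan(r, diagnosis))
```

Calling `Agent().step(Reading("line3-press", 85.0, 9.0))` in this sketch stays inside the guardrail and acts on its own, while a more severe vibration reading pushes the requested load below `min_load_pct` and is escalated. Every decision that leads to an action or escalation is appended to `audit_log`, mirroring the audit-trail requirement described in the Act step.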

5 iFactory Agents That Run Your Factory

iFactory deploys specialized agents — each focused on a specific manufacturing function, all coordinating through the Unified Namespace. Here's what they do and the results they deliver.

Maintenance Agent

Predicts failures 48-72 hrs ahead. Auto-generates work orders with parts list, priority, and optimal scheduling window.

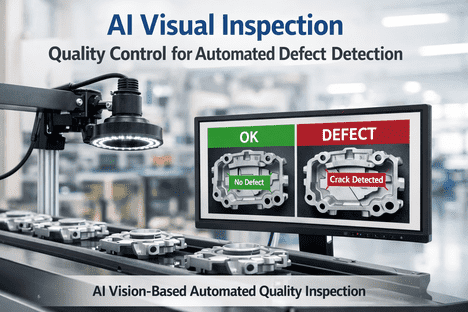

30-50% less unplanned downtimeQuality Agent

Computer vision inspects every unit in real-time. Detects micro-defects invisible to humans. Auto-adjusts process parameters to prevent drift.

20-35% scrap reduction, 0.1% defect escapeScheduling Agent

Simulates thousands of scenarios. Optimizes sequences across machines, workforce, materials, and delivery windows — autonomously.

15-30% throughput improvementEnergy Agent

Shifts energy-heavy processes to off-peak hours. Optimizes power consumption by analyzing grid demands and production requirements.

5-15% energy cost reductionKnowledge Agent

Captures tribal knowledge from retiring experts. Generates SOPs, provides real-time guidance to new operators via computer vision overlays.

Bridges the "Silver Tsunami" skills gapThe Architecture That Makes Agents Possible

Agents can't reason with raw, messy data. Without the right architecture, agentic AI hallucinates and prescribes incorrect fixes. iFactory's stack gives agents the contextualized, real-time data foundation they need.

| Layer | What It Does | Why Agents Need It |

|---|---|---|

| Unified Namespace | Single event-driven data bus — all machines, sensors, systems publish once | Agents reason across full factory context, not isolated data silos |

| Edge AI | Sub-10ms inference on factory floor — no cloud latency | Agents act in real-time; safety-critical decisions can't wait for cloud |

| Open Protocols | OPC UA + MQTT — vendor-agnostic connectivity | Agents connect to any device from any vendor without middleware |

| Governance Layer | Bounded autonomy, audit trails, human escalation paths | Agents operate within guardrails; every decision logged and traceable |

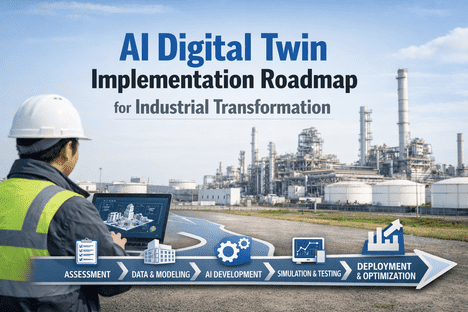

| Digital Twins | Virtual replicas of machines and processes for simulation | Agents test decisions virtually before executing physically |
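The "publish once, subscribe anywhere" idea behind a Unified Namespace can be sketched with a small in-memory bus that uses MQTT-style topic filters. This is a simplified illustration under stated assumptions, not iFactory's implementation or a real MQTT client: the `UnifiedNamespace` class and the `plant1/line3/...` topic hierarchy are hypothetical, and the matcher covers only the `+` (single-level) and `#` (multi-level) wildcards without validating filters or handling `$`-prefixed system topics.

```python
from typing import Any, Callable, Dict, List, Tuple

Handler = Callable[[str, Any], None]

def topic_matches(pattern: str, topic: str) -> bool:
    """MQTT-style match: '+' matches one level, '#' matches all remaining levels."""
    p_parts = pattern.split("/")
    t_parts = topic.split("/")
    for i, p in enumerate(p_parts):
        if p == "#":
            return True        # multi-level wildcard also matches the parent level
        if i >= len(t_parts):
            return False       # filter has more levels than the topic
        if p != "+" and p != t_parts[i]:
            return False       # literal level mismatch
    return len(p_parts) == len(t_parts)

class UnifiedNamespace:
    """Hypothetical in-memory stand-in for an event-driven data bus."""

    def __init__(self) -> None:
        self._subs: List[Tuple[str, Handler]] = []
        self.last_value: Dict[str, Any] = {}   # current state of every topic

    def subscribe(self, pattern: str, handler: Handler) -> None:
        self._subs.append((pattern, handler))

    def publish(self, topic: str, payload: Any) -> None:
        # Each producer publishes once; every matching consumer is notified.
        self.last_value[topic] = payload
        for pattern, handler in self._subs:
            if topic_matches(pattern, topic):
                handler(topic, payload)

# Usage: an agent subscribes to every temperature sensor on Line 3.
uns = UnifiedNamespace()
seen: List[Tuple[str, Any]] = []
uns.subscribe("plant1/line3/+/temperature", lambda t, p: seen.append((t, p)))
uns.publish("plant1/line3/press01/temperature", 85.0)
uns.publish("plant1/line3/press01/vibration", 9.0)   # not delivered to this subscriber
```

Because subscribers address data by topic rather than by device, an agent in this sketch can widen its view to the whole plant with a filter such as `plant1/#` without any change on the publishing side.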

Expert Perspective

A Co-pilot gives you answers. An Agent gives you outcomes.

The next phase of manufacturing innovation lies in building autonomous environments where AI truly understands operational contexts in real time.

You are moving from passive dashboards to active agents that don't just surface insights but execute decisions autonomously.

2026 is the year agentic AI moves from concept to deployment. The factories that win aren't the ones with the most data — they're the ones with the architecture that lets AI agents reason, decide, and act on that data in real-time. iFactory gives you that architecture today.

Deploy Agentic AI in Your Factory

iFactory's Architecture Blueprint identifies your highest-impact agentic use case and delivers a 90-day roadmap from pilot to production-scale autonomous operations.

From Dashboards to Decisions. From Alerts to Actions.

The factories that win in 2026 don't just monitor — they act. iFactory's agentic architecture makes autonomous decision-making real, safe, and measurable.