That "affordable" cloud AI subscription is quietly becoming your biggest line item. Cloud costs scale linearly with usage, and your production runs 24/7. Lenovo's 2026 TCO analysis found that on-premises AI infrastructure breaks even in under 4 months for high-utilization workloads and delivers up to an 18x cost advantage per million tokens over cloud APIs across a 5-year lifecycle. For manufacturing, where inference runs continuously on live production data, the math is even more decisive. This guide gives you the complete TCO breakdown (CapEx vs OpEx, token economics, hidden costs, security exposure) and a 3-phase ROI roadmap that delivers measurable payback within 12 months. Book a free ROI consultation to model the numbers for your plant.

The Hidden Economics: Why Cloud AI Gets Expensive at Production Scale

Cloud AI feels affordable in a proof of concept (POC): a few API calls, modest per-token costs, no hardware to buy. But manufacturing doesn't run in POC mode; it runs 24/7/365. Here are the 5 cost traps that turn cloud AI from "affordable experiment" into "budget black hole" at production scale.

Token Costs Scale Linearly — Production Doesn't Stop

Cloud AI charges per token, per API call, per inference. In a POC with 10 machines, costs are negligible. Scale to 500 machines running quality inference every 2 seconds, and that's 900,000 inference calls per hour (easily millions of tokens), 24 hours a day. Cloud costs scale linearly with usage; on-premise costs flatten after the initial investment.
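To make the linear scaling concrete, here is a minimal Python sketch of monthly cloud spend for the scenario above. The tokens per inference, the price per million tokens, and the helper names are hypothetical assumptions for illustration, not vendor pricing.

```python
# Hypothetical cloud token-cost model; prices and token counts are illustrative assumptions.
SECONDS_PER_HOUR = 3600
HOURS_PER_MONTH = 24 * 30  # continuous 24/7 operation over a 30-day month


def inferences_per_hour(machines: int, interval_s: float) -> float:
    """Inference calls per hour when each machine is checked every interval_s seconds."""
    return machines * SECONDS_PER_HOUR / interval_s


def monthly_cloud_cost(machines: int, interval_s: float,
                       tokens_per_inference: int, usd_per_million_tokens: float) -> float:
    """Monthly API spend: grows linearly with machines, call rate, and tokens per call."""
    calls = inferences_per_hour(machines, interval_s) * HOURS_PER_MONTH
    tokens = calls * tokens_per_inference
    return tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    # Assumed: 1,000 tokens per inference at $1.00 per million tokens.
    for machines in (10, 100, 500):
        calls = inferences_per_hour(machines, 2.0)
        cost = monthly_cloud_cost(machines, 2.0, 1_000, 1.00)
        print(f"{machines:>4} machines: {calls:>9,.0f} calls/hour, ${cost:>10,.0f}/month")
```

Because every term multiplies, 50x more machines means 50x the bill. An owned edge server has no equivalent per-call term: its cost stays flat until you exceed its installed capacity.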

Data Egress Fees — The Tax You Didn't Budget For

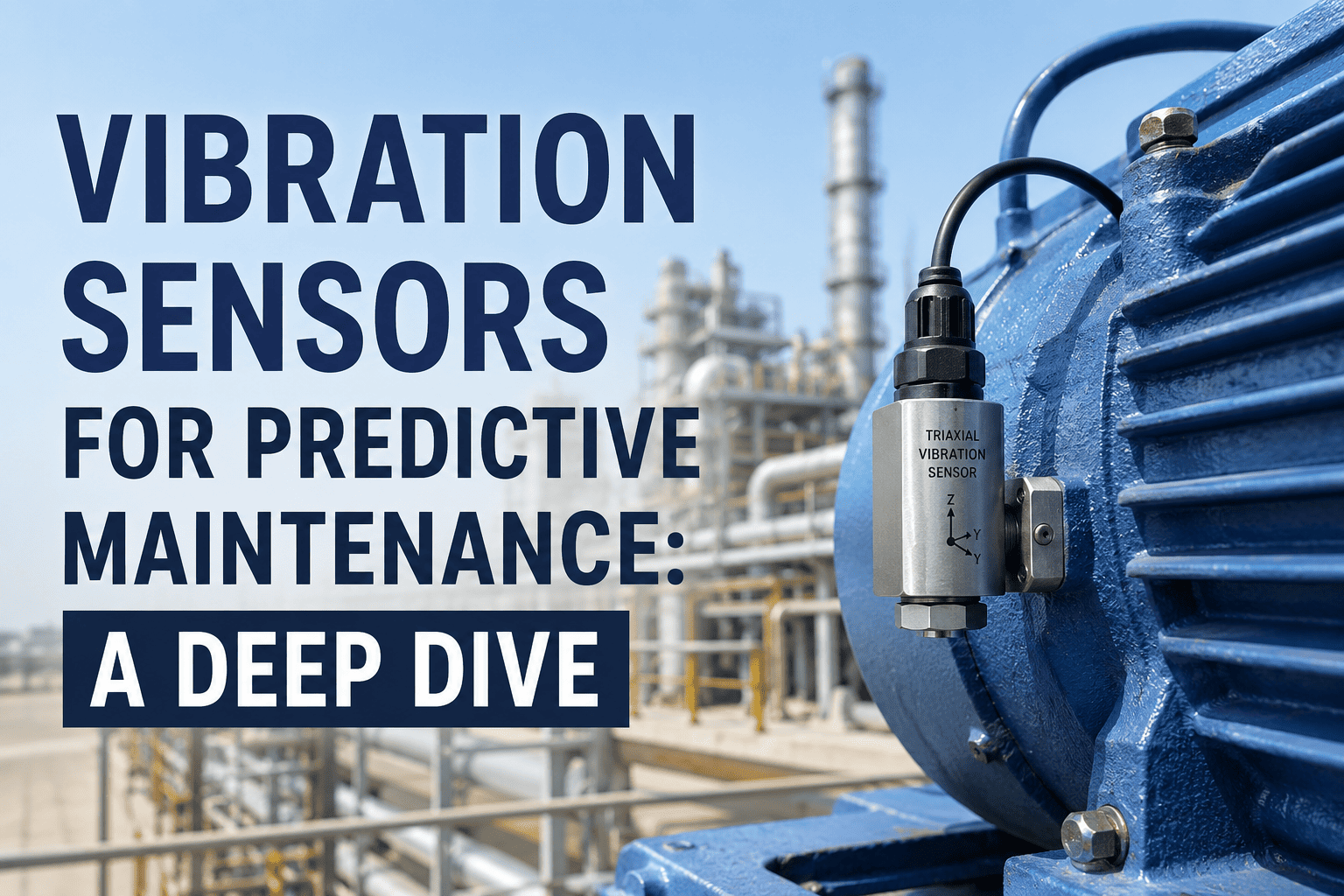

Cloud providers typically bill for data transferred out of their networks, so every AI result or dataset you pull back incurs egress charges, and streaming raw sensor data up to the cloud adds bandwidth and connectivity costs on the plant side. Manufacturing generates massive data volumes: vibration sensors alone can produce gigabytes per day. Data egress can add 5–10% to costs in AI-heavy environments, and it's the line item most TCO analyses miss.
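A rough sizing sketch shows why the volumes add up. The sensor count, 25.6 kHz sample rate, 16-bit samples, and $0.09/GB rate are assumptions for illustration; actual transfer pricing, and which direction is billed, vary by provider.

```python
# Hypothetical estimate of raw vibration data volume and cloud transfer cost.
SECONDS_PER_DAY = 86_400


def daily_bytes(sensors: int, sample_rate_hz: int, bytes_per_sample: int, channels: int = 1) -> int:
    """Raw bytes per day for continuously sampled sensors (no compression)."""
    return sensors * sample_rate_hz * bytes_per_sample * channels * SECONDS_PER_DAY


def monthly_transfer_cost(gb_per_day: float, usd_per_gb: float, days: int = 30) -> float:
    """Transfer bill for moving gb_per_day at an assumed flat per-GB rate."""
    return gb_per_day * days * usd_per_gb


if __name__ == "__main__":
    # Assumed: tri-axial accelerometers sampled at 25.6 kHz with 16-bit (2-byte) samples.
    per_sensor_gb = daily_bytes(1, 25_600, 2, channels=3) / 1e9
    fleet_gb = daily_bytes(50, 25_600, 2, channels=3) / 1e9
    print(f"one sensor: {per_sensor_gb:.1f} GB/day, 50 sensors: {fleet_gb:.0f} GB/day")
    # Assumed rate of $0.09 per GB; check your provider's actual transfer pricing.
    print(f"monthly transfer at $0.09/GB: ${monthly_transfer_cost(fleet_gb, 0.09):,.0f}")
```

At these settings a single tri-axial sensor produces about 13 GB of raw data per day, before any compression or on-edge feature extraction.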

Idle GPU Costs — Paying for Compute You Don't Use

Cloud GPU instances bill for every provisioned hour whether you're using them or not, and spinning them up and down for manufacturing inference creates latency spikes that defeat the purpose. Idle GPU clusters cause 15–20% of AI-related cost overruns. Reserved instances reduce hourly rates but lock you into long-term (typically one- or three-year) commitments that can still exceed on-premise TCO at high utilization.
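The idle-cost effect can be expressed as an effective price per useful GPU-hour: the billed rate divided by utilization. This sketch assumes a $4.00/hour on-demand rate, a 40% reserved discount, 8 GPUs, and 25% average utilization; all of these are hypothetical inputs.

```python
# Hypothetical idle-GPU model; rates and utilization figures are illustrative assumptions.
HOURS_PER_MONTH = 24 * 30


def cost_per_useful_gpu_hour(hourly_rate: float, utilization: float, discount: float = 0.0) -> float:
    """Effective price of one hour of real work when billed for every provisioned hour.

    discount models a reserved/committed-use price reduction (0.4 means 40% off).
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate * (1 - discount) / utilization


def monthly_idle_spend(hourly_rate: float, utilization: float, gpus: int, discount: float = 0.0) -> float:
    """Portion of the monthly bill paid for GPU-hours that did no work."""
    return hourly_rate * (1 - discount) * gpus * HOURS_PER_MONTH * (1 - utilization)


if __name__ == "__main__":
    print("on-demand, per useful hour:", cost_per_useful_gpu_hour(4.00, 0.25))
    print("reserved, per useful hour:", cost_per_useful_gpu_hour(4.00, 0.25, discount=0.4))
    print("monthly idle spend, 8 GPUs:", monthly_idle_spend(4.00, 0.25, gpus=8))
```

The discount lowers the rate, but at low utilization you still pay for the idle hours; the effective price only approaches the sticker rate as utilization nears 100%.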

Latency Costs — When 200ms Means Scrap

Cloud AI round-trip latency averages 200ms+. In quality inspection, that's 200ms of product moving past the camera before the AI verdict arrives. At high line speeds, that delay means defective products ship — or good products get scrapped. Latency isn't just a performance metric — it's a yield cost.
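The yield cost follows from simple kinematics: distance moved equals line speed times latency. This sketch assumes a 2 m/s conveyor and 100 mm part spacing, and compares the 200 ms cloud figure above with a hypothetical 5 ms edge latency.

```python
# Hypothetical line-speed model; speeds, latencies, and part pitch are illustrative assumptions.

def travel_during_latency_mm(line_speed_m_per_s: float, latency_ms: float) -> float:
    """Distance a part moves while waiting for a verdict (1 m/s equals 1 mm per ms)."""
    return line_speed_m_per_s * latency_ms


def parts_passed(line_speed_m_per_s: float, latency_ms: float, part_pitch_mm: float) -> float:
    """How many part pitches go by before the verdict arrives."""
    return travel_during_latency_mm(line_speed_m_per_s, latency_ms) / part_pitch_mm


if __name__ == "__main__":
    # Assumed: 2 m/s conveyor, parts spaced 100 mm apart.
    for label, latency_ms in (("cloud round trip", 200.0), ("edge inference", 5.0)):
        mm = travel_during_latency_mm(2.0, latency_ms)
        print(f"{label}: part travels {mm:.0f} mm, {parts_passed(2.0, latency_ms, 100.0):.2f} parts")
```

At those assumed settings the part has moved 400 mm, four part pitches, before a 200 ms verdict arrives, so the reject gate must track it downstream or risk acting on the wrong part.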

Sovereign Risk — The Cost You Can't Put on a Spreadsheet

Cloud AI means your production recipes, quality parameters, and process IP are processed on third-party infrastructure, subject to the US CLOUD Act and multi-tenant security risks. A single data breach costs a manufacturer $8.7 million on average. That's not a line item in your cloud bill, but it belongs in your TCO.
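One common way to put an uncertain cost on the spreadsheet is expected loss: annual breach probability times average breach cost, summed over the TCO horizon. In this sketch only the $8.7 million figure comes from the text; the breach probability is a placeholder you would estimate separately for each deployment option.

```python
# Hypothetical risk-adjusted TCO; the breach probability is an assumption, not a published rate.
AVG_BREACH_COST_USD = 8_700_000  # average manufacturing breach cost cited in the text


def expected_annual_loss(annual_breach_probability: float,
                         breach_cost_usd: float = AVG_BREACH_COST_USD) -> float:
    """Probability-weighted yearly breach cost."""
    if not 0 <= annual_breach_probability <= 1:
        raise ValueError("probability must be in [0, 1]")
    return annual_breach_probability * breach_cost_usd


def risk_adjusted_tco(base_tco_usd: float, annual_breach_probability: float, years: int = 5,
                      breach_cost_usd: float = AVG_BREACH_COST_USD) -> float:
    """Base TCO plus the expected breach loss over the same horizon."""
    return base_tco_usd + years * expected_annual_loss(annual_breach_probability, breach_cost_usd)


if __name__ == "__main__":
    # Assumed inputs: a $5M base 5-year TCO and a 2% annual breach probability.
    print(f"risk-adjusted 5-year TCO: ${risk_adjusted_tco(5_000_000, 0.02):,.0f}")
```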

The TCO Comparison: Cloud AI vs On-Premise Edge AI

Here's the honest comparison — not just sticker price, but the total 5-year cost of running manufacturing AI at production scale. The numbers tell a clear story.

On-premises infrastructure achieves a breakeven point in under four months for high-utilization workloads. Owning the infrastructure yields up to an 18x cost advantage per million tokens compared to Model-as-a-Service APIs.
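Break-even is the month when cumulative cloud spend overtakes on-prem CapEx plus accumulated on-prem OpEx, i.e. CapEx divided by the monthly savings. The dollar figures in this sketch are hypothetical and only illustrate the arithmetic.

```python
# Hypothetical break-even model; all dollar figures are illustrative assumptions.
from typing import Optional


def breakeven_months(capex_usd: float, monthly_onprem_opex_usd: float,
                     monthly_cloud_cost_usd: float) -> Optional[float]:
    """Months until cumulative cloud spend exceeds on-prem CapEx plus OpEx.

    Returns None when on-prem running costs are not below the cloud bill,
    because the upfront investment is then never recovered.
    """
    monthly_savings = monthly_cloud_cost_usd - monthly_onprem_opex_usd
    if monthly_savings <= 0:
        return None
    return capex_usd / monthly_savings


if __name__ == "__main__":
    # Assumed: $300k hardware, $10k/month power and support, $100k/month cloud bill.
    print(breakeven_months(300_000, 10_000, 100_000))
```

At these assumed inputs the hardware pays for itself in about 3.3 months; halve the cloud bill and break-even moves to 7.5 months, which is why utilization dominates the result.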

Even at more moderate loads, once GPU utilization exceeds about 20%, on-premises infrastructure can break even in as little as four to six months, and the cost per million tokens on private hardware can be 10 to 15 times lower than with cloud APIs.
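The utilization threshold matters because owned hardware is a fixed cost: the busier it is, the more tokens share that cost. This sketch amortizes CapEx straight-line over the hardware lifetime; the server price, throughput, and the $5-per-million cloud price used for comparison are assumptions, so the resulting ratios will not match the figures above.

```python
# Hypothetical on-prem token-cost model; hardware, throughput, and prices are assumptions.
SECONDS_PER_MONTH = 30 * 24 * 3600


def _monthly_fixed(capex_usd: float, lifetime_months: int, monthly_opex_usd: float) -> float:
    """Straight-line amortized CapEx plus OpEx for one month."""
    return capex_usd / lifetime_months + monthly_opex_usd


def onprem_usd_per_million_tokens(capex_usd: float, lifetime_months: int, monthly_opex_usd: float,
                                  peak_tokens_per_s: float, utilization: float) -> float:
    """Fixed monthly cost spread over the tokens actually served at this utilization."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    million_tokens = peak_tokens_per_s * SECONDS_PER_MONTH * utilization / 1e6
    return _monthly_fixed(capex_usd, lifetime_months, monthly_opex_usd) / million_tokens


def parity_utilization(capex_usd: float, lifetime_months: int, monthly_opex_usd: float,
                       peak_tokens_per_s: float, cloud_usd_per_million: float) -> float:
    """Utilization at which owned hardware matches a given cloud price per million tokens."""
    full_load_million_tokens = peak_tokens_per_s * SECONDS_PER_MONTH / 1e6
    fixed = _monthly_fixed(capex_usd, lifetime_months, monthly_opex_usd)
    return fixed / (cloud_usd_per_million * full_load_million_tokens)


if __name__ == "__main__":
    # Assumed: $300k server over 60 months, $5k/month OpEx, 10k tokens/s peak throughput.
    for u in (0.2, 0.5, 0.9):
        price = onprem_usd_per_million_tokens(300_000, 60, 5_000, 10_000, u)
        print(f"utilization {u:.0%}: ${price:.2f} per million tokens")
    print(f"parity with $5/M cloud at {parity_utilization(300_000, 60, 5_000, 10_000, 5.0):.1%} utilization")
```

Cost per token falls in inverse proportion to utilization, so doubling utilization halves it.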

Private AI data centers deliver roughly 35% TCO savings and about 70% OpEx savings over five years versus equivalent public cloud offerings. Lower latency also enables real-time processing and faster iteration.
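The two percentages differ because CapEx counts toward TCO but not toward OpEx. A sketch with assumed inputs, chosen only so that the output lands on the same 35% and 70% figures, shows how both can hold at once.

```python
# Hypothetical 5-year comparison; inputs were chosen to reproduce the 35% / 70% split, not measured.

def tco(capex_usd: float, annual_opex_usd: float, years: int = 5) -> float:
    """Total cost of ownership: upfront CapEx plus OpEx over the horizon."""
    return capex_usd + annual_opex_usd * years


def savings_pct(baseline_usd: float, alternative_usd: float) -> float:
    """Percentage saved by the alternative relative to the baseline."""
    return (baseline_usd - alternative_usd) / baseline_usd * 100


if __name__ == "__main__":
    cloud_capex, cloud_opex = 0, 1_000_000          # assumed: cloud is all OpEx
    onprem_capex, onprem_opex = 1_750_000, 300_000  # assumed: upfront hardware, lower run cost
    tco_saving = savings_pct(tco(cloud_capex, cloud_opex), tco(onprem_capex, onprem_opex))
    opex_saving = savings_pct(cloud_opex * 5, onprem_opex * 5)
    print(f"TCO savings:  {tco_saving:.0f}%")
    print(f"OpEx savings: {opex_saving:.0f}%")
```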

iFactory's 3-Phase ROI Roadmap: Payback Within 12 Months

iFactory doesn't ask you to bet the budget on a 5-year projection. The 3-phase roadmap delivers measurable ROI at every stage — starting with your highest-impact use case and scaling only after proven payback.

Phase 1: Map Your Highest-ROI Use Case First

Define measurable outcomes before a single model is trained. iFactory audits your production data, identifies the use case with the highest financial impact (typically predictive maintenance — 300–500% ROI), and builds the cost model comparing cloud vs edge for your specific scenario.
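The ROI range above uses the standard definition: net benefit divided by cost. A minimal sketch for a predictive-maintenance case follows; the downtime hours avoided, cost per downtime hour, and annual cost are hypothetical inputs that the audit would replace with plant data.

```python
# Hypothetical ROI calculation for a predictive-maintenance use case; inputs are assumptions.

def downtime_benefit_usd(hours_avoided: float, usd_per_downtime_hour: float) -> float:
    """Value of unplanned downtime that the model helps prevent."""
    return hours_avoided * usd_per_downtime_hour


def roi_pct(benefit_usd: float, cost_usd: float) -> float:
    """Return on investment: net gain as a percentage of cost."""
    return (benefit_usd - cost_usd) / cost_usd * 100


if __name__ == "__main__":
    # Assumed: 40 hours of unplanned downtime avoided per year at $10,000/hour,
    # against $100,000/year of edge hardware, software, and integration cost.
    benefit = downtime_benefit_usd(40, 10_000)
    print(f"annual benefit ${benefit:,.0f}, ROI {roi_pct(benefit, 100_000):.0f}%")
```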

Phase 2: Deploy on 5–10 Critical Machines and Measure Everything

Production-grade edge AI on your highest-value assets. iFactory instruments every data point: inference cost per machine, downtime prevented, defects caught, energy saved. You'll see exactly how much each AI decision is worth — with zero cloud API costs accumulating.
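A simple per-machine ledger is one way to turn those pilot measurements into a value per AI decision. The field names, value rates, and allocated edge cost below are hypothetical, not iFactory's reporting schema.

```python
# Hypothetical pilot ledger; field names and value rates are illustrative assumptions.
from dataclasses import dataclass


@dataclass
class ValueRates:
    usd_per_downtime_hour: float
    usd_per_defect_caught: float
    usd_per_kwh: float


@dataclass
class PilotMachine:
    name: str
    downtime_hours_prevented: float
    defects_caught: int
    kwh_saved: float
    ai_decisions: int
    edge_cost_usd: float  # this machine's share of amortized edge hardware and support

    def gross_value(self, rates: ValueRates) -> float:
        """Dollar value of prevented downtime, caught defects, and saved energy."""
        return (self.downtime_hours_prevented * rates.usd_per_downtime_hour
                + self.defects_caught * rates.usd_per_defect_caught
                + self.kwh_saved * rates.usd_per_kwh)

    def net_value_per_decision(self, rates: ValueRates) -> float:
        """Gross value minus edge cost, divided by the number of AI decisions made."""
        return (self.gross_value(rates) - self.edge_cost_usd) / self.ai_decisions


if __name__ == "__main__":
    rates = ValueRates(usd_per_downtime_hour=10_000, usd_per_defect_caught=50, usd_per_kwh=0.12)
    pilot = [
        PilotMachine("press-01", 3, 20, 1_000, 10_000, 5_000),
        PilotMachine("cnc-04", 1, 45, 400, 8_000, 5_000),
    ]
    for m in pilot:
        print(f"{m.name}: ${m.gross_value(rates):,.0f} gross, "
              f"${m.net_value_per_decision(rates):.3f} net per decision")
```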

Phase 3: Expand Across Production Lines with Documented ROI

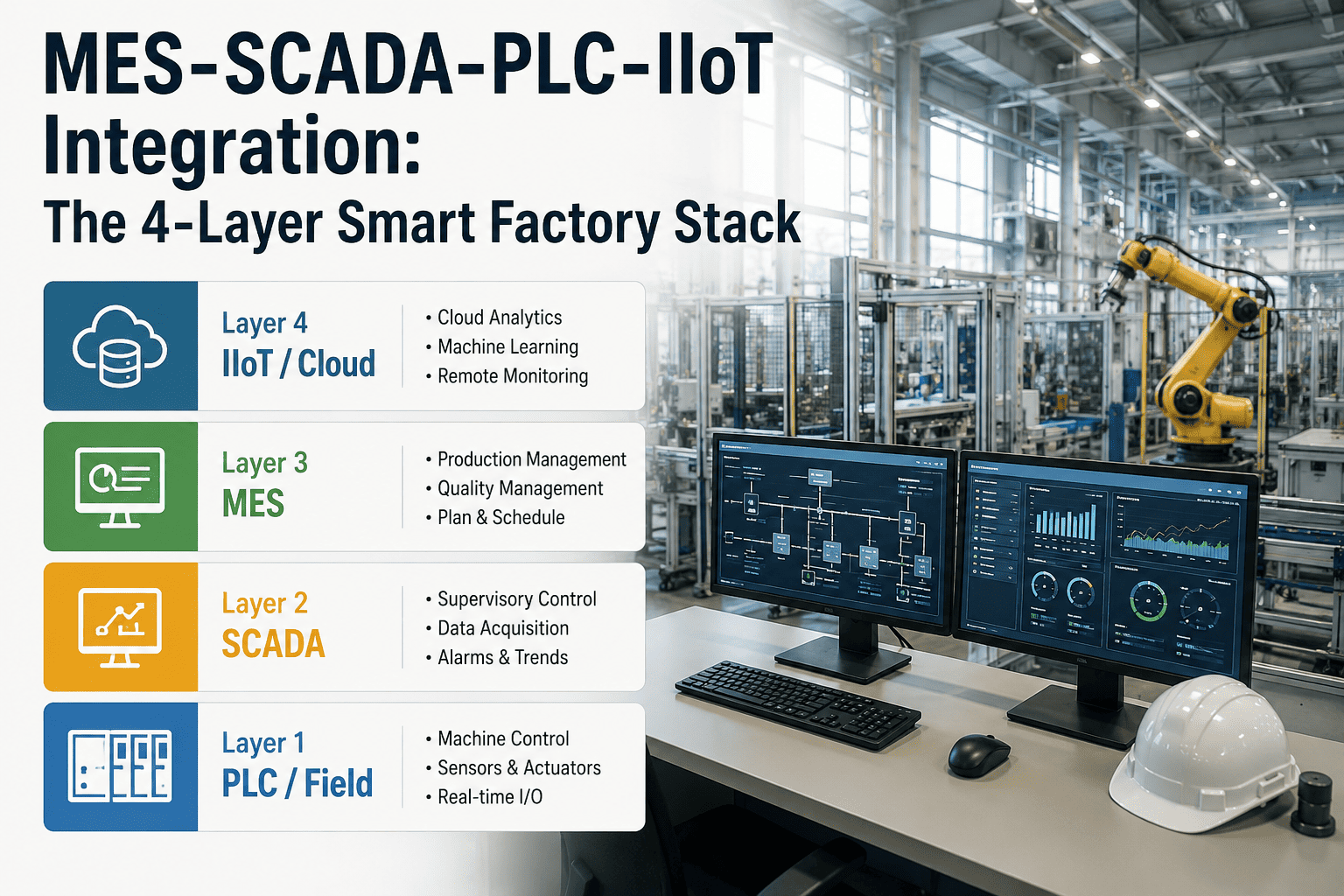

Scale to full production with proven economics, not projections. Connect MES, ERP, and CMMS through the Unified Namespace. Deploy additional AI agents for scheduling, quality, and energy optimization. Deliver the board-ready business case with documented financial impact.
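A Unified Namespace is commonly implemented as a hierarchical topic tree on an MQTT broker, often organized along ISA-95 levels (enterprise, site, area, line, cell). The sketch below assumes that layout; the level names and example topics are hypothetical, and the matcher mirrors MQTT's `+` (exactly one level) and `#` (all remaining levels) wildcards so an MES, ERP, or CMMS consumer can subscribe to just the slice it needs.

```python
# Hypothetical ISA-95-style UNS topic helpers; the level layout is an assumption.

def uns_topic(*levels: str) -> str:
    """Join hierarchy levels (enterprise/site/area/line/cell/metric) into one topic."""
    for level in levels:
        if not level or any(ch in level for ch in "/+#"):
            raise ValueError(f"invalid topic level: {level!r}")
    return "/".join(levels)


def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT-style matching: '+' matches exactly one level, a trailing '#' matches the rest.

    Assumes a well-formed filter ('#' only as the last level).
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, f in enumerate(filter_levels):
        if f == "#":
            return True  # covers the parent level and everything beneath it
        if i >= len(topic_levels):
            return False
        if f != "+" and f != topic_levels[i]:
            return False
    return len(filter_levels) == len(topic_levels)


if __name__ == "__main__":
    topic = uns_topic("acme", "plant1", "press-shop", "line3", "press-07", "vibration_rms")
    # A CMMS subscriber interested in everything on line 3, in any area:
    print(topic, topic_matches("acme/plant1/+/line3/#", topic))
```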

The cloud vs on-premise debate isn't about ideology; it's about math. Manufacturing AI runs 24/7 at high utilization on latency-sensitive workloads with sovereign data requirements. That's the exact profile where on-premise edge AI delivers a 10–18x cost advantage. iFactory is built for this math: edge inference with zero per-token costs, sub-5ms latency, sovereign data, and a 90-day path to measurable ROI.

Stop Paying Per Token — Start Owning Your AI

iFactory deploys edge AI with zero cloud API costs — predictable CapEx, 10–18x lower cost per inference, and measurable ROI within 12 months.

