Choosing the wrong AI hardware for your factory floor is a six-figure mistake that takes 18 months to fix. NVIDIA now offers multiple Blackwell-powered platforms — but the IGX and DGX families solve fundamentally different problems. One is built for the data center. The other is built for the factory floor. Pick wrong, and you'll either overspend on hardware that can't survive OT environments, or deploy edge devices that can't handle your model training workloads. This guide gives plant managers, CTOs, and automation engineers the definitive technical comparison — with clear recommendations for every manufacturing AI use case. Book a free hardware consultation to map this to your specific plant requirements.

IGX vs DGX: The Core Difference in 30 Seconds

This isn't a "which is faster" comparison. IGX and DGX are built for entirely different layers of the manufacturing AI stack. Understanding where each platform belongs is the single most important hardware decision you'll make.

NVIDIA IGX Thor: Purpose-Built for the Factory Floor

IGX isn't a shrunk-down data center GPU. It's an entirely different category — an industrial-grade edge AI platform with functional safety, real-time sensor processing, and 10-year enterprise lifecycle support. Here's what makes it the right choice for manufacturing edge deployment.

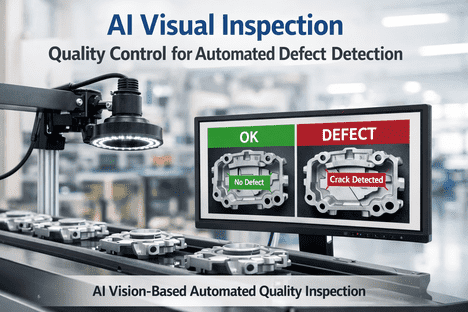

Blackwell Architecture at the Edge — Up to 5,581 FP4 TFLOPS

IGX Thor delivers up to 8x the AI compute of its predecessor (IGX Orin) with the Blackwell iGPU, plus an optional discrete GPU pushing total performance to 5,581 FP4 TFLOPS. This is enough to run multiple generative AI models simultaneously — defect detection, predictive maintenance, and NLP copilots — at the machine level.

Functional Safety — ASIL D / SIL 3 Certification Path

IGX Thor includes a dedicated Functional Safety Island, an independent safety processor that isolates safety-critical workloads. It is designed to meet ISO 26262 (ASIL D) and IEC 61508 (SIL 2/3), which is a certification path rather than a certificate for any given deployment. DGX has no functional safety certifications. If your AI controls anything that could harm a human, IGX is the only one of the two platforms with a route to compliance.

10-Year Lifecycle & Enterprise Support

Manufacturing equipment runs for 10–20 years. Consumer-grade AI hardware is obsolete in 18 months. IGX comes with NVIDIA AI Enterprise software and 10 years of support — firmware updates, security patches, and driver compatibility guaranteed. This matches your CAPEX cycles and prevents mid-deployment hardware obsolescence.

Industrial-Grade Durability — Built for OT Environments

IGX is designed for factory floors — not server rooms. Compact form factor, extended temperature ranges, vibration resistance, and industrial I/O for cameras, sensors, and PLCs. Ecosystem partners like Advantech, ADLINK, and Connect Tech deliver ruggedized IGX-powered systems certified for harsh environments.

NVIDIA DGX: The AI Training Powerhouse

DGX isn't for the factory floor — it's for building the intelligence that runs on the factory floor. Here's where DGX fits in the manufacturing AI stack and why it complements (not replaces) edge deployment.

Large-Scale AI Model Training

DGX B200: 8x Blackwell GPUs with 5th-gen NVLink deliver 3x the training and 15x the inference performance of DGX H100. Train custom defect detection, predictive maintenance, and scheduling optimization models on your proprietary production data.

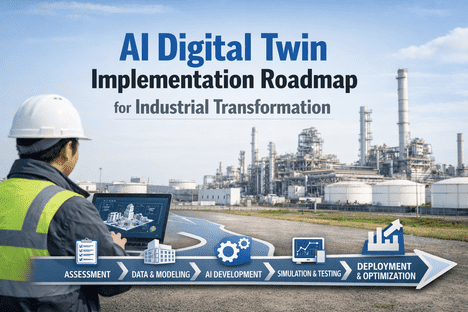

On-Premise Model Development

DGX Station: a GB300 Grace Blackwell Ultra Superchip with 784GB of coherent memory and 20 PFLOPS. Run models of up to 1 trillion parameters from a deskside system, and fine-tune on sensitive production data without sending anything to the cloud.

Rapid Prototyping & POC Development

DGX Spark: a GB10 Grace Blackwell Superchip with 128GB of memory and 1 PFLOP of FP4 performance. Prototype and fine-tune models locally before deploying to IGX at the edge. It is the shortest path from concept to production-ready AI.

Head-to-Head: IGX Thor vs DGX for Manufacturing

This is the comparison that matters. Not raw benchmarks — but which platform solves which manufacturing problem. Here's how they stack up across the criteria that actually drive hardware decisions on the factory floor.

IGX Thor is built for real-time physical AI at the edge. DGX is built for training the models that IGX runs. They're not competitors — they're two halves of the same manufacturing AI stack.

AI Compute Performance

IGX Thor: Up to 5,581 FP4 TFLOPS (with dGPU), optimized for real-time inference on multiple concurrent AI models at the edge. Up to 8x the AI compute of its predecessor, IGX Orin.

DGX B200: 8x Blackwell GPUs with NVLink — 3x training and 15x inference over H100. Designed for training large models, not edge deployment.

Functional Safety & OT Compatibility

IGX Thor: Dedicated Functional Safety Island, ISO 26262 ASIL D, IEC 61508 SIL 2/3 certification path. Built for environments where humans and machines work side by side.

DGX B200: No functional safety certifications. Data center grade only — not rated for OT environments, vibration, extended temperatures, or human-proximity safety.

Deployment Environment & Power

IGX Thor: Compact module (T5000) and board kit (T7000). 40–130W power envelope. Extended temperature, vibration rated. Ruggedized systems from Advantech, ADLINK, Connect Tech, WOLF.

DGX B200: Full rack-mount server. 10kW+ power. Requires climate-controlled data center with liquid or precision air cooling. Not deployable on the factory floor.
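
To put the power figures above in proportion, here is a rough arithmetic sketch. It uses only the numbers quoted in this section (40–130W for IGX Thor, 10kW as the lower bound for DGX B200) and treats the quoted envelope as the whole gateway draw, which is a simplification: cameras, I/O, and any discrete GPU are not counted. The function names and the 12-line example are illustrative assumptions, not part of any NVIDIA or iFactory tool.

```python
# Power figures quoted in the comparison; everything else is illustrative.
IGX_THOR_MIN_WATTS = 40       # lower end of the quoted 40-130 W envelope
IGX_THOR_MAX_WATTS = 130      # upper end of the quoted 40-130 W envelope
DGX_B200_WATTS = 10_000       # "10kW+" taken as a lower bound


def edge_fleet_power_watts(gateways: int,
                           watts_per_gateway: int = IGX_THOR_MAX_WATTS) -> int:
    """Worst-case draw for a fleet of edge gateways (hypothetical helper)."""
    if gateways < 0:
        raise ValueError("gateways must be non-negative")
    return gateways * watts_per_gateway


def gateways_per_dgx_budget(watts_per_gateway: int = IGX_THOR_MAX_WATTS) -> int:
    """How many gateways fit inside the power budget of one DGX B200."""
    if watts_per_gateway <= 0:
        raise ValueError("watts_per_gateway must be positive")
    return DGX_B200_WATTS // watts_per_gateway


if __name__ == "__main__":
    # Example: 12 production lines, one gateway per line, worst-case draw.
    print(edge_fleet_power_watts(12))
    print(gateways_per_dgx_budget())
```

The point of the comparison is scale, not precision: a floor-wide fleet of edge gateways draws a fraction of what a single training server does, which is why the two platforms end up in different rooms.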

Lifecycle Support & Total Cost of Ownership

IGX Thor: 10-year lifecycle with NVIDIA AI Enterprise. Long-term firmware, security patches, and driver support. Matches manufacturing CAPEX/depreciation cycles.

DGX B200: Enterprise support available but follows faster data center refresh cycles (3–5 years). Higher upfront cost ($200K+), designed for centralized AI teams.
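
The criteria above can be condensed into a rough decision rule. The sketch below is one possible encoding, not an official selection tool: the `Workload` fields and the `recommend_platform` function are hypothetical names, and the rule only reflects the distinctions this comparison draws (edge deployment and human-proximity safety point to IGX, model training points to DGX).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of one manufacturing AI use case."""
    name: str
    trains_models: bool            # large-scale training or fine-tuning
    on_factory_floor: bool         # must run inside the OT environment
    human_proximity_safety: bool   # AI output can affect human safety


def recommend_platform(w: Workload) -> str:
    """Map a workload to a platform using the criteria in this comparison."""
    needs_edge = w.on_factory_floor or w.human_proximity_safety
    if needs_edge and w.trains_models:
        return "IGX + DGX"   # train centrally, run at the machine
    if needs_edge:
        return "IGX"
    if w.trains_models:
        return "DGX"
    return "no clear recommendation"   # case not covered by the comparison


if __name__ == "__main__":
    cobot = Workload("cobot vision", trains_models=False,
                     on_factory_floor=True, human_proximity_safety=True)
    print(cobot.name, "->", recommend_platform(cobot))
```

A real selection would also weigh budget, lifecycle, and existing infrastructure; the rule above only captures the first-order split.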

The Right Architecture: How iFactory Connects IGX and DGX

The most effective manufacturing AI deployments don't choose between IGX and DGX — they use both in a unified architecture. iFactory's platform is the orchestration layer that connects edge inference to model training.

DGX Trains Your Custom Manufacturing AI Models

Use DGX (B200, Station, or Spark) to train defect detection, predictive maintenance, and scheduling models on your proprietary production data — on-premise, sovereign, never leaving your facility.

IGX Runs Trained Models at the Machine Level

Deploy trained models to IGX Thor edge gateways positioned at each production line: real-time inference in under 5 ms, functional safety for human-proximity operations, and support for air-gapped operation.
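
A latency target like this is easier to reason about as a budget split across pipeline stages. The sketch below assumes the 5 ms figure covers the whole path from sensor capture to published result; the text does not say whether it means that or model execution alone. The stage names and millisecond values are placeholders for illustration, not measurements.

```python
LATENCY_BUDGET_MS = 5.0  # target taken from the text; scope is an assumption


def within_budget(stage_latencies_ms: dict[str, float],
                  budget_ms: float = LATENCY_BUDGET_MS) -> tuple[bool, float]:
    """Return (fits, total_ms) for a set of per-stage latencies."""
    if any(v < 0 for v in stage_latencies_ms.values()):
        raise ValueError("latencies must be non-negative")
    total = sum(stage_latencies_ms.values())
    return total < budget_ms, total


if __name__ == "__main__":
    # Placeholder numbers; replace with values measured on your own line.
    pipeline = {
        "capture": 1.0,
        "preprocess": 0.5,
        "inference": 2.5,
        "publish": 0.5,
    }
    fits, total = within_budget(pipeline)
    print(f"total={total} ms, fits={fits}")
```

Measuring each stage separately shows where the budget goes and which stage to optimize first if the total overshoots.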

iFactory Connects Everything Through the Unified Namespace

iFactory's UNS is the single data bus connecting IGX inference outputs, DGX model pipelines, MES, ERP, CMMS, and every sensor on your floor. AI decisions flow into production workflows automatically — no integration middleware required.
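
As an illustration of what an inference result might look like on such a data bus, here is a minimal sketch. It assumes the UNS is organized as a hierarchical topic tree (enterprise/site/area/line/source/metric) with JSON payloads, which is a common pattern but is not specified in this article. The topic levels, field names, and both functions are hypothetical and are not iFactory's actual schema or API.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def uns_topic(enterprise: str, site: str, area: str,
              line: str, source: str, metric: str) -> str:
    """Build a hierarchical topic path (assumed layout, not a real schema)."""
    parts = [enterprise, site, area, line, source, metric]
    for part in parts:
        if not part or "/" in part:
            raise ValueError(f"invalid topic level: {part!r}")
    return "/".join(parts)


def inference_event(model: str, label: str, confidence: float,
                    timestamp: Optional[datetime] = None) -> str:
    """Serialize one inference result as JSON (hypothetical field names)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    ts = timestamp or datetime.now(timezone.utc)
    payload = {
        "model": model,
        "label": label,
        "confidence": confidence,
        "timestamp": ts.isoformat(),
    }
    return json.dumps(payload, sort_keys=True)


if __name__ == "__main__":
    topic = uns_topic("acme", "plant1", "assembly", "line3", "igx-01", "defect")
    print(topic)
    print(inference_event("defect-detector-v2", "scratch", 0.97))
```

A consistent topic layout is what lets MES, ERP, and CMMS consumers subscribe to the results they need without point-to-point integrations.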

The question isn't "IGX or DGX?" — it's "How do I connect them into a single, sovereign manufacturing AI architecture?" iFactory is built to answer exactly that. Train on DGX. Deploy on IGX. Orchestrate through the UNS. Govern with built-in compliance. That's the complete stack.

Get Your AI Hardware Blueprint — Free

iFactory maps the right NVIDIA hardware to every use case in your plant — from edge inference to model training — with a 90-day deployment roadmap.

The Right NVIDIA Hardware + The Right Software Stack = Production AI

iFactory connects NVIDIA IGX edge inference to your entire manufacturing operation through the Unified Namespace. Train on DGX. Deploy on IGX. Orchestrate with iFactory.