Most food manufacturing plants believe they have real-time visibility into their analytics data, but the reality on the plant floor tells a different story. Dashboards that refresh 15 to 45 minutes behind live production, retrieval errors during peak processing windows, and siloed data streams that never converge into a single operational picture are costing food manufacturers millions in preventable downtime, quality failures, and compliance exposure. If your analytics platform cannot surface a production anomaly before it becomes a reject batch, you do not have real-time visibility; you have a reporting delay with a modern interface. To see how purpose-built AI-driven analytics closes the food manufacturing visibility gap, Book a Demo with the iFactory manufacturing intelligence team today.

The Real-Time Analytics Data Problem Hiding Inside Food Manufacturing Plants

Why "Connected" Doesn't Mean "Visible" on the Plant Floor

The term "real-time analytics" has been so aggressively marketed across industrial software that food manufacturers often assume connectivity equals visibility. It does not. A sensor transmitting data every 200 milliseconds means nothing operationally if the analytics layer aggregating that signal refreshes on a 30-minute batch cycle, routes through a middleware layer with unmonitored queue depth, or displays production KPIs on a dashboard that plant floor operators cannot physically access during an active production run. The analytics visibility gap in food manufacturing is not primarily a sensor problem — it is a data architecture problem compounded by legacy reporting design that was never built for the decision latency requirements of modern food processing lines. Plants that have invested in IoT infrastructure without replacing their underlying analytics pipeline are experiencing the same production blind spots as facilities with no connected sensors at all.
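The arithmetic behind that claim can be sketched in a few lines. This is a simplified model, not a measurement: it assumes an event occurs at a random moment, is captured at the next sensor sample, and becomes visible at the next scheduled refresh. The function name `dashboard_staleness` and the optional `queue_delay_s` parameter are illustrative.

```python
def dashboard_staleness(sensor_period_s: float,
                        refresh_period_s: float,
                        queue_delay_s: float = 0.0) -> dict:
    """Estimate how old an event is when it first appears on a dashboard.

    Simplifying assumption: the event waits for the next sensor sample,
    then for the next scheduled refresh, plus any fixed middleware delay.
    """
    # Worst case: the event just missed both the sample and the refresh.
    worst = sensor_period_s + refresh_period_s + queue_delay_s
    # Average case: on average the event waits half of each period.
    average = sensor_period_s / 2 + refresh_period_s / 2 + queue_delay_s
    return {"worst_s": worst, "average_s": average}


# Figures from the paragraph above: 200 ms sampling, 30-minute batch refresh.
result = dashboard_staleness(sensor_period_s=0.2, refresh_period_s=30 * 60)
print(f"worst case: {result['worst_s'] / 60:.1f} min")   # worst case: 30.0 min
print(f"average:    {result['average_s'] / 60:.1f} min")  # average:    15.0 min
```

Under these assumptions the sensor rate contributes a fraction of a second, while the batch cycle contributes essentially all of the delay, which is why a faster sensor does not shorten what the operator sees.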

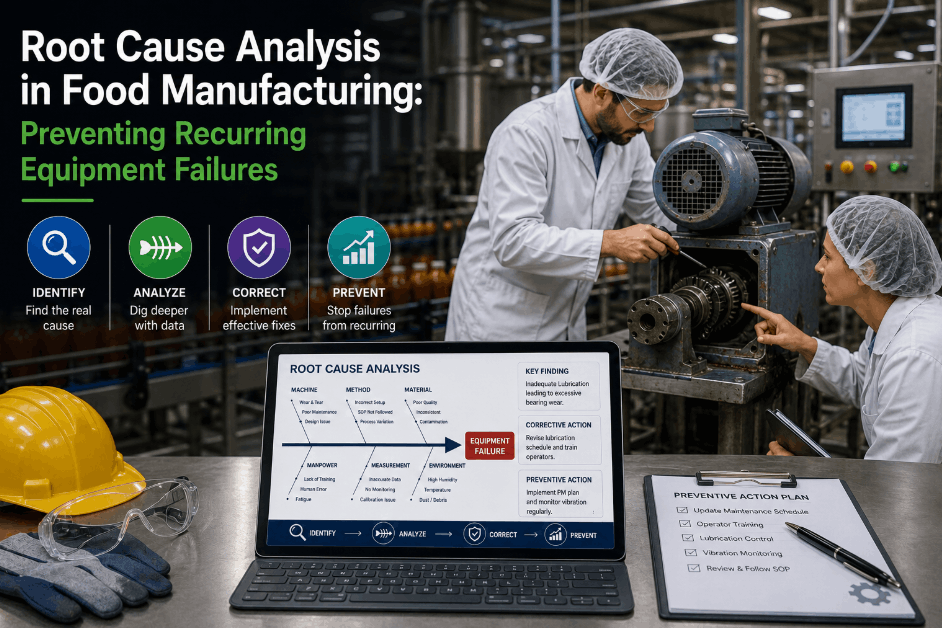

5 Root Causes of Real-Time Analytics Data Failure in Food Processing

Diagnosing the Visibility Gap Before It Becomes a Compliance or Quality Event

How Analytics Data Silos Amplify Food Manufacturing Risk

The Hidden Cost Structure of Fragmented Production Visibility

The financial impact of analytics data silos in food manufacturing extends well beyond the immediate cost of a quality reject or an unplanned maintenance event. When production intelligence is fragmented across disconnected systems, the downstream consequence is a compounding risk profile that affects four operational domains simultaneously: quality management, asset reliability, regulatory compliance, and labor efficiency. A quality deviation that a unified real-time analytics platform would surface in under 90 seconds can propagate undetected for 18 to 40 minutes across a siloed data environment — transforming a correctable process drift into a full batch hold, a customer complaint, or a regulatory notification. The annualized cost of this latency gap across a typical mid-volume food processing operation consistently exceeds the total cost of deploying a purpose-built AI-driven analytics platform. Food manufacturers who want to quantify their current visibility gap exposure can Book a Demo for a structured production intelligence gap assessment.

| Analytics Failure Mode | Primary Production Impact | Secondary Risk | Annualized Cost Range (USD) |
|---|---|---|---|
| Data Silo Fragmentation | Quality Deviation Propagation | Batch Hold & Rework Cost | $180K – $420K |
| Batch-Cycle Reporting Delay | Undetected Process Drift | Regulatory Non-Compliance | $95K – $310K |
| OT Network Blind Zones | Maintenance Event Missed | Unplanned Downtime Cascade | $120K – $280K |
| Reporting-Only Analytics | No Predictive Alerting | Increased Mean Time to Repair | $75K – $190K |
| Peak Load Retrieval Failures | Critical Data Loss During Max Run | Audit Gap & Traceability Failure | $60K – $240K |

What Genuine Real-Time Analytics Visibility Requires in Food Manufacturing

The Architecture Difference Between True and Simulated Real-Time Production Intelligence

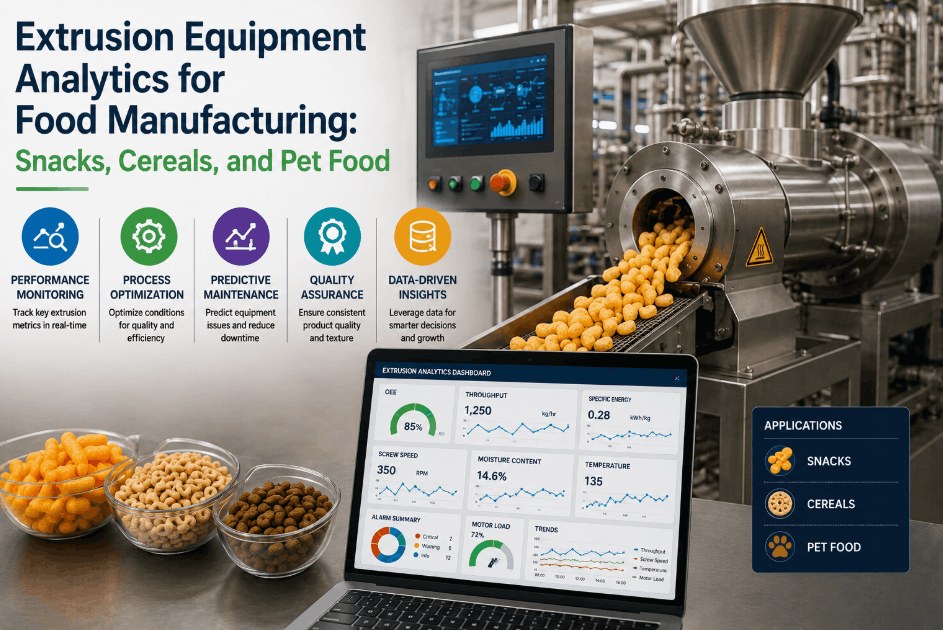

Genuine real-time analytics in a food manufacturing environment requires four architectural elements working in concert: a streaming data ingestion layer that processes sensor and system events continuously rather than in scheduled batches; a unified data model that normalizes signals from SCADA, QMS, CMMS, and MES into a single production context; an AI inference engine that runs anomaly detection, predictive maintenance scoring, and quality deviation classification against live data streams; and an event-driven alerting framework that pushes prioritized actions to operators on mobile devices before a process exceedance crosses a critical threshold. Platforms that claim real-time analytics capability without all four of these elements in native operation are delivering monitoring, not intelligence. The distinction between monitoring and intelligence is precisely where preventable downtime and quality losses reside in food manufacturing operations.
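To make the difference between scheduled reporting and event-driven alerting concrete, here is a minimal sketch of the streaming pattern. It is not iFactory's implementation: the `Reading` and `StreamingDetector` names, the rolling z-score rule, and the tag `oven_zone2_temp_c` are assumptions chosen for illustration, and a production inference engine would use far richer models.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import Callable


@dataclass(frozen=True)
class Reading:
    source: str   # originating system, e.g. "SCADA" (hypothetical label)
    tag: str      # signal name
    value: float
    ts: float     # event time in seconds


class StreamingDetector:
    """Scores each reading as it arrives and pushes an alert immediately."""

    def __init__(self, on_alert: Callable[[Reading], None],
                 window: int = 30, min_samples: int = 5, z_limit: float = 3.0):
        self._on_alert = on_alert
        self._min_samples = min_samples
        self._z_limit = z_limit
        # One rolling window of recent values per tag.
        self._history = defaultdict(lambda: deque(maxlen=window))

    def ingest(self, reading: Reading) -> bool:
        history = self._history[reading.tag]
        if len(history) >= self._min_samples:
            mu, sigma = mean(history), pstdev(history)
            if sigma > 0 and abs(reading.value - mu) / sigma > self._z_limit:
                self._on_alert(reading)  # event-driven: no waiting for a batch
                return True              # outlier is kept out of the baseline
        history.append(reading.value)
        return False


alerts = []
detector = StreamingDetector(on_alert=alerts.append)
for i, value in enumerate([72.0, 72.1, 71.9, 72.0, 72.2, 71.8, 80.0]):
    detector.ingest(Reading("SCADA", "oven_zone2_temp_c", value, ts=float(i)))
print(len(alerts), alerts[0].value)  # 1 80.0
```

The design point is that detection runs inside `ingest`, on every event, so the alert latency is bounded by processing time rather than by a refresh schedule. In practice `on_alert` would hand off to a notification service instead of appending to a list.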

Fixing the Analytics Visibility Gap: A Practical Framework for Food Plant Directors

Five Diagnostic Steps Before Replacing or Upgrading Your Analytics Platform

Why Analytics Reporting Delay Is a Regulatory Risk, Not Just an Efficiency Problem

FSMA 204 Traceability and HACCP Compliance in an Analytics Latency Environment

The regulatory dimension of analytics data failure in food manufacturing has intensified significantly with the FSMA 204 traceability requirements and increasing retailer-mandated quality documentation standards. A reporting delay that was previously categorized as an efficiency problem now carries direct compliance liability. FSMA 204 requires manufacturers handling foods on the FDA Food Traceability List to capture and maintain key data elements (KDEs) at each critical tracking event (CTE), and to be able to produce traceability records within 24 hours of a regulatory request. An analytics platform that loses data integrity under peak load, fails to capture events during OT network interruptions, or stores traceability records in disconnected system silos cannot reliably meet this requirement. Food manufacturers operating under BRCGS, SQF, or other GFSI-benchmarked audit schemes face compounding audit exposure when their analytics platform cannot produce an unbroken, timestamped production data chain. The compliance cost of an analytics visibility gap is no longer a theoretical risk; it is a direct compliance exposure. Operations leaders ready to evaluate their current traceability analytics architecture can Book a Demo and see iFactory's FSMA 204 compliance automation in a live food manufacturing environment.
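One way to check whether a production data chain is actually unbroken is to scan record timestamps for holes. The sketch below is illustrative only: `find_record_gaps` is a hypothetical helper, and the five-minute `max_gap` is an assumed internal recording cadence, not a figure taken from any regulation or audit scheme.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def find_record_gaps(timestamps: List[datetime],
                     max_gap: timedelta) -> List[Tuple[datetime, datetime]]:
    """Return (last_seen, next_seen) pairs where records are further apart
    than the plant's own expected recording interval."""
    ordered = sorted(timestamps)
    gaps = []
    for earlier, later in zip(ordered, ordered[1:]):
        if later - earlier > max_gap:
            gaps.append((earlier, later))
    return gaps


# Made-up example: one record per minute, then a 17-minute hole.
start = datetime(2025, 1, 6, 8, 0)
records = [start + timedelta(minutes=m) for m in (0, 1, 2, 3, 20, 21)]
gaps = find_record_gaps(records, max_gap=timedelta(minutes=5))
for last_seen, next_seen in gaps:
    print(f"no records between {last_seen:%H:%M} and {next_seen:%H:%M}")
# no records between 08:03 and 08:20
```

Running a check like this against peak-load periods and known OT network interruptions shows where record voids already exist, before an auditor or regulator asks for the chain.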

Frequently Asked Questions

What causes real-time analytics data failure in food manufacturing plants?

The most common causes are batch-based data ingestion pipelines, analytics data silos across disconnected SCADA, QMS, and CMMS systems, and OT network segmentation creating unmonitored data transfer gaps. Each failure mode produces delayed or fragmented production intelligence that cannot drive the operator response times required in food processing environments.

How do analytics data silos increase food manufacturing risk?

Data silos prevent quality deviations and maintenance alerts from being contextualized against the full production picture. A quality signal that appears minor in isolation may indicate a critical upstream process drift when cross-referenced with adjacent system data. Siloed data means siloed insight — and slower corrective action across every production risk category.

What is the analytics visibility gap and why does it matter for food plants?

The analytics visibility gap is the time between when a production event occurs and when an operator receives actionable intelligence through their analytics platform. In food manufacturing, gaps of 15 to 45 minutes allow quality deviations and CCP exceedances to compound into batch-level losses before corrective action is possible. Closing this gap consistently delivers the highest measurable ROI improvement available to food manufacturing operations.

How does analytics reporting delay affect FSMA 204 compliance?

FSMA 204 requires complete key data element records at each critical tracking event for covered foods, with traceability documentation producible within 24 hours of a request. Analytics platforms with reporting delays or data integrity gaps during peak load create record voids that cannot be remediated retroactively, generating direct regulatory exposure regardless of whether a food safety event occurred.

How can food manufacturers measure their actual analytics latency?

Trigger a known process event on your production line and measure elapsed time until your analytics platform surfaces it as an alert or dashboard update. Run this test under both standard and peak load conditions, then compare against your vendor's stated refresh rates. Most food plants discover their real latency is 3 to 8 times higher than documented platform specifications.
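The test described above reduces to a small calculation once the trigger and display times are recorded. The sketch below assumes each trial is logged as a `(triggered_s, surfaced_s)` pair. The function name `latency_report`, the 60-second vendor figure, and all trial values are placeholders, not measurements from any plant.

```python
from statistics import median
from typing import Dict, List, Tuple


def latency_report(trials: List[Tuple[float, float]],
                   vendor_refresh_s: float) -> Dict[str, float]:
    """Summarize observed event-to-screen latency against the vendor spec.

    Each trial is (time the event was triggered, time it surfaced), in seconds.
    """
    latencies = [surfaced - triggered for triggered, surfaced in trials]
    if any(latency < 0 for latency in latencies):
        raise ValueError("surfaced time precedes trigger time; check clocks")
    worst = max(latencies)
    return {
        "median_s": median(latencies),
        "worst_s": worst,
        "worst_vs_spec": worst / vendor_refresh_s,
    }


# Placeholder trial logs for the two conditions named in the answer above.
standard_load = [(0.0, 70.0), (0.0, 95.0), (0.0, 80.0)]
peak_load = [(0.0, 240.0), (0.0, 410.0), (0.0, 300.0)]

for label, trials in (("standard", standard_load), ("peak", peak_load)):
    report = latency_report(trials, vendor_refresh_s=60.0)
    print(f"{label}: median {report['median_s']:.0f}s, "
          f"worst {report['worst_s']:.0f}s, "
          f"{report['worst_vs_spec']:.1f}x spec")
```

Both clocks need to be synchronized for the subtraction to mean anything, which is why the helper rejects negative latencies rather than silently reporting them.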

Can upgrading to a cloud-based analytics platform eliminate data silos in food manufacturing?

Cloud deployment alone does not eliminate data silos — it relocates them. A cloud platform that queries SCADA, CMMS, and QMS systems independently still produces fragmented intelligence. Eliminating silos requires a unified data ingestion layer that normalizes signals from all production systems into a single real-time operational context, regardless of whether the analytics engine runs in the cloud, at the edge, or in a hybrid configuration.
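A unified ingestion layer can be pictured as a set of adapters that map each system's payload into one shared record type. The sketch below is a minimal illustration under stated assumptions: the `ProductionEvent` fields and every raw field name (`epoch_s`, `line_id`, `wo_opened`, and so on) are invented for the example and do not reflect any real SCADA, CMMS, or QMS schema.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class ProductionEvent:
    ts: float     # event time, seconds since epoch
    line: str     # production line identifier
    source: str   # originating system
    kind: str     # "process", "maintenance", or "quality"
    detail: str


# Hypothetical adapters: one per source system, each hiding its own schema.
def from_scada(raw: Dict[str, Any]) -> ProductionEvent:
    return ProductionEvent(raw["epoch_s"], raw["line_id"], "SCADA",
                           "process", f'{raw["tag"]}={raw["value"]}')


def from_cmms(raw: Dict[str, Any]) -> ProductionEvent:
    return ProductionEvent(raw["wo_opened"], raw["asset_line"], "CMMS",
                           "maintenance", raw["work_order"])


def from_qms(raw: Dict[str, Any]) -> ProductionEvent:
    return ProductionEvent(raw["checked_at"], raw["line"], "QMS",
                           "quality", raw["result"])


ADAPTERS: Dict[str, Callable[[Dict[str, Any]], ProductionEvent]] = {
    "SCADA": from_scada, "CMMS": from_cmms, "QMS": from_qms,
}


def unified_timeline(batches: Dict[str, List[Dict[str, Any]]]) -> List[ProductionEvent]:
    """Normalize every payload, then order all events on one time axis."""
    events = [ADAPTERS[source](raw)
              for source, rows in batches.items() for raw in rows]
    return sorted(events, key=lambda event: event.ts)


timeline = unified_timeline({
    "QMS": [{"checked_at": 130.0, "line": "L2", "result": "seal check failed"}],
    "SCADA": [{"epoch_s": 100.0, "line_id": "L2", "tag": "sealer_temp_c", "value": 148}],
    "CMMS": [{"wo_opened": 115.0, "asset_line": "L2", "work_order": "WO-1 heater"}],
})
print([event.source for event in timeline])  # ['SCADA', 'CMMS', 'QMS']
```

Once the three systems share one record type and one time axis, a temperature drift, a heater work order, and a failed seal check on the same line read as a single story rather than three unrelated entries. Where the code runs (cloud, edge, or hybrid) does not change that.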

What is the typical ROI timeline for fixing analytics visibility gaps in food processing plants?

Food manufacturers who replace batch-cycle analytics with genuine real-time production intelligence typically see measurable OEE improvement within the first 60 to 90 days of full deployment. Full platform payback — accounting for reduced unplanned downtime, lower quality reject rates, and compliance documentation savings — is consistently achieved within 8 to 14 months across documented food and beverage deployments.