Every millisecond of latency in your production line is a liability. When a conveyor sensor detects a critical anomaly, the difference between an edge AI decision firing in 8 milliseconds and a cloud round-trip completing in 340 milliseconds is the difference between a corrected fault and an unplanned shutdown costing $260,000 per hour. Manufacturers clinging to cloud-only AI architectures are discovering this gap the hard way — not in boardrooms, but on factory floors, during third-shift incidents with no engineer on-site and no real-time response in reach.

iFactory Edge AI Intelligence

Edge AI vs Cloud AI in Manufacturing: Which Wins for OT Workloads?

Latency, cybersecurity, data sovereignty, and total cost compared — so your plant chooses the architecture that actually fits your operations.

<10ms edge AI inference latency on OT workloads

68% of manufacturers cite data sovereignty as a deployment barrier

3.2x higher alert accuracy with edge-local anomaly models

40% lower bandwidth cost versus full cloud streaming

The Architecture Decision Nobody Warns You About

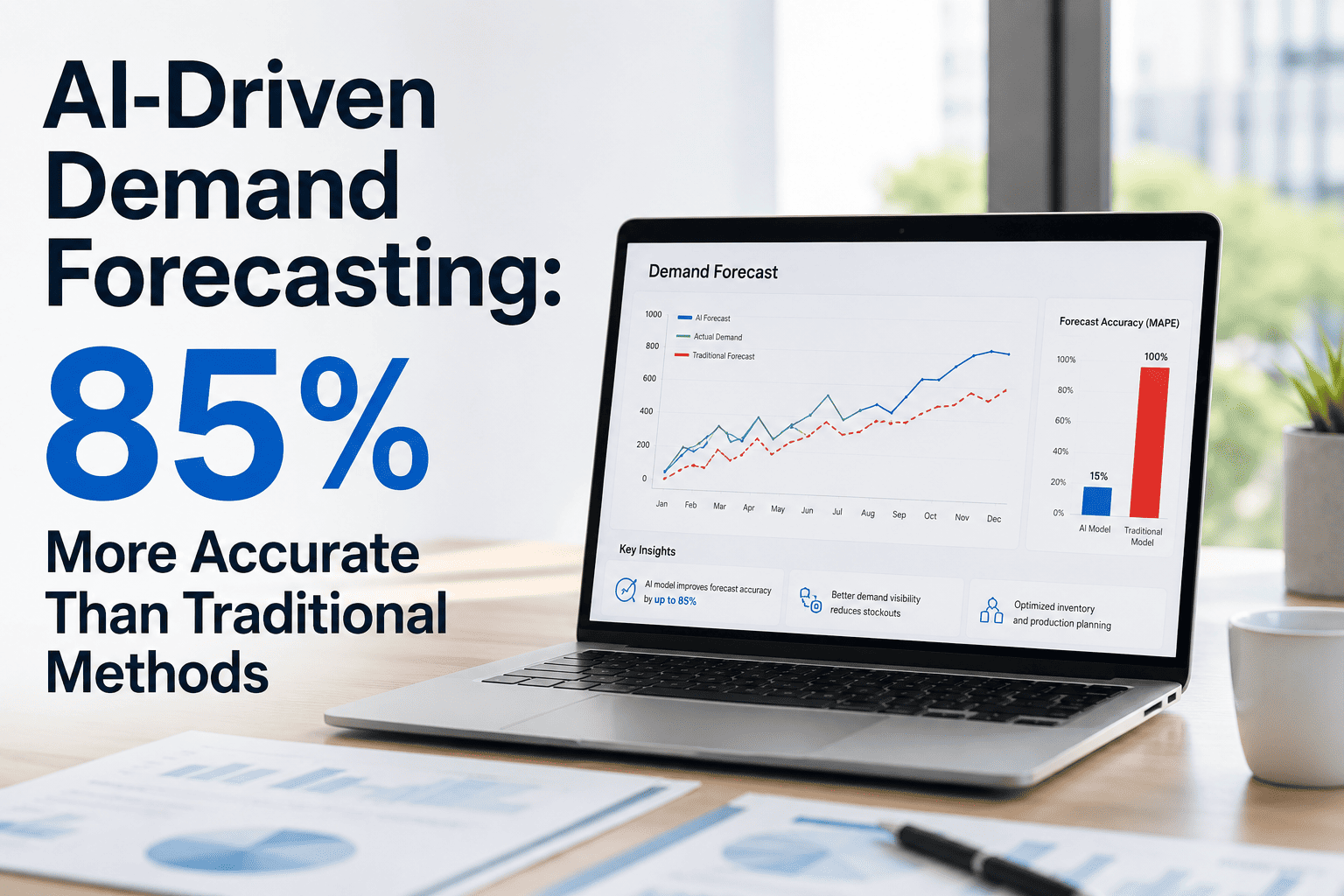

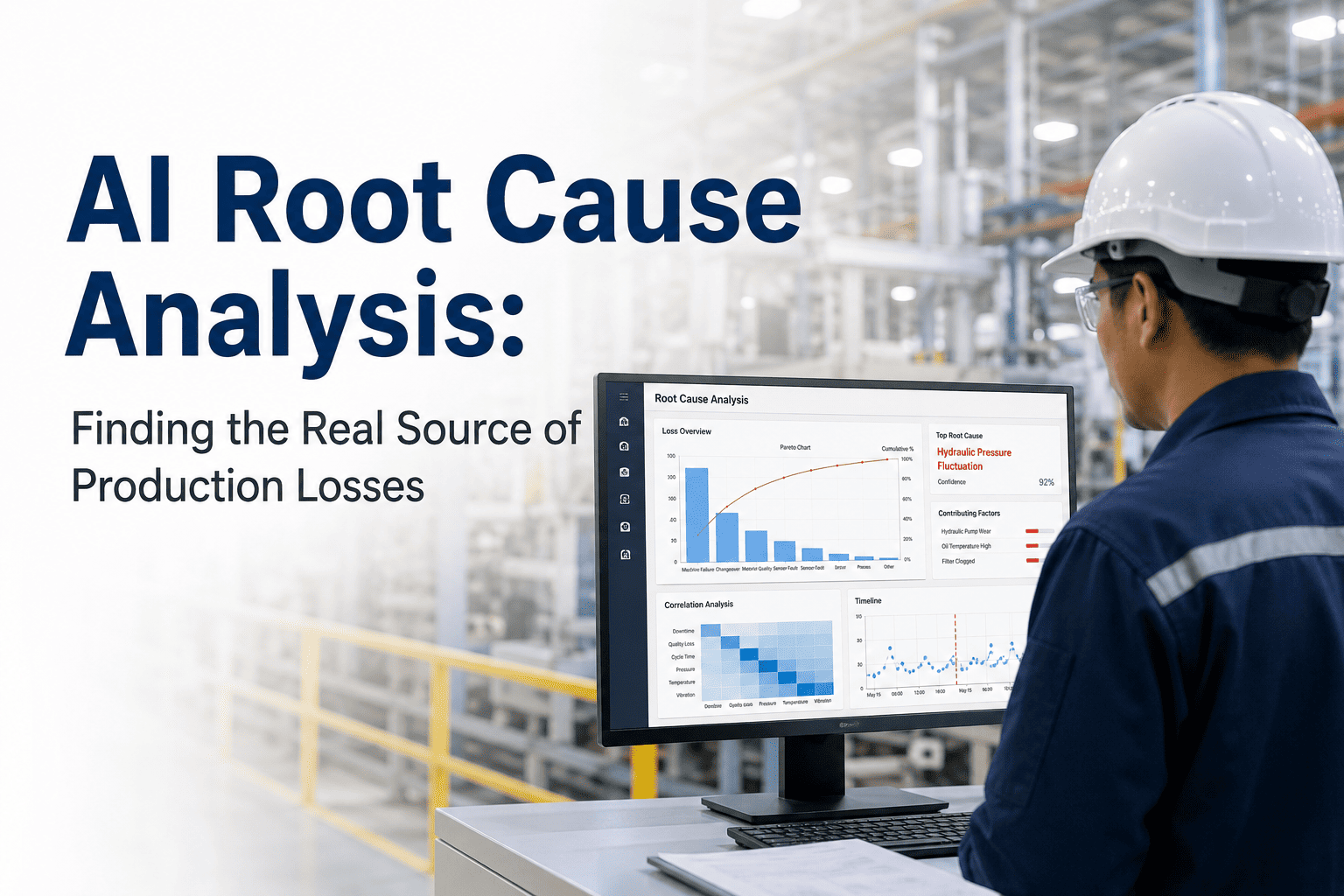

Most digital twin and predictive maintenance vendors lead with capability demonstrations — dashboards, anomaly scores, remaining useful life projections. What they rarely discuss upfront is the fundamental architectural question that determines whether any of those capabilities actually work in your environment: where does the AI inference run? Edge AI processes data locally, on or near the machine. Cloud AI transmits data to remote servers, infers, and sends results back. For IT workloads — CRM analytics, demand forecasting, ERP optimization — cloud AI is usually superior. For OT workloads, the calculus is far more complex.

Four Dimensions That Determine the Right Architecture

Latency & Real-Time Control

OT control loops operate at 10–100ms cycles. Cloud round-trips typically take 80–400ms, depending on the network path. Safety interlocks, motor protection, and closed-loop process control must be designed around worst-case latency, so cloud AI is architecturally disqualified for them. Edge inference runs in 2–15ms, inside the control window of all but the fastest loops.
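The control-window argument comes down to comparing worst-case inference latency with the loop cycle. The Python sketch below runs that comparison using the ranges quoted above. The function name `fits_control_window` and the optional `reserved_fraction` parameter, which models the share of each cycle kept for sensing, actuation, and jitter, are illustrative assumptions and not part of any iFactory tooling.

```python
def fits_control_window(cycle_ms: float, worst_case_latency_ms: float,
                        reserved_fraction: float = 0.0) -> bool:
    """True if the worst-case inference latency fits in the part of the control
    cycle not reserved for sensing, actuation and jitter.

    reserved_fraction is an illustrative assumption (0 = whole cycle available).
    """
    if not 0.0 <= reserved_fraction < 1.0:
        raise ValueError("reserved_fraction must be in [0, 1)")
    return worst_case_latency_ms <= cycle_ms * (1.0 - reserved_fraction)


EDGE_WORST_MS = 15    # upper end of the 2-15 ms edge range quoted above
CLOUD_WORST_MS = 400  # upper end of the 80-400 ms cloud range quoted above

for cycle_ms in (10, 20, 50, 100):
    print(f"{cycle_ms:>3} ms cycle | edge: {fits_control_window(cycle_ms, EDGE_WORST_MS)}"
          f" | cloud: {fits_control_window(cycle_ms, CLOUD_WORST_MS)}")
```

Control loops must be sized for worst-case rather than average latency, so the check uses the upper end of each range. The 400ms cloud worst case misses every cycle in the 10–100ms band. The 15ms edge worst case fits every cycle of 15ms or longer.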

Cybersecurity & Air-Gap Compliance

Critical infrastructure sectors — defence supply chain, pharmaceuticals, energy — mandate air-gapped OT networks where cloud connectivity is prohibited by regulation or policy. Edge AI operates entirely within the air-gapped perimeter. Cloud AI requires network exceptions that auditors and CISOs routinely reject.

Data Sovereignty & IP Protection

Process parameters, production recipes, and throughput data represent core competitive IP. Streaming this data to a vendor's cloud infrastructure creates contractual, legal, and competitive exposure. Edge AI keeps proprietary operational data on-premises — it never leaves the facility boundary.

Bandwidth Cost & Connectivity

A single production line with 200 sensors generates 15–40 GB of raw data per day. Transmitting all of it to the cloud is expensive and unreliable in facilities with constrained WAN links. Edge AI processes locally and transmits only insights — reducing bandwidth consumption by 40–70%.
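Simple arithmetic can sanity-check these bandwidth figures. This sketch assumes decimal gigabytes (10^9 bytes) and a flat daily average. The helper names `sustained_mbps` and `after_reduction` are hypothetical.

```python
SECONDS_PER_DAY = 86_400
BITS_PER_BYTE = 8
SENSORS_ON_LINE = 200  # from the example line above


def sustained_mbps(gb_per_day: float) -> float:
    """Average uplink rate (Mbit/s) needed to ship gb_per_day decimal GB in real time."""
    return gb_per_day * 1e9 * BITS_PER_BYTE / SECONDS_PER_DAY / 1e6


def after_reduction(gb_per_day: float, reduction: float) -> float:
    """Daily volume still transmitted after an edge-side reduction (fraction 0..1)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return gb_per_day * (1.0 - reduction)


for raw_gb in (15, 40):  # per-line range quoted above
    per_sensor_mb = raw_gb * 1000 / SENSORS_ON_LINE
    print(f"{raw_gb} GB/day raw: {per_sensor_mb:.0f} MB per sensor per day, "
          f"{sustained_mbps(raw_gb):.2f} Mbit/s sustained")
    for reduction in (0.40, 0.70):  # 40-70% reduction range quoted above
        remaining = after_reduction(raw_gb, reduction)
        print(f"  {reduction:.0%} reduction -> {remaining:.1f} GB/day, "
              f"{sustained_mbps(remaining):.2f} Mbit/s")
```

The daily average hides bursts, such as high-frequency vibration captures. Real WAN sizing therefore needs peak headroom, and these figures only illustrate the scale of the saving.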

Legacy Architecture vs Edge-Optimised AI: The Real Comparison

| Dimension | Legacy cloud architecture | Edge-optimised AI |
|---|---|---|
| Inference Latency | 80–400ms round-trip; misses OT control windows | 2–15ms local inference; inside every control loop |
| Air-Gap Compliance | Requires outbound internet; fails air-gap audits | Fully air-gap capable; no external connectivity required |
| Data Sovereignty | Raw process data leaves facility; IP exposure risk | All raw data stays on-premises; only insights transmitted |
| Network Resilience | AI blind during WAN outage; no offline operation | Full inference capability maintained during connectivity loss |
| Bandwidth Cost | Full sensor stream to cloud: high egress cost | Insight-only uplink: 40–70% bandwidth reduction |
| Model Accuracy | Generic cloud models; limited facility-specific training | Local models trained on your specific asset behaviour |
| Deployment Complexity | Firewall exceptions, IT approval cycles, VPN tunnels | OPC-UA/MQTT integration; no network architecture changes |
| Regulatory Readiness | GDPR, ITAR, CMMC gaps from data exfiltration | On-premises processing; audit-ready compliance posture |
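Network resilience depends on decoupling inference from the uplink. One common pattern for this is store-and-forward. The edge node keeps producing insights while the WAN is down, buffers them locally, and drains the buffer in order when the link returns. This Python sketch is an assumption about how such a buffer could work, not a description of iFactory's implementation. `InsightUplink`, the `send` callback, the asset ID, and the buffer size are all hypothetical.

```python
from collections import deque
from typing import Callable, Deque, List


class InsightUplink:
    """Hypothetical store-and-forward queue for edge insights.

    Inference keeps publishing regardless of WAN state; insights are buffered
    locally (bounded; the oldest are dropped first when full) and flushed in
    order once the link is back.
    """

    def __init__(self, send: Callable[[dict], bool], max_buffered: int = 10_000):
        self._send = send  # returns True on successful delivery
        self._buffer: Deque[dict] = deque(maxlen=max_buffered)

    def publish(self, insight: dict) -> None:
        self._buffer.append(insight)
        self.flush()

    def flush(self) -> int:
        """Deliver buffered insights in order; stop at the first failed send."""
        delivered = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # link down: keep the insight for a later flush
            self._buffer.popleft()
            delivered += 1
        return delivered

    @property
    def pending(self) -> int:
        return len(self._buffer)


link_up = False
sent: List[dict] = []


def send(msg: dict) -> bool:
    # Simulated WAN link: delivery succeeds only while link_up is True.
    if link_up:
        sent.append(msg)
    return link_up


uplink = InsightUplink(send)
for i in range(3):
    uplink.publish({"asset": "pump-7", "anomaly_score": 0.1 * i})  # WAN down
print(uplink.pending)  # 3
link_up = True
uplink.flush()
print(len(sent), uplink.pending)  # 3 0
```

During a long outage, the bounded `deque` caps memory use by discarding the oldest insights first. A site that must never lose an alert would persist the buffer to disk instead.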

Where Cloud AI Still Wins

An honest architecture assessment acknowledges where cloud AI remains the right choice. For large-scale model training requiring GPU clusters, cross-facility fleet benchmarking across dozens of sites, and long-horizon demand forecasting that synthesises external data signals, cloud compute outperforms edge hardware. The winning architecture is not a binary choice; it is a hybrid. Edge handles inference, control response, and data sovereignty. Cloud handles model training, strategic analytics, and multi-site aggregation. iFactory's platform is engineered for exactly this separation: edge nodes handle real-time OT workloads while cloud services provide fleet-level intelligence without touching raw process data.
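The edge/cloud split hinges on what crosses the boundary. The following minimal sketch assumes windowed sensor readings and a fixed alarm threshold. The edge node reduces each raw window to a small summary record, and only that record is serialized for the cloud layer. The `Insight` fields, asset ID, timestamp, readings, and 7.1 mm/s threshold are all hypothetical.

```python
import json
import statistics
from dataclasses import asdict, dataclass
from typing import Sequence


@dataclass(frozen=True)
class Insight:
    asset_id: str
    window_start: str
    samples: int
    mean: float
    peak: float
    anomaly: bool


def summarize_window(asset_id: str, window_start: str,
                     raw: Sequence[float], alarm_threshold: float) -> Insight:
    """Reduce a window of raw readings to a compact summary on the edge node."""
    peak = max(raw)
    return Insight(
        asset_id=asset_id,
        window_start=window_start,
        samples=len(raw),
        mean=round(statistics.fmean(raw), 3),
        peak=peak,
        anomaly=peak > alarm_threshold,
    )


raw_window = [4.1, 4.3, 4.0, 7.9, 4.2]  # hypothetical vibration readings, mm/s
insight = summarize_window("press-12", "2024-01-01T02:00:00Z", raw_window,
                           alarm_threshold=7.1)
uplink_payload = json.dumps(asdict(insight))  # this, not raw_window, goes to the cloud
print(uplink_payload)
```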

Operational Resilience

AI inference continues during WAN outages

Safety interlocks never dependent on internet connectivity

Third-shift autonomous response without remote dependency

Cost Reduction

40–70% lower data egress and bandwidth spend

Eliminated per-GB cloud ingestion fees at scale

Reduced network infrastructure investment for OT zones

Competitive Advantage

Process IP stays within facility boundary permanently

Faster model iteration using local operational data

Compliance posture enables regulated industry contracts

How iFactory Deploys Edge AI in Your Facility

iFactory's edge AI deployment begins with a sensor infrastructure audit and OT network assessment — typically completed in five to seven days. Industrial edge nodes are deployed adjacent to critical asset groups, integrating via OPC-UA and MQTT without requiring any changes to your existing SCADA or historian architecture. Local AI models begin learning asset-specific operating baselines within the first week. Anomaly detection goes live within four to six weeks. The cloud layer activates only for model retraining, cross-site benchmarking, and executive reporting — never for raw data transmission. Every deployment is configured to your data governance requirements before a single sensor goes live.
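The idea of "learning asset-specific operating baselines" can be illustrated with the simplest possible baseline model. A running mean and standard deviation per signal are computed with Welford's online algorithm during a warm-up period, followed by z-score alerting. This stand-in was chosen for clarity and is not iFactory's anomaly model. The class name, warm-up length, threshold, and sensor values are assumptions.

```python
import math
import random


class BaselineDetector:
    """Hypothetical per-signal baseline detector (illustrative, not iFactory's model).

    During warm-up it learns the running mean and variance with Welford's online
    algorithm; afterwards it flags readings whose z-score exceeds z_threshold.
    """

    def __init__(self, warmup: int = 500, z_threshold: float = 4.0):
        self.warmup = warmup
        self.z_threshold = z_threshold
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def _learn(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    def std(self) -> float:
        # Sample standard deviation; zero until at least two readings are seen.
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else 0.0

    def observe(self, x: float) -> bool:
        """Return True if x is anomalous. Only warm-up readings update the baseline."""
        if self.n < self.warmup:
            self._learn(x)
            return False
        sd = self.std()
        if sd == 0.0:
            return x != self.mean
        return abs(x - self.mean) / sd > self.z_threshold


random.seed(0)
det = BaselineDetector(warmup=200, z_threshold=4.0)
for _ in range(200):
    det.observe(random.gauss(50.0, 1.0))  # hypothetical bearing temperature, deg C
print(det.observe(50.5), det.observe(58.0))  # False True
```

Freezing the baseline after warm-up keeps a developing fault from being absorbed into "normal". A production system would also need a controlled way to re-baseline after maintenance or process changes.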

Frequently Asked Questions

What hardware does edge AI deployment require?

iFactory's edge nodes are purpose-built industrial computers rated for harsh environments — IP65, DIN-rail mountable, operating from -20°C to +70°C. Each node handles inference for 50–150 asset monitoring points. No GPU is required for inference workloads; standard industrial CPUs with onboard NPUs deliver sub-15ms latency at significantly lower cost and power draw than GPU-based alternatives.
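Node count scales with the number of monitoring points. The worked example below assumes that each of the 200 sensors on the production line described earlier counts as one monitoring point. The text does not define that mapping, so treat it as an assumption. Under it, the 50–150 points-per-node range implies two to four nodes. The helper name is hypothetical.

```python
import math


def edge_nodes_needed(monitoring_points: int, points_per_node: int) -> int:
    """Nodes required if each node serves at most points_per_node points."""
    if points_per_node <= 0:
        raise ValueError("points_per_node must be positive")
    return math.ceil(monitoring_points / points_per_node)


# 200 points, assuming one monitoring point per sensor on the example line
print(edge_nodes_needed(200, 150), edge_nodes_needed(200, 50))  # 2 4
```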

Can edge AI models be updated without cloud connectivity?

Yes. Model updates are packaged and deployed via encrypted USB or local network transfer for air-gapped environments. For connected deployments, model updates push automatically from the cloud training layer to edge nodes during off-shift windows. In both cases, the previous model version remains active until the update is validated — zero-downtime model transitions are standard.
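The "previous model stays active until the update is validated" behaviour can be sketched as a model slot that swaps only when a candidate passes an integrity check and a validation callback. This is a hypothetical illustration, and `ModelPackage`, `ModelSlot`, `package`, and the validation hook are assumptions. A bare SHA-256 checksum only detects corruption. Proving where a package came from would need a cryptographic signature on top of the encrypted transfer described above.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelPackage:
    version: str
    payload: bytes  # serialized model weights (format is an assumption)
    sha256: str     # checksum shipped alongside the package


class ModelSlot:
    """Hypothetical active-model slot: the active model keeps serving until a
    candidate passes the integrity check and the validation callback."""

    def __init__(self, active: ModelPackage):
        self.active = active

    def try_update(self, candidate: ModelPackage,
                   validate: Callable[[ModelPackage], bool]) -> bool:
        if hashlib.sha256(candidate.payload).hexdigest() != candidate.sha256:
            return False  # corrupted package (a bare hash cannot prove origin)
        if not validate(candidate):
            return False  # e.g. fails a replay test on recent local data
        self.active = candidate  # single reference swap; old model served until now
        return True


def package(version: str, payload: bytes) -> ModelPackage:
    return ModelPackage(version, payload, hashlib.sha256(payload).hexdigest())


slot = ModelSlot(package("1.4.0", b"old-weights"))
bad = ModelPackage("1.5.0", b"new-weights", sha256="0" * 64)
print(slot.try_update(bad, validate=lambda m: True), slot.active.version)   # False 1.4.0
good = package("1.5.0", b"new-weights")
print(slot.try_update(good, validate=lambda m: True), slot.active.version)  # True 1.5.0
```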

How does iFactory handle facilities with mixed connectivity — some lines online, some air-gapped?

Mixed environments are the most common deployment scenario. iFactory runs independent edge node clusters per network zone, with zone-specific data governance policies. Connected zones benefit from automatic model updates and cloud analytics. Air-gapped zones operate on local-only inference with scheduled manual update procedures. Both zones feed into the same operational dashboard — the data governance boundary is invisible to the maintenance team.
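Zone-specific governance can be expressed as a per-zone policy that the edge software consults before anything leaves the zone. The minimal sketch below assumes two data kinds ("insight" and "raw") and denies anything else by default. The zone names, field names, and classes are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Connectivity(Enum):
    CONNECTED = "connected"
    AIR_GAPPED = "air_gapped"


@dataclass(frozen=True)
class ZonePolicy:
    zone: str
    connectivity: Connectivity
    uplink_insights: bool     # may summaries leave the zone?
    uplink_raw: bool = False  # raw process data stays in the zone by default

    def __post_init__(self):
        # An air-gapped zone must not be configured to send anything out.
        if self.connectivity is Connectivity.AIR_GAPPED and (
                self.uplink_insights or self.uplink_raw):
            raise ValueError(f"{self.zone}: air-gapped zones cannot uplink data")

    def may_send(self, kind: str) -> bool:
        if kind == "insight":
            return self.uplink_insights
        if kind == "raw":
            return self.uplink_raw
        return False  # unknown data kinds are denied


policies = {
    "line-1": ZonePolicy("line-1", Connectivity.CONNECTED, uplink_insights=True),
    "line-4": ZonePolicy("line-4", Connectivity.AIR_GAPPED, uplink_insights=False),
}
print(policies["line-1"].may_send("insight"), policies["line-1"].may_send("raw"))  # True False
print(policies["line-4"].may_send("insight"))  # False
```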

Does edge AI require our OT team to manage AI models directly?

No. Model management, retraining schedules, and performance monitoring are handled entirely by iFactory's platform and engineering team. Your OT team interacts only with the operational outputs: condition dashboards, predictive alerts, and maintenance recommendations. The AI infrastructure operates transparently in the background — the same way a PLC runs its logic without requiring operators to write ladder logic.

Edge AI Deployment for OT Environments

Your Facility Deserves AI That Works When the Network Doesn't

iFactory's edge-first architecture delivers real-time OT intelligence with full data sovereignty — deployed in weeks, not quarters, with measurable ROI at every phase.

<10ms edge inference latency

40% bandwidth cost reduction

4–6 weeks to first value