Every year, manufacturers pay an invisible tax, not to a government but to mediocre software: bloated MES platforms that require 18-month deployments, cloud dashboards that visualise the past but cannot predict the future, and point solutions that solve one problem while creating three more. The cost is not just licensing fees. It is unplanned downtime that can cost $260,000 per hour, maintenance spend on machines that were already signalling that they would fail, and competitors who quietly switched to AI-native platforms and never looked back. If you are evaluating manufacturing AI platforms today, your decision will determine whether your facility operates at its ceiling or watches from the sideline as that ceiling rises for everyone else.

iFactory Implementation Intelligence

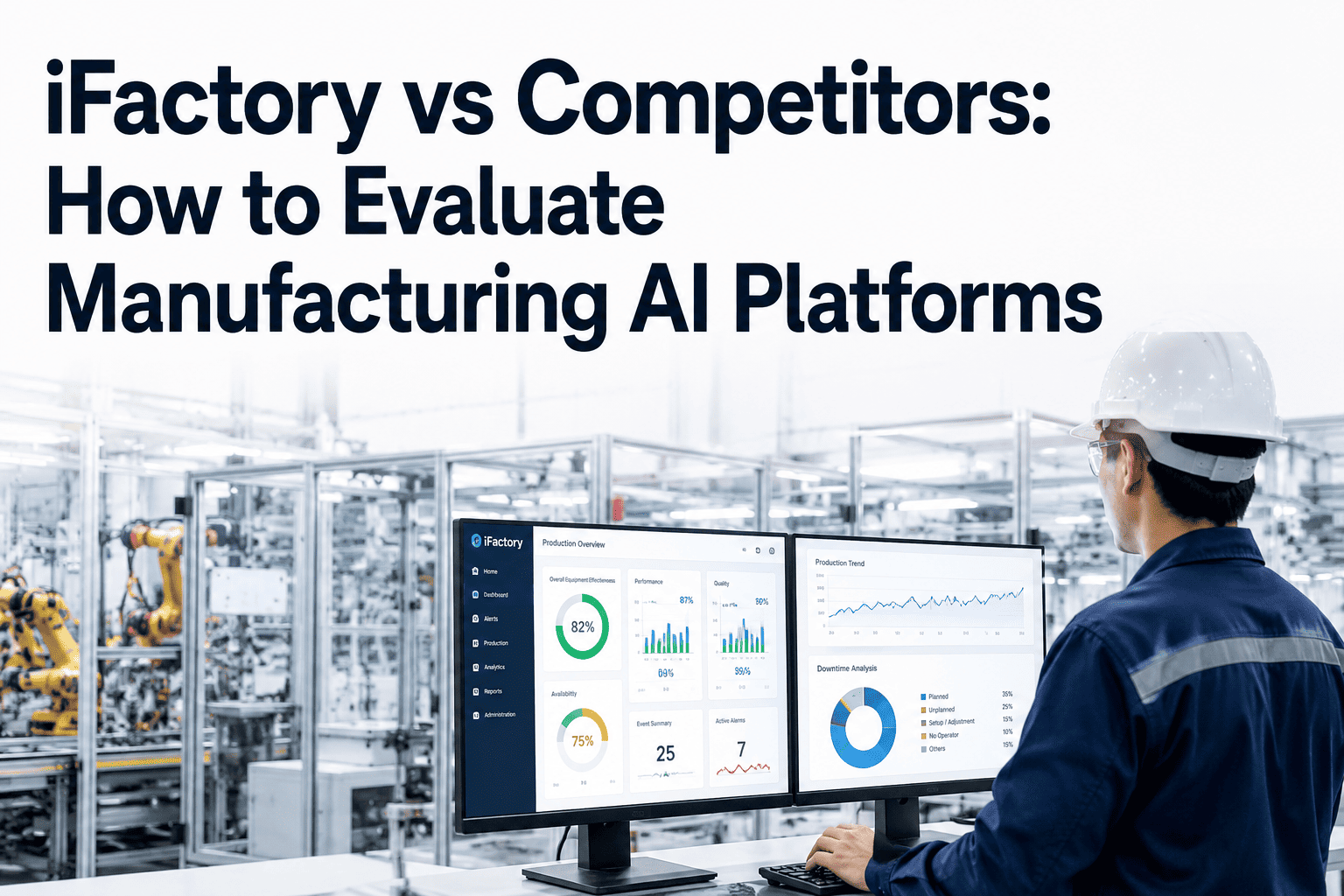

iFactory vs Competitors: How to Evaluate Manufacturing AI Platforms

A rigorous scoring framework that helps operations and technology leaders cut through vendor noise, compare platforms on dimensions that drive ROI, and choose the system that fits how modern factories actually work.

- 10–30x: ROI on AI platform investment
- 4–6 wk: Time to first measurable value
- 95%: Predictive maintenance adopters reporting positive ROI
- $3.5M: Annual savings potential at scale

The Four Platform Categories You Will Encounter

Before comparing vendors, it is essential to understand what category each one occupies. Many sales teams position their products as "AI-powered" regardless of what the platform actually does. Here is the honest breakdown of what exists in the market today.

01

Legacy MES Platforms

Mature systems built for scheduling and execution tracking. Strong on workflow but architecturally unable to support real-time AI at the asset level. Typical deployment: 12–24 months. AI capability: bolted on via third-party modules.

02

Cloud Analytics Dashboards

Visualisation-first platforms that pull historian data and display trends. Excellent for reporting to leadership. Limited for operational action: they describe what happened, not what will happen. Predictive capability is often a premium add-on.

03

Point Maintenance Solutions

Vibration monitoring tools, thermal imaging services, and CMMS extensions. Solve a narrow problem well. Do not connect to energy, production, or financial systems. Require manual data synthesis across multiple disconnected tools.

04

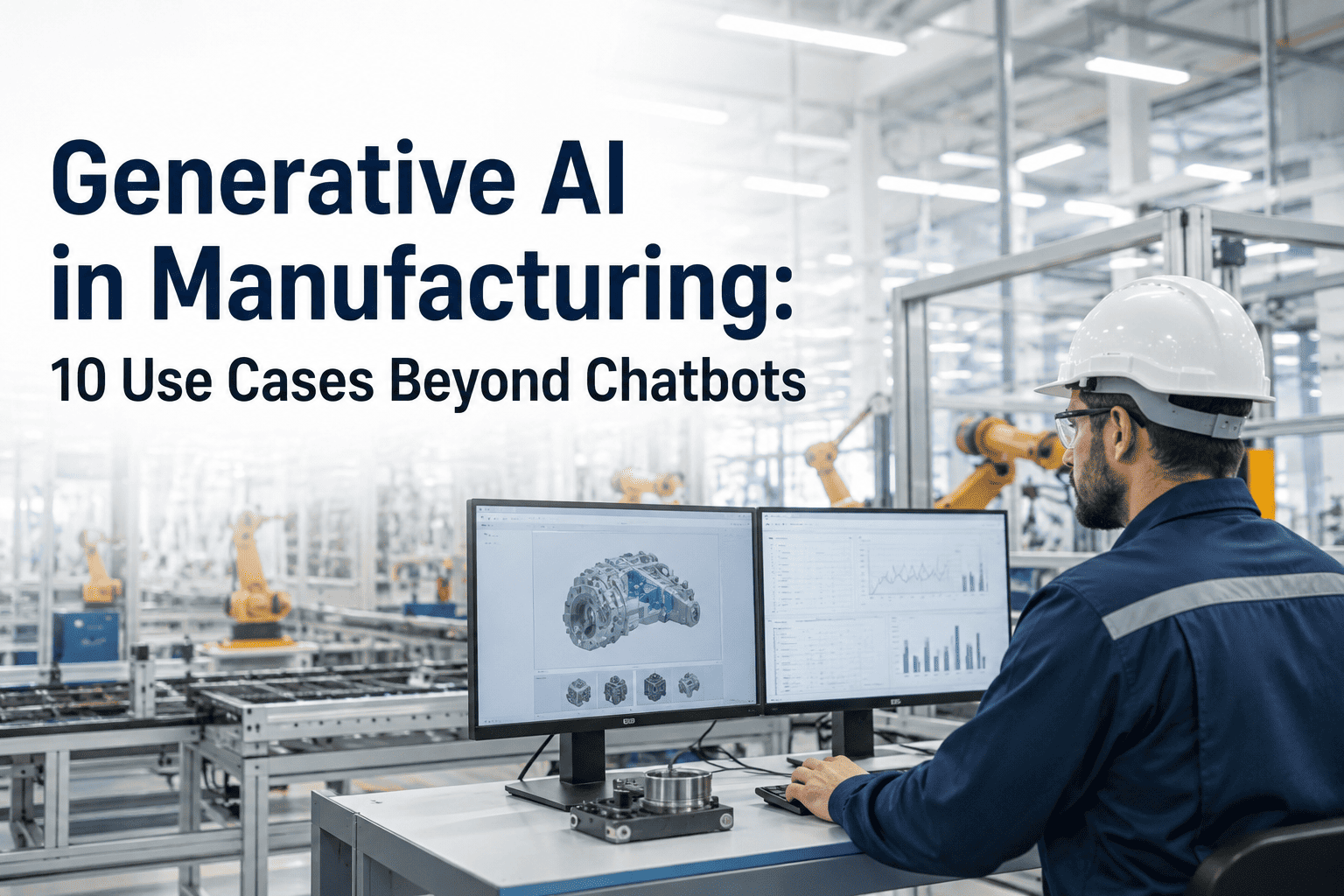

AI-Native Digital Twin Platforms

Purpose-built for unified asset intelligence. Ingests sensor, SCADA, ERP, and CMMS data simultaneously. Delivers predictive analytics, autonomous workflows, and natural language queries from a single integrated layer.

The Evaluation Scoring Framework

Enterprise technology leaders evaluating manufacturing AI platforms should score vendors across eight dimensions. Each dimension is weighted by its operational impact. The framework below is the same one facility directors and CIOs use when running formal RFP processes.

| Evaluation Dimension | Weight | Legacy MES | Cloud Analytics | Point Solutions | iFactory |
| --- | --- | --- | --- | --- | --- |
| Time to first value | High | 12–24 months | 3–6 months | 4–8 weeks | 4–6 weeks |
| Predictive accuracy | High | Rule-based | Trend-based | Asset-specific | 90%+ LSTM/ML |
| Sensor infrastructure requirement | Medium | Heavy existing | Historian only | Dedicated sensors | Flexible / $50–100 per point |
| ERP / CMMS integration | High | Native (slow) | Limited | Manual export | OPC-UA / REST / MQTT |
| Autonomous work orders | High | No | No | No | Yes (AI-generated) |
| Energy monitoring | Medium | Separate module | Basic | Not included | Integrated layer |
| Multi-facility scalability | High | Complex | Dashboard only | Not designed for | Cross-site benchmarking |
| Total cost of ownership | High | $1.5M–$4M+ | $200K–$800K | $80K–$300K | $680K → $2.1M saved Y1 |
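
The weighted scoring described above can be sketched in a few lines of Python. This is an illustration, not iFactory's scoring method: the numeric weights (High = 3, Medium = 2), the 1–5 score scale, and the names `WEIGHT_VALUES`, `DIMENSION_WEIGHTS`, and `weighted_score` are all assumptions introduced here. Only the dimension names and their High/Medium labels come from the table.

```python
# Numeric values for the qualitative weights (an assumption: the table
# only labels each dimension "High" or "Medium").
WEIGHT_VALUES = {"High": 3, "Medium": 2}

# Dimension -> weight label, taken from the scoring table.
DIMENSION_WEIGHTS = {
    "Time to first value": "High",
    "Predictive accuracy": "High",
    "Sensor infrastructure requirement": "Medium",
    "ERP / CMMS integration": "High",
    "Autonomous work orders": "High",
    "Energy monitoring": "Medium",
    "Multi-facility scalability": "High",
    "Total cost of ownership": "High",
}


def weighted_score(scores: dict) -> float:
    """Return a 0-100 weighted score from per-dimension scores of 1-5."""
    missing = set(DIMENSION_WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total = 0
    max_total = 0
    for dimension, label in DIMENSION_WEIGHTS.items():
        score = scores[dimension]
        if not 1 <= score <= 5:
            raise ValueError(f"{dimension}: score must be 1-5, got {score}")
        weight = WEIGHT_VALUES[label]
        total += weight * score
        max_total += weight * 5  # best possible result for this dimension
    return round(100 * total / max_total, 1)


# Hypothetical scores an evaluation team might assign to one vendor.
example_vendor = {dimension: 4 for dimension in DIMENSION_WEIGHTS}
example_vendor["Energy monitoring"] = 2
print(weighted_score(example_vendor))
```

Normalising to 0–100 keeps vendors comparable if the team later changes the weight values or adds a dimension.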

Legacy Friction vs Optimised Excellence

The clearest way to illustrate platform value is not feature lists — it is the operational reality of each approach. Below is an honest comparison of how decisions get made under each model.

Legacy Friction — The Old Way

- Maintenance scheduled by calendar interval, not asset condition

- Failure discovered after production stops — reactive dispatching begins

- Energy waste identified quarterly in spreadsheet reviews

- Work orders created manually from technician observations

- ROI reported via 40-slide presentation, not live dashboards

- New asset onboarding requires months of model-building upfront

- Each facility runs its own disconnected reporting stack

- Compliance documentation assembled by hand from multiple systems

Optimised Excellence — The iFactory Way

- Condition-based alerts fire 14–21 days before failure occurs

- AI predicts failure window; maintenance planned without disruption

- Energy anomalies detected in real time, correlated to asset health

- Work orders auto-generated with correct parts, procedures, scheduling

- Live financial dashboards show saved cost against investment weekly

- Virtual twin testing cuts new asset ramp-up time by 30–40%

- Cross-facility benchmarking surfaces performance gaps instantly

- ISO 55000, OSHA, and ESG reports auto-generated from twin data

Three Dimensions That Determine Platform Selection

After running the scoring framework, most evaluation teams find that three dimensions dominate the final decision. These are not technical specifications — they are business outcomes that the platform either delivers or does not.

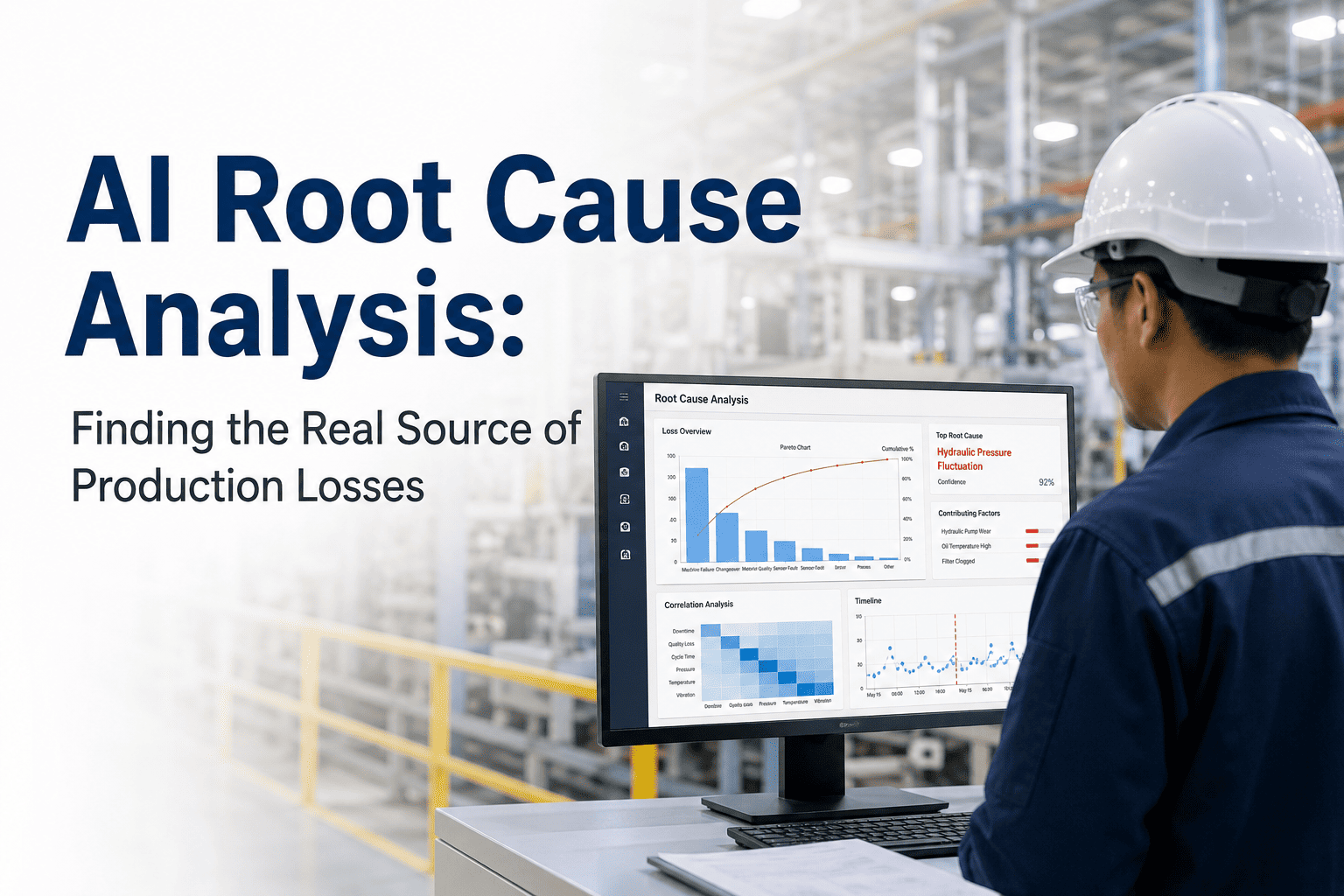

Workflow Acceleration

Platforms that reduce mean-time-to-repair by 35–50% do so because the alert arrives before failure, not after it. The right platform connects anomaly detection directly to work order creation, eliminating the manual steps where time and context are lost. Evaluate whether the platform shortens the loop from signal to action — or merely improves the post-mortem report.
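
To make the "signal to action" loop concrete, here is a minimal sketch of an anomaly being converted directly into a planned work order. Everything in it is hypothetical: the `Anomaly` and `WorkOrder` types, the 0.7 probability threshold, the three-day safety margin, and the seven-day "urgent" cut-off are illustrative assumptions, not the behaviour of any particular platform.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Anomaly:
    asset_id: str
    description: str
    failure_probability: float  # 0.0-1.0, as reported by a predictive model
    days_to_failure: int        # estimated lead time before failure


@dataclass
class WorkOrder:
    asset_id: str
    summary: str
    due_date: date
    priority: str


def to_work_order(anomaly: Anomaly, today: date,
                  threshold: float = 0.7,
                  safety_margin_days: int = 3) -> Optional[WorkOrder]:
    """Turn an anomaly into a work order, or None if it is below the threshold."""
    if anomaly.failure_probability < threshold:
        return None
    # Schedule the job ahead of the predicted failure, never in the past.
    lead_days = max(anomaly.days_to_failure - safety_margin_days, 0)
    priority = "urgent" if lead_days <= 7 else "planned"
    return WorkOrder(
        asset_id=anomaly.asset_id,
        summary=f"Inspect {anomaly.asset_id}: {anomaly.description}",
        due_date=today + timedelta(days=lead_days),
        priority=priority,
    )


# Hypothetical alert with 14 days of warning.
alert = Anomaly("PUMP-07", "bearing vibration rising", 0.85, 14)
order = to_work_order(alert, today=date(2025, 1, 6))
print(order)
```

When evaluating a vendor, the useful question is how many manual steps sit between the equivalent of `Anomaly` and `WorkOrder` in their product.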

Overhead Reduction

A platform that requires three FTEs to manage is not a productivity tool; it is a cost centre in different clothing. AI-native platforms should reduce the labour required to sustain high-quality maintenance programmes, not increase it. Measure how many person-hours per week the platform consumes, and compare that labour cost with the downtime savings it generates.
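
The overhead comparison reduces to simple arithmetic. The sketch below assumes a hypothetical function `weekly_net_value` and made-up inputs (three FTEs at 40 hours each, a $75 loaded hourly rate, half an hour of downtime avoided per week at $10,000 per hour). Substitute your own figures.

```python
def weekly_net_value(platform_hours: float, loaded_hourly_rate: float,
                     downtime_hours_avoided: float,
                     downtime_cost_per_hour: float) -> float:
    """Avoided downtime cost minus the labour cost of running the platform, per week."""
    labour_cost = platform_hours * loaded_hourly_rate
    avoided_cost = downtime_hours_avoided * downtime_cost_per_hour
    return avoided_cost - labour_cost


# Hypothetical inputs: 3 FTEs x 40 h, $75/h, 0.5 h of downtime avoided at $10,000/h.
net = weekly_net_value(platform_hours=120, loaded_hourly_rate=75,
                       downtime_hours_avoided=0.5,
                       downtime_cost_per_hour=10_000)
print(f"Net weekly value: ${net:,.0f}")
```

A negative result means the platform costs more in labour than it returns in avoided downtime under those assumptions.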

Output Growth

The highest-ROI platforms do not just prevent losses — they increase throughput. When assets run closer to their design envelope without risk of failure, output per shift rises. Facilities using predictive analytics report 8–12% OEE improvement within the first year. The platform that makes this possible is one that models asset behaviour under varying production conditions, not just failure signatures.
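
OEE is conventionally calculated as availability × performance × quality, so any claimed improvement can be checked against those three factors. The article does not say whether the 8–12% figure is a relative gain or a gain in percentage points, so the sketch below reports both. The input values are hypothetical, and `oee` and `improvement` are names introduced here for illustration.

```python
def oee(availability: float, performance: float, quality: float) -> float:
    """Overall Equipment Effectiveness as the product of its three factors (each 0-1)."""
    for name, value in (("availability", availability),
                        ("performance", performance),
                        ("quality", quality)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return availability * performance * quality


def improvement(before: float, after: float) -> tuple:
    """Return (change in percentage points, relative change in percent)."""
    points = (after - before) * 100
    relative = (after - before) / before * 100
    return points, relative


# Hypothetical plant: availability and performance rise, quality is unchanged.
before = oee(0.80, 0.85, 0.98)
after = oee(0.86, 0.87, 0.98)
points, relative = improvement(before, after)
print(f"OEE {before:.1%} -> {after:.1%}: +{points:.1f} points, +{relative:.1f}% relative")
```

Ask vendors which of the two measures their OEE claims use, because the same change produces noticeably different headline numbers.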

Five Questions Every Vendor Must Answer

Before signing any contract, require every platform vendor to answer these five questions in writing. The quality and specificity of their answers will tell you more than any product demonstration.

01 — What is your definition of "predictive" and how is accuracy measured?

Many vendors call threshold-based alerting "predictive." True prediction involves ML models trained on historical failure data that output a probability and a time window. Require the vendor to show you a confusion matrix, a false positive rate, and a reference customer who can confirm 14+ day advance warning on a real failure.
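
When a vendor supplies a confusion matrix, the headline metrics can be recomputed directly from its four counts. The sketch below uses the standard definitions of precision, recall, and false positive rate. The `ConfusionMatrix` class and the example counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    true_positives: int   # failures the model warned about
    false_positives: int  # warnings that were not followed by a failure
    false_negatives: int  # failures that arrived without a warning
    true_negatives: int   # healthy periods with no warning

    def precision(self) -> float:
        """Share of warnings that were real failures."""
        flagged = self.true_positives + self.false_positives
        return self.true_positives / flagged if flagged else 0.0

    def recall(self) -> float:
        """Share of real failures that were warned about."""
        failures = self.true_positives + self.false_negatives
        return self.true_positives / failures if failures else 0.0

    def false_positive_rate(self) -> float:
        """Share of healthy periods that triggered a warning."""
        healthy = self.false_positives + self.true_negatives
        return self.false_positives / healthy if healthy else 0.0


# Hypothetical counts from a vendor's validation report.
cm = ConfusionMatrix(true_positives=18, false_positives=6,
                     false_negatives=2, true_negatives=974)
print(f"precision={cm.precision():.2f}, recall={cm.recall():.2f}, "
      f"FPR={cm.false_positive_rate():.4f}")
```

Because failures are rare, overall accuracy is dominated by the true negatives, so precision and recall are the more informative numbers to request.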

02 — What happens to value delivery if our sensor infrastructure is limited?

Platforms dependent on rich existing instrumentation fail in the majority of industrial environments. Ask specifically: can the platform start with SCADA historian data alone, and what is the incremental cost and time to add wireless vibration sensors to 15 assets? The answer reveals real deployment flexibility versus sales-room assumptions.

03 — How does the platform generate measurable ROI in the first 90 days?

If the vendor cannot name a specific mechanism — avoided failure, eliminated PM, energy savings event — that produces documented dollar value within 90 days, the platform is not designed for phased value delivery. Demand a reference customer who achieved documented ROI in that window, with contact information.
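
A 90-day ROI claim can be checked with a simple ledger of documented value events. The sketch below is illustrative only: the `ValueEvent` type, the `roi_within_window` function, the investment figure, and the example events are hypothetical. The mechanism labels mirror the three named above.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ValueEvent:
    occurred_on: date
    mechanism: str           # "avoided failure", "eliminated PM", "energy savings"
    documented_value: float  # dollars, backed by evidence the customer can show


def roi_within_window(events: list, go_live: date,
                      investment: float, window_days: int = 90) -> float:
    """ROI as (value - investment) / investment, counting only events in the window."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    cutoff = go_live + timedelta(days=window_days)
    value = sum(e.documented_value for e in events
                if go_live <= e.occurred_on <= cutoff)
    return (value - investment) / investment


# Hypothetical ledger; the last event falls outside the 90-day window.
go_live = date(2025, 1, 1)
ledger = [
    ValueEvent(date(2025, 2, 10), "avoided failure", 120_000),
    ValueEvent(date(2025, 3, 5), "energy savings", 15_000),
    ValueEvent(date(2025, 5, 20), "eliminated PM", 30_000),
]
print(f"90-day ROI: {roi_within_window(ledger, go_live, investment=90_000):.0%}")
```

Asking a reference customer for the dated events behind their ROI figure makes it easy to see whether the value really landed inside the first 90 days.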

04 — How does the platform integrate with our existing CMMS and ERP without disruption?

Integration that requires rip-and-replace of existing systems kills adoption. The correct answer involves standard protocol support (OPC-UA, MQTT, REST APIs) and a deployment model where the twin runs alongside current systems, adding intelligence on top without replacing execution workflows.

05 — What internal resources are required and what does your vendor team own?

Hidden implementation burden is the most common source of project failure. A platform that requires your team to build and train its own models, configure dashboards, and tune alert thresholds is an engineering project, not a platform. Require a written breakdown of vendor-owned versus customer-owned implementation activities for each phase.

iFactory Platform Evaluation

Stop Comparing Features. Start Comparing Outcomes.

iFactory answers every question on this framework with documented customer evidence, not slide decks. Our phased deployment model gets your first critical assets monitored in weeks, your first avoided failure documented in months, and your full ROI realised within 12–18 months.

- $3.5M: Annual savings potential
- 10–30x: Return on investment