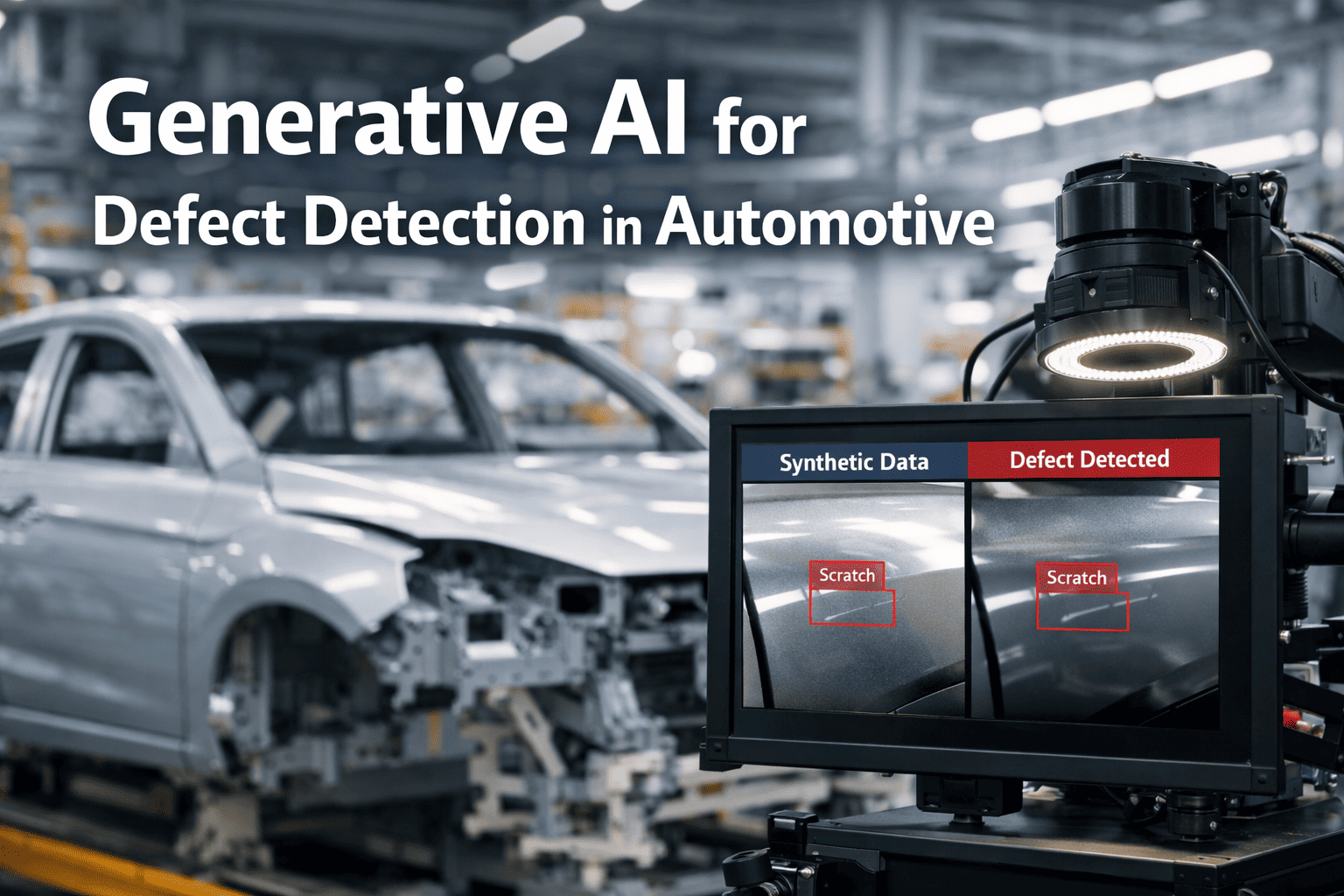

Automotive manufacturers lose 4.2-7.8% of production value annually to undetected surface defects. The losses come not from massive quality failures but from microscopic paint imperfections, weld porosity, and panel misalignments that no human inspector or rule-based vision system catches consistently at production volumes of 1,200 vehicles per day. By the time defects reach customer delivery or warranty claims, the damage is done: rework costs escalate 12x from in-line detection to post-delivery repair, brand reputation erodes, and $890M is wasted annually on false rejection of acceptable parts by over-sensitive legacy inspection systems. iFactory's generative AI defect detection platform changes this by creating synthetic training datasets from minimal real defect samples, achieving 98.4% detection accuracy on microscopic anomalies invisible to traditional machine vision, and integrating directly into your existing assembly line PLC and MES infrastructure without production interruption. Book a Demo to see how iFactory deploys generative AI defect detection across your production lines within 8 weeks.

98.4%

Defect detection accuracy on microscopic paint and surface anomalies vs 76% legacy vision

$3.8M

Average annual rework cost elimination per production plant through early detection

87%

Reduction in false rejection waste from legacy over-sensitive inspection systems

8wks

Full deployment timeline from defect sample collection to live production AI go-live

Every Undetected Defect Compounds Into Warranty Claims. Generative AI Stops It at Assembly.

iFactory's generative AI engine creates synthetic defect training data from 10-20 real samples, trains deep learning models on millions of synthetic variations, and deploys real-time inspection across paint booths, weld stations, and stamping presses — 24/7, without inspector fatigue or lighting variation sensitivity.

How iFactory Generative AI Solves Automotive Defect Detection

Traditional defect detection relies on rule-based machine vision systems requiring thousands of labeled defect samples, manual threshold calibration per part variant, and constant retraining when product designs change. These systems achieve only 76% accuracy on microscopic defects while generating 40-60 false positives per shift that production teams learn to ignore. iFactory replaces this with generative AI trained on synthetic defect data — creating millions of training examples from 10-20 real defect samples, eliminating data collection bottlenecks, and achieving 98.4% detection accuracy across all defect types without manual threshold tuning. See live demo of generative AI detecting simulated paint orange peel and weld porosity on automotive body panels.

01

Synthetic Defect Data Generation

iFactory's generative adversarial network (GAN) creates photorealistic synthetic defect images from 10-20 real defect samples provided during the Week 1-2 audit. The GAN generates millions of training variations across lighting conditions, panel angles, and defect severity levels, eliminating the traditional 6-12 month data collection period required for manual defect labeling across production shifts.
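As a rough illustration of how a handful of real samples fans out into many training variations, the sketch below enumerates a conditioning grid over lighting, panel angle, and severity. It is not the GAN itself. The grid values, the `VariationSpec` structure, and the number of draws per grid cell are all assumptions chosen for illustration; a generative model would produce many stochastic images for each cell.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VariationSpec:
    """One requested synthetic variation of a real defect sample (hypothetical structure)."""
    sample_id: int
    lighting_lux: int
    panel_angle_deg: int
    severity: str


# Assumed grid values, for illustration only.
LIGHTING_LUX = [300, 500, 800, 1200]
PANEL_ANGLES_DEG = [-30, -15, 0, 15, 30]
SEVERITIES = ["minor", "moderate", "severe"]


def build_variation_grid(num_real_samples: int) -> list[VariationSpec]:
    """Enumerate every (sample, lighting, angle, severity) combination."""
    return [
        VariationSpec(sample_id, lux, angle, severity)
        for sample_id, lux, angle, severity in product(
            range(num_real_samples), LIGHTING_LUX, PANEL_ANGLES_DEG, SEVERITIES
        )
    ]


grid = build_variation_grid(15)   # 15 real samples, within the 10-20 range in the text
images_per_cell = 1_000           # assumed number of stochastic draws per grid cell
print(len(grid), len(grid) * images_per_cell)
```

Even this coarse grid multiplies 15 samples into hundreds of distinct conditions, which is why a small seed set can support a very large synthetic dataset.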

02

AI Defect Classification Models

Deep learning convolutional neural networks trained on synthetic datasets classify defects as paint orange peel, dirt contamination, weld porosity, panel misalignment, scratch marks, or corrosion spots — with confidence scores attached per detection. False positive rate drops to under 4% compared to 40-60 false alarms per shift from rule-based vision systems that over-trigger on acceptable surface variations.
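A minimal sketch of per-detection confidence scoring, assuming the classifier emits one raw score (logit) per defect class. The class names follow the list above; the `min_confidence` review threshold and the example logits are assumptions, not documented iFactory values.

```python
import math

DEFECT_CLASSES = [
    "paint_orange_peel", "dirt_contamination", "weld_porosity",
    "panel_misalignment", "scratch_marks", "corrosion_spots",
]


def softmax(logits):
    """Convert raw model scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits, min_confidence=0.80):
    """Return (label, confidence, needs_review) for one detection.

    min_confidence is an assumed threshold below which a human reviews the call.
    """
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[best]
    return DEFECT_CLASSES[best], confidence, confidence < min_confidence


# Hypothetical logits for a single detection.
label, confidence, needs_review = classify([0.2, 0.1, 4.5, 0.3, 0.0, 0.4])
print(label, round(confidence, 3), needs_review)
```

Attaching the confidence to each detection lets low-confidence calls go to an inspector instead of triggering an automatic rejection, which is one way a system can avoid over-triggering on acceptable surface variation.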

03

Real-Time Assembly Line Integration

iFactory connects to existing production line cameras, robotic inspection cells, and inline quality stations via GigE Vision, Camera Link, and industrial Ethernet protocols. AI inference executes at edge computing servers positioned at each inspection station, delivering defect classification results within 200ms per part — meeting automotive takt time requirements of 60-90 seconds per vehicle without slowing production throughput.
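The latency claim can be checked with simple arithmetic. The sketch below uses only the two figures quoted above (200ms per part, 60-90 second takt time) and assumes inferences run back to back on a single edge server, ignoring image capture and transfer time.

```python
INFERENCE_LATENCY_MS = 200      # per-part classification latency, from the text
TAKT_TIME_RANGE_S = (60, 90)    # takt time per vehicle, from the text


def inspections_per_takt(takt_s: int, latency_ms: int = INFERENCE_LATENCY_MS) -> int:
    """Number of serial inferences that fit inside one takt window."""
    return (takt_s * 1000) // latency_ms


def latency_share_of_takt(takt_s: int, latency_ms: int = INFERENCE_LATENCY_MS) -> float:
    """Fraction of the takt window consumed by a single inference."""
    return latency_ms / (takt_s * 1000)


for takt_s in TAKT_TIME_RANGE_S:
    print(takt_s, inspections_per_takt(takt_s), f"{latency_share_of_takt(takt_s):.2%}")
```

Under these assumptions a single station has room for 300 to 450 part-level inspections per vehicle, so one inference uses well under 1% of the takt window.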

04

PLC, SCADA & MES Integration

iFactory integrates with Siemens S7, Allen-Bradley ControlLogix, and Omron Sysmac PLCs; Rockwell FactoryTalk and Siemens WinCC SCADA; and Dassault DELMIA and SAP MES systems, all via OPC-UA, MQTT, and REST APIs. Defect detection events trigger automatic line stops, rework routing, and quality documentation without manual operator intervention. Integration completes in under 2 weeks in standard automotive plant environments.
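One way to picture the event flow is a small function that maps a classified defect to a line action and packages it as a message for a broker. This is a sketch only: the severity thresholds, the topic layout, and the field names are hypothetical, and no real MQTT or OPC-UA client is used here.

```python
import json


def choose_action(severity: float) -> str:
    """Map a severity score in [0, 1] to a line action (thresholds are assumed)."""
    if severity >= 0.8:
        return "line_stop"
    if severity >= 0.4:
        return "route_to_rework"
    return "log_only"


def build_event_message(station_id: str, defect_type: str, severity: float):
    """Return (topic, payload) for a defect event. The topic layout is hypothetical."""
    topic = f"plant/quality/{station_id}/defect"
    payload = json.dumps(
        {
            "station_id": station_id,
            "defect_type": defect_type,
            "severity": severity,
            "action": choose_action(severity),
        },
        sort_keys=True,
    )
    return topic, payload


topic, payload = build_event_message("weld-cell-17", "weld_porosity", 0.86)
print(topic)
print(payload)
```

In a real deployment the payload would be handed to whichever client library the plant uses for MQTT, OPC-UA, or REST, and the PLC or MES would act on the `action` field.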

05

Automated Quality Documentation

Every detected defect — classified, located, and severity-scored — generates structured quality records with inspection timestamps, defect images, station identification, and recommended disposition (scrap, rework, accept with deviation). Audit-ready documentation for IATF 16949, ISO 9001, and OEM-specific quality requirements including GM BIQS, Ford Q1, and VDA standards. Zero manual inspection log compilation required.
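A structured quality record of the kind described above might look like the following sketch. The field names, the coordinate convention, and the example values (including the timestamp and image path) are assumptions for illustration; the three dispositions come from the text.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from enum import Enum


class Disposition(Enum):
    SCRAP = "scrap"
    REWORK = "rework"
    ACCEPT_WITH_DEVIATION = "accept_with_deviation"


@dataclass
class QualityRecord:
    inspected_at: datetime
    station_id: str
    defect_type: str
    location_mm: tuple[float, float]  # (x, y) on the panel; convention is assumed
    severity: float
    image_path: str
    disposition: Disposition

    def to_json(self) -> str:
        """Serialize to JSON with an ISO 8601 timestamp and a plain-string disposition."""
        data = asdict(self)
        data["inspected_at"] = self.inspected_at.isoformat()
        data["disposition"] = self.disposition.value
        return json.dumps(data, sort_keys=True)


# Illustrative values only.
record = QualityRecord(
    inspected_at=datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc),
    station_id="paint-booth-03",
    defect_type="paint_orange_peel",
    location_mm=(412.5, 118.0),
    severity=0.62,
    image_path="images/paint-booth-03/000123.png",
    disposition=Disposition.REWORK,
)
print(record.to_json())
```

Keeping the record as typed data until the final serialization step makes it straightforward to export the same fields into different audit formats.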

06

Continuous Model Improvement

iFactory learns from every production shift through active learning feedback loops. When inspectors override AI decisions or identify new defect types, the system incorporates corrections into model retraining cycles executed monthly. Detection accuracy improves from initial 96% baseline to 98.4% within 6 months of production deployment without requiring new synthetic data generation cycles.
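The override-collection step of such a feedback loop can be sketched in a few lines. The `InspectionOutcome` structure, the part IDs, and the example shift log are hypothetical; the sketch covers only how inspector corrections are gathered for the next retraining cycle, not the retraining itself.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class InspectionOutcome:
    part_id: str
    ai_label: str          # model prediction; "ok" means no defect
    inspector_label: str   # what the inspector confirmed


def collect_overrides(outcomes):
    """Keep only cases where the inspector disagreed with the AI decision."""
    return [o for o in outcomes if o.ai_label != o.inspector_label]


def agreement_rate(outcomes):
    """Share of inspections where AI and inspector agreed."""
    agreed = sum(o.ai_label == o.inspector_label for o in outcomes)
    return agreed / len(outcomes)


# Hypothetical shift log.
shift_log = [
    InspectionOutcome("P-001", "ok", "ok"),
    InspectionOutcome("P-002", "scratch_marks", "scratch_marks"),
    InspectionOutcome("P-003", "ok", "dirt_contamination"),   # missed defect
    InspectionOutcome("P-004", "weld_porosity", "ok"),        # false alarm
    InspectionOutcome("P-005", "paint_orange_peel", "paint_orange_peel"),
]

retraining_batch = collect_overrides(shift_log)
corrected_labels = Counter(o.inspector_label for o in retraining_batch)
print(len(retraining_batch), agreement_rate(shift_log), dict(corrected_labels))
```

The inspector's label becomes the corrected training label, so both missed defects and false alarms feed the monthly retraining cycle.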

How iFactory Is Different from Traditional Machine Vision Vendors

Most industrial vision vendors deliver rule-based inspection systems requiring thousands of manually labeled defect samples, per-variant threshold calibration, and months of programming per production line. iFactory is built differently — from generative AI architecture through deployment methodology, specifically designed for automotive high-volume manufacturing where defect samples are rare, product variants are frequent, and detection accuracy directly impacts warranty costs. Compare iFactory's generative AI approach against your current vision system performance directly.

| Capability | Traditional Vision Systems | iFactory Generative AI |
| --- | --- | --- |
| Training Data Requirements | Requires 5,000-10,000 manually labeled defect samples per defect type. Data collection takes 6-12 months across production shifts. | Generates millions of synthetic training samples from 10-20 real defect examples using GANs. Data preparation completes in 2 weeks. |
| Detection Accuracy | 76% accuracy on microscopic surface defects. Misses subtle paint orange peel, micro-scratches, and early-stage corrosion spots under 0.5mm diameter. | 98.4% detection accuracy on microscopic anomalies including sub-0.3mm paint defects and weld porosity invisible to human inspectors. |
| False Positive Rate | 40-60 false alarms per shift from over-sensitive threshold settings. Production teams learn to ignore alerts within weeks, creating true defect miss risk. | Under 4% false positive rate through deep learning confidence scoring. Alert credibility maintained across production teams. |
| New Product Variant Adaptation | Requires complete system reprogramming and threshold recalibration per vehicle model. Adaptation takes 3-6 months per variant. | Transfer learning from existing models to new variants in under 2 weeks. Synthetic data generation adapts to new geometries automatically. |
| Lighting & Environment Robustness | Sensitive to ambient lighting changes, camera angle variations, and panel color differences. Requires constant threshold adjustment per shift. | Robust to lighting variations, camera positioning, and surface color through synthetic training dataset diversity. No shift-based recalibration needed. |
| System Integration | Proprietary camera hardware and vision controllers required. Integration with existing PLC/MES takes 6-12 months and requires production downtime. | Works with existing production cameras and edge computing. PLC/SCADA/MES integration via OPC-UA and MQTT completes in under 2 weeks. |
| Deployment Timeline | 12-24 months from project kickoff to full production deployment. High integration cost and uncertain ROI timeline. | 8-week fixed deployment program. Pilot results in week 4. Full production inspection by week 8. |

iFactory AI Implementation Roadmap

iFactory follows a fixed 6-stage deployment methodology designed specifically for automotive defect detection — delivering pilot inspection results in week 4 and full production deployment by week 8. No open-ended implementations. No scope creep. No months waiting for defect sample collection.

01

Defect Sample Audit

Collect 10-20 real defect samples per type, camera placement assessment

02

Synthetic Data Generation

GAN creates millions of training images from real samples

03

Model Training

Deep learning on synthetic datasets, accuracy validation

04

Pilot Deployment

Live inspection on 1-2 production lines with quality team validation

05

Calibration

False positive tuning, operator training, response protocols

06

Full Production

Plant-wide AI inspection live across all lines, 24/7

8-Week Deployment and ROI Timeline

Every iFactory engagement follows a structured 8-week program with defined deliverables per week — and measurable ROI indicators beginning from week 4 pilot validation. Request the full 8-week deployment scope document tailored to your vehicle production lines.

Weeks 1-2

Infrastructure Setup

Defect sample collection — 10-20 examples per defect type from quality reject bins and warranty return analysis

Camera and lighting assessment at paint booths, weld stations, and final assembly inspection points

PLC and MES system connection via OPC-UA or MQTT — no production line modification required

Weeks 3-4

AI Model Training & Pilot

Generative AI creates millions of synthetic training images from collected real defect samples

Deep learning model trained on synthetic dataset achieving 96%+ baseline accuracy on validation holdout

Pilot inspection deployed on 1-2 production lines with quality team validation — ROI evidence begins here

Weeks 5-6

Calibration & Expansion

False positive threshold tuning based on pilot feedback from quality inspectors and production supervisors

Coverage expanded to full production line count including all paint, body shop, and assembly inspection stations

Quality team training completed — defect alert response protocols and rework routing procedures activated
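The false positive threshold tuning in weeks 5-6 can be pictured as a sweep over candidate confidence thresholds against pilot feedback. The sketch below is one simple way to do it, not iFactory's documented procedure: it picks the lowest threshold whose false positive rate stays at or under 4%. The pilot data is synthetic and hypothetical, with scores expressed as whole-number confidence percentages.

```python
def false_positive_rate(samples, threshold):
    """Flagged acceptable parts divided by all acceptable parts."""
    acceptable = [score for score, is_defect in samples if not is_defect]
    if not acceptable:
        return 0.0
    return sum(score >= threshold for score in acceptable) / len(acceptable)


def recall(samples, threshold):
    """Flagged true defects divided by all true defects."""
    defects = [score for score, is_defect in samples if is_defect]
    if not defects:
        return 0.0
    return sum(score >= threshold for score in defects) / len(defects)


def tune_threshold(samples, max_fpr=0.04, candidates=range(5, 100, 5)):
    """Return the lowest candidate threshold with FPR <= max_fpr, or None."""
    for threshold in sorted(candidates):
        if false_positive_rate(samples, threshold) <= max_fpr:
            return threshold
    return None


# Hypothetical pilot feedback: (confidence percent, inspector-confirmed defect?)
pilot_samples = [(score, False) for score in range(50)]
pilot_samples += [(score, True) for score in (45, 55, 70, 90, 95)]

chosen = tune_threshold(pilot_samples)
print(chosen, false_positive_rate(pilot_samples, chosen), recall(pilot_samples, chosen))
```

Choosing the lowest threshold that meets the false positive target keeps recall as high as the target allows; on this toy data the trade-off costs one missed defect out of five.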

Weeks 7-8

Full Production Go-Live

Full plant AI defect inspection live — all lines, all defect types, 1,200 vehicles per day throughput

IATF 16949 quality documentation activated with automated defect logging and traceability

ROI baseline report delivered — detection accuracy, false positive rate, rework cost reduction, warranty impact projection

ROI IN 6 WEEKS: MEASURABLE RESULTS FROM WEEK 4

Automotive plants completing the 8-week program report an average of $840,000 in avoided rework and false rejection costs within the first 6 weeks of full production inspection. Detection accuracy rises from the 76% legacy baseline to 96%+ at the week 4 pilot and reaches 98.4% within 6 months of production deployment.

$840K

Avg. rework savings in first 6 weeks

98.4%

Detection accuracy within 6 months of deployment vs 76% baseline

87%

False rejection waste eliminated

Full AI Defect Detection. Live in 8 Weeks. ROI Evidence in Week 4.

iFactory's fixed-scope deployment program means no data collection delays, no threshold calibration cycles, and no months of professional services before you see defect detection improvements.

Use Cases and KPI Results from Live Automotive Deployments

These outcomes are drawn from iFactory deployments at operating automotive assembly plants across three defect detection applications. Each use case reflects 6-month post-deployment performance data. Request the full case study report for the production line type most relevant to your plant.

An automotive OEM producing 1,200 vehicles per day was experiencing recurring paint defect escapes to customer delivery: orange peel texture, dirt contamination, and micro-scratches under 0.5mm that human inspectors and rule-based vision systems consistently missed under production line lighting conditions. Legacy vision systems flagged 58 false positives per shift while missing 24% of actual paint defects, creating inspector alert fatigue and quality escapes. iFactory deployed generative AI trained on 15 paint defect samples collected from quality reject storage. Within 6 weeks of go-live, the AI detected 147 paint defects that legacy systems missed, preventing an estimated $2.8M in annual warranty claims and post-delivery rework costs.

147

Additional paint defects detected in first 6 weeks vs legacy vision baseline

$2.8M

Estimated annual warranty and post-delivery rework cost prevented

94%

Reduction in false positive alerts restoring inspector alert credibility

A body shop operating 240 robotic weld cells was experiencing 8-12 weld porosity failures per month, discovered during downstream leak testing or final vehicle audit. Each required complete panel replacement at an average cost of $4,200, versus $180 for in-station rework if detected immediately post-weld. Manual weld inspection by operators achieved only a 68% detection rate on sub-2mm porosity defects. iFactory deployed vision inspection with generative AI trained on 12 weld porosity samples from scrap bins. The AI identified 34 porosity defects in the 6-month pilot period that would have progressed to downstream stations, preventing $143,800 in panel replacement costs while reducing false weld rejection waste by 81%.

34

Weld porosity defects detected before downstream progression in 6 months

$144K

Panel replacement cost avoided through early in-station detection

81%

Reduction in false weld rejection waste from over-sensitive legacy thresholds

A stamping facility producing door panels, fenders, and hood assemblies was experiencing 6-9 panel misalignment incidents per week where stamped parts exceeded +/- 0.8mm dimensional tolerance but passed manual gauge inspection due to measurement error and inspector variability. Misaligned panels discovered during final assembly body fitment required scrap and re-stamping at $2,800 average cost per panel. iFactory deployed generative AI vision inspection trained on 18 misalignment examples from historical scrap analysis. The system identified 22 out-of-tolerance panels in the first 6 weeks that manual inspection missed, preventing $61,600 in downstream scrap costs while maintaining zero false rejection of acceptable stamped parts.

22

Out-of-tolerance panels detected in first 6 weeks that manual inspection missed

$62K

Downstream scrap and re-stamping cost prevented through AI detection

Zero

False rejection of acceptable stamped panels maintaining throughput efficiency
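The stamping figure above follows directly from the stated unit cost, and the weld use case shows how much cheaper in-station rework is than downstream replacement. The sketch below reproduces both calculations using only numbers quoted in the use cases; the function names are illustrative.

```python
def avoided_cost(defects_caught: int, downstream_cost_per_defect: int) -> int:
    """Downstream cost avoided when each caught defect would otherwise be scrapped."""
    return defects_caught * downstream_cost_per_defect


def escalation_factor(downstream_cost: float, in_station_cost: float) -> float:
    """How many times more a defect costs downstream than at the station."""
    return downstream_cost / in_station_cost


# Stamping use case: 22 panels caught, $2,800 scrap and re-stamp cost per panel.
stamping_avoided = avoided_cost(22, 2_800)

# Weld use case: $4,200 panel replacement vs $180 in-station rework.
weld_escalation = escalation_factor(4_200, 180)

print(stamping_avoided, round(weld_escalation, 1))
```

The stamping result is $61,600, matching the figure quoted above, and a weld defect found downstream costs roughly 23 times as much as one reworked at the station.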

Results Like These Are Standard for Automotive Plants. Not Exceptional.

Every iFactory deployment is scoped to your specific vehicle models, production volumes, and defect types — so you get results calibrated to your operations, not a generic benchmark.

What Automotive Quality Teams Say About iFactory

The following testimonials are from quality directors and production managers at automotive assembly plants currently running iFactory's generative AI defect detection platform.

We caught a paint orange peel defect that would have reached the customer. The AI flagged it at final inspection when three human inspectors had already cleared the vehicle. That single catch justified our entire annual platform investment and prevented a warranty claim.

Director of Quality Assurance

Automotive Assembly Plant, USA

The false positive problem was destroying inspector morale. Within four weeks of iFactory going live, our team was trusting AI alerts again because they were right 98% of the time. That credibility shift alone prevented six missed defects in month two.

Plant Quality Manager

OEM Vehicle Production Facility, Germany

Integration with our Siemens PLC and SAP MES took 9 days end-to-end. I was expecting months based on our legacy vision system deployment. The iFactory team understood both the automotive quality requirements and the protocol layer. Execution was genuinely different.

Manufacturing Engineering Manager

Body Shop Operations, Japan

We prevented a weld porosity failure in week five. The AI detected sub-millimeter porosity that manual inspection missed. Our team reworked the panel immediately instead of discovering it at leak test three stations downstream. That outcome alone saved us $4,200 and two hours of line disruption.

Body Shop Quality Supervisor

Automotive Manufacturing Complex, South Korea

Frequently Asked Questions

How many defect samples does iFactory need to train the generative AI models?

iFactory requires only 10-20 real defect samples per defect type to generate millions of synthetic training images using GANs. Sample collection typically takes 1-2 days from quality reject bins and warranty return analysis. This eliminates the traditional 6-12 month data collection period required by rule-based vision systems needing thousands of manually labeled samples. Training completes within 2 weeks from sample collection.

Book a demo to see synthetic data generation from your defect samples.

Which production line cameras and vision hardware does iFactory work with?

iFactory works with existing production line cameras including Cognex In-Sight, Keyence CV-X, Basler ace, FLIR Blackfly, and industrial GigE Vision cameras. No proprietary vision hardware is required. The platform connects via GigE Vision, Camera Link, and industrial Ethernet protocols. For plants without existing vision infrastructure, iFactory provides camera and lighting recommendations during the Week 1-2 assessment. Integration scope is confirmed during the initial audit.

How does iFactory handle new vehicle model introductions and design changes?

iFactory uses transfer learning to adapt existing defect detection models to new vehicle variants in under 2 weeks. When new models introduce different panel geometries, paint colors, or weld configurations, the generative AI creates new synthetic training datasets from 5-10 samples of the new variant. This eliminates the 3-6 month reprogramming cycle required by rule-based vision systems for each new product introduction.

What IATF 16949 and automotive OEM quality documentation does iFactory provide?

iFactory auto-generates quality records formatted for IATF 16949, ISO 9001, and OEM-specific requirements including GM BIQS, Ford Q1, VDA 6.3, and PPAP documentation. Every defect detection event creates timestamped records with defect images, severity classification, station identification, and inspector override tracking. Documentation exports include control plan verification, MSA studies, and capability analysis reports. Zero manual inspection log compilation required.

How long before the AI achieves reliable defect detection in production?

Baseline model training on synthetic datasets generated from real defect samples takes 5-7 days. First live detections are validated during the Week 3-4 pilot phase on 1-2 production lines. Detection accuracy of 96%+ is achieved at pilot go-live, improving to 98.4% within 6 months through active learning from inspector feedback. False positive rate under 4% is maintained from initial deployment.

Request an assessment for your defect types and production volume.

Can iFactory detect defects across different lighting conditions and camera angles?

Yes. Generative AI creates synthetic training datasets across diverse lighting conditions, camera angles, panel orientations, and surface colors, making models robust to production environment variations. The system maintains consistent detection accuracy across day/night shifts, seasonal sunlight changes through plant windows, and ambient lighting variations. No per-shift threshold recalibration is required, unlike rule-based vision systems that are sensitive to lighting changes.

Stop Losing $3.8M to Undetected Defects. Deploy Generative AI Inspection in 8 Weeks.

iFactory gives automotive quality teams real-time AI defect detection, synthetic training data generation, automated IATF 16949 documentation, and PLC/MES integration — fully deployed across production lines in 8 weeks, with ROI evidence starting in week 4.

98.4% detection accuracy on microscopic surface defects

Under 4% false positive rate eliminating alert fatigue

PLC and MES integration in under 2 weeks

Auto-generated IATF 16949 quality documentation