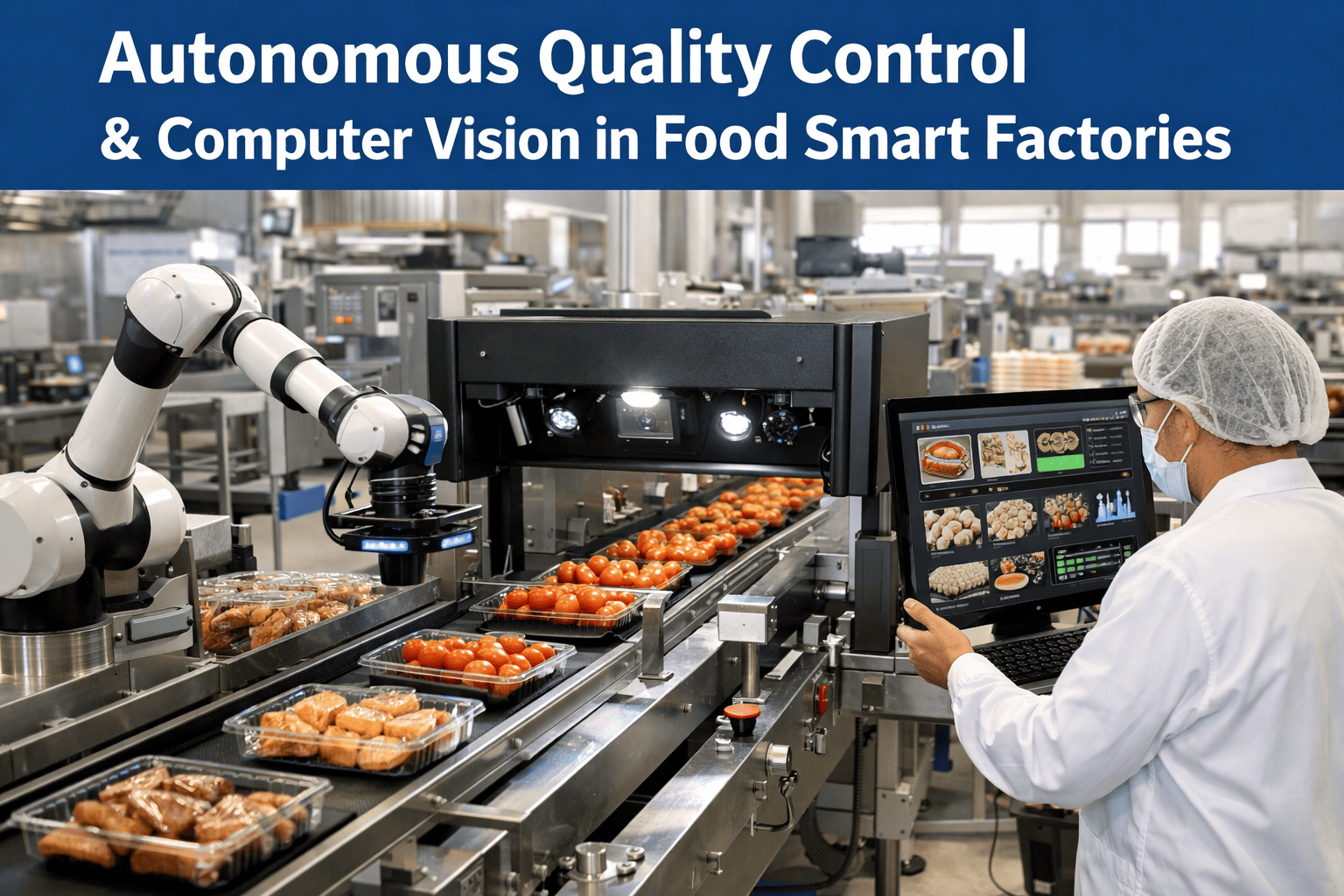

When a contaminated food product reaches a consumer, it doesn't just cost a recall — it costs lives, brand trust, and years of regulatory credibility. Unlike a manufacturing defect in electronics, a missed foreign object or undetected pathogen in food carries immediate human health consequences. That single reality is why 82% of food safety directors, when surveyed in 2024, said autonomous visual inspection was either their current deployment or their top planned investment for 2025. The food manufacturing sector operates under some of the strictest quality tolerances in industry: sub-millimeter foreign object detection, real-time color and texture grading, microbial contamination indicators, and packaging integrity verification across millions of units per shift. Relying on human inspectors alone for that throughput is a risk a growing number of food safety managers are no longer willing to accept.

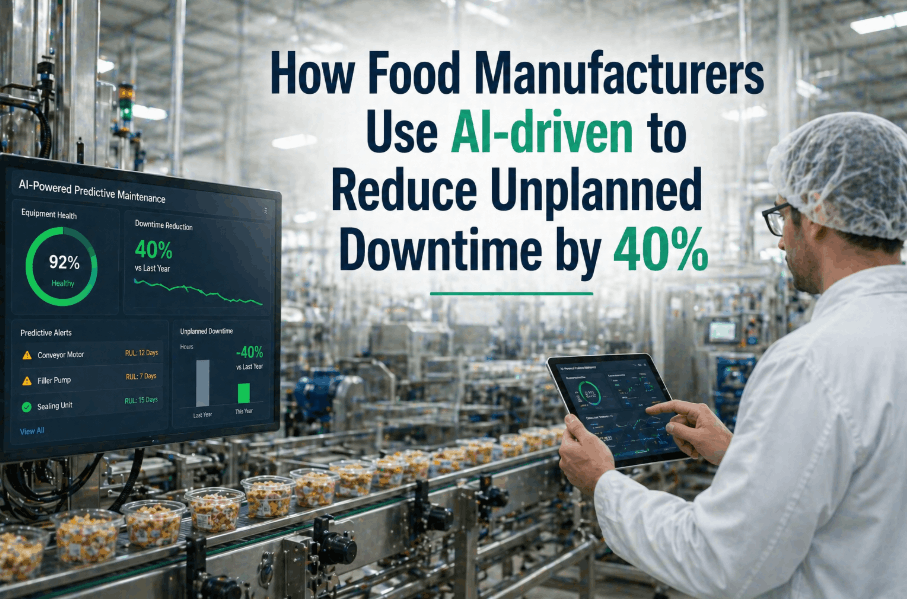

Autonomous Quality Control & Computer Vision in Food Smart Factories

A complete guide to deploying AI-powered visual inspection and autonomous quality systems where food safety demands zero tolerance — at production speed, with full traceability, and without human fatigue compromising detection rates.

The Quality Failures Food Manufacturers Cannot Afford to Miss

Before examining the technology, it's worth being precise about what autonomous quality control systems are protecting against. Food production lines generate multiple categories of defects that carry extraordinary consumer safety, regulatory, and brand reputation consequences.

Foreign Object Contamination

Metal fragments, plastic shards, glass particles, bone splinters, and insect matter that evade traditional metal detectors and X-ray systems. Computer vision detects irregular shapes, translucent contaminants, and sub-millimeter debris that conventional inspection technologies consistently miss at production speed.

Color & Texture Anomalies

Discoloration indicating spoilage, bruising, mold onset, or improper cooking temperatures. These visual indicators are the earliest signs of microbial contamination or process deviation — detectable by computer vision hours before traditional lab testing confirms a problem.

Packaging Integrity Failures

Seal breaches, label misalignment, missing allergen warnings, incorrect expiration dates, and tamper-evident closure failures. A single mislabeled allergen can trigger anaphylaxis in consumers and lead to multimillion-dollar recalls under FDA enforcement actions.

Portion & Weight Deviations

Underfilled packages violating net weight regulations, overfilled units creating margin erosion, and inconsistent portion sizes that damage brand consistency. Vision-based volumetric analysis detects deviations that traditional checkweighers miss on irregularly shaped products.

Hygiene & Sanitation Violations

Residue buildup on conveyor surfaces, PPE non-compliance by line workers, cross-contamination between allergen zones, and cleaning verification failures. Computer vision provides continuous environmental monitoring that periodic manual audits cannot match.

Process Deviation Detection

Temperature excursions visible through product appearance changes, mixing inconsistencies evident in texture variation, and fermentation anomalies detectable through color shift analysis. These process indicators allow intervention before defective batches reach downstream packaging.

Why Manual Quality Inspection Creates Structural Risk for Food Manufacturing

Human inspectors bring judgment and adaptability, but their physiology creates inspection gaps that are impossible to fully mitigate in high-speed food production environments.

Fatigue-Driven Detection Decay

Human visual acuity degrades by 20-30% after just 30 minutes of continuous inspection. On 8-hour shifts inspecting 200+ units per minute, detection rates for subtle defects like hairline seal breaches or early-stage discoloration drop below acceptable thresholds — precisely when cumulative contamination risk is highest.

Subjective Grading Inconsistency

Two inspectors grading the same product will disagree 15-25% of the time on borderline quality decisions. This subjectivity creates inconsistent customer experiences, unpredictable yield calculations, and audit vulnerabilities when regulators question the basis for pass/fail determinations.

Throughput Bottleneck

Manual inspection stations limit line speeds to what human eyes can process — typically 60-120 units per minute for detailed visual checks. As production demands increase, manufacturers face an impossible choice between inspection thoroughness and output volume, often compromising safety for speed.

Incomplete Traceability

Manual inspection generates subjective notes and batch-level records that lack the granular, unit-level traceability modern food safety regulations require. When a recall occurs, the absence of per-unit inspection data forces broader recall scopes — multiplying cost, waste, and consumer risk exposure.
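To make the recall-scope point concrete, here is a minimal sketch of what unit-level inspection records allow. The `UnitInspection` record, its field names, and the `recall_scope` helper are hypothetical illustrations, not an iFactory schema. The idea is that a timestamped record per unit lets a recall target only the units inspected during a suspect window rather than the whole batch.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class UnitInspection:
    """Hypothetical per-unit inspection record (field names are assumptions)."""
    unit_id: str
    batch_id: str
    inspected_at: datetime
    passed: bool


def recall_scope(records, batch_id, start, end):
    """Return IDs of units from one batch inspected within [start, end]."""
    return [
        r.unit_id
        for r in records
        if r.batch_id == batch_id and start <= r.inspected_at <= end
    ]


# Illustrative data: 1,000 units inspected one second apart in a single batch.
t0 = datetime(2025, 1, 6, 8, 0, 0)
records = [
    UnitInspection(f"U{i:04d}", "B-17", t0 + timedelta(seconds=i), True)
    for i in range(1000)
]

# Suppose a process fault is traced to a 100-second window within the batch.
scoped = recall_scope(
    records, "B-17", t0 + timedelta(seconds=100), t0 + timedelta(seconds=199)
)
print(len(scoped), "of", len(records), "units fall inside the recall window")
```

With batch-level records only, all 1,000 units in this example would have to be treated as suspect.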

Deploy iFactory Vision AI and Achieve Zero-Defect Food Production

iFactory's computer vision quality control gives food manufacturers real-time defect detection at full production speed — every unit inspected, every anomaly captured, every decision traceable.

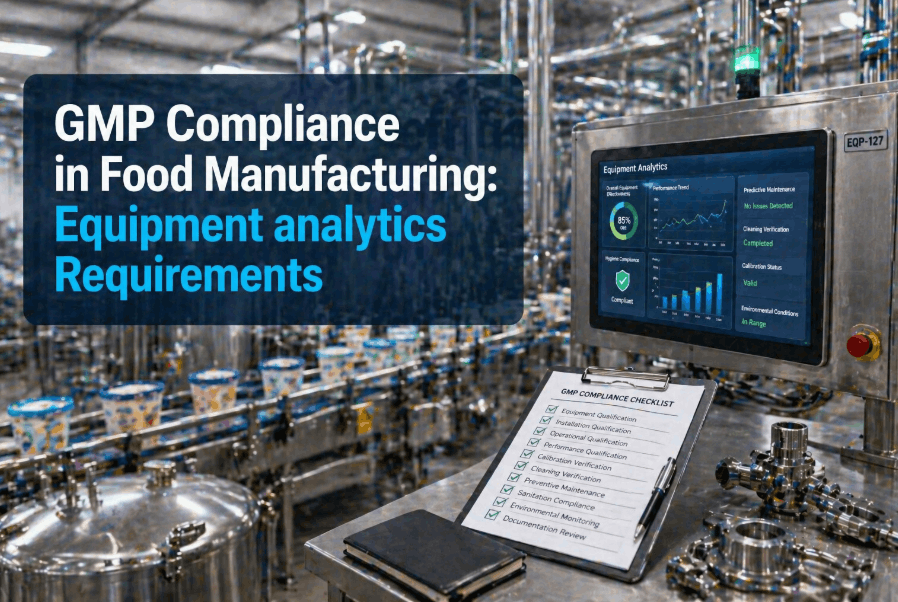

Detect foreign objects, color anomalies, packaging failures, and process deviations using deep learning models trained specifically for food manufacturing environments. Full integration with your existing production lines, SCADA systems, and ERP platforms — delivering FSMA, HACCP, and BRC compliance documentation automatically from a single AI-powered quality platform.

Computer Vision QC Architecture: Four Deployment Models for Food Safety

Not all vision-based quality systems are equivalent. Food manufacturers can choose from four distinct architectural approaches based on their defect detection requirements, production line complexity, and regulatory compliance needs.

Inline Full-Spectrum Vision Inspection

High-resolution cameras with visible, near-infrared, and hyperspectral imaging deployed at critical control points along the production line. Every unit is captured, analyzed, and classified in real time with automatic reject mechanisms. Ideal for high-value products where zero-defect tolerance is non-negotiable and every unit requires individual inspection documentation.

Statistical Vision Sampling with AI Escalation

Vision systems inspect a statistically significant sample from each batch, with AI models analyzing variance patterns to predict batch-wide quality. When anomaly rates exceed configurable thresholds, the system automatically escalates to 100% inline inspection. This model balances inspection cost with safety assurance for high-volume commodity products. Book a demo to see this adaptive inspection model for your production environment.
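The escalation logic described above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not the vendor's algorithm: the `SamplingController` class, the rolling window of 100 sampled units, the 2% threshold, and the 50-sample minimum are all hypothetical values chosen for the example.

```python
from collections import deque


class SamplingController:
    """Tracks a rolling defect rate and latches into full inspection."""

    def __init__(self, window=100, threshold=0.02, min_samples=50):
        self.results = deque(maxlen=window)  # most recent sampled verdicts
        self.threshold = threshold           # escalate when the rate exceeds this
        self.min_samples = min_samples       # do not escalate on tiny samples
        self.mode = "sampling"

    def defect_rate(self):
        """Share of defective units in the current rolling window."""
        return sum(self.results) / len(self.results) if self.results else 0.0

    def record(self, is_defect):
        """Record one sampled unit and return the current inspection mode."""
        self.results.append(1 if is_defect else 0)
        enough = len(self.results) >= self.min_samples
        if enough and self.defect_rate() > self.threshold:
            self.mode = "full_inspection"  # stays escalated until reset()
        return self.mode

    def reset(self):
        """Return to sampling, for example after a quality manager signs off."""
        self.results.clear()
        self.mode = "sampling"


controller = SamplingController()
for _ in range(100):
    controller.record(False)          # 100 clean sampled units
modes = [controller.record(True) for _ in range(3)]  # then three defects in a row
print(modes)
```

In this sketch the escalation is latching: once the controller switches to full inspection it stays there until someone calls `reset()`, which seems a reasonable default for a safety-critical line but is a design choice rather than something the source specifies.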

Multi-Point Process Vision Monitoring

Rather than inspecting finished products, vision systems monitor critical process parameters throughout the production workflow — mixing consistency, cooking color development, cooling uniformity, and sanitation compliance. Defects are prevented by catching process deviations before they produce defective output. This model generates continuous process control data for SPC charts and regulatory documentation.
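As a rough illustration of how vision-derived process readings could feed an SPC chart, the sketch below computes limits for an individuals control chart. The limits use the standard moving-range estimate of sigma (mean moving range divided by 1.128). The readings are a made-up "color index" series, and the function names are hypothetical.

```python
from statistics import mean


def individuals_limits(baseline):
    """Return (LCL, center, UCL) for an individuals chart.

    Sigma is estimated from the mean moving range divided by d2 = 1.128,
    the standard constant for moving ranges of two consecutive points.
    """
    center = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    sigma = mean(moving_ranges) / 1.128
    return center - 3 * sigma, center, center + 3 * sigma


def out_of_control(values, lcl, ucl):
    """Return the indices of readings that fall outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical baseline: a color index measured on in-control product.
baseline = [50.1, 49.8, 50.3, 50.0, 49.9, 50.2, 50.1, 49.7, 50.0, 50.2]
lcl, center, ucl = individuals_limits(baseline)

# New readings from the line; the third one drifts well above the baseline.
new_readings = [50.0, 50.4, 52.5, 49.9]
flagged = out_of_control(new_readings, lcl, ucl)
print(f"LCL={lcl:.2f} center={center:.2f} UCL={ucl:.2f} flagged={flagged}")
```

A production system would likely add run rules (for example, several consecutive points on one side of the center line) in addition to the simple limit check shown here.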

Edge AI Vision with Federated Learning

Lightweight vision inference models run directly on smart cameras at each inspection point. Each camera learns from its own defect patterns while contributing anonymized model improvements to a facility-wide federated learning network. No raw images leave the edge device — only model weight updates are shared. Schedule a consultation to see how iFactory supports edge vision deployments for multi-line food facilities.
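The "only model weight updates are shared" idea can be illustrated with sample-weighted federated averaging (often called FedAvg). The source does not say which aggregation rule iFactory uses, so treat this as one common technique shown under that assumption. The weight vectors are tiny made-up lists, and `federated_average` is a hypothetical helper.

```python
def federated_average(updates):
    """Combine per-camera weight deltas, weighted by local sample count.

    updates: list of (weight_delta, num_samples) pairs. Only these deltas
    are exchanged; no raw images appear anywhere in this step.
    """
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("at least one update must report samples")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for delta, n in updates:
        if len(delta) != dim:
            raise ValueError("all weight deltas must have the same length")
        for i, d in enumerate(delta):
            averaged[i] += d * n / total
    return averaged


# Hypothetical shared model with three weights.
global_weights = [0.5, -0.2, 0.1]

# Two cameras report deltas learned from 100 and 300 local samples.
camera_updates = [
    ([0.10, 0.00, -0.10], 100),
    ([0.30, 0.20, 0.10], 300),
]

avg_delta = federated_average(camera_updates)
new_global = [w + d for w, d in zip(global_weights, avg_delta)]
print(new_global)
```

Weighting by sample count means a camera that has seen more product has proportionally more influence on the shared model, which is the usual default but not the only option.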

Regulatory Frameworks That Drive Autonomous QC Adoption in Food Manufacturing

For many food manufacturers, autonomous quality control isn't a preference — it's a compliance requirement. Understanding which frameworks apply to your production is foundational to vision system architecture planning.

The Evolution of Quality Control in Food Manufacturing

Understanding the chronological development of quality inspection technology in food production reveals why autonomous computer vision has become the standard for modern food safety.

Manual Visual Inspection Era

Food quality relied entirely on trained human inspectors at the end of production lines. No automated detection existed, inspection was subjective, and documentation consisted of handwritten logs. Defect detection rates rarely exceeded 70% for subtle contamination.

Metal Detectors & X-Ray Systems

First widespread deployment of automated foreign object detection in food lines. Metal detectors caught ferrous contaminants reliably, but missed plastic, glass, bone, and organic foreign matter — leaving critical detection gaps in non-metallic contamination.

Early Machine Vision Adoption

Rule-based machine vision systems deployed for simple sorting tasks — color grading produce, measuring fill levels, and reading barcodes. These systems required extensive manual programming for each product type and failed on natural product variability common in food manufacturing.

Deep Learning Transforms Food Inspection

Convolutional neural networks enabled vision systems to learn defect patterns from examples rather than programmed rules. For the first time, vision systems could handle the natural variation in food products — different sizes, shapes, and colors — while maintaining detection accuracy above 95%.

Hyperspectral & Multispectral Vision

Beyond-visible-light imaging enabled detection of chemical composition, moisture content, and early-stage spoilage invisible to standard cameras. Food manufacturers could now detect the chemical signatures of contamination before any visible signs appeared.

Autonomous Quality Control Standard

In a 2024 survey, 82% of food safety directors said autonomous vision QC was either already deployed or their top planned investment. Real-time defect classification, predictive quality analytics, and fully automated reject-and-rework systems have become baseline requirements for food production safety.

Manual vs. Autonomous Vision Quality Control Comparison

A visual comparison of inspection coverage and risk exposure between human-dependent and AI-powered quality control systems in food manufacturing.

[Comparison graphic: manual inspection, "High-Risk Detection Gaps"; autonomous vision QC, "100% Inspection Coverage"]

How iFactory Delivers Autonomous Quality Control for Food Manufacturing

iFactory is engineered from the ground up to support autonomous visual inspection for food manufacturers who demand zero-defect production without sacrificing line speed or creating inspection bottlenecks. The platform provides full computer vision quality capability within an architecture that integrates with your existing production infrastructure. Book a demo to see autonomous food quality inspection in action.

The platform covers foreign object detection, color and texture grading, packaging integrity verification, fill-level measurement, label compliance checking, and hygiene monitoring — all running at production speed with sub-50ms inference times per unit. Model updates and detection algorithm improvements are delivered through validated packages that your quality team reviews before deployment.
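To put the sub-50ms figure in context, the arithmetic below relates inference time to line speed. It is a back-of-the-envelope sketch that assumes inference is the only per-unit cost and that units are processed one at a time per pipeline. Image capture, data transfer, and reject actuation would add to the real budget. The function names are hypothetical.

```python
def max_units_per_minute(inference_ms, pipelines=1):
    """Upper bound on throughput if each unit needs `inference_ms` of compute."""
    if inference_ms <= 0 or pipelines < 1:
        raise ValueError("inference_ms must be positive and pipelines >= 1")
    return pipelines * 60_000 / inference_ms


def per_unit_budget_ms(units_per_minute):
    """Time available per unit, in milliseconds, at a given line speed."""
    if units_per_minute <= 0:
        raise ValueError("units_per_minute must be positive")
    return 60_000 / units_per_minute


# A 50 ms inference time on a single serial pipeline.
print(max_units_per_minute(50))   # 1200.0 units per minute at most

# A line running 200 units per minute leaves 300 ms per unit in total.
print(per_unit_budget_ms(200))    # 300.0
```

Under these assumptions, a 50 ms inference step uses about one sixth of the per-unit time available at 200 units per minute, leaving the remainder for capture, communication, and the reject mechanism.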

For food manufacturers with multiple production facilities, iFactory supports a federated quality architecture where each site maintains its own trained vision models while sharing anonymized defect pattern intelligence across the organization. Critically, raw production images remain at each facility — no centralized image database is created, protecting proprietary product formulations and process IP from exposure. Schedule a quality consultation to discuss multi-site vision QC deployment strategies.

Quantifying the Impact: Autonomous Vision QC Outcomes in Food Manufacturing

Data from food production facilities that have transitioned from manual to autonomous vision quality control reveals measurable improvements in safety, efficiency, and regulatory compliance.

Ready to Achieve Zero-Defect Food Production with Vision AI?

Speak with an iFactory quality specialist about autonomous inspection deployment tailored to your production line's safety and throughput requirements.

Whether you need full-spectrum inline inspection for high-value products, adaptive statistical sampling for commodity lines, or multi-point process vision monitoring, iFactory adapts to the quality architecture your production requires. Detect every foreign object, grade every unit, and document every inspection automatically while running at full production speed.

Frequently Asked Questions

How does computer vision handle the natural variation in food products?

Unlike rule-based machine vision that requires exact specifications, iFactory's deep learning models are trained on thousands of examples of acceptable product variation — different sizes, shapes, colors, and textures within your quality standards. The AI learns the boundary between "natural variation" and "defect" from your own production data, continuously improving as it encounters new examples. This means the system handles seasonal ingredient changes, supplier variation, and recipe adjustments without manual reprogramming.

What happens when the vision system flags a false positive?

iFactory's quality dashboard presents all flagged rejections with captured images and classification confidence scores. Quality managers can review borderline decisions, reclassify false positives, and feed corrections back into the model through a simple annotation interface. The system continuously learns from these corrections, reducing false positive rates over time. Most facilities achieve false positive rates below 0.5% within 60 days of deployment, minimizing product waste from unnecessary rejections.
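The review loop described above might look something like the following sketch. Everything here is an assumption made for illustration: the `Rejection` record, the 0.80 confidence cutoff for "borderline" decisions, and the choice to define the false positive rate as false rejects divided by all units inspected. The source does not state which definition its 0.5% figure uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rejection:
    """Hypothetical record of one unit rejected by the vision system."""
    unit_id: str
    confidence: float                            # model confidence in the defect, 0 to 1
    reviewer_says_defect: Optional[bool] = None  # None until a human reviews it


def review_queue(rejections, borderline_below=0.80):
    """Unreviewed, low-confidence rejections, least confident first."""
    pending = [
        r for r in rejections
        if r.reviewer_says_defect is None and r.confidence < borderline_below
    ]
    return sorted(pending, key=lambda r: r.confidence)


def false_reject_rate(rejections, units_inspected):
    """Share of inspected units rejected and later reclassified as acceptable."""
    if units_inspected <= 0:
        raise ValueError("units_inspected must be positive")
    false_rejects = sum(1 for r in rejections if r.reviewer_says_defect is False)
    return false_rejects / units_inspected


rejections = [
    Rejection("U1", 0.97, True),    # confirmed defect
    Rejection("U2", 0.62, False),   # reviewer reclassified as acceptable
    Rejection("U3", 0.71),          # awaiting review
    Rejection("U4", 0.55),          # awaiting review
]

queue_ids = [r.unit_id for r in review_queue(rejections)]
rate = false_reject_rate(rejections, units_inspected=1000)
print(queue_ids, f"{rate:.2%}")
```

The reclassified records (here, `U2`) are the ones that would be fed back as corrected labels for retraining.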

Can autonomous vision QC replace human inspectors entirely?

iFactory is designed to augment and elevate human quality teams, not eliminate them. The vision system handles high-speed, repetitive inspection at volumes no human team can sustain — freeing quality professionals to focus on root cause analysis, process improvement, and strategic quality management. Most facilities redeploy inspection staff to higher-value quality engineering roles rather than reducing headcount, resulting in stronger overall quality programs.

How quickly can vision models be trained for new product lines?

iFactory's transfer learning architecture means new product models typically require 500-2,000 labeled sample images and 2-4 weeks of supervised production to achieve target accuracy. For products similar to existing trained models — such as a new flavor variant of an existing product format — models can reach production accuracy in as few as 3-5 days. iFactory provides on-site training support and remote model optimization throughout the onboarding process.

What camera and lighting hardware is required?

A typical food production line requires: (1) Industrial-grade area scan or line scan cameras with appropriate resolution for your defect detection targets; (2) Structured lighting enclosures to eliminate ambient light interference and ensure consistent imaging conditions; (3) An edge inference unit at each inspection station for real-time processing. For a standard single-lane packaging line, this typically comprises 1-3 cameras, a lighting tunnel, and a compact GPU inference unit. iFactory conducts site assessments to specify exact hardware requirements based on your product characteristics, line speed, and defect detection targets.

How does iFactory integrate with existing production line equipment?

iFactory connects to existing SCADA systems, PLCs, and ERP platforms through standard industrial protocols — OPC UA, Modbus TCP, MQTT, and REST APIs. Reject mechanisms (pneumatic blow-offs, diverter gates, robotic pick-and-place) are controlled through digital I/O interfaces compatible with all major PLC brands. Quality data flows automatically to your MES and ERP systems for batch release, traceability, and compliance reporting without manual data entry.
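As an illustration of the kind of per-unit message that could travel over MQTT or a REST API, the sketch below builds a topic string and a JSON payload using only the standard library. The topic layout, field names, and `build_inspection_message` function are hypothetical and do not come from iFactory documentation. No network call is made; a real deployment would hand the result to an MQTT or HTTP client.

```python
import json
from datetime import datetime, timezone


def build_inspection_message(line_id, unit_id, verdict, defect_class,
                             confidence, inspected_at):
    """Return (topic, json_payload) for one inspection result.

    The topic layout and payload fields are illustrative assumptions.
    """
    if verdict not in {"pass", "reject"}:
        raise ValueError("verdict must be 'pass' or 'reject'")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    topic = f"factory/{line_id}/inspection/result"
    payload = json.dumps(
        {
            "unit_id": unit_id,
            "verdict": verdict,
            "defect_class": defect_class,      # None for passing units
            "confidence": confidence,
            "inspected_at": inspected_at.isoformat(),
        },
        sort_keys=True,
    )
    return topic, payload


topic, payload = build_inspection_message(
    line_id="line-3",
    unit_id="U0100",
    verdict="reject",
    defect_class="seal_breach",
    confidence=0.93,
    inspected_at=datetime(2025, 1, 6, 8, 1, 40, tzinfo=timezone.utc),
)
print(topic)
print(payload)
```

Keeping the unit ID and timestamp in every message is what lets downstream MES and ERP systems reconstruct unit-level traceability without manual data entry.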
