Automotive OEMs are facing a once-in-a-decade infrastructure decision: where does the AI run? ADAS training pipelines generate petabytes of sensor data per fleet. Plant computer vision streams 100+ cameras at line speed. Tier-1 supply chains need real-time predictive intelligence. Cloud bills are exploding — and TISAX, GDPR, and IP-protection mandates are tightening. SAP Sapphire 2026 in Orlando (May 11–13) is where the automotive industry's AI-infrastructure conversation gets practical. This page is the iFactory reference: how OEMs achieve ~35% TCO reduction and up to 70% OpEx savings by deploying AI on-prem on NVIDIA GB300 + H200 + Jetson — without giving up the elasticity of modern AI tooling.

Automotive AI On-Prem

Insights for OEMs at Sapphire 2026

Three days at the Orange County Convention Center where automotive OEMs are pressure-testing the next wave of AI infrastructure. Join the iFactory automotive team for a live walk-through of the on-prem reference architecture that powers ADAS data pipelines and plant AI — ~35% lower TCO, up to 70% OpEx savings, full sovereignty over your training data.

Why Automotive OEMs Are Moving AI On-Prem

Cloud was the right answer in 2018. By 2026, the math has flipped. ADAS training datasets are now measured in petabytes per fleet generation. Camera streams from a single body-in-white shop can produce 30+ TB/day. At those volumes, the cloud egress bill alone can exceed the cost of the rack. Book a 30-minute briefing and we'll model the TCO crossover for your specific data volumes.
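To put the 30 TB/day figure in perspective, the sketch below converts it into sustained bandwidth and annual volume. Only the 30 TB/day input comes from this page. The per-GB egress rate and the share of data that leaves the cloud are hypothetical placeholders, not quoted prices, so substitute your contracted values.

```python
# Rough sizing for a camera-heavy shop. Uses decimal units (1 TB = 10**12 bytes).
TB_IN_BYTES = 10**12
SECONDS_PER_DAY = 86_400


def sustained_gbit_per_s(tb_per_day: float) -> float:
    """Average line rate needed to move a daily volume continuously."""
    return tb_per_day * TB_IN_BYTES * 8 / SECONDS_PER_DAY / 10**9


def annual_petabytes(tb_per_day: float) -> float:
    """Yearly volume in PB for a constant daily volume."""
    return tb_per_day * 365 / 1000


def annual_egress_cost(tb_per_day: float, usd_per_gb: float,
                       egress_fraction: float) -> float:
    """Yearly cost of moving a fraction of the data out of a cloud region.

    usd_per_gb and egress_fraction are assumptions supplied by the caller.
    """
    gb_per_year = tb_per_day * 1000 * 365
    return gb_per_year * egress_fraction * usd_per_gb


print(f"{sustained_gbit_per_s(30):.2f} Gbit/s sustained")
print(f"{annual_petabytes(30):.2f} PB per year")
# Hypothetical inputs: 10% of the data egressed at 0.05 USD/GB.
print(f"{annual_egress_cost(30, 0.05, 0.10):,.0f} USD per year")
```

Even before any pricing assumption, 30 TB/day works out to roughly 2.8 Gbit/s of sustained traffic and about 11 PB per year from a single shop.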

The On-Prem Stack for Automotive AI Workloads

Two workloads define automotive AI infrastructure decisions: ADAS / AD training (massive parallel GPU compute, petabytes of sensor data) and plant manufacturing AI (real-time vision at line speed, deterministic latency). They have different physics — but they share the same on-prem economics once data volumes cross the cloud-cost cliff.

Cloud egress for petabyte-scale training data is the dominant cost. ADAS data carries vehicle telemetry that triggers GDPR + competitive-IP concerns. Sovereign training is now table stakes.

Line-speed vision at 30 FPS across 100 cameras can't tolerate a cloud round trip. Predictive maintenance on robot fleets needs deterministic latency. Plant AI is by definition local AI.
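The latency argument can be made concrete with a frame-budget check. This is a minimal sketch, assuming each frame must be judged before the next one arrives (no pipelining). The inference and round-trip times in the example are hypothetical, not measurements.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame at a given frame rate."""
    return 1000.0 / fps


def aggregate_frames_per_s(cameras: int, fps: float) -> float:
    """Total frames per second the inference tier must absorb."""
    return cameras * fps


def fits_budget(fps: float, inference_ms: float, network_rtt_ms: float) -> bool:
    """True if inference plus network round trip fits inside one frame period."""
    return inference_ms + network_rtt_ms <= frame_budget_ms(fps)


print(f"budget per frame: {frame_budget_ms(30):.1f} ms")
print(f"aggregate load: {aggregate_frames_per_s(100, 30):.0f} frames/s")
# Hypothetical timings: 12 ms inference, 1 ms on the plant LAN vs 60 ms over a WAN.
print("edge fits:", fits_budget(30, inference_ms=12, network_rtt_ms=1))
print("cloud fits:", fits_budget(30, inference_ms=12, network_rtt_ms=60))
```

At 30 FPS the budget is about 33.3 ms per frame, so any round trip that approaches that figure on its own leaves no room for inference.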

How the On-Prem Stack Connects to S/4HANA & BTP

The on-prem AI compute layer doesn't replace SAP — it sits beside it. Master data, financial postings, and business logic live in S/4HANA. Process intelligence, cost transparency, and analytics live in BTP. The on-prem AI stack handles training, inference, and edge compute. The connection layer is the architecture decision that matters. Read the on-prem AI integration guide.

Six Workloads OEMs Are Bringing On-Prem First

Fleet sensor uploads → on-prem ingestion → multi-modal training cluster → deployed perception models. No vehicle data leaves the OEM perimeter. GDPR and competitive-IP boundary stay intact.

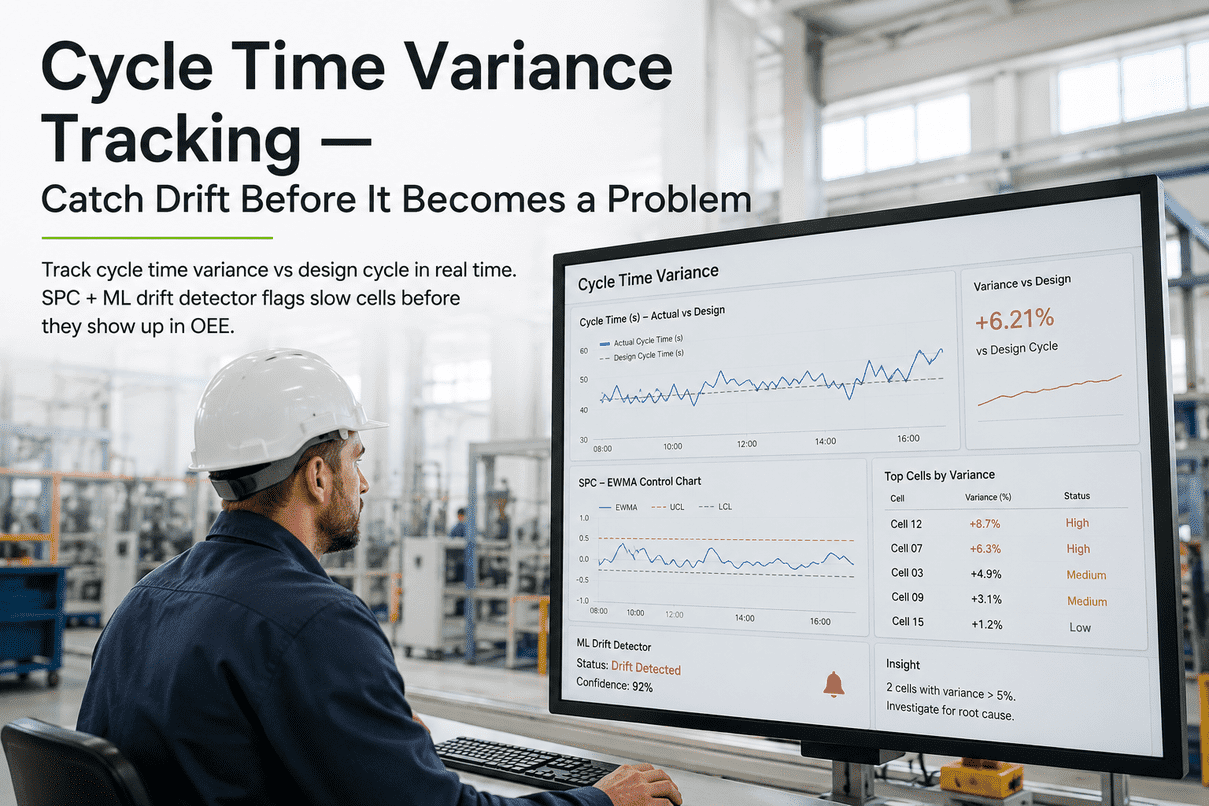

Welding spatter detection, paint defect classification, gap-and-flush metrology, and assembly verification across 100+ camera streams. Edge inference at line speed, training on plant-floor H200 cluster.

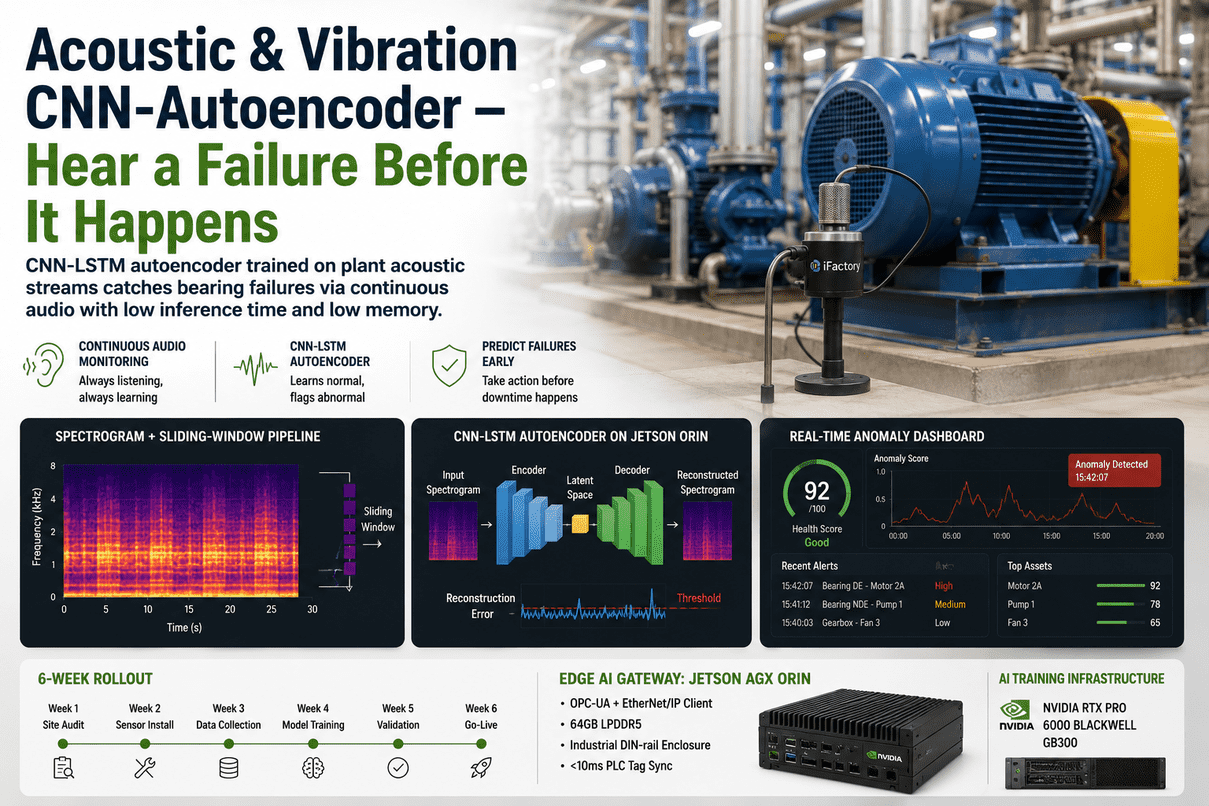

Vibration, current, and thermal signatures from 1,000+ robots feed LSTM and XGBoost models. Failures predicted 7–14 days ahead. Single platform manages full multi-vendor robot population.

Llama-class model fine-tuned on internal CAD specs, ECN history, supplier documentation, and ISO 26262 procedures. Engineers query in natural language. No proprietary IP transmits to public APIs.

Live signal from 5,000+ tier supplier feeds, geopolitical risk scores, lead-time drift, inventory exposure. Joins SAP S/4HANA materials data without exfiltrating supplier-confidential terms.

NVIDIA Omniverse running on-prem generates ADAS edge cases — pedestrian variations, weather, sensor noise. Avoids the licensing and data-residency pain of cloud-rendered synthetic libraries.

When Cloud Stops Being The Cheap Option

There's a specific data-volume and utilization point where on-prem becomes cheaper than cloud — and most automotive AI workloads sit well past it. Here's the curve OEM CFOs need to see.
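The exact curve depends on each OEM's numbers, but the comparison behind it can be sketched as cumulative cloud spend against on-prem capital cost plus running cost. All figures in the example are hypothetical inputs chosen for round arithmetic, not iFactory pricing or benchmark data.

```python
from typing import Optional


def crossover_month(cloud_monthly: int, onprem_capex: int, onprem_monthly: int,
                    horizon_months: int = 60) -> Optional[int]:
    """First month in which cumulative on-prem cost is at or below cloud cost.

    Returns None if on-prem never catches up within the horizon.
    """
    for month in range(1, horizon_months + 1):
        cloud_total = cloud_monthly * month
        onprem_total = onprem_capex + onprem_monthly * month
        if onprem_total <= cloud_total:
            return month
    return None


# Hypothetical: 400k USD/month cloud vs 6M USD capex plus 150k USD/month on-prem.
print(crossover_month(400_000, 6_000_000, 150_000))
# Hypothetical low-volume case where cloud stays cheaper over five years.
print(crossover_month(100_000, 6_000_000, 150_000))
```

In closed form the break-even is capex divided by the monthly saving, so the crossover moves earlier as cloud spend grows with data volume and utilization.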

TISAX, GDPR, ISO 26262 — Compliance Is Easier On-Prem

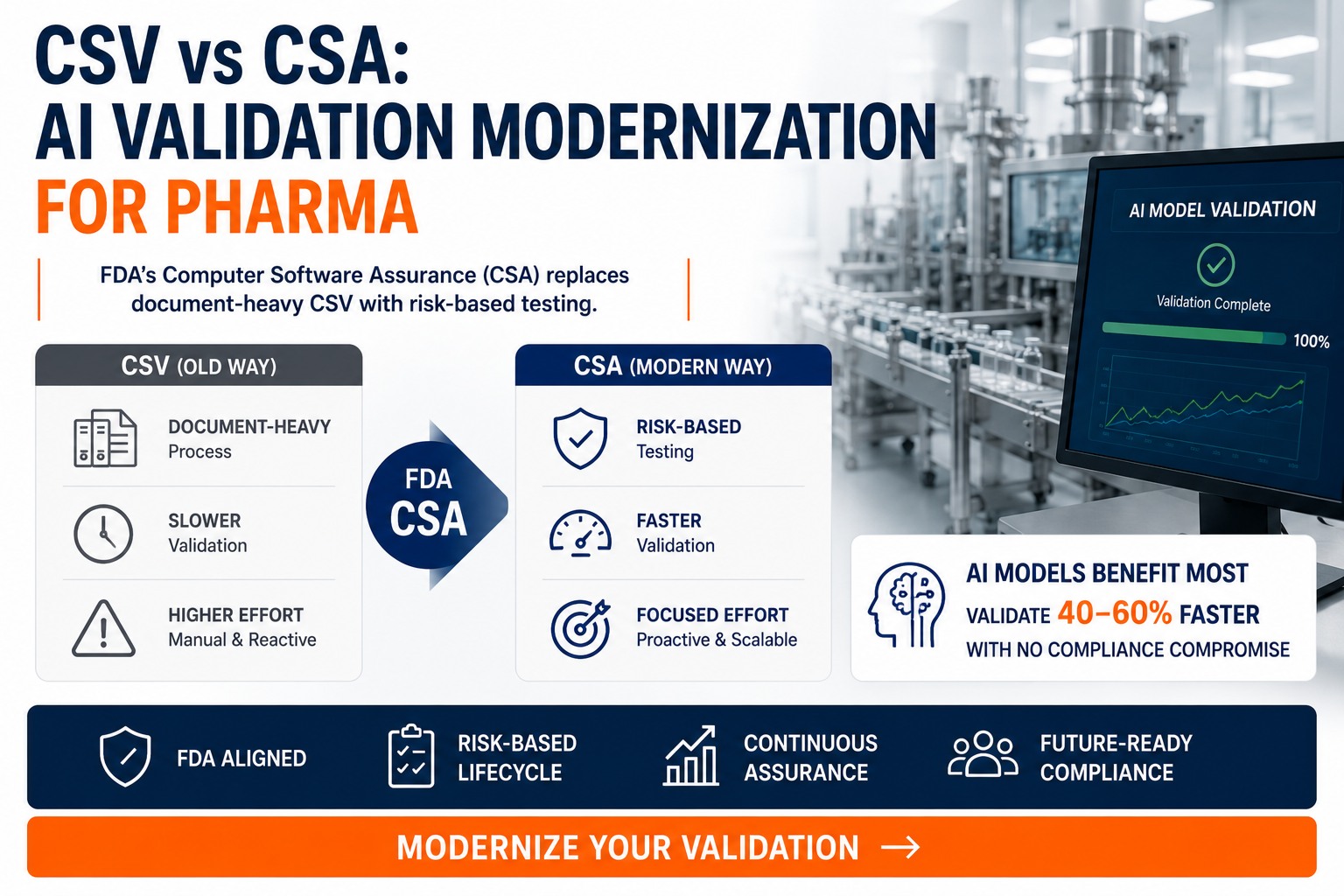

Automotive sits in one of the most demanding compliance overlaps of any industry. TISAX for supplier IP. GDPR for vehicle telemetry. ISO 26262 for functional safety. Cybersecurity Management Systems under UNECE R155. A sovereign-by-architecture design removes whole categories of audit findings before they happen.

Supplier IP and engineering data exchange security. On-prem keeps proprietary CAD, simulation results, and supplier specifications inside the OEM firewall — no cross-border data flow disclosure.

Vehicle telemetry contains personal data: driving behavior, location, biometrics. On-prem training within the EU keeps that data inside EU jurisdiction, so cross-border transfer agreements drop out of scope. A lawful basis for processing is still required, but the cross-border ambiguity is gone.

Functional safety lifecycle for ADAS and AD. Sovereign training enables full traceability — model weights, training data versions, validation evidence — which auditors increasingly want documented.

Cybersecurity Management System and Software Update Management System mandates. On-prem audit trails, change control, and air-gappable boundaries simplify compliance evidence.

What Automotive Architects Ask First

No. The migration story is hybrid-first — keep cloud for what it does well (burst training, geographically distributed pre-processing) and bring on-prem the workloads where data gravity, egress cost, or sovereignty mandates make it the obvious choice. Most OEMs land at 60/40 on-prem/cloud after the analysis.
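The hybrid-first triage described above can be sketched as a simple rule set. The `Workload` fields, the `place` function, and the 100 TB/month threshold are hypothetical illustrations of the three criteria named in the text (sovereignty, data gravity and egress cost, burst behavior), not a published scoring model.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    tb_per_month: float          # data the workload moves each month
    sovereignty_required: bool   # e.g. falls under GDPR or TISAX scope
    bursty: bool                 # short, irregular compute peaks


def place(w: Workload, egress_heavy_tb: float = 100.0) -> str:
    """Suggest a placement; sovereignty wins, then data gravity, then burst."""
    if w.sovereignty_required:
        return "on-prem"
    if w.tb_per_month >= egress_heavy_tb:
        return "on-prem"
    if w.bursty:
        return "cloud"
    return "either"


portfolio = [
    Workload("adas-training", 900.0, True, False),
    Workload("burst-hyperparameter-sweep", 5.0, False, True),
    Workload("plant-vision-archive", 600.0, False, False),
]
for w in portfolio:
    print(w.name, "->", place(w))
```

A real analysis would weigh cost and utilization continuously rather than with a single threshold; the point of the sketch is the ordering of the criteria.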

It's complementary, not competing. The on-prem AI stack handles training, inference, and edge compute. SAP retains the role of business system of record. Aggregated AI outputs flow into SAP via validated APIs, with full audit trail. We share integration patterns at the Sapphire 1:1 sessions.
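One way to picture "validated APIs, with full audit trail" is a gate that checks each aggregated result before it is handed to the integration layer and records a hash of exactly what was sent. This is a minimal sketch: the field names and the `validate` and `audit_record` helpers are hypothetical, and no SAP API is called here.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical schema for an aggregated AI result bound for the system of record.
REQUIRED_FIELDS = {
    "plant_id": str,
    "material_number": str,
    "defect_rate": float,
    "model_version": str,
}


def validate(payload: dict) -> list[str]:
    """Return a list of problems; an empty list means the payload may be sent."""
    errors = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in payload:
            errors.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            errors.append(f"wrong type for {field}")
    if not errors and not 0.0 <= payload["defect_rate"] <= 1.0:
        errors.append("defect_rate out of range")
    return errors


def audit_record(payload: dict) -> dict:
    """Hash a canonical JSON form so the same content always yields the same digest."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return {
        "sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "payload": payload,
    }


result = {"plant_id": "P01", "material_number": "M-1001",
          "defect_rate": 0.012, "model_version": "v3"}
if not validate(result):
    print(audit_record(result)["sha256"])
```

Hashing the canonical form means an auditor can later confirm that the record in the business system matches what the AI stack produced, independent of key order.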

Yes — that's actually the on-prem advantage. Llama 3.1 70B, Mistral, NVIDIA Cosmos, and other open-weight models run sovereign on GB300 with full fine-tuning rights. No vendor lock to a closed-API gatekeeper. Your fine-tuned weights are your IP.

First plant or first ADAS pipeline live in 14–18 weeks. Multi-site rollout typically takes 12–18 months for a 5-plant OEM. The iFactory deployment team has supported 1,000+ enterprise AI implementations, and we standardize the playbook by use case.

Built for OEM-Grade Deployments — Not Hyperscaler-Lite

Get the Automotive AI On-Prem Plan for Your OEM

Thirty minutes with our automotive deployment team. Bring your AI workload portfolio, current cloud spend, and TISAX scope. We'll map exactly which workloads to bring on-prem first, model the TCO crossover, and outline a 14-week first-deployment path. Talk to support if you'd like preliminary scoping before the call.