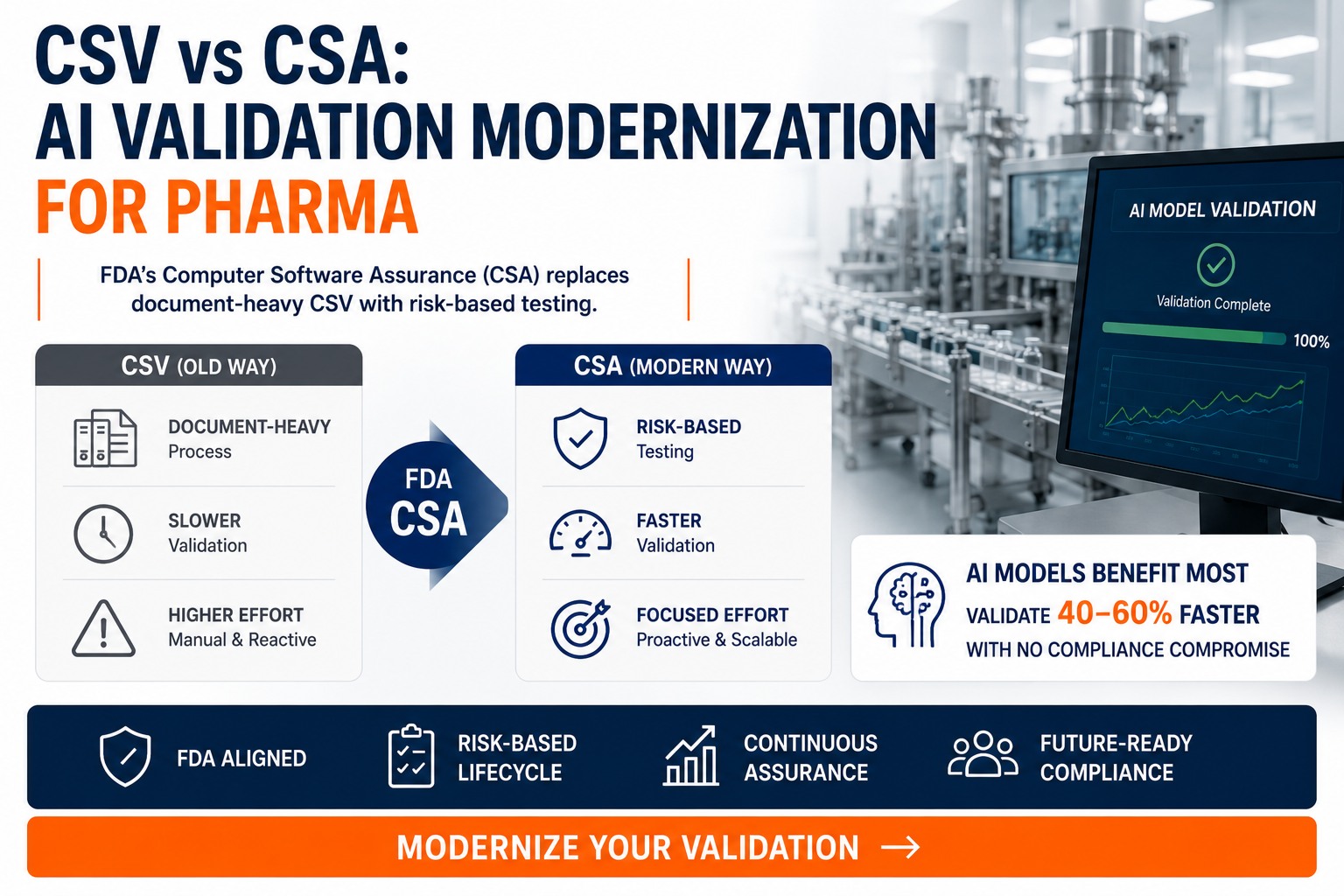

For thirty years, validation in pharma manufacturing was a paperwork problem disguised as a quality problem. Computer System Validation (CSV) generated binders: hundreds of test cases for software functions that no engineer in the room actually believed carried meaningful risk. The deviation rate during the validation phase was higher than the deviation rate during the operating phase. As published industry critiques pointed out, the mountain of paper did not equate to product quality, data integrity, or patient safety. It equated to documentation.

On September 24, 2025, the FDA finalised a different framework: Computer Software Assurance, or CSA. CSA replaces document-first validation with risk-based assurance. Software functions are classified as either "high process risk" or "not high process risk", and the testing rigour is matched to the classification. Where CSV demanded the same exhaustive paperwork for every function regardless of risk, CSA asks first what the function actually does, who it affects, and what could go wrong, and only then decides what evidence is sufficient. ISPE's published 2023 industry data showed CSA-aligned organisations cutting validation cycle times by up to 40% with no compliance compromise.

For AI models, which iterate, retrain, and update in ways the original CSV framework was never designed to handle, the CSA shift is not a marginal improvement. It is the difference between AI being unvalidatable and AI being routinely validated.

This page walks through the framework difference, the four-step CSA process FDA published, why AI in particular benefits, and how iFactory delivers an end-to-end AI-native validation solution that lands the CSA-aligned validation pack as a deployment artefact rather than a post-hoc project. To see the validation evidence pack rendered live alongside the AI it validates, walk the iFactory booth at SAP Sapphire Orlando, May 11–13, 2026.

CSV vs CSA — AI Validation Modernization For Pharma

Risk-First Assurance Replaces Document-First Validation. AI Models Benefit Most.

FDA's final Computer Software Assurance guidance — published 24 September 2025 — replaces document-heavy CSV with a four-step risk-based framework. ISPE-aligned data shows up to 40% reduction in validation cycle time with no compliance compromise. iFactory delivers an end-to-end AI-native validation solution that produces the CSA-aligned evidence pack as a deployment deliverable, not as a post-hoc project. Walk the iFactory booth at SAP Sapphire Orlando, May 11–13 to see the validation evidence rendered live alongside the AI it validates.

CSV And CSA Side By Side — What Actually Changed When FDA Finalised The Guidance

CSA is not a relaxation of regulatory expectations. It is a clarification of the flexibility that already existed within 21 CFR Part 820 and Part 11. The eight rows below — drawn from FDA's final guidance, ISPE GAMP 5 Second Edition, and the FDLI regulatory commentary — show where the old CSV framework and the new CSA framework actually diverge. Read the rows top-to-bottom for the philosophical shift, or scan the columns for what changes operationally on Monday morning. Talk to our validation lead about which rows apply to your QMS today.

What CSA does not change: 21 CFR Part 11 obligations on electronic signatures and audit trails are unchanged. EU Annex 11 still applies. GxP record retention rules are unchanged. Validation evidence is still required and still inspectable. The shift is in how the evidence is generated and documented, not in whether evidence is required.

The Four Steps That Replace The Hundred-Page Validation Plan

FDA's final guidance describes CSA as a four-step process. Each step asks a specific question, produces a specific decision, and constrains the rigour of the next step. The shape is borrowed from risk management frameworks already familiar to anyone running ICH Q9 — but applied specifically to software assurance, which is where the previous CSV framework was deficient.

Step 1: Identify the intended use. What is this software function actually for? What part of the production or quality process does it touch? Is it a function that creates, modifies, or controls a record that contributes to product quality, patient safety, or data integrity, or is it a function that does not? Critical thinking happens here, before any test scripts get written.

Step 2: Determine the risk-based approach. Given the intended use, classify the function as high process risk or not high process risk. The classification is intentionally binary, to force a decision rather than allowing every function to be hedged into a middle category. High-risk functions get scripted testing; not-high-risk functions get lighter, often unscripted methods.

Step 3: Determine the appropriate assurance activities. Match the test method to the risk class: scripted testing for high-risk functions; unscripted, exploratory, or ad-hoc testing for not-high-risk functions. Vendor SDLC artefacts and existing test evidence are accepted as input where appropriate. The critical thinking here is about what evidence is sufficient, not what evidence is exhaustive.

Step 4: Establish the appropriate record. Document what was done, what was found, and the decisions made, in proportion to the risk class. The record exists to capture the assurance decision, not to demonstrate diligence by weight of paper. Records remain inspectable; what changes is the volume and the focus.

The fundamental difference from CSV: CSV started at step 4 and worked backwards — produce the documentation, justify it later. CSA starts at step 1 and works forwards — understand the risk, then document only what the risk warrants. Same rigour for the high-risk functions; far less waste on the low-risk ones.
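The forward flow of the four steps can be sketched in a few lines of Python. This is a minimal illustration, not FDA's wording or iFactory's implementation: the field names, the example functions, and in particular the classification rule in `classify_risk` are assumptions made for the example. In practice the risk class is an SME judgement documented in the assurance pack.

```python
from dataclasses import dataclass
from enum import Enum


class ProcessRisk(Enum):
    HIGH = "high process risk"
    NOT_HIGH = "not high process risk"


@dataclass(frozen=True)
class IntendedUse:
    """Step 1: what the function is for and what it touches."""
    function_name: str
    description: str
    affects_gxp_record: bool    # creates, modifies, or controls a quality record
    human_reviews_output: bool  # a person approves the output before it takes effect


def classify_risk(use: IntendedUse) -> ProcessRisk:
    """Step 2: binary classification (hypothetical rule for illustration only)."""
    if use.affects_gxp_record and not use.human_reviews_output:
        return ProcessRisk.HIGH
    return ProcessRisk.NOT_HIGH


def select_assurance(risk: ProcessRisk) -> str:
    """Step 3: match the test method to the risk class."""
    return "scripted testing" if risk is ProcessRisk.HIGH else "unscripted testing"


def build_record(use: IntendedUse, findings: str) -> dict:
    """Step 4: capture the assurance decision, proportional to the risk class."""
    risk = classify_risk(use)
    return {
        "function": use.function_name,
        "intended_use": use.description,
        "risk": risk.value,
        "assurance_activity": select_assurance(risk),
        "findings": findings,
    }


# Hypothetical example functions.
drafter = IntendedUse("eBR drafter", "Drafts eBR sections for human review", True, True)
auto_closer = IntendedUse("deviation auto-closer", "Closes deviations without review", True, False)

for use in (drafter, auto_closer):
    print(build_record(use, findings="no anomalies observed"))
```

The point of the sketch is the ordering: the record is the last thing produced, and its content is driven by the intended use and the risk class rather than the other way round.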

CSA Was Written For Software That Iterates — Which Is What AI Models Do

Of every software class regulated under Part 820 and Part 11, AI models are the one CSV was least equipped to handle. AI models retrain. Retraining changes weights. Changed weights mean different outputs on edge-case inputs. Under classical CSV, every retrain triggered a fresh validation cycle — which made AI economically unfeasible for any GxP-affecting use case. CSA changes the calculation. Below are five characteristics of AI workloads where the CSA framework specifically improves the validation lifecycle.

An LLM that drafts an eBR section for human review is not the same risk class as an LLM that auto-closes a deviation. CSA's binary classification forces the team to put the question on the table: does this AI function actually touch a release decision, or does it produce a draft for human review? Most AI use cases are the latter — and now get appropriate, lighter assurance.

CSA explicitly supports iterative, agile-compatible lifecycles. AI retraining cadences — monthly, quarterly, on-event — fit cleanly into the CSA change-impact framework. A retrain on the same training distribution that produces metrics within the validated envelope does not retrigger the whole validation cycle. The CSV framework had no graceful way to express this.

iFactory's AI models, training pipelines, and audit-trail infrastructure come with SDLC artefacts, validation test packs, and traceability matrices. CSA explicitly accepts vendor evidence as part of the customer's assurance pack — eliminating the duplicative re-running of tests the vendor has already executed and documented.

CSA accepts ongoing monitoring of system performance as a legitimate part of the assurance lifecycle — not just point-in-time testing. AI models with continuous accuracy monitoring, drift detection, and retraining triggers fit this paradigm naturally. Monitoring is not an "additional burden" under CSA; it is part of the assurance package.

An LLM that searches SOPs and surfaces citations to a human is not the same documentation burden as a deterministic batch-record-numbering function. CSA allows the assurance documentation to scale with the actual risk and complexity, rather than producing 200 pages of identical-shape protocols for both. Faster to write, faster to inspect, faster to update.
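As a rough illustration of how continuous monitoring can feed the assurance record, the sketch below checks one monitoring window against a validated envelope. The envelope fields, the threshold values, and the idea of a single scalar drift score are assumptions made for the example; they are not taken from the FDA guidance or from iFactory's product.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Envelope:
    """Metric bounds accepted during validation (placeholder values in the demo)."""
    min_accuracy: float
    max_drift_score: float


def evaluate_window(accuracies: list[float], drift_score: float, envelope: Envelope) -> dict:
    """Summarise one monitoring window and flag any out-of-envelope condition."""
    if not accuracies:
        raise ValueError("monitoring window contains no observations")
    window_accuracy = mean(accuracies)
    reasons = []
    if window_accuracy < envelope.min_accuracy:
        reasons.append("accuracy below validated minimum")
    if drift_score > envelope.max_drift_score:
        reasons.append("input drift above validated maximum")
    return {
        "window_accuracy": round(window_accuracy, 4),
        "drift_score": drift_score,
        "within_envelope": not reasons,
        "reasons": reasons,  # an empty list means no review trigger is raised
    }


# Hypothetical envelope and monitoring data.
envelope = Envelope(min_accuracy=0.95, max_drift_score=0.2)
print(evaluate_window([0.97, 0.96, 0.98], drift_score=0.05, envelope=envelope))
print(evaluate_window([0.90, 0.92], drift_score=0.30, envelope=envelope))
```

Each returned dictionary is the kind of entry that could be written to the audit trail, so the monitoring output doubles as assurance evidence.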

CSA-Aligned Validation Pack As A Deployment Deliverable, Not A Post-Hoc Project

Most AI vendors ship the AI and leave validation as the customer's problem. iFactory delivers the AI and the CSA-aligned validation pack as a single integrated deliverable, calibrated to your QMS, signed by your validation team. The structure below shows what your team receives, when, and what it is structured to support — both the FDA inspection and the day-to-day operational change-impact decisions that follow go-live.

Phase 1: intended-use specifications and risk classifications

Per AI function (eBR drafter, operator copilot, optimizer, twin scenario engine): what it does, what it touches, who it serves, and what records it creates or modifies. Drafted by us against your QMS taxonomy; reviewed and signed by your validation lead.

Each AI function is classified as high process risk or not high process risk, with the reasoning documented. Most AI functions land in the second category because they produce drafts or recommendations for human review, but the classification is yours to confirm, not ours to assert.

Phase 2: scripted and unscripted assurance evidence

Where any AI function classifies as high process risk (rare, but it happens), full scripted IQ/OQ/PQ is executed and recorded. Test data comes from your historical operating records. Acceptance criteria are signed off by your validation team before execution.

For not-high-risk functions, SMEs execute ad-hoc and exploratory testing against the intended-use specification. Test results and observations are captured in the audit trail, with critical thinking applied to anomalies. The result is CSA-acceptable, audit-ready, and much faster than scripted testing.

iFactory's own development, training, and testing records (model cards, training data lineage, citation accuracy benchmarks, fabrication-rate reports) are provided as input to your assurance pack. Per CSA, this evidence is acceptable as-is; there is no need to re-run our tests.

Phase 3: traceability matrix and inspection-ready pack

Every intended-use statement is traced to its risk classification, its assurance activity, its evidence record, and its 21 CFR Part 11 / Annex 11 control mapping. This is the single document FDA inspectors and EU notified-body auditors actually open first.

All Phase 1 and Phase 2 records are bound into a structured pack indexed against the four CSA process steps. The pack goes live the same day the AI does, and is available to your inspection-readiness team, your QA, and to FDA / EMA inspectors on request.

The change-impact framework is what your team uses post-go-live to assess AI model retrains, software patches, and configuration changes. It defines what triggers re-validation and what does not, and it prevents your team from sliding back into CSV-style "revalidate everything" reflexes after we leave.

Year 1 onwards: continuous-monitoring records and retrain-cycle update packs

AI model accuracy, citation precision, drift detection, and retraining trigger logs are captured automatically and rendered in the audit trail. Per CSA, ongoing monitoring is part of the assurance package, not an afterthought. Quarterly reports feed your validation team's annual product review.

Each model retrain produces a delta-validation pack scoped to what changed. Same training distribution + within-envelope metrics = lightweight update; distribution drift or new feature = scoped re-validation. This is aligned with the CSA change-impact philosophy, not the CSV "revalidate everything" reflex.
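The traceability matrix described in the deliverables above is, structurally, a table in which every intended-use statement must link to four other things. A minimal sketch of a completeness check over such a table follows. The row fields mirror the links named in the text; the identifiers (`IU-001`, `EV-014`, `CTRL-07`) and the function names are hypothetical.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class TraceRow:
    """One intended-use statement and the four links it must carry."""
    intended_use_id: str
    risk_classification: Optional[str] = None
    assurance_activity: Optional[str] = None
    evidence_record: Optional[str] = None
    control_mapping: Optional[str] = None  # Part 11 / Annex 11 control reference


def missing_links(row: TraceRow) -> list[str]:
    """Return the names of any links that are still empty for this row."""
    return [f.name for f in fields(row) if not getattr(row, f.name)]


def open_gaps(matrix: list[TraceRow]) -> dict[str, list[str]]:
    """Map each incomplete intended-use ID to the links it is missing."""
    gaps = {}
    for row in matrix:
        missing = missing_links(row)
        if missing:
            gaps[row.intended_use_id] = missing
    return gaps


# Hypothetical matrix: the second row has no evidence or control mapping yet.
matrix = [
    TraceRow("IU-001", "not high process risk", "unscripted testing", "EV-014", "CTRL-07"),
    TraceRow("IU-002", "high process risk", "scripted testing"),
]
print(open_gaps(matrix))
```

Running a check of this kind before sign-off is one way to make sure no intended-use statement reaches inspection without its evidence and control mapping attached.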

Why this is end-to-end: the validation pack is generated alongside the AI deployment, not as a follow-on engagement. The same iFactory team that calibrates the model writes the intended-use specification. The same engineers that integrate with your historian deliver the traceability matrix. There are no validation gaps between deployment and inspection-ready — because both are produced as a single integrated workflow. See the validation pack rendered live alongside the AI it validates at Orlando.

iFactory On-Prem AI Server vs SAP-Style Cloud SaaS — The Structural Difference For GxP Validation

Most cloud ERP and quality systems used in pharma — including SAP S/4HANA Cloud, cloud QMS platforms, and cloud LIMS — are multi-tenant SaaS architectures. Per the published cloud-qualification guidance, customer validation in those environments is limited to configuration validation; the application code itself, the runtime, the storage layer, and the upgrade cadence are vendor-controlled. iFactory's deployment model is the opposite: a turnkey on-prem AI server racked in your control building or corporate data centre, owned outright by you, air-gapped from public internet by default. The seven rows below show why this structural difference matters for CSA validation, audit-trail control, and inspection readiness — and why iFactory pairs naturally with your existing SAP/MES/QMS landscape rather than competing with it.

This is not an SAP-replacement story. iFactory is an AI layer that sits alongside your SAP / Werum / MasterControl / Veeva landscape — pulling reference data through standard integrations, posting AI-drafted records back for human approval. SAP runs your ERP; iFactory runs your AI. The point above is structural: the AI workload — which produces the records, drafts, and recommendations regulators most carefully scrutinise — is materially easier to validate, audit, and own when it sits on hardware you control rather than in someone else's tenant. See the on-prem AI server stack live in Orlando alongside the validation pack it ships with.

If Your QMS Is CSV-Era — Three Realistic Migration Paths

Most pharma QMS systems were built when CSV was the only framework. The CSA shift creates a real choice for validation engineers and QA heads: do nothing, hybrid migrate, or full migrate. Below are the three options as they actually look in practice — including the one that's right for risk-averse QMS teams who don't want to be the first in their company to adopt the new framework.

Path A (legacy stays on CSV): Existing validated systems remain under CSV maintenance. New AI deployments, and only those, are validated under CSA from day one. The QMS team gradually builds CSA muscle memory on lower-stakes new systems before touching anything legacy. This is the risk-averse path that almost every multinational pharma is taking right now.

Path B (hybrid): New AI deployments are validated under CSA from day one, as in Path A. Additionally, legacy systems coming up for periodic review or significant change are re-baselined under CSA at that natural inflection point, with no big-bang migration and no separate project. Within 18 to 24 months, the QMS is majority-CSA without a disruptive transition. This is what iFactory deployments default to.

Path C (full migration): A multi-quarter programme to re-baseline every validated system under CSA, with a dedicated programme team and a published migration roadmap. The investment is high, and so is the benefit: up to the 40% cycle-time reduction reported by ISPE, applied across the whole QMS. Reserved for organisations with strong validation muscle and executive sponsorship for the disruption.

What Validation Engineers & QA Heads Ask First

Q: Is the CSA guidance final, or still a draft?

Final. FDA finalised the Computer Software Assurance for Production and Quality System Software guidance on 24 September 2025, after publishing the draft on 13 September 2022. The final supersedes Section 6 of the General Principles of Software Validation guidance. Aligned with GAMP 5 Second Edition (July 2022). Compatible with the 21 CFR Part 820 / ISO 13485:2016 harmonisation effective 2 February 2026.

Q: Does CSA change our 21 CFR Part 11 obligations?

No. CSA does not change Part 11 expectations on electronic signatures, audit trails, record retention, or system access controls. Part 11 obligations remain. CSA changes how you validate the software function that implements those Part 11 controls; it does not change whether the controls themselves are required.

Q: Will FDA inspectors accept unscripted testing?

For not-high-process-risk functions, yes, explicitly, per the final guidance. Published commentary from FDLI, ISPE, and PSC Software confirms this. For high-process-risk functions, scripted testing is still the appropriate method. The key is that the risk classification is documented, defended by the SME, and traceable in the assurance pack. An inspector who challenges unscripted testing will be answered by the risk classification document, not by paperwork weight.

Q: When does iFactory deliver the validation pack?

As a deployment artefact, not a post-hoc project. Phase 1 of the iFactory deployment produces the intended-use specifications and risk classifications. Phase 2 produces the scripted and unscripted assurance evidence. Phase 3 produces the traceability matrix and inspection-ready pack. By go-live week 12, your validation team has signed off the pack; the AI doesn't go live without the validation evidence in place. Year 1 onwards produces continuous-monitoring records and retrain-cycle update packs.

Q: What if our QA team prefers to stay with CSV?

That's Path A above, and it's the most common path. We deliver the validation pack in CSV format. The cycle is longer and the documentation is heavier, but the underlying AI is identical. Most customers start on Path A for their first AI deployment, see the inspection-ready pack land on time, and then move to Path B (hybrid) for subsequent deployments. We don't push CSA where the customer's QA prefers the familiar framework.

Q: How are AI model retrains handled after go-live?

Through the change-impact framework delivered in Phase 3. Each retrain triggers a scoped impact assessment: same training distribution + metrics within validated envelope = lightweight update with delta-validation pack. Distribution drift, new features, or out-of-envelope metrics = scoped re-validation against the affected functions. The framework eliminates the CSV reflex of revalidating the entire system for every retrain, which is what made AI economically infeasible under CSV in the first place.
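The retrain decision rule in this answer is simple enough to write down directly. The sketch below encodes it as stated; the class and field names are hypothetical, and how each flag is actually determined (for example, how "same training distribution" is tested) is outside the scope of the example.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    LIGHTWEIGHT_UPDATE = "lightweight update with delta-validation pack"
    SCOPED_REVALIDATION = "scoped re-validation of the affected functions"


@dataclass(frozen=True)
class RetrainSummary:
    """Facts established by the impact assessment for one retrain."""
    same_training_distribution: bool
    metrics_within_envelope: bool
    new_features_added: bool


def assess_retrain(summary: RetrainSummary) -> Outcome:
    """Apply the change-impact rule: any drift, new feature, or
    out-of-envelope metric escalates to scoped re-validation."""
    if (
        summary.same_training_distribution
        and summary.metrics_within_envelope
        and not summary.new_features_added
    ):
        return Outcome.LIGHTWEIGHT_UPDATE
    return Outcome.SCOPED_REVALIDATION


# Hypothetical retrains.
routine = RetrainSummary(True, True, False)
drifted = RetrainSummary(False, True, False)
print(assess_retrain(routine).value)
print(assess_retrain(drifted).value)
```

Note that neither outcome is "revalidate everything": even the escalated branch is scoped to the functions the change affects.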

Q: Does CSA apply outside the United States?

The guidance is FDA-issued. Equivalent principles are reflected in EU Annex 11, ISPE GAMP 5 Second Edition, and the 21 CFR Part 820 / ISO 13485:2016 harmonisation effective February 2026. The risk-based, critical-thinking philosophy is converging across jurisdictions. iFactory's validation pack is structured to satisfy FDA CSA and EU Annex 11 expectations simultaneously: same evidence, different cover sheet.

Q: What happens to the validation pack if we end the support contract?

The pack stays valid. You own all phase deliverables, the change-impact framework, and the continuous-monitoring infrastructure. Renew support and quarterly review with our validation lead annually, run it in-house with the handover docs, or mix both. The validation pack does not expire when support ends; it expires only when the underlying AI is retired or substantively changed.

See The CSA-Aligned Validation Pack Rendered Live Alongside The AI It Validates

Two ways forward. First: walk the iFactory booth at SAP Sapphire Orlando, May 11–13. Our validation lead will walk through a real CSA-aligned validation pack, rendered live alongside the AI it validates — the eBR drafter, the operator copilot, the heat rate optimizer — running on the on-prem AI server stack. Bring questions about your QMS framework. Second: a 30-minute working session with our validation lead — bring one AI use case you're considering, and we'll walk through the CSA risk classification and the assurance plan it would generate.