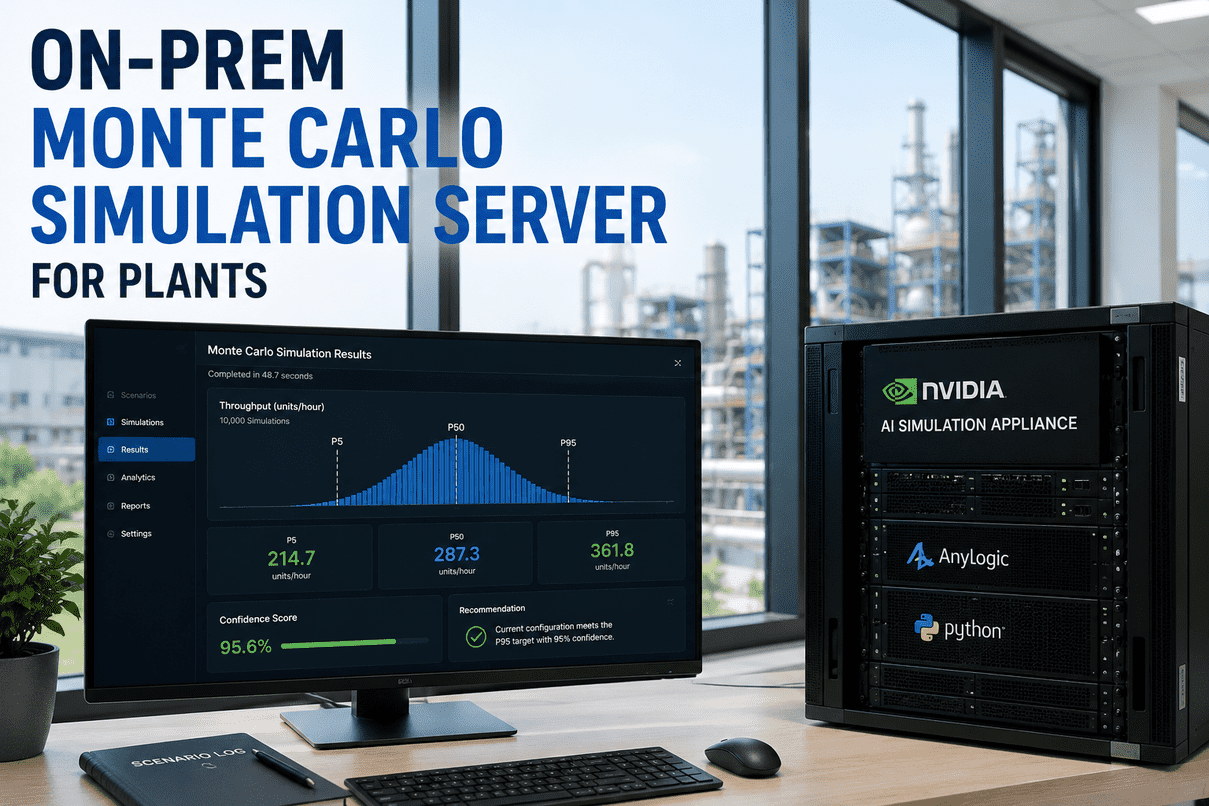

Plant decisions get made on single-point estimates because that is what most simulation tools return. One number, one chart, one answer that hides the variance underneath. The problem is that nothing in a real plant is a single point — throughput is a distribution, downtime is a distribution, energy demand is a distribution. iFactory ships an enterprise-grade NVIDIA AI server straight to your plant — racked, pre-loaded, and ready to run 10,000 discrete-event simulations on AnyLogic + Python in under 60 seconds. Submit a scenario. The appliance returns P5, P50, P95, a confidence score, and a recommendation card. Locally. No cloud round-trip. Get a quote — and a delivery date.

From Scenario On The Screen To Decision-Ready Card —

The On-Prem Monte Carlo Pipeline

iFactory delivers the full chain: enterprise NVIDIA AI server racked at your plant, AnyLogic + Python pre-loaded, your reference model pre-installed, 10,000 discrete-event runs orchestrated locally, P5/P50/P95 percentiles computed, confidence scored, and an LLM-summarised recommendation card returned in under 60 seconds. One PO; one go-live date; one team accountable for every layer of the stack.

An NVIDIA AI Server. Racked. Pre-Loaded. Ready in Days.

This is a physical product. Not a SaaS workspace. Not a cloud subscription. iFactory builds the appliance for your plant — operating system hardened, AnyLogic licensed and installed, Python simulation orchestrator pre-configured, your plant's reference model loaded, GPU drivers tuned for parallel DES execution. It ships racked. You connect power and Ethernet. The simulation pipeline is reading scenarios within days of unboxing.

Pre-configured AI hardware shipped to your site. Hardened OS, AnyLogic + Python pipeline pre-loaded, your reference model installed, GPU tuned for parallel discrete-event execution.

One-time purchase. No monthly fees, no per-seat charges, no per-scenario billing. The platform is yours forever — including the simulation models you build on top of it.

All scenarios, all model data, all simulation outputs stay behind your firewall. Nothing leaves your network. Compliant with regulator and corporate IT policy from day one.

Modify the simulation orchestrator, extend the Python pipeline, build your own scenario templates. Your engineering team owns the platform like any in-house tool.

Scenario submitted to recommendation card in under 60 seconds. 10,000 DES runs orchestrated on your local GPU — no scheduler queues, no shared cloud capacity.

From Scenario Screenshot to Recommendation Card · End-to-End

A scenario enters the pipeline as a structured input. Five stages later, it leaves as a decision-ready card with statistics, confidence, and a recommended action — all running locally on the appliance.

An engineer submits a scenario through the iFactory dashboard or via the Python API. Variables — production volume, downtime profile, demand curve, batch size, mix shift — are bound to probability distributions: Triangular, Normal, or Beta-PERT.

The Python orchestrator dispatches 10,000 discrete-event simulation runs across the GPU. Each run samples once from every distribution, then plays the scenario forward through the AnyLogic plant model — every machine state, every queue, every changeover modelled deterministically given the sampled inputs.

Output variables across all 10,000 runs are aggregated into empirical distributions. The pipeline surfaces P5 (downside / pessimistic), P50 (median / most likely), and P95 (upside / optimistic) — plus the full S-curve for any output the engineer wants to inspect.

The pipeline assesses the convergence of the percentile estimates against run count. Tight distributions earn high confidence; wide or multi-modal distributions earn lower confidence — and surface that uncertainty honestly to the decision-maker rather than hiding it behind a single number.

An LLM summarises the pipeline output into a structured recommendation card — scenario name, percentile band on the key output, confidence score, the cost of being wrong on the downside, the value of being right on the upside. One paragraph the plant manager reads in 30 seconds.

What Comes Out the Other End — In 30 Seconds Reading Time

An illustrative card from a packaging-line capacity scenario. The pipeline ran 10,000 simulations in 47 seconds on the on-prem appliance — submission to card.

Adding the second capper resolves the premium-SKU bottleneck across all 10,000 simulated runs. The downside case (P5 = +11%) still beats the do-nothing scenario by 7%. The upside case (P95 = +24%) requires demand for premium SKU to hold above 55% mix — sensitivity analysis ranks demand mix as the dominant variable. Capital cost recovers in 14–22 months at P50 throughput. Recommended: proceed to detailed engineering. Risk to flag: if premium SKU mix drops below 45%, throughput gain compresses to single digits.

The Hardware Spec — What Lands on Your Loading Dock

Three appliance tiers, all NVIDIA-powered, all pre-configured, all shipped racked and ready. Choose the tier that matches your scenario throughput and team size. The full configuration walkthrough — including custom variants — is part of the live event for buyers evaluating their order.

Our upcoming live event covers the inside of every appliance tier — GPU layout, thermal envelope, network topology, security architecture, and the exact AnyLogic + Python pipeline pre-loaded at the factory before shipment. Engineers walk through every layer with you.

Five Reasons Plant Engineers Are Moving Simulation Off the Cloud

The cloud-simulation pitch made sense five years ago. It does not anymore. The economics, the data sovereignty regulations, and the latency math have all shifted toward on-prem appliances — and the buyers running our quotes know it.

Cloud simulation vendors price by the compute hour, by the run, by the seat. Your simulation budget is a recurring tax. Buy the appliance once — run unlimited scenarios at zero marginal cost. The math flips at month 18.

Your scenario inputs contain capacity, demand, supplier, and cost data — exactly the information that is most sensitive in your business. Running it on a vendor cloud means trusting that vendor with your operational core. On-prem means it never leaves.

Cloud simulation pipelines wait in scheduler queues. Sub-minute scenario-to-card is impossible when there are 200 other tenants ahead of you. The on-prem appliance has zero queue — your scenarios are the only scenarios on the box.

Data localisation rules across India, EU, China, and the Middle East increasingly disallow operational data leaving the country, let alone the firewall. The appliance is automatically compliant — your data never crosses any border or vendor boundary.

Cloud simulation locks you into the vendor's roadmap. With source access on the on-prem appliance, your team can extend, integrate, and adapt the platform to your specific scenario library — with or without iFactory.

What OR Specialists & Plant IT Ask Before the Order

It runs on top. AnyLogic is the discrete-event simulation engine; iFactory pre-installs and licenses it on the appliance, plus the Python orchestrator that drives the Monte Carlo experiment, the percentile statistics layer, the confidence scorer, and the LLM that writes the recommendation card. AnyLogic remains the core simulation engine — your existing models port directly.

6–12 weeks PO to live. Most plants are running their first scenarios within 8 weeks. The bulk of that time is engineering scope, hardware build, and pre-test in our lab. Once the appliance lands at your site, the on-site cycle is days — power, network, calibration, training, go-live.

Yes. Any AnyLogic model file works on the appliance. The pre-load step in the build phase imports your reference model, validates it runs cleanly, and binds the parameter inputs to the Monte Carlo orchestrator. Your existing model investment carries forward.

The pipeline supports up to 100,000 runs on the Department tier and unlimited on Enterprise. Confidence convergence is monitored in real time — if the percentile estimates haven't stabilised by 10K, the orchestrator continues until they do. The choice between speed and confidence is yours, scenario by scenario.

Two Ways to Take Delivery — Both End With the Server in Your Plant

Get a fixed-price quote — our solutions architects map your scenario library, your model count, and your team size to one of the three appliance tiers. You leave with a quote, a signed scope document, and a delivery date. Or join our upcoming live event to walk inside every NVIDIA AI server configuration we ship — GPU layout, AnyLogic + Python pre-load, security architecture, the lot. Engineers walk every layer with you. Either path leads to the same outcome: appliance racked at your plant, scenarios running locally, perpetual license, source access, total data sovereignty.