The cloud-first era is over for serious AI workloads inside power plants. Operators of nuclear, thermal, gas, and hydro stations are converging on the same answer: build the AI data center on-site, behind the perimeter, on your own power. The reasons are practical—data sovereignty regulations, sub-50 ms inference for control-loop AI, fuel-contract privacy, and the simple fact that a power plant has more spare megawatts than most data centers ever will. This guide walks through what an on-premise AI data center actually looks like in 2026: the reference architecture, the cooling decision, the 2N+1 power design, the IT/OT segmentation that keeps a cyberattack from crossing the bridge, and a sizing approach you can apply to your own plant.

Upcoming iFactory Ai Live Webinar:

On-Premise AI Data Center Design for Power Plants

Join the iFactory team for a live walk-through of building a sovereign AI data center inside an operating power plant. We'll cover the reference architecture, liquid vs air cooling, 2N+1 power redundancy, IT/OT segmentation, and air-gap options, drawing on 1,000+ enterprise implementations.

Five Reasons Power Plants Are Going Sovereign in 2026

A power plant has constraints that hyperscaler colocation cannot meet. The decision to go on-prem is not nostalgia—it is engineering, compliance, and economics aligning at the same time. Book a 30-minute call with our infrastructure engineers to identify which of these reasons hits hardest at your station.

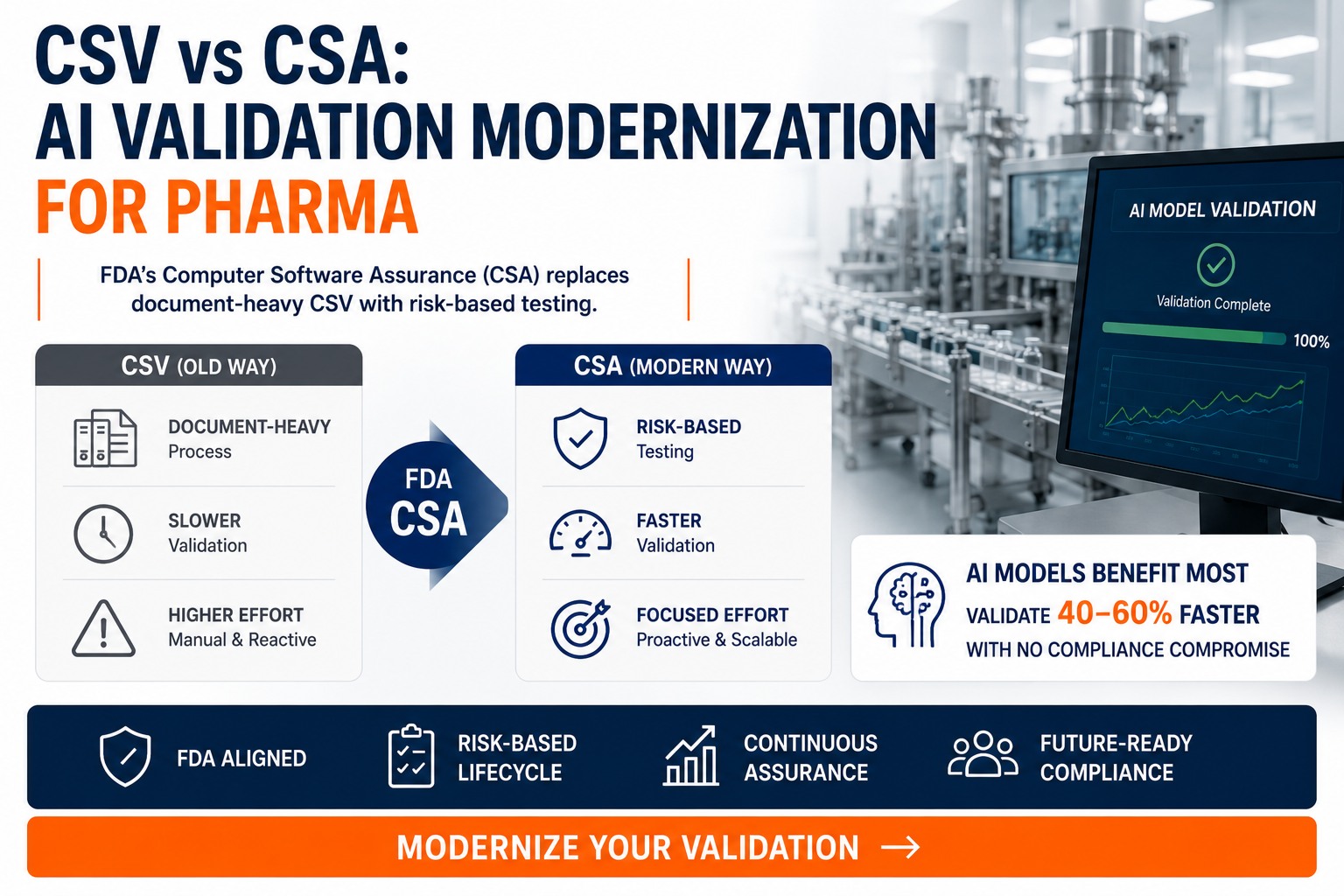

In regulated industries, plant operating data, fuel contracts, and emission readings cannot legally leave the plant. Privacy and AI governance rules in 2026 tightened this further: data residency is a control requirement, not a preference.

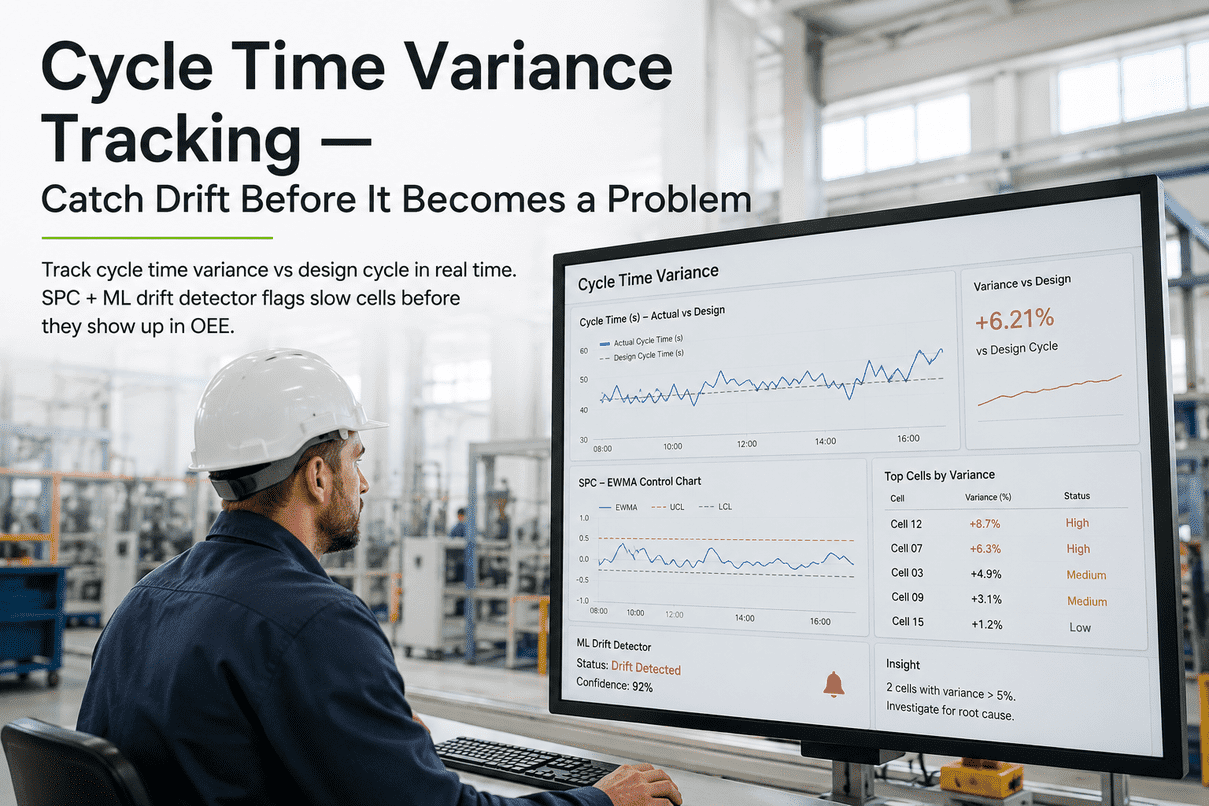

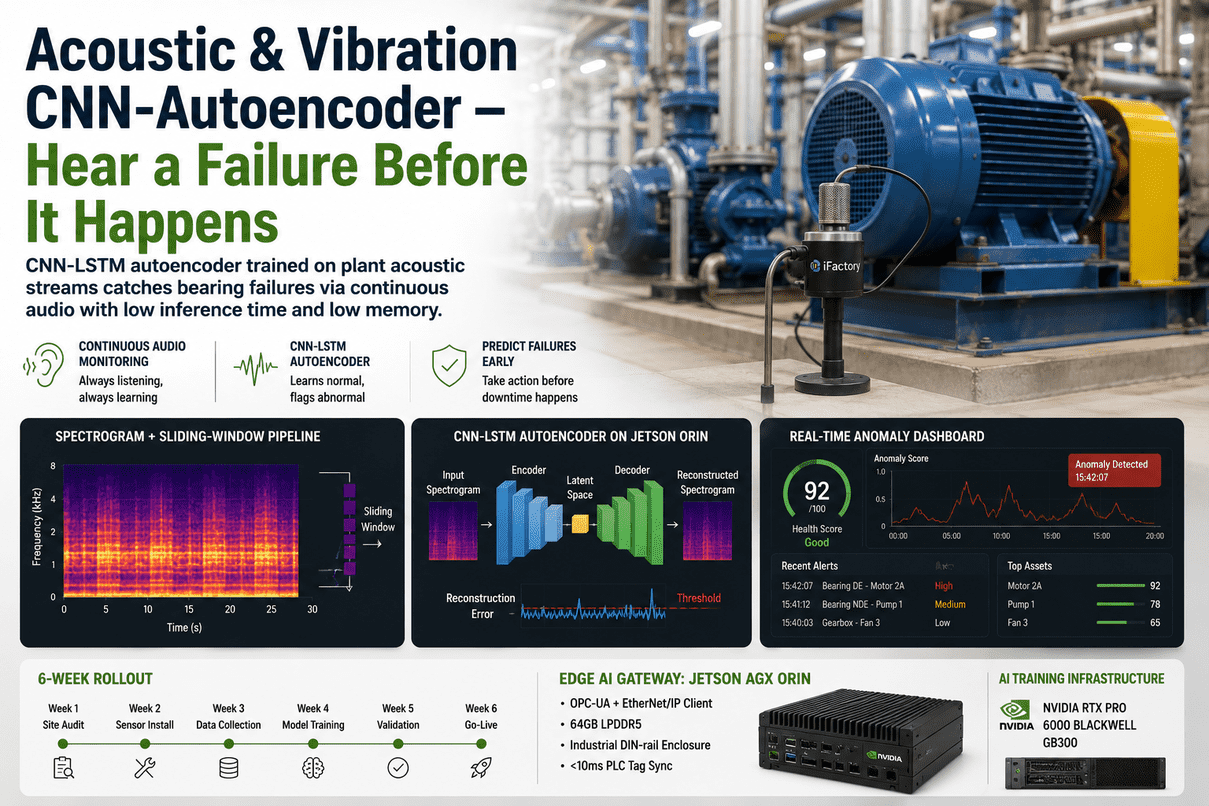

Turbine vibration analysis, combustion control, and emission soft-sensors run in tight control loops. A round-trip to a hyperscaler region adds 80–150 ms—too slow. On-prem GPU inference holds latency under 50 ms.

The DynoWiper attack on Polish energy infrastructure in December 2025 moved laterally from corporate IT into OT and destroyed PLC configurations. Cloud-routed AI traffic widens that bridge. On-prem keeps the attack surface closed.

A 660 MW thermal unit has spare auxiliary capacity for a 1–5 MW AI campus. For most plants, on-site generation eliminates the 18–24 month utility lead time that delays hyperscaler deployments.

The new metric for AI infrastructure success in 2026. Stranded plant power converts directly into AI tokens at a fraction of cloud cost—if the data center is co-located. Off-site dependency wastes the advantage.

The Four-Tier On-Premise AI Data Center for a Power Plant

Every working on-prem AI data center inside a power plant is built on the same four-tier reference architecture. Get the boundaries right between tiers and the rest is execution.

Liquid vs Air vs Hybrid — The Cooling Decision That Defines Your Footprint

Rack density has crossed a line that air cooling cannot follow. Even a dense conventional rack used to top out at 30–40 kW; AI racks now exceed 100 kW, with GB300 NVL72 at ~120 kW and designs approaching the megawatt range. Schedule a cooling-strategy review for your specific rack mix.

Traditional CRAH/CRAC + raised floor. Works for storage, networking, and inference nodes under 25 kW per rack. Familiar, low-risk, but caps your future density.

Cold-plate-to-chip warm-water loop. Mandatory for GB300/GB200 NVL72. Captures up to 92% of heat directly from CPU, GPU, and switch silicon. Industry standard for new AI builds.

Servers submerged in dielectric fluid. The fluid is commonly cited as carrying up to 1,200× more heat per unit volume than air. Compelling for multi-MW AI campuses, but it adds fluid management and serviceability complexity.
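To make the cooling trade-off concrete, here is a rough heat-balance sketch for a single direct-liquid-cooled rack. It uses the ~120 kW rack figure and the 92% capture figure from the text; the 10 K coolant temperature rise and the use of plain water properties are assumptions for illustration, not vendor specifications.

```python
WATER_CP_KJ_PER_KG_K = 4.186  # specific heat of water (assumed coolant)


def rack_heat_split(rack_kw, liquid_capture=0.92):
    """Split rack heat into (kW removed by liquid, kW left for room air)."""
    if not 0.0 <= liquid_capture <= 1.0:
        raise ValueError("liquid_capture must be between 0 and 1")
    liquid_kw = rack_kw * liquid_capture
    return liquid_kw, rack_kw - liquid_kw


def coolant_flow_lpm(heat_kw, delta_t_k=10.0):
    """Water flow in litres/minute needed to carry heat_kw at a given
    temperature rise, from Q = m_dot * cp * delta_T (1 litre ~ 1 kg)."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    kg_per_s = heat_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)
    return kg_per_s * 60.0


liquid_kw, air_kw = rack_heat_split(120.0)
print(f"liquid loop: {liquid_kw:.1f} kW, residual air load: {air_kw:.1f} kW")
print(f"coolant flow at 10 K rise: {coolant_flow_lpm(liquid_kw):.0f} L/min")
```

Even with cold plates capturing most of the heat, the residual air load per rack is not zero, so the room still needs some air handling.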

Choosing N+1, 2N, or 2N+1 — Tier Level Drives Cost

Power redundancy is where on-prem AI data centers either earn their uptime or burn capital. The right model depends on the workload. Plant-control AI demands the highest tier; experimental training can live on N+1.

Single distribution path with one extra component as backup. Cheapest, simplest. Acceptable for non-critical AI training and dev environments.

Two fully independent and identical distribution paths. Either path can carry the full load alone. Concurrent maintenance possible without downtime.

Two mirrored paths plus one additional component. Highest available redundancy. Required for AI tied directly to plant control or safety functions.
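The cost difference between the three models comes down to how many power components you buy for the same load. The sketch below counts components under each scheme as described above; the 50 kW module rating in the example is a hypothetical value, and `units_required` is an illustrative helper rather than an established tool.

```python
import math


def units_required(load_kw, unit_kw, scheme):
    """Count power components (e.g. UPS modules) for a redundancy scheme.

    N is the minimum number of units needed to carry the load.
    """
    if load_kw <= 0 or unit_kw <= 0:
        raise ValueError("load_kw and unit_kw must be positive")
    n = math.ceil(load_kw / unit_kw)
    counts = {
        "N+1": n + 1,       # one path, one spare component
        "2N": 2 * n,        # two independent, identical paths
        "2N+1": 2 * n + 1,  # two mirrored paths plus one extra component
    }
    if scheme not in counts:
        raise ValueError(f"unknown scheme: {scheme}")
    return counts[scheme]


# Example: a ~120 kW rack fed by hypothetical 50 kW modules (N = 3).
for scheme in ("N+1", "2N", "2N+1"):
    print(scheme, units_required(120, 50, scheme))
```

For this example the count goes from 4 units (N+1) to 6 (2N) to 7 (2N+1), which is why the tier choice should follow the workload rather than default to the maximum.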

The Purdue + IEC 62443 Model — Where AI Sits in the Network

The Purdue Reference Model maps where systems live; IEC 62443 defines how they are protected. Your on-prem AI data center sits at Level 3.5—the Industrial DMZ—with strictly controlled traffic to and from each level. Talk to our support team for an IEC 62443 zone-and-conduit review of your current architecture.
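One way to picture "strictly controlled traffic" is as a default-deny allow-list of conduits between zones. The sketch below is only an illustration of that idea: the zone names, service labels, and the specific conduits are hypothetical, and a real IEC 62443 design would derive them from a documented zone-and-conduit assessment.

```python
# Hypothetical conduits: (source zone, destination zone, service).
# Anything not listed is denied by default.
ALLOWED_CONDUITS = {
    ("L3_site_operations", "L3.5_ai_dmz", "historian-replication"),
    ("L3.5_ai_dmz", "L3_site_operations", "advisory-results"),
    ("L4_enterprise", "L3.5_ai_dmz", "dashboard-https"),
}


def is_flow_allowed(src_zone, dst_zone, service):
    """Return True only if this exact directed flow is an approved conduit."""
    return (src_zone, dst_zone, service) in ALLOWED_CONDUITS


# Enterprise IT may read dashboards in the DMZ...
print(is_flow_allowed("L4_enterprise", "L3.5_ai_dmz", "dashboard-https"))
# ...but has no conduit that reaches the control level directly.
print(is_flow_allowed("L4_enterprise", "L2_control", "modbus"))
```

Conduits are directional in this sketch, so allowing a flow into the DMZ does not implicitly allow the reverse path.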

When Sovereignty Means Fully Disconnected — The Air-Gapped AI DC

Nuclear plants, defense facilities, and the most regulated thermal sites cannot route any data outside the perimeter. The air-gapped on-prem AI data center makes that work in 2026 without sacrificing model freshness.

No live network path from the AI compute core to the public internet. Period. Updates arrive on signed, scanned removable media through a physical chain-of-custody process. Outbound telemetry is strictly forbidden.

- Zero outbound network connectivity

- Signed-media update chain-of-custody

- On-prem certificate authority for internal trust

- Local model registry, no cloud sync
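As a minimal sketch of one step in that chain of custody, the code below checks every file on the incoming media against a manifest of SHA-256 digests. The manifest layout (relative file name mapped to hex digest) is an assumption, and the sketch assumes the manifest's own signature has already been verified against the on-prem certificate authority, which is not shown here.

```python
import hashlib
from pathlib import Path


def sha256_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large weight files do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_bundle(bundle_dir, manifest):
    """Return a sorted list of manifest entries that are missing or altered.

    manifest: dict mapping relative file name -> expected SHA-256 hex digest
    (hypothetical format). An empty list means every file matched.
    """
    failures = []
    for name, expected in manifest.items():
        path = Path(bundle_dir) / name
        if not path.is_file() or sha256_file(path) != expected.lower():
            failures.append(name)
    return sorted(failures)
```

A bundle would be rejected at the transfer station if `verify_bundle` returns anything other than an empty list.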

The challenge is that frontier models update monthly. The solution: an offline model bundle process where signed weight files, evaluation artifacts, and changelogs are physically transferred and validated against an internal red-team suite before deployment.

- Quarterly bundle delivery via signed media

- Internal red-team gate before activation

- Differential weight updates to reduce volume

- Roll-back path retained for 4 prior versions
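The gate-then-promote flow with a bounded rollback history can be sketched in a few lines. `ModelRegistry` is a hypothetical class written for illustration, not part of any specific registry product; the only figures taken from the text are the red-team gate and the four retained prior versions.

```python
from collections import deque


class ModelRegistry:
    """Tracks the active model version and a bounded rollback history."""

    def __init__(self, keep_prior=4):
        self.active = None
        # deque(maxlen=...) silently drops the oldest entry when full.
        self._prior = deque(maxlen=keep_prior)

    def promote(self, version, red_team_passed):
        """Activate a new version only if it passed the internal gate."""
        if not red_team_passed:
            raise PermissionError(f"{version} failed the red-team gate")
        if self.active is not None:
            self._prior.append(self.active)
        self.active = version

    def rollback(self):
        """Revert to the most recent prior version."""
        if not self._prior:
            raise LookupError("no prior version retained")
        self.active = self._prior.pop()
        return self.active

    def retained(self):
        """Prior versions still available for rollback, oldest first."""
        return list(self._prior)
```

After six successive promotions, only the four versions before the active one remain available, which matches the retention rule in the list above.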

Sizing Your On-Prem AI Data Center — A Practical Calculator

Most over-builds start with the wrong question. The right one is: how many concurrent inference requests, at what latency, against what model? From there, everything else falls out. Book a 30-minute sizing session and we'll run your numbers live with you.

| Plant Profile | Compute Tier | Power Draw | Cooling | Floor Space | Workloads Supported |
|---|---|---|---|---|---|
| Single 660 MW unit · Pilot | 4× L40S + 2× H200 node | ~30 kW | Air or RDHx | ~50 sq ft | Inference, vibration, soft sensor |
| Multi-unit thermal · Production | 1× HGX B200 8-GPU | ~14 kW | Direct Liquid (DLC) | ~80 sq ft | Plant LLM, twin pilot, all sensors |
| Combined-cycle fleet · Production | 1× GB200 NVL72 | ~120 kW | Direct Liquid (mandatory) | ~150 sq ft | Foundation LLM, training, twin |
| Nuclear or defense · Sovereign | 1× GB300 NVL72 (air-gap) | ~120 kW | Direct Liquid + 2N CDU | ~200 sq ft | Reasoning, twin, all + air-gap |
| Multi-site fleet HQ | 2–4× GB300 NVL72 cluster | up to ~480 kW | Direct Liquid + chilled water plant | ~600 sq ft | Federated training, fleet reasoning |
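The sizing question of concurrent requests, latency, and model can be turned into a first-pass GPU count with Little's law (requests in flight = arrival rate × time in system). This is a rough sketch under stated assumptions: the per-GPU concurrency at your latency target must come from benchmarking your own model, and the 30% headroom and the example numbers are illustrative, not recommendations.

```python
import math


def gpus_needed(arrival_rate_rps, latency_s, per_gpu_concurrency, headroom=0.3):
    """First-pass GPU count for an inference service.

    arrival_rate_rps:    peak requests per second
    latency_s:           target time each request spends in the system
    per_gpu_concurrency: requests one GPU can serve at once while still
                         meeting latency_s (measured, model-specific)
    headroom:            fractional margin for bursts and maintenance
    """
    if per_gpu_concurrency <= 0:
        raise ValueError("per_gpu_concurrency must be positive")
    in_flight = arrival_rate_rps * latency_s  # Little's law: L = lambda * W
    return math.ceil(in_flight * (1 + headroom) / per_gpu_concurrency)


# Example: 200 requests/s at a 50 ms target, 4 concurrent requests per GPU.
print(gpus_needed(200, 0.05, 4))
```

Once the GPU count is known, the compute tier, power draw, and cooling columns in the table follow from the hardware that count maps to.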

The 18-Week On-Prem AI Data Center Build

A first-time on-prem AI data center inside a power plant is a coordinated facility, electrical, cooling, network, and software project. Here's what we run for a 1× GB300 NVL72 + DMZ deployment.

What Plant Engineers Ask Before Building On-Prem

These come up in every on-prem AI data center scoping call. Reach out to our support team for tailored answers on your specific plant.

For inference under 25 kW per rack, yes. For GB300/GB200 NVL72 at 120 kW, almost certainly not—you need a purpose-built room with 480V 3-phase, direct liquid cooling, and floor loading rated for ~440 psf.

2N is enough for production AI inference that supports decisions but does not directly close control loops. 2N+1 (Tier IV) is the right choice when AI output is part of a closed-loop control or safety-related system.

Purdue describes where systems live; IEC 62443 defines how they're protected through zones, conduits, and security levels. You need both. Purdue alone is a diagram, not a control. IEC 62443 turns the diagram into auditable security.

Quarterly signed-media transfer is the established pattern. Frontier model weights, evaluation results, and changelogs arrive on signed, scanned removable media, are validated against an internal red-team suite, and only then are promoted to production with rollback retained.

Why Power Plants Choose iFactory for On-Prem AI Data Centers

Building a hyperscaler-class data center in a generating station is not a lift-and-shift of cloud architecture. It is an OT-grade install where safety, sovereignty, and uptime constraints come first. Book a build-readiness review and we'll model the data center inside your plant before you sign a PO.

Get an On-Prem AI Data Center Site Plan for Your Plant

Thirty minutes with our infrastructure engineers. Bring your plant layout, electrical capacity, water spec, and IT/OT topology. We'll size the data center, map the redundancy tier, and give you a concrete 18-week build path—before you commit a single dollar to civil work.