Every hour a defective unit escapes your production line, it compounds — warranty claims, recall risk, brand damage, and the quiet erosion of customer trust that no marketing budget can undo. Manual visual inspection, the industry's default quality gate for over a century, now operates at a fundamental disadvantage: human inspectors fatigue, blink, and miss. AI-powered automated visual inspection does not.

iFactory AI Vision Cameras

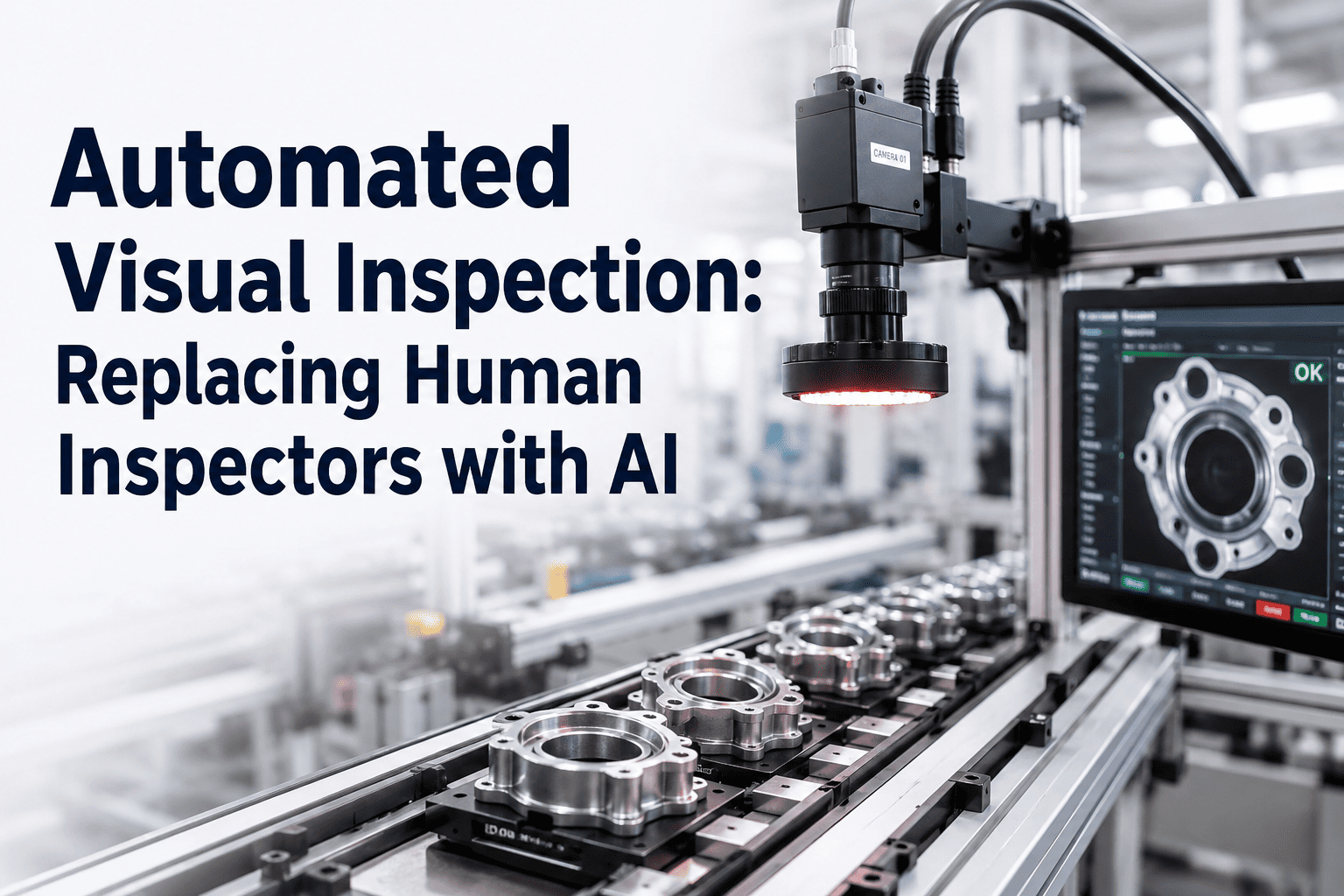

Automated Visual Inspection: Replacing Human Inspectors with AI

How manufacturers are cutting inspection costs by 70%, reducing defect escapes by 90%, and achieving zero-defect production targets through AI-powered machine vision — deployed in weeks, not months.

70%

Reduction in inspection labour cost

90%

Fewer defect escapes to customers

99.7%

Inspection accuracy at full line speed

6–8 weeks

Typical deployment to live inspection

The Hidden Cost of Human Visual Inspection

Manual quality inspection carries costs that rarely appear in a single line of the P&L — but accumulate across every shift, every line, and every shipment. Inspector fatigue degrades detection accuracy by up to 30% in the final two hours of a shift. Subjectivity creates inconsistency across inspectors and sites. Documentation is manual, slow, and incomplete. And every inspector is a cost centre that scales linearly with output volume.

Fatigue-Driven Escapes

Inspector accuracy drops measurably after hour six. Defects that should be caught leave the facility — and surface as warranty claims weeks later.

Linear Labour Scaling

More output requires more inspectors. There is no leverage in the model — every unit of growth adds headcount, overhead, and scheduling complexity.

Subjective Inconsistency

Two inspectors will reject different units on the same defect class. Cross-site consistency is nearly impossible without standardised, objective inspection criteria.

Zero Traceability

Paper logs and spreadsheets cannot provide the granular defect traceability required for root cause analysis, regulatory compliance, or customer audit response.

What AI Visual Inspection Actually Does

Automated visual inspection (AVI) replaces the human eye with high-resolution industrial cameras, structured lighting, and deep learning models trained to detect, classify, and log defects at production speed. Unlike rule-based machine vision systems that require explicit programming for every defect type, AI-based inspection learns from labelled image data — and continues improving as it accumulates more examples from your specific production environment.

01

Camera and Lighting Setup

Industrial-grade cameras — 2MP to 20MP depending on defect resolution requirements — are positioned at critical inspection points. Structured lighting (coaxial, dark field, or telecentric) is configured to maximise defect contrast. Proper lighting accounts for up to 70% of inspection accuracy, making it the foundation of any AVI deployment.
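
As a rough illustration of how defect size drives camera resolution, the sketch below estimates the sensor resolution needed for a given field of view. The rule that the smallest defect should span about three pixels is an assumed rule of thumb, not an iFactory specification, and the function names are hypothetical.

```python
def required_pixels(field_of_view_um: int, smallest_defect_um: int,
                    pixels_per_defect: int = 3) -> int:
    """Pixels needed along one axis so the smallest defect spans
    `pixels_per_defect` pixels (assumed rule of thumb)."""
    if field_of_view_um <= 0 or smallest_defect_um <= 0 or pixels_per_defect <= 0:
        raise ValueError("all inputs must be positive")
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-field_of_view_um * pixels_per_defect // smallest_defect_um)


def required_megapixels(fov_width_um: int, fov_height_um: int,
                        smallest_defect_um: int,
                        pixels_per_defect: int = 3) -> float:
    """Approximate sensor size in megapixels for a rectangular field of view."""
    width_px = required_pixels(fov_width_um, smallest_defect_um, pixels_per_defect)
    height_px = required_pixels(fov_height_um, smallest_defect_um, pixels_per_defect)
    return width_px * height_px / 1_000_000


# Hypothetical example: 200 mm x 150 mm field of view, 0.2 mm smallest defect.
example_mp = required_megapixels(200_000, 150_000, 200)
```

Under these assumed inputs the estimate is 3000 x 2250 pixels, or 6.75 MP, which sits inside the 2MP to 20MP range quoted above. Lens quality, lighting, and motion blur also affect what is resolvable, so this is a starting point only.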

02

Training Data Collection and Labelling

The AI model requires labelled examples of both conforming and non-conforming units. iFactory's platform accelerates this process with semi-automated labelling tools — typically 500 to 2,000 images per defect class are sufficient to achieve production-ready accuracy on most applications.

03

Model Training and Validation

Deep learning models — convolutional neural networks fine-tuned on your product and defect taxonomy — are trained and validated against a held-out test set. Accuracy, precision, recall, and false positive rates are reported before any live deployment. No model goes live until it exceeds your defined acceptance thresholds.
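
The acceptance gate described above can be sketched as a small calculation over held-out test results. This is a minimal illustration, not iFactory's validation code: the class and function names are hypothetical, and the threshold values in the example are placeholders for whatever your quality team defines. When defects are rare, accuracy alone can look high even if recall is poor, which is why all four figures are checked together.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    true_pos: int   # defective units correctly rejected
    false_pos: int  # conforming units wrongly rejected
    true_neg: int   # conforming units correctly passed
    false_neg: int  # defective units wrongly passed (escapes)


def _ratio(numerator: int, denominator: int) -> float:
    # Return 0.0 instead of raising when a category is empty.
    return numerator / denominator if denominator else 0.0


def validation_metrics(c: ConfusionCounts) -> dict:
    total = c.true_pos + c.false_pos + c.true_neg + c.false_neg
    return {
        "accuracy": _ratio(c.true_pos + c.true_neg, total),
        "precision": _ratio(c.true_pos, c.true_pos + c.false_pos),
        "recall": _ratio(c.true_pos, c.true_pos + c.false_neg),
        "false_positive_rate": _ratio(c.false_pos, c.false_pos + c.true_neg),
    }


def meets_acceptance(metrics: dict, min_accuracy: float, min_precision: float,
                     min_recall: float, max_false_positive_rate: float) -> bool:
    """True only if every metric clears its threshold."""
    return (metrics["accuracy"] >= min_accuracy
            and metrics["precision"] >= min_precision
            and metrics["recall"] >= min_recall
            and metrics["false_positive_rate"] <= max_false_positive_rate)


# Hypothetical held-out set: 1,000 units, 100 of them defective.
counts = ConfusionCounts(true_pos=95, false_pos=5, true_neg=895, false_neg=5)
report = validation_metrics(counts)
go_live = meets_acceptance(report, min_accuracy=0.98, min_precision=0.90,
                           min_recall=0.90, max_false_positive_rate=0.01)
```

In this made-up example the model would clear the gate; raising the recall threshold to 0.99 would block deployment even though accuracy stays at 99%.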

04

Live Inspection and Continuous Learning

Once deployed, the system inspects every unit at line speed — typically 100ms to 500ms per inspection cycle. Results are logged, defects are classified and imaged, and the AI model retrains on edge cases flagged by quality engineers — continuously improving accuracy over time.
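
To relate inspection cycle time to line speed, the arithmetic can be sketched as below. It assumes a single camera station that inspects units one after another; `units_per_cycle` is a hypothetical parameter for setups that capture several units in one frame.

```python
def units_per_minute(cycle_ms: float, units_per_cycle: int = 1) -> float:
    """Throughput of one inspection station for a given cycle time."""
    if cycle_ms <= 0 or units_per_cycle <= 0:
        raise ValueError("cycle time and units per cycle must be positive")
    return 60_000 / cycle_ms * units_per_cycle


def max_cycle_ms(line_rate_upm: float, units_per_cycle: int = 1) -> float:
    """Longest cycle time that still keeps up with the line rate."""
    if line_rate_upm <= 0 or units_per_cycle <= 0:
        raise ValueError("line rate and units per cycle must be positive")
    return 60_000 * units_per_cycle / line_rate_upm


slow = units_per_minute(500)   # 120.0 units per minute
fast = units_per_minute(100)   # 600.0 units per minute
```

Under this assumption, a 100ms to 500ms cycle corresponds to 120 to 600 units per minute per station. Rates above that imply a shorter cycle, pipelined capture and inference, or more than one unit per frame.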

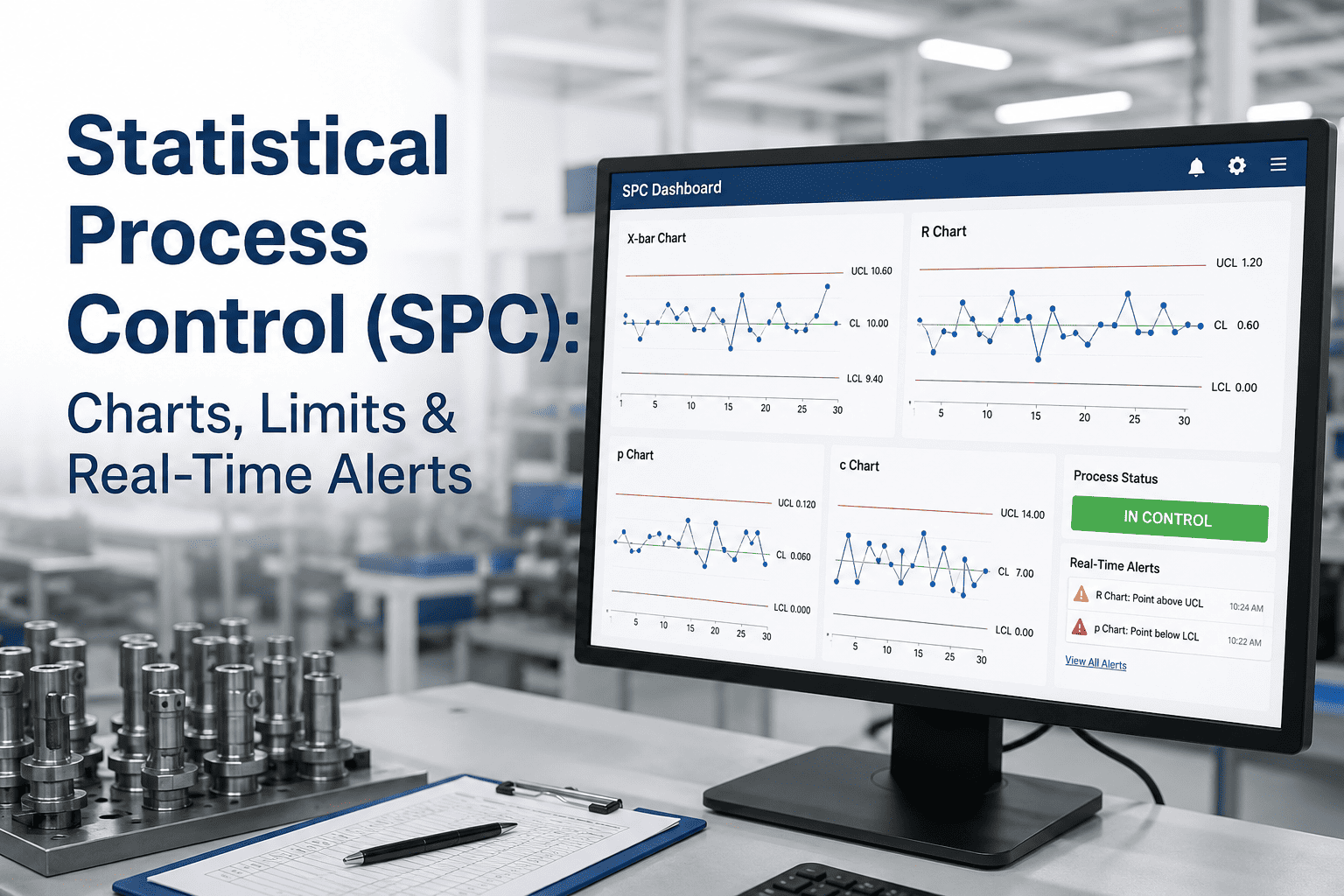

Legacy Inspection vs. AI Visual Inspection: The Performance Gap

The operational difference between manual inspection and AI-powered automated visual inspection is not incremental. It is structural. The table below maps the specific failure modes of legacy inspection against the capabilities of an AI vision deployment.

| Dimension | Legacy Human Inspection | AI Automated Visual Inspection |
| --- | --- | --- |
| Inspection Speed | 5–15 units per minute per inspector | 200–1,200 units per minute per camera |
| Accuracy Over Time | Degrades 20–30% after hour six of shift | Consistent 99.7%+ at all hours and volumes |
| Defect Classification | Binary pass/fail with subjective severity | Multi-class with pixel-level location and severity scoring |
| Repeatability | ±15–25% variation between inspectors | 100% repeatable: same result on same input every time |
| Documentation | Manual logs, often incomplete or delayed | Automatic image capture and structured defect records per unit |
| Scaling Cost | Linear: each throughput increase adds headcount | Near-zero marginal cost per additional unit inspected |
| Root Cause Analysis | Relies on inspector memory and anecdote | Defect trending, heatmaps, and time-stamped image libraries |
| Night Shift Performance | Significantly below day shift average | Identical performance at all hours |


The Business Impact Across Three Dimensions

Automated visual inspection creates value across three distinct areas of the business — quality, operations, and finance. Each produces measurable outcomes within the first 90 days of deployment.

Quality and Compliance

- Defect escape rate reduced by 85–92% across all product lines

- Full traceability for every unit — image, timestamp, defect classification, and disposition

- Automated compliance documentation for ISO 9001, IATF 16949, and FDA 21 CFR Part 11

- Customer audit response time reduced from days to hours

- First-pass yield improvement of 3–8 percentage points at comparable volume

Operational Efficiency

- Inspection labour cost reduced 60–75% within 12 months of deployment

- Inspection throughput scales with line speed — no additional headcount required

- Inspection bottlenecks eliminated: each AI inspection cycle takes 100–500 ms per unit

- Inspector redeployment to higher-value process engineering and oversight roles

- Consistent multi-site inspection standards without cross-site training programmes

Financial Outcomes

- Warranty claim volume reduced by 40–65% within the first year of operation

- Customer-facing defect costs sharply reduced, protecting contract renewal and pricing power

- Scrap and rework cost visibility enables targeted process improvement investment

- Typical ROI payback in 8–14 months on full deployment cost

- Annual savings of $400K–$2.1M depending on production volume and defect cost profile
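
The payback figure above follows from simple arithmetic, sketched here so you can plug in your own numbers. The deployment cost and savings in the example are hypothetical inputs chosen for illustration, not iFactory pricing, and the calculation ignores ramp-up time and discounting.

```python
def payback_months(deployment_cost: float, annual_savings: float) -> float:
    """Months until cumulative savings equal the deployment cost,
    assuming savings accrue evenly across the year."""
    if deployment_cost < 0 or annual_savings <= 0:
        raise ValueError("cost must be non-negative and savings positive")
    return deployment_cost / (annual_savings / 12)


def net_return_multiple(deployment_cost: float, annual_savings: float,
                        years: float) -> float:
    """Net savings over `years`, expressed as a multiple of deployment cost."""
    if deployment_cost <= 0:
        raise ValueError("deployment cost must be positive")
    return (annual_savings * years - deployment_cost) / deployment_cost


# Hypothetical inputs: $600K deployment, $720K in annual savings.
months = payback_months(600_000, 720_000)            # 10.0 months
multiple = net_return_multiple(600_000, 720_000, 3)  # 2.6x net over three years
```

With these assumed inputs, payback lands at 10 months, inside the 8–14 month range quoted above.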

Defect Types AI Vision Inspection Detects

iFactory's AI vision platform handles the full spectrum of surface and dimensional defects across discrete manufacturing, assembly, and packaging environments. Common defect categories include:

- Surface scratches and abrasions at micron-scale resolution

- Porosity, voids, and inclusions in cast or sintered components

- Dimensional non-conformance via calibrated photogrammetry

- Colour deviation and print registration on packaging and labels

- Missing, misaligned, or incorrect assembly components

- Solder joint defects: bridging, cold joint, insufficient coverage

- Coating defects: bare spots, drips, orange peel, fisheye

- Weld quality: undercut, porosity, incomplete fusion, spattering

- Seal integrity on blister packs, pouches, and closures

Implementation: From Camera Mount to Live Inspection in Six Weeks

The most common concern among quality directors considering AVI is deployment complexity. The iFactory approach is designed specifically to avoid the extended timelines and integration challenges that have plagued traditional machine vision deployments. A six-week path from site assessment to live production inspection is standard.

Week 1–2

Site Assessment and Camera Specification

Engineering audit of inspection points, line speed, product geometry, and defect classification requirements. Camera resolution, frame rate, and lighting configuration specified. Integration path to existing MES or ERP confirmed.

Week 2–3

Hardware Installation and Data Collection

Camera brackets, lighting rigs, and edge computing hardware installed during scheduled maintenance window — no production shutdown required. Image collection begins immediately using existing production flow.

Week 3–5

Model Training and Validation

Quality team labels collected images using iFactory's semi-automated annotation interface. AI models are trained, validated against hold-out sets, and tuned until accuracy, precision, and recall thresholds are met. False positive rate is tuned with production team feedback.

Week 6

Live Deployment and Parallel Validation

AI inspection goes live alongside human inspection for one week of parallel validation. Agreement rate and any systematic gaps are documented. Once agreement thresholds are met, AI inspection becomes the primary quality gate with human oversight for escalated cases only.
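
One way to summarise the parallel validation week is to compare AI and human decisions unit by unit. The sketch below is an assumed approach, not iFactory's reporting format; it treats each decision as a boolean where `True` means reject, and the function name is hypothetical.

```python
from typing import Sequence


def agreement_report(ai_rejects: Sequence[bool],
                     human_rejects: Sequence[bool]) -> dict:
    """Compare per-unit decisions (True = reject) from AI and human inspection."""
    if len(ai_rejects) != len(human_rejects) or not ai_rejects:
        raise ValueError("need two non-empty decision lists of equal length")
    counts = {"both_reject": 0, "both_pass": 0,
              "ai_only_reject": 0, "human_only_reject": 0}
    for ai, human in zip(ai_rejects, human_rejects):
        if ai and human:
            counts["both_reject"] += 1
        elif not ai and not human:
            counts["both_pass"] += 1
        elif ai:
            counts["ai_only_reject"] += 1      # possible AI false positive
        else:
            counts["human_only_reject"] += 1   # possible AI escape
    agreed = counts["both_reject"] + counts["both_pass"]
    counts["agreement_rate"] = agreed / len(ai_rejects)
    return counts


# Hypothetical decisions for ten units.
ai = [True, False, False, True, False, False, False, True, False, False]
human = [True, False, False, False, False, False, True, True, False, False]
summary = agreement_report(ai, human)
```

Here the two agree on 8 of 10 units. The disagreements matter more than the headline rate: units only the human rejected point to possible escapes, and units only the AI rejected point to possible false positives. Each needs review, since either party can be the one in error.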

Frequently Asked Questions

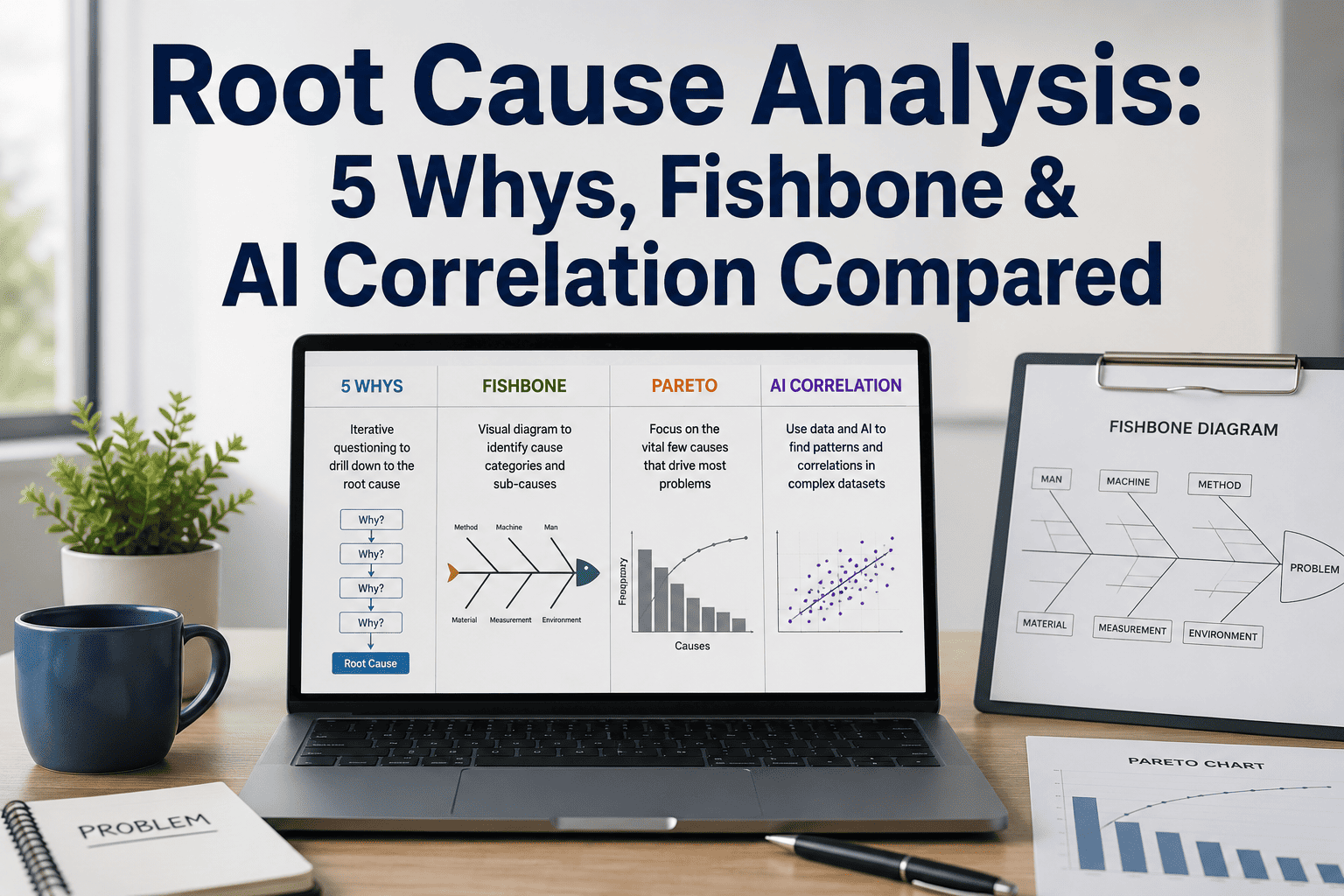

How much training data is required before the model is accurate?

Most applications reach production-ready accuracy with 500 to 2,000 labelled images per defect class. For simple surface defects on consistent backgrounds, 300 images per class can be sufficient. iFactory's semi-automated labelling tools reduce the time required to build training datasets by 60% compared to manual annotation workflows.

What happens when a new defect type appears that the model has not seen?

New defect types not in the training set will typically be flagged as anomalies by the outlier detection layer — rather than passed as conforming. Quality engineers review flagged anomalies, label them, and trigger a model update cycle. New defect classes are incorporated within 3 to 5 business days in most cases.
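
The text above does not say how the outlier detection layer works. As a stand-in, the sketch below uses a simple confidence threshold: if the classifier's top class probability is too low, the unit is routed to human review instead of being passed. The function name, the `anomaly_review` label, and the 0.90 threshold are all assumptions for illustration.

```python
from typing import Mapping


def route_unit(class_probs: Mapping[str, float],
               min_confidence: float = 0.90) -> str:
    """Return the predicted class, or 'anomaly_review' when the model
    is not confident enough in any known class."""
    if not class_probs:
        raise ValueError("class_probs must not be empty")
    label, confidence = max(class_probs.items(), key=lambda item: item[1])
    if confidence < min_confidence:
        return "anomaly_review"
    return label


confident = route_unit({"conforming": 0.98, "scratch": 0.02})
uncertain = route_unit({"conforming": 0.55, "scratch": 0.45})
```

A plain confidence threshold is only a baseline. Classifiers can be overconfident on inputs unlike anything in their training set, so production systems typically add a dedicated anomaly detector.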

Can AI inspection handle product variants and changeovers?

Yes. The iFactory platform supports multi-product model libraries. Product changeover triggers an automatic model switch — no manual reconfiguration required. If a new variant has not been trained, the system flags the gap and queues a training data collection cycle rather than running an unapproved model.
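
The changeover behaviour described above can be pictured as a lookup against a library of approved models. This is a conceptual sketch with hypothetical class, method, and identifier names; it does not reflect iFactory's actual interfaces.

```python
from typing import Dict, List, Optional


class ModelLibrary:
    """Maps product variants to approved inspection models."""

    def __init__(self) -> None:
        self._approved: Dict[str, str] = {}
        self.training_queue: List[str] = []

    def approve(self, product_id: str, model_version: str) -> None:
        """Record a validated model for a product variant."""
        self._approved[product_id] = model_version
        if product_id in self.training_queue:
            self.training_queue.remove(product_id)

    def on_changeover(self, product_id: str) -> Optional[str]:
        """Return the approved model for the new product, or None after
        queuing the variant for training data collection."""
        model = self._approved.get(product_id)
        if model is None and product_id not in self.training_queue:
            self.training_queue.append(product_id)
        return model


library = ModelLibrary()
library.approve("bracket-A", "bracket-A-v3")
active = library.on_changeover("bracket-A")    # approved model is selected
missing = library.on_changeover("bracket-B")   # None: variant queued for training
```

Returning `None` for an untrained variant makes the gap explicit to the caller, so the line can fall back to human inspection instead of running an unapproved model.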

How does the system integrate with our existing MES and quality systems?

Integration is via REST API and standard industrial protocols. Inspection results — including defect images, classification, severity, and disposition — can be pushed to any MES, CMMS, or ERP in real time. iFactory has pre-built connectors for SAP, Oracle, Infor, and most major quality management platforms.
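
To make the integration concrete, the sketch below builds a JSON inspection record of the kind that could be posted to an MES endpoint. The field names and values are hypothetical and do not represent iFactory's API schema; consult the actual API documentation for the real payload format.

```python
import json
from datetime import datetime, timezone


def build_inspection_record(unit_id: str, defect_class: str, severity: float,
                            disposition: str, image_uri: str,
                            inspected_at: datetime) -> str:
    """Serialise one inspection result as JSON (hypothetical schema)."""
    if not 0.0 <= severity <= 1.0:
        raise ValueError("severity is assumed to be a score between 0 and 1")
    record = {
        "unit_id": unit_id,
        "inspected_at": inspected_at.isoformat(),
        "defect_class": defect_class,
        "severity": severity,
        "disposition": disposition,   # e.g. "pass", "reject", "review"
        "image_uri": image_uri,
    }
    return json.dumps(record, sort_keys=True)


# Hypothetical example record.
payload = build_inspection_record(
    unit_id="SN-000123",
    defect_class="scratch",
    severity=0.72,
    disposition="reject",
    image_uri="images/SN-000123.png",
    inspected_at=datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc),
)
```

The record carries the fields listed above (image reference, classification, severity, and disposition) plus a timestamp, so the receiving system can trace each unit.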

What accuracy can we realistically expect from day one versus six months in?

Day-one accuracy on trained defect classes typically runs 95 to 97%. As the model accumulates edge cases from production and undergoes regular retraining cycles, accuracy converges to 99.3 to 99.8% within three to six months. Continuous learning is a core architectural feature — the model improves with every production run.

Zero-Defect Production Starts Here

Stop Relying on Human Eyes to Protect Your Quality Standards

iFactory's AI Vision Cameras deliver 99.7% inspection accuracy at full production speed, with full defect traceability, automatic compliance documentation, and continuous model improvement built in. First measurable outcomes within 90 days, with typical payback in 8–14 months.

70%

Labour cost reduction

10-30x

Return on investment