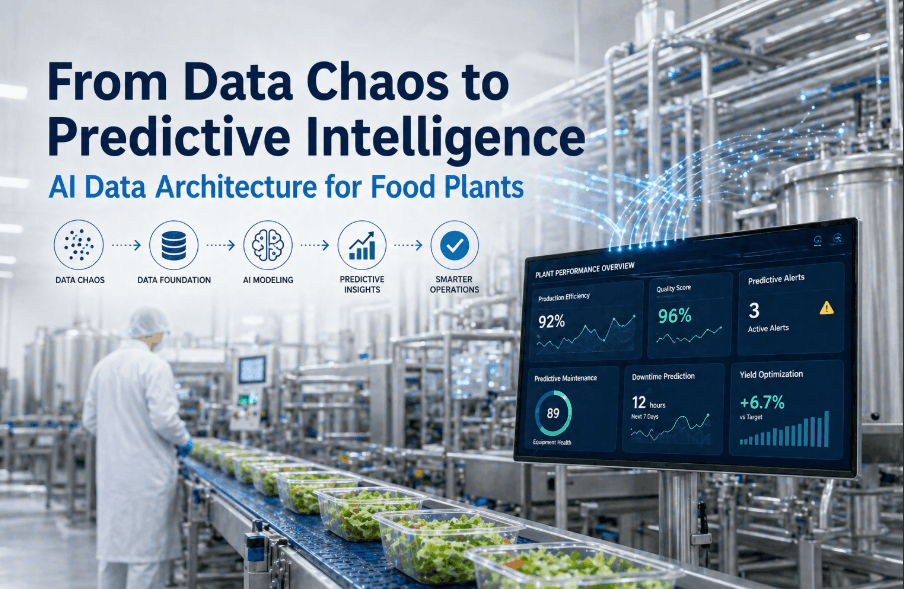

Food manufacturing facilities generate enormous volumes of operational data every second—sensor readings, batch records, equipment telemetry, quality inspection results, and energy consumption logs—yet most plants operate in a state of persistent data chaos. Siloed systems, incompatible formats, and legacy infrastructure prevent this raw data from becoming actionable intelligence. AI data architecture for food plants solves this problem by creating a unified, scalable foundation that connects every data source across the facility, enabling real-time predictive analytics, proactive equipment management, and enterprise-wide manufacturing intelligence. If your facility is still wrestling with fragmented data systems, Book a Demo to see how a modern data architecture can transform your production operation within weeks.

Why Data Chaos Is the Biggest Barrier to Predictive Intelligence in Food Manufacturing

The modern food plant is not short on data. A mid-sized facility with 200 production assets generates upwards of 10 million data points per day across vibration sensors, SCADA systems, ERP platforms, quality management systems, and energy monitoring tools. The problem is not data volume—it is data fragmentation. Without a coherent data integration platform, this information lives in isolated silos that cannot communicate with each other, making cross-system analytics, predictive modeling, and real-time decision-making structurally impossible.

The consequences are measurable and severe. Reliability engineers spend hours manually extracting data from disconnected systems. Quality teams cannot correlate equipment performance data with batch outcome records. Operations leadership makes capital allocation decisions without access to accurate asset health history. Every one of these inefficiencies is a direct product of poor data pipeline architecture—and every one of them disappears when a properly designed AI data architecture is in place.

Siloed OT and IT Systems

Operational technology systems—PLCs, SCADA, historians—collect rich process data that never reaches IT business intelligence platforms. This OT/IT gap prevents holistic manufacturing data platform integration and leaves critical operational context invisible to enterprise decision-makers.

Incompatible Data Formats

Equipment from different manufacturers communicates over different industrial protocols, such as Modbus, OPC-UA, MQTT, and Profibus, each of which requires a specialized connector and transformation logic before data can flow into a unified industrial IoT platform for cross-asset analysis and predictive modeling.

Latency and Batch Processing Gaps

Legacy data infrastructure relies on batch processing cycles that create hours-long delays between an event occurring on the production floor and that event appearing in reporting dashboards—making genuine real-time data processing for predictive maintenance and quality control structurally impossible.

No Unified Data Lake

Without a centralized data lake for manufacturing, historical asset performance data, quality records, and maintenance logs cannot be combined into the training datasets that machine learning models require to deliver accurate failure prediction and process optimization recommendations.

The Four-Layer AI Data Architecture Framework for Food Manufacturing Plants

Effective AI data architecture for food manufacturing is not a single technology—it is a structured, layered framework that transforms raw industrial signals into refined predictive intelligence. Each layer has a distinct function, and the integrity of the overall system depends on each layer performing its role with precision. Understanding this architecture is the foundation of any successful digital transformation manufacturing initiative. Facilities ready to map this framework to their specific environment can Book a Demo for a live architecture review with our data engineering team.

Data Acquisition & Edge Processing

The foundation of the architecture begins at the asset level. Industrial-grade sensors capture high-frequency vibration, temperature, pressure, current draw, and flow rate data continuously from every production asset. Edge computing gateways perform initial signal processing—filtering noise, compressing data, and performing FFT analysis—before transmitting structured feature vectors upstream. This edge-first approach reduces bandwidth requirements by up to 90% while ensuring that latency-sensitive predictive alerts can be generated locally without cloud round-trips.

Protocols: OPC-UA · MQTT · Modbus · Profibus

Data Integration & Streaming Pipeline

The integration layer is where data chaos is resolved. A purpose-built data integration platform ingests streams from edge gateways, SCADA historians, ERP systems, CMMS platforms, and laboratory information management systems simultaneously. Schema normalization, protocol translation, and real-time data quality validation ensure that all incoming data meets the structural requirements of downstream AI models. Event-driven streaming pipelines, built on technologies like Apache Kafka or Azure Event Hubs, enable sub-second data latency across the entire facility data ecosystem.

Latency: Sub-100ms · Throughput: 10M+ events/day

Manufacturing Data Lake & Feature Store

All normalized data streams converge in a structured data lake for manufacturing that stores both hot (real-time) and cold (historical) data in a queryable, time-series-optimized format. A feature store sits above the raw data layer, pre-computing the engineered variables—rolling averages, spectral energy bands, interoperability ratios—that machine learning models consume during inference. This architecture enables both real-time anomaly scoring and retrospective model training on historical failure events simultaneously, without resource contention.

Retention: 7+ years · Query: Sub-second · Format: Parquet + Time-series

AI Analytics & Operational Intelligence Layer

The intelligence layer hosts the machine learning models, predictive analytics software engines, and operational dashboards that convert processed data into business value. Multi-model ensembles handle anomaly detection, remaining useful life estimation, quality correlation analysis, and energy optimization simultaneously. Outputs are surfaced through role-based dashboards for reliability engineers, operations directors, and executive leadership—and pushed directly to CMMS and ERP systems via API to trigger automated work orders and procurement actions without manual intervention.

Outputs: Work Orders · Alerts · KPI Dashboards · ERP Sync

Building a Unified Manufacturing Data Platform: System Integration Deep Dive

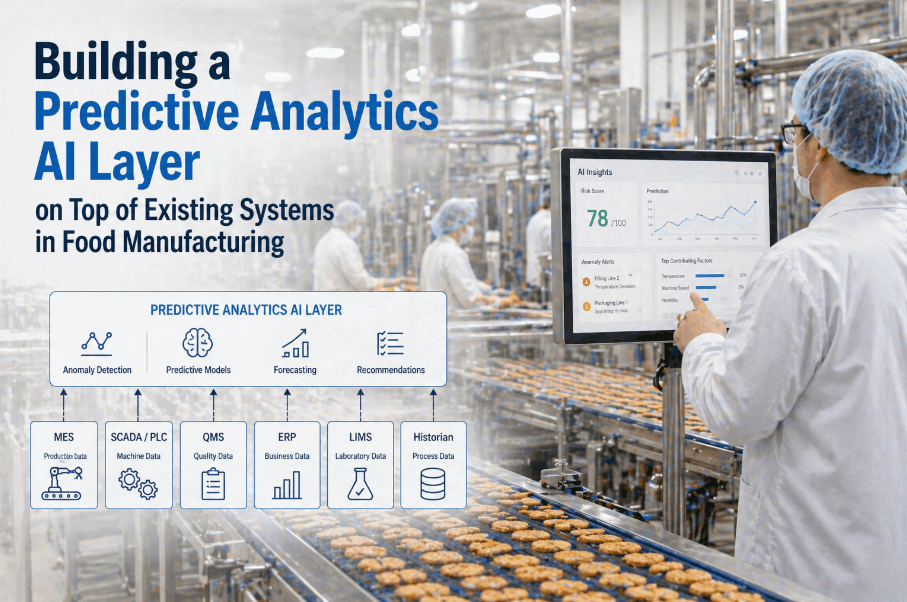

The most technically demanding component of any smart factory data platform initiative is the system integration layer. Food manufacturing facilities typically operate a heterogeneous mix of technologies—some acquired over decades—that were never designed to share data. Successfully integrating these systems without disrupting production requires a connector-first architecture strategy that prioritizes non-intrusive data extraction over invasive system modifications. Facilities looking to assess their current integration complexity can Book a Demo and receive a complimentary integration readiness assessment.

| System Type | Common Platforms | Integration Method | Data Contribution | Latency Class |
|---|---|---|---|---|
| SCADA / Historians | OSIsoft PI, Wonderware, iFIX | OPC-UA connector / REST API | Process parameters, setpoints, alarms | Near real-time (1–5s) |
| ERP Systems | SAP S/4HANA, Oracle, Microsoft Dynamics | REST API / SAP RFC | Production orders, BOMs, inventory levels | Batch (minutes) |
| CMMS Platforms | Maximo, SAP PM, UpKeep, MP2 | REST API / webhook | Work orders, maintenance history, asset master data | Bidirectional, near real-time |
| Industrial IoT Sensors | MEMS vibration, thermal, current clamps | MQTT / edge gateway | High-frequency vibration, temperature, current | Streaming (sub-second) |
| Quality / LIMS | LabVantage, LIMS Factory, custom QMS | REST API / database connector | Batch test results, in-process quality checks | Batch (5–30 minutes) |
| Energy Management | Schneider EcoStruxure, Siemens Desigo | Modbus / BACnet / REST | Electricity, gas, water, compressed air consumption | Near real-time (15–60s) |

How the Predictive Data Pipeline Converts Raw Signals Into Failure Forecasts

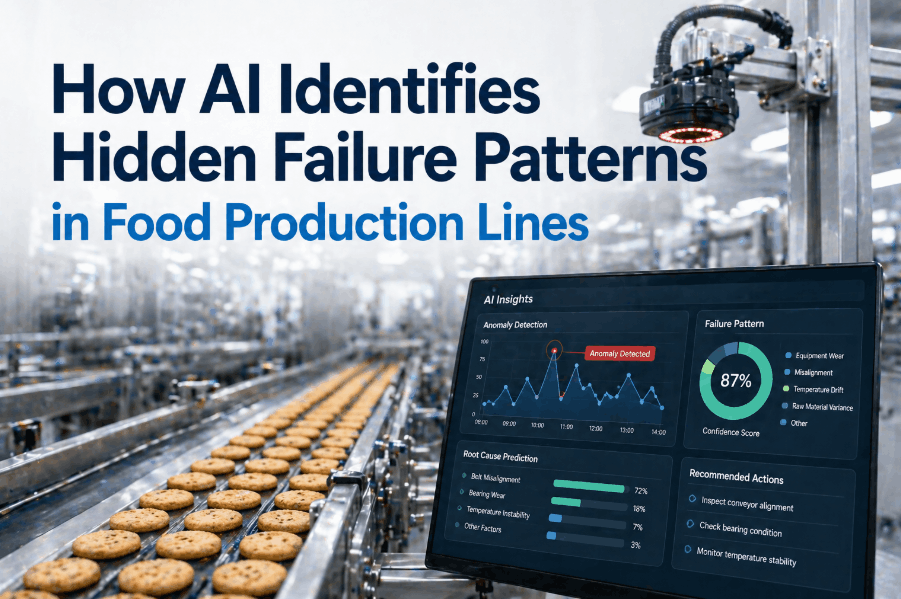

The data pipeline architecture that powers predictive maintenance in food manufacturing must handle four distinct data transformation stages in near-real-time—from raw sensor ingestion through feature engineering, model inference, and alert generation. Each stage introduces computational complexity, and the engineering choices made at each transition point determine whether the platform delivers genuine weeks-early failure prediction or simply an expensive monitoring system that triggers alarms too late to prevent downtime. Understanding this pipeline is essential for any reliability or data engineering team evaluating manufacturing intelligence software.

Raw Signal Ingestion & Validation

High-frequency sensor streams enter the pipeline at rates up to 100kHz per channel. Automated data quality checks flag missing values, out-of-range readings, and sensor drift signatures before data advances downstream. Invalid data is quarantined and flagged for sensor health review, ensuring model inference never occurs on corrupted input data.
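As a rough illustration of the validation stage, the sketch below partitions a batch of readings into clean and quarantined sets. The `Reading` type, the sensor name, and the range limits are hypothetical, and drift detection is left out because it needs a longer history than a single batch.

```python
from dataclasses import dataclass
from math import isnan
from typing import List, Optional, Tuple


@dataclass
class Reading:
    sensor_id: str
    timestamp: float         # seconds since epoch
    value: Optional[float]   # None models a dropped sample


def validate(reading: Reading, low: float, high: float) -> str:
    """Return 'ok', 'missing', or 'out_of_range' for one reading."""
    if reading.value is None or isnan(reading.value):
        return "missing"
    if not (low <= reading.value <= high):
        return "out_of_range"
    return "ok"


def split_batch(readings: List[Reading], low: float, high: float):
    """Partition a batch into clean readings and quarantined (reading, reason) pairs."""
    clean: List[Reading] = []
    quarantined: List[Tuple[Reading, str]] = []
    for reading in readings:
        status = validate(reading, low, high)
        if status == "ok":
            clean.append(reading)
        else:
            quarantined.append((reading, status))
    return clean, quarantined


# Hypothetical motor temperature channel with an assumed valid range in Celsius.
batch = [
    Reading("motor-7-temp", 0.0, 61.5),
    Reading("motor-7-temp", 1.0, None),
    Reading("motor-7-temp", 2.0, 412.0),
]
clean, quarantined = split_batch(batch, low=-20.0, high=150.0)
```

Only the `clean` list would advance to feature engineering; the quarantined pairs carry the reason needed for a sensor health review.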

Feature Engineering & Spectral Transformation

Time-domain signals are transformed into frequency spectra via Fast Fourier Transform. Statistical features—RMS amplitude, kurtosis, crest factor, bearing defect frequency energy bands—are extracted and stored in the feature store. These engineered variables are what AI models actually consume, and their quality determines prediction accuracy.
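The features named above can be computed in a few lines. This is a minimal sketch assuming NumPy is available; the function name, the synthetic 120 Hz test tone, and the 100 to 140 Hz band are illustrative choices, not values from any particular platform.

```python
import numpy as np


def vibration_features(signal: np.ndarray, sample_rate: float,
                       band_low: float, band_high: float) -> dict:
    """Compute basic time-domain and spectral features for one vibration window."""
    rms = float(np.sqrt(np.mean(signal ** 2)))
    peak = float(np.max(np.abs(signal)))
    crest_factor = peak / rms

    # Pearson (non-excess) kurtosis: about 3.0 for Gaussian noise, 1.5 for a pure sine.
    centered = signal - signal.mean()
    kurtosis = float(np.mean(centered ** 4) / np.mean(centered ** 2) ** 2)

    # Power spectrum via the real-input FFT, then the share of energy inside the band.
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / sample_rate)
    in_band = (freqs >= band_low) & (freqs <= band_high)
    band_energy_ratio = float(power[in_band].sum() / power.sum())

    return {
        "rms": rms,
        "crest_factor": crest_factor,
        "kurtosis": kurtosis,
        "band_energy_ratio": band_energy_ratio,
    }


# Synthetic one-second window: a unit-amplitude 120 Hz tone sampled at 1 kHz.
sample_rate = 1000.0
t = np.arange(1000) / sample_rate
features = vibration_features(np.sin(2 * np.pi * 120.0 * t), sample_rate, 100.0, 140.0)
```

In practice the band limits would come from the bearing geometry and shaft speed of the specific asset rather than a fixed range.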

Multi-Model Ensemble Inference

Ensemble models—combining isolation forests, LSTM neural networks, and gradient boosting classifiers—score each asset's current feature vector against its learned baseline in real time. Each model specializes in different failure mode signatures, and the ensemble aggregation logic produces a single, calibrated Asset Health Index that reflects composite mechanical condition across all failure dimensions simultaneously.
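One simple way to picture the aggregation step is a weighted average of per-model anomaly scores mapped onto a 0 to 100 scale. The sketch below assumes each model already emits a score in [0, 1]; the model names and weights are hypothetical, and a production system would likely use a calibrated aggregation rather than this plain weighted mean.

```python
from typing import Dict


def asset_health_index(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-model anomaly scores (0 = normal, 1 = anomalous) into a 0-100 health index."""
    total_weight = sum(weights[name] for name in scores)
    weighted_anomaly = sum(scores[name] * weights[name] for name in scores) / total_weight
    return round(100.0 * (1.0 - weighted_anomaly), 1)


# Hypothetical scores from three models for one asset at one point in time.
scores = {"isolation_forest": 0.2, "lstm": 0.4, "gradient_boosting": 0.1}
weights = {"isolation_forest": 0.3, "lstm": 0.5, "gradient_boosting": 0.2}
health = asset_health_index(scores, weights)  # 72.0
```

Normalizing by the total weight keeps the index on the same scale even if one model's score is temporarily unavailable and dropped from `scores`.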

Intelligent Alert Routing & Work Order Generation

When health index scores breach calibrated thresholds, the platform triggers an automated workflow: generating a diagnostic work order with failure mode classification, severity rating, estimated time-to-failure, and recommended corrective action. This work order is pushed simultaneously to the facility's CMMS, the responsible maintenance technician's mobile device, and the operations dashboard—eliminating alert-to-action latency entirely.
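The threshold-to-work-order flow can be sketched as follows. The severity bands, field names, and in-memory "sinks" standing in for the CMMS, mobile, and dashboard endpoints are all assumptions for illustration; a real deployment would call each system's API instead.

```python
from dataclasses import asdict, dataclass
from typing import Callable, List, Optional


@dataclass
class WorkOrder:
    asset_id: str
    failure_mode: str
    severity: str
    est_days_to_failure: float
    recommended_action: str


# Hypothetical bands: a health index below the bound maps to the label.
SEVERITY_BANDS = [(40.0, "critical"), (70.0, "warning")]


def build_work_order(asset_id: str, health_index: float, failure_mode: str,
                     est_days_to_failure: float,
                     recommended_action: str) -> Optional[WorkOrder]:
    """Return a work order if the health index breaches a band, otherwise None."""
    for upper_bound, severity in SEVERITY_BANDS:
        if health_index < upper_bound:
            return WorkOrder(asset_id, failure_mode, severity,
                             est_days_to_failure, recommended_action)
    return None


def route(order: WorkOrder, sinks: List[Callable[[dict], None]]) -> None:
    """Push the same payload to every destination."""
    payload = asdict(order)
    for sink in sinks:
        sink(payload)


# In-memory lists stand in for the CMMS, the technician's device, and the dashboard.
cmms_inbox, mobile_inbox, dashboard_inbox = [], [], []
order = build_work_order("gearbox-3", 35.0, "bearing outer race wear", 18.0,
                         "Replace bearing at next planned stop")
if order is not None:
    route(order, [cmms_inbox.append, mobile_inbox.append, dashboard_inbox.append])
```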

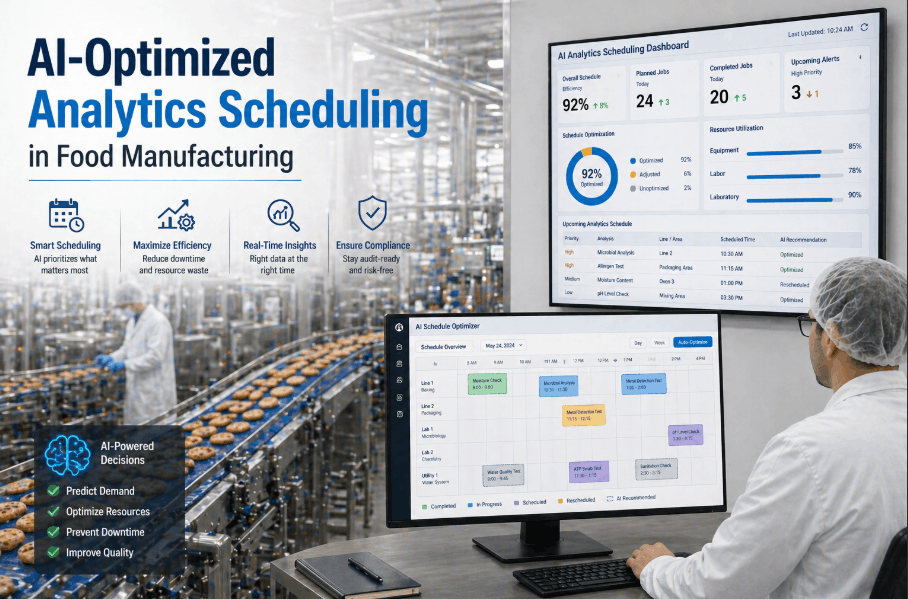

What Operational Analytics Platform Capabilities Matter Most for Food Plants

Not all operational analytics platforms are created equal, and the capabilities that matter most in a food manufacturing context are different from those prioritized in discrete manufacturing or other process industries. Food plants require analytics platforms that can handle the specific challenges of their environment: variable loading profiles driven by recipe changes, washdown cycles that affect sensor baselines, temperature fluctuations across refrigerated and ambient production zones, and strict regulatory documentation requirements that demand audit-ready data trails. Facilities ready to evaluate platform capabilities against their specific requirements can Book a Demo for a live capability demonstration.

Real-Time Asset Health Dashboards

Role-specific dashboards give reliability engineers, operations managers, and executive leadership simultaneous visibility into asset health scores, active anomalies, upcoming maintenance requirements, and OEE performance metrics—all derived from live sensor data and updated continuously without manual refresh cycles.

Equipment-to-Quality Analytics

Cross-system correlation analysis links equipment condition data with quality inspection outcomes, enabling manufacturers to identify which specific mechanical parameters—fill valve wear, conveyor speed variance, mixer torque degradation—are statistically correlated with quality defects before those defects reach finished product inspection.
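At its simplest, this kind of analysis is a correlation between a per-batch equipment metric and a per-batch quality metric. The sketch below computes a Pearson coefficient in plain Python; the torque and defect figures are made-up illustration data, and a real analysis would also need to account for confounders such as recipe and line speed.

```python
from math import sqrt
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


# Hypothetical per-batch data: mean mixer torque (N·m) and defect rate (%).
mixer_torque = [50.0, 52.0, 55.0, 58.0, 61.0, 65.0]
defect_rate = [1.0, 1.2, 1.1, 1.6, 1.9, 2.4]
r = pearson(mixer_torque, defect_rate)
```

A coefficient near 1 here would suggest torque is worth investigating as a leading indicator; it does not by itself establish that torque degradation causes the defects.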

Energy Consumption Anomaly Detection

AI models trained on energy consumption baselines detect when motors, compressors, and HVAC systems are drawing abnormal power relative to their production load—flagging both mechanical degradation signatures and energy waste events that traditional BMS systems are too coarse to identify in their early stages.
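A minimal version of "abnormal power relative to production load" is a linear baseline with a residual threshold. The sketch below fits power against load by least squares and flags readings more than three residual standard deviations from the fit; the data, the linear form, and the three-sigma rule are all assumptions chosen for illustration.

```python
from math import sqrt
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def residual_std(x: Sequence[float], y: Sequence[float],
                 slope: float, intercept: float) -> float:
    """Standard deviation of fit residuals (two degrees of freedom used by the fit)."""
    residuals = [b - (slope * a + intercept) for a, b in zip(x, y)]
    return sqrt(sum(r * r for r in residuals) / (len(residuals) - 2))


def is_anomalous(load: float, power: float, slope: float, intercept: float,
                 sigma: float, z: float = 3.0) -> bool:
    """Flag a reading whose power deviates from the baseline by more than z sigma."""
    return abs(power - (slope * load + intercept)) > z * sigma


# Hypothetical baseline: compressor load (%) against power draw (kW).
load = [20.0, 40.0, 60.0, 80.0, 100.0]
power = [12.1, 21.9, 32.2, 41.8, 52.0]
slope, intercept = fit_line(load, power)
sigma = residual_std(load, power, slope, intercept)

normal = is_anomalous(60.0, 32.3, slope, intercept, sigma)     # within baseline scatter
degraded = is_anomalous(60.0, 38.0, slope, intercept, sigma)   # about 6 kW above baseline
```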

Audit-Ready Compliance Records

Continuous, timestamped equipment performance and maintenance records generated by the enterprise data management layer provide the auditable documentation required for FSMA, BRC, SQF, and FDA inspections—automatically, without manual record compilation or the data integrity risks of paper-based maintenance logs.

Condition-Based Maintenance Scheduling

AI-generated remaining useful life estimates for every monitored asset enable maintenance schedulers to plan interventions precisely—booking technicians, ordering parts, and scheduling production downtime windows weeks in advance based on actual equipment condition rather than calendar-driven assumptions that waste resources on unnecessary replacements.
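To make the idea of a remaining useful life estimate concrete, the sketch below extrapolates a linear trend in a health index to a failure threshold. Real degradation is rarely linear, so this is only the simplest possible stand-in for the models described; the readings and the threshold of 50 are hypothetical.

```python
from typing import Optional, Sequence


def remaining_useful_life(days: Sequence[float], health: Sequence[float],
                          failure_threshold: float) -> Optional[float]:
    """Days from the last reading until a fitted linear trend crosses the threshold.

    Returns None when the trend is flat or improving, since no crossing is predicted.
    """
    n = len(days)
    mean_d, mean_h = sum(days) / n, sum(health) / n
    sxx = sum((d - mean_d) ** 2 for d in days)
    sxy = sum((d - mean_d) * (h - mean_h) for d, h in zip(days, health))
    slope = sxy / sxx
    if slope >= 0:
        return None
    intercept = mean_h - slope * mean_d
    crossing_day = (failure_threshold - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Hypothetical weekly health index readings for one gearbox.
days = [0.0, 7.0, 14.0, 21.0]
health = [90.0, 86.0, 82.0, 78.0]
rul_days = remaining_useful_life(days, health, failure_threshold=50.0)  # about 49 days
```

An estimate like this is what lets a scheduler place the intervention in a planned downtime window several weeks out instead of reacting to a failure.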

Asset Lifecycle & CapEx Intelligence

Historical asset health trajectories and failure rate data give finance and operations teams the quantitative foundation to build accurate total cost of ownership models—enabling data-backed repair versus replace decisions and capital expenditure forecasts that eliminate the guesswork from long-term asset investment planning.

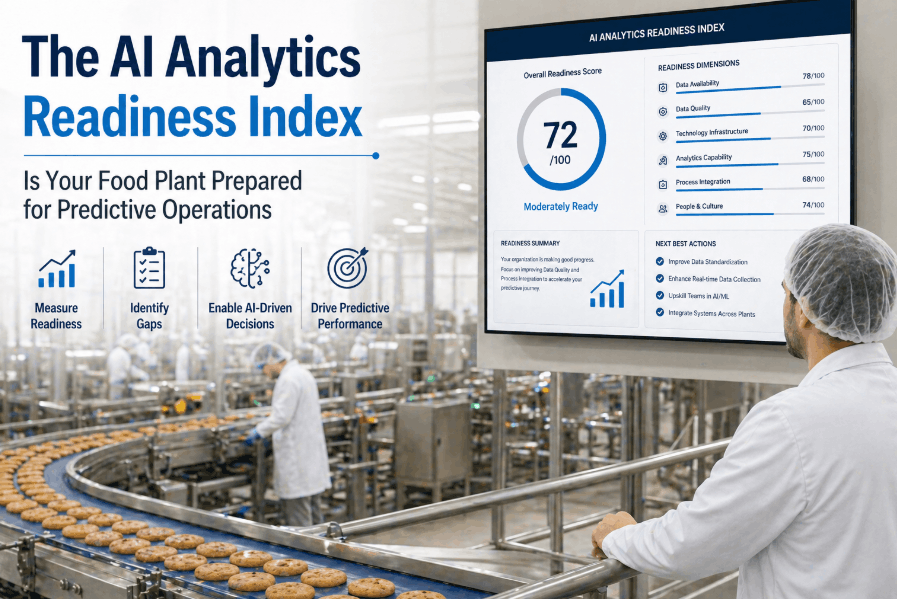

Quantifying the Business Value of AI Data Architecture in Food Production

For plant directors and technology investment committees evaluating an analytics infrastructure overhaul, the business case must be grounded in measurable financial outcomes—not technology capability claims. The value of deploying a unified AI data architecture materializes across four distinct performance dimensions, each of which contributes to a compounding return that accelerates over the first 18 months of platform operation.

Average reduction in time between a production event occurring and that event becoming visible in operational dashboards—from hours to seconds.

Failure detection accuracy achieved by ensemble AI models trained on food manufacturing-specific failure event datasets and calibrated per-asset baselines.

Total maintenance cost reduction driven by eliminating emergency repair premiums, unnecessary preventive replacements, and unplanned overtime labor costs.

Percentage of production assets—regardless of age, manufacturer, or protocol—that can be brought under unified AI monitoring within 72 hours of deployment.

Why the First Prevented Failure Pays for the Entire Architecture Investment

The "single-event ROI" calculation is the most compelling argument for AI data architecture investment in food manufacturing. A single undetected gearbox failure on a primary processing line typically costs between $150,000 and $600,000 once lost production, emergency procurement, overtime labor, product disposal, and customer penalty clauses are included. The complete deployment cost of a unified manufacturing data platform covering an entire facility ranges from $50,000 to $200,000. Across most of these ranges, one prevented catastrophic failure covers the entire platform investment; only when a low-end failure coincides with a high-end deployment does it fall short. Reliability engineers ready to build this business case for their leadership teams can Book a Demo to receive a facility-specific ROI projection based on their actual equipment inventory and production volume data.
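The arithmetic behind the single-event argument is easy to check using the ranges quoted above. The sketch below computes how many times one avoided failure covers the platform cost at the extremes and at the midpoints; the function and variable names are illustrative.

```python
def coverage_ratio(failure_cost: float, platform_cost: float) -> float:
    """How many times one avoided failure pays for the platform."""
    return failure_cost / platform_cost


# Ranges quoted in the text, in US dollars.
FAILURE_COST = (150_000, 600_000)
PLATFORM_COST = (50_000, 200_000)

worst_case = coverage_ratio(FAILURE_COST[0], PLATFORM_COST[1])   # 0.75
best_case = coverage_ratio(FAILURE_COST[1], PLATFORM_COST[0])    # 12.0
midpoint = coverage_ratio(sum(FAILURE_COST) / 2, sum(PLATFORM_COST) / 2)  # 3.0
```

At the midpoints a single prevented failure covers the deployment three times over, while the cheapest failure against the most expensive deployment covers 75% of it.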

AI Data Architecture Deployment Roadmap: From Fragmented Systems to Full Predictive Intelligence

The most common concern plant directors raise when evaluating an analytics infrastructure transformation is implementation complexity. The perception that unifying legacy OT systems, modern IoT platforms, and enterprise IT applications requires months of disruptive integration work is both understandable and outdated. Modern connector-first deployment architectures bring the first AI-powered insights online within 48 to 72 hours of sensor deployment—without stopping a single production line. The full roadmap to enterprise-wide manufacturing intelligence software coverage typically spans six to twelve weeks depending on facility size and system complexity.

Sensor Deployment & Edge Gateway Installation

Industrial MEMS vibration, temperature, and current sensors are mounted on critical production assets using magnetic or adhesive mounts requiring no electrical modifications or production stoppages. Edge gateways are installed in control panels and begin streaming processed feature data to the cloud integration layer within hours of installation.

System Integration & Data Pipeline Activation

Pre-built connectors for SCADA historians, CMMS platforms, and ERP systems are configured and validated. The data pipeline architecture begins normalizing and unifying data streams from all connected systems into the manufacturing data lake. Data quality validation rules are calibrated for the facility's specific operational profiles and production schedules.
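Normalization in this phase amounts to mapping each source's native record shape onto one common schema. The sketch below does this for two sources; the historian export fields, the MQTT topic layout, and the target schema are all hypothetical and would differ per connector.

```python
from datetime import datetime


def from_historian(row: dict) -> dict:
    """Normalize a hypothetical historian export row with dot-separated tag names."""
    line, asset, metric = row["TagName"].lower().split(".")
    return {
        "asset_id": f"{line}/{asset}",
        "metric": metric,
        "ts": datetime.fromisoformat(row["Time"]).timestamp(),
        "value": float(row["Value"]),
        "source": "historian",
    }


def from_mqtt(topic: str, payload: dict) -> dict:
    """Normalize a hypothetical MQTT message published under plant/<line>/<asset>/<metric>."""
    _, line, asset, metric = topic.lower().split("/")
    return {
        "asset_id": f"{line}/{asset}",
        "metric": metric,
        "ts": float(payload["t"]),
        "value": float(payload["v"]),
        "source": "mqtt",
    }


historian_record = from_historian(
    {"TagName": "L1.MIXER.TEMP", "Time": "2024-01-01T00:00:00+00:00", "Value": "71.3"}
)
mqtt_record = from_mqtt("plant/l1/mixer/temp", {"t": 1704067200, "v": 71.3})
```

Once both records share the same keys, units, and timestamp convention, downstream validation rules and models no longer need to know which system a reading came from.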

AI Baseline Learning & Model Calibration

Machine learning models learn the normal operational signature of each asset across all production modes, load conditions, and recipe changeovers. Per-asset anomaly detection thresholds are statistically calibrated to minimize false alarms while maintaining maximum sensitivity to genuine mechanical degradation signals. Initial predictive alerts begin generating within this window for assets already showing early failure indicators.
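One common way to calibrate a per-asset threshold is to set it a fixed number of standard deviations above that asset's own baseline. The sketch below assumes this mean-plus-k-sigma rule with k = 3; the asset names and baseline values are made up, and the approach described above may use a different statistic.

```python
from statistics import mean, stdev
from typing import Dict, List


def calibrate_threshold(baseline: List[float], k: float = 3.0) -> float:
    """Alarm threshold set k sample standard deviations above the baseline mean."""
    return mean(baseline) + k * stdev(baseline)


def calibrate_all(baselines: Dict[str, List[float]], k: float = 3.0) -> Dict[str, float]:
    """Compute one threshold per asset from its own baseline window."""
    return {asset: calibrate_threshold(values, k) for asset, values in baselines.items()}


# Hypothetical vibration RMS baselines (mm/s) gathered during the learning window.
baselines = {
    "pump-12": [1.0, 1.2, 0.8, 1.1, 0.9],
    "mixer-4": [3.0, 3.4, 2.6, 3.2, 2.8],
}
thresholds = calibrate_all(baselines)
```

Raising `k` trades sensitivity for fewer false alarms, which is the balance the calibration step is trying to strike for each asset individually.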

Full Predictive Intelligence & Continuous Improvement

The platform enters full predictive mode with enterprise-wide asset coverage, automated CMMS work order generation, and live dashboards active for all stakeholder groups. The AI model enters its continuous self-improvement cycle—incorporating every resolved maintenance event as new training data, compounding prediction accuracy over time and expanding failure pattern recognition to increasingly subtle mechanical degradation signatures.

AI Data Architecture for Food Manufacturing — Frequently Asked Questions

What is the difference between a data integration platform and a traditional data warehouse for manufacturing?

A traditional data warehouse is a structured, batch-refresh repository designed for historical reporting. A modern data integration platform for manufacturing handles real-time streaming data from OT systems, IoT sensors, and enterprise applications simultaneously—enabling sub-second analytics latency that is essential for predictive maintenance and live production monitoring rather than retroactive analysis.

Can AI data architecture be deployed in facilities with 20-year-old legacy equipment?

Yes. Modern industrial IoT platforms are specifically designed to add monitoring capability to equipment of any age without modification. Wireless MEMS sensors attached externally to legacy motors, gearboxes, and pumps provide the same high-frequency data streams as purpose-built smart assets—bringing aging equipment fully under AI condition monitoring coverage without capital replacement.

How does a data lake for manufacturing differ from a standard cloud storage solution?

A manufacturing data lake is a time-series-optimized storage architecture specifically structured to support industrial analytics workloads—handling millions of time-stamped sensor readings per day with sub-second query performance. Standard cloud object storage lacks the indexing strategies, schema enforcement, and feature store integration layers that AI model inference pipelines require for real-time prediction accuracy.

How long does it take to see ROI from an AI data architecture investment?

Most food manufacturing facilities achieve positive ROI within the first 90 days of deployment—often from a single prevented equipment failure event that would otherwise have caused hours or days of unplanned downtime. The compounding value of improved maintenance scheduling, extended asset lifecycles, and quality incident reduction continues to build over the full deployment lifetime.

Does implementing AI data architecture require a dedicated data science team on-site?

No. Purpose-built predictive analytics software platforms for food manufacturing are designed for deployment and operation by reliability engineers and operations teams—not data scientists. Pre-trained models, automated baseline learning, and no-code dashboard configuration eliminate the need for specialized machine learning expertise within the facility, while vendor data engineering teams handle model maintenance and platform updates remotely.

What cybersecurity protections are built into industrial IoT platform deployments?

Enterprise-grade industrial IoT platforms implement network segmentation that keeps OT and IT networks isolated, with sensor data flowing unidirectionally from OT to the cloud integration layer through encrypted MQTT or OPC-UA tunnels. No inbound connections to plant floor systems are required, which removes the attack surface that inbound connections would create and supports compliance with IEC 62443 industrial cybersecurity standards.