Cloud-based AI for manufacturing predictive analytics sounds convenient until a procurement manager asks where the process data goes, a CISO flags the OT network egress requirement, and a production director discovers that a 200 ms cloud round trip makes real-time anomaly detection impossible on a 1,200 RPM conveyor line. Edge AI solves all three problems by running every AI model, every fault classification, and every alert on servers inside your facility, so your sensor data never leaves the plant network. iFactory deploys on NVIDIA DGX and EGX edge servers, connects to existing PLCs and SCADA via read-only OPC-UA, and delivers the same AI capability as cloud-based platforms with none of the data sovereignty, latency, or cybersecurity trade-offs. Book a free edge AI assessment for your plant today.

iFactory's edge AI platform deploys on NVIDIA DGX or EGX servers physically located inside your manufacturing facility, connected to plant sensors and control systems via read-only OPC-UA or Modbus. All AI inference, fault classification, alert generation, and compliance record-keeping run locally. Zero sensor data is transmitted to any cloud endpoint. Inference latency is under 10 milliseconds. The deployment satisfies IEC 62443, NIST 800-82, NERC CIP, GDPR, and UAE data localization requirements by architecture, not by configuration workaround.
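To put the latency figures in context, the short calculation below converts a response delay into shaft revolutions at the 1,200 RPM line speed from the introduction. It is only a back-of-the-envelope sketch: the function name is illustrative, and the 200 ms and 10 ms inputs are the figures quoted in the text.

```python
def revolutions_during(latency_ms: float, rpm: float) -> float:
    """Return how many revolutions elapse while waiting for a response."""
    revs_per_second = rpm / 60.0
    return revs_per_second * (latency_ms / 1000.0)


cloud_revs = revolutions_during(200, 1200)  # 200 ms cloud round trip
edge_revs = revolutions_during(10, 1200)    # 10 ms local inference bound

print(f"cloud: {cloud_revs:.1f} revolutions, edge: {edge_revs:.1f} revolutions")
```

At 1,200 RPM the equipment completes 20 revolutions per second, so a 200 ms round trip spans about four full revolutions, while a 10 ms response arrives within a fifth of one.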

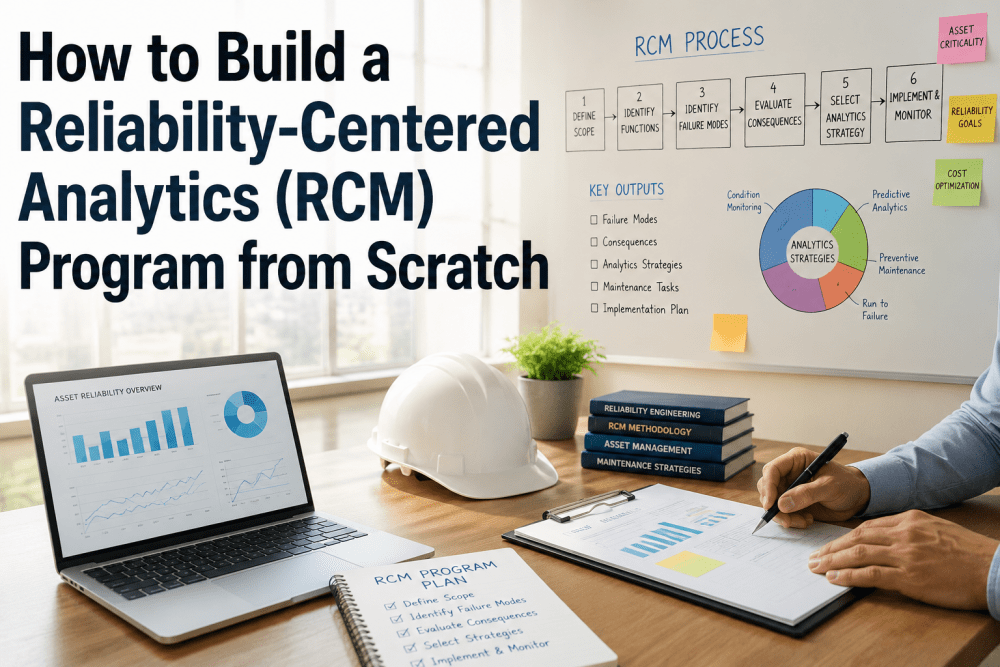

Three Deployment Modes: Why On-Premise Edge AI Is the Only Viable Option for Manufacturing OT

Three deployment architectures exist for industrial AI. Each has a specific trade-off profile. For manufacturing OT environments with strict cybersecurity, latency, and data sovereignty requirements, the trade-offs of cloud and hybrid models are operationally and legally unacceptable in most jurisdictions. Book a demo to see iFactory's edge architecture configured for your OT network topology.

Cloud AI: Sensor data streams from the plant PLC or SCADA to a cloud endpoint via internet or VPN. AI models run on cloud servers. Alerts and dashboards are delivered back to plant operators over the same internet connection.

Hybrid edge-cloud AI: A local edge device runs basic anomaly detection. More complex AI analysis and model training happen on cloud servers. Alerts may be generated locally, but model updates require cloud connectivity.

On-premise edge AI: NVIDIA DGX and EGX servers are physically located inside the facility and connected to plant sensors read-only. All AI inference, model training, and compliance record-keeping run locally. A full air-gap option is available for the highest-security environments.

iFactory Edge AI Architecture: From Plant Sensor to AI Work Order

Five architectural layers, each running inside your facility on hardware you own, controlled by your team, with no external dependency at any layer.

Vibration sensors, temperature probes, pressure transmitters, and current CT clamps connect to plant PLCs or directly to iFactory's I/O edge gateway. Existing SCADA and DCS connections are read-only via OPC-UA or Modbus. No modification to any control system is required at this layer. All raw data remains in the plant OT network.
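The read-only principle described above can be illustrated with a small wrapper that exposes reads and rejects writes. This is a sketch under stated assumptions, not iFactory's implementation or an OPC-UA client: `ReadOnlyTagSource`, the tag name, and the simulated PLC dictionary are all hypothetical.

```python
from typing import Callable, Dict


class ReadOnlyTagSource:
    """Expose tag reads only (hypothetical wrapper, not a real OPC-UA API)."""

    def __init__(self, read_tag: Callable[[str], float]) -> None:
        # Only a read function is injected, so this object holds no write path.
        self._read_tag = read_tag

    def read(self, tag: str) -> float:
        return self._read_tag(tag)

    def write(self, tag: str, value: float) -> None:
        # Any attempt to write is rejected explicitly.
        raise PermissionError(f"write to {tag!r} blocked: source is read-only")


# Stand-in for a PLC or SCADA connection (illustrative values only).
simulated_plc: Dict[str, float] = {"motor1.temperature_c": 61.5}
source = ReadOnlyTagSource(simulated_plc.__getitem__)

temperature = source.read("motor1.temperature_c")
try:
    source.write("motor1.temperature_c", 0.0)
    write_blocked = False
except PermissionError:
    write_blocked = True
```

In a real installation, read-only access would normally also be enforced on the control system side through server access rights, so the client-side wrapper is a second line of defense rather than the only one.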

NVIDIA EGX edge nodes at each monitoring zone collect, time-stamp, and buffer sensor data. High-frequency signals (vibration at 10 kHz+) are preprocessed locally into frequency domain representations before transmission to the AI engine. This distributed edge layer maintains monitoring continuity during any AI server maintenance period without any data loss.
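As an illustration of converting a high-frequency vibration signal into a frequency-domain representation, the sketch below computes a magnitude spectrum with a naive discrete Fourier transform from the standard library. The 10 kHz sample rate comes from the text; the 1 kHz test tone, the window length, and all names are assumptions chosen for the example, and a production system would more likely use an optimized FFT library.

```python
import cmath
import math
from typing import List


def magnitude_spectrum(samples: List[float]) -> List[float]:
    """Naive DFT magnitudes for bins 0..N/2 (O(N^2), fine for a short sketch)."""
    n = len(samples)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(
            x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)
        )
        spectrum.append(abs(acc) / n)
    return spectrum


SAMPLE_RATE_HZ = 10_000  # matches the 10 kHz figure in the text
TONE_HZ = 1_000          # assumed frequency of the synthetic test signal
N = 200                  # 20 ms window, which gives 50 Hz per bin

signal = [math.sin(2 * math.pi * TONE_HZ * i / SAMPLE_RATE_HZ) for i in range(N)]
spectrum = magnitude_spectrum(signal)

# The strongest bin identifies the dominant vibration frequency.
peak_bin = max(range(len(spectrum)), key=spectrum.__getitem__)
peak_hz = peak_bin * SAMPLE_RATE_HZ / N
```

Sending the spectrum instead of raw samples reduces the data volume passed to the AI engine: here 200 time-domain samples become 101 frequency bins, most of them near zero.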

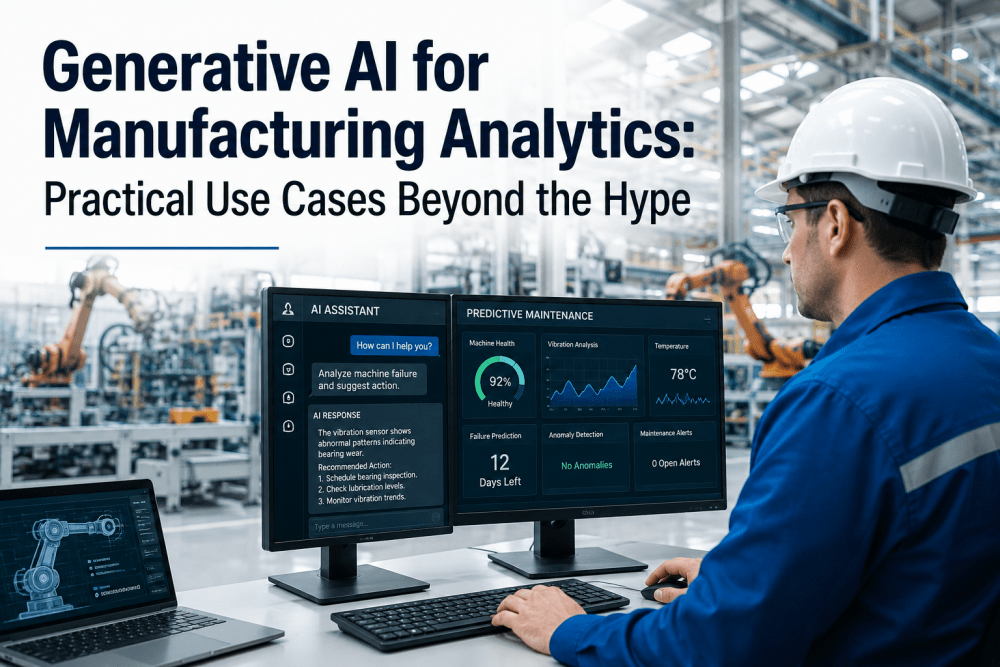

NVIDIA DGX servers run all AI inference locally. GPU-accelerated multi-sensor models process vibration spectra, thermal trends, pressure analytics, and current signatures simultaneously per asset. Fault classification, remaining useful life estimation, and alert generation all complete in under 10 milliseconds. Model training and refinement run during off-hours on the same hardware using accumulated plant-specific operational data.
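Remaining useful life estimation can take many forms, and the text does not say which models iFactory uses. As a deliberately simple stand-in, the sketch below fits a least-squares line to a rising health indicator and extrapolates to a failure threshold. The function name, the synthetic vibration values, and the 4.5 mm/s threshold are all assumptions for illustration.

```python
from typing import Sequence


def estimate_rul_hours(hours: Sequence[float], indicator: Sequence[float],
                       failure_threshold: float) -> float:
    """Fit a least-squares line to the indicator and extrapolate to the threshold."""
    n = len(hours)
    mean_t = sum(hours) / n
    mean_y = sum(indicator) / n
    slope = (sum((t - mean_t) * (y - mean_y) for t, y in zip(hours, indicator))
             / sum((t - mean_t) ** 2 for t in hours))
    if slope <= 0:
        return float("inf")  # no upward trend, so no predicted crossing
    intercept = mean_y - slope * mean_t
    crossing_time = (failure_threshold - intercept) / slope
    # Remaining life is counted from the most recent observation.
    return max(0.0, crossing_time - hours[-1])


# Synthetic vibration RMS trend (mm/s) rising 0.01 mm/s per hour; illustrative only.
hours = [0, 24, 48, 72, 96]
rms = [2.0, 2.24, 2.48, 2.72, 2.96]
rul = estimate_rul_hours(hours, rms, failure_threshold=4.5)
```

With this synthetic trend the fitted line crosses the threshold at hour 250, which leaves roughly 154 hours after the last reading at hour 96.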

Confirmed fault alerts automatically generate condition-based work orders in iFactory's native CMMS or push to your existing asset management system (SAP PM, IBM Maximo, Oracle EAM, Fiix, MaintainX) via secure API running entirely within the plant network. Work orders include fault type, affected component, severity, maintenance recommendation, and parts list pre-populated from local inventory records. All integration traffic stays within the facility network.
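The work order contents listed above map naturally onto a small record type. The following sketch shows one possible shape for such a payload; `WorkOrder`, `build_work_order`, the alert fields, and the inventory entries are hypothetical and do not represent iFactory's schema or any CMMS vendor's API.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class WorkOrder:
    """Hypothetical payload; fields mirror the list in the text, not a real schema."""
    fault_type: str
    affected_component: str
    severity: str
    recommendation: str
    parts: List[str] = field(default_factory=list)


def build_work_order(alert: Dict[str, str], inventory: Dict[str, List[str]]) -> WorkOrder:
    # Pre-populate the parts list from local inventory records for this fault type.
    parts = inventory.get(alert["fault_type"], [])
    return WorkOrder(
        fault_type=alert["fault_type"],
        affected_component=alert["component"],
        severity=alert["severity"],
        recommendation=alert["recommendation"],
        parts=list(parts),
    )


# Illustrative alert and inventory data.
alert = {
    "fault_type": "bearing_outer_race",
    "component": "conveyor drive motor M3",
    "severity": "high",
    "recommendation": "Replace drive-end bearing at next planned stop",
}
inventory = {"bearing_outer_race": ["6205-2RS bearing", "grease cartridge"]}

order = build_work_order(alert, inventory)
payload = json.dumps(asdict(order), indent=2)  # body for a CMMS API call inside the plant network
```

Keeping the payload as plain JSON means the same record could be written to a native CMMS or pushed to an external asset management system through whatever integration endpoint that system exposes.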

Every sensor reading, AI alert, work order, maintenance action, and system change is recorded in an immutable, cryptographically signed audit trail stored entirely on local storage. Compliance reports for OSHA PSM, ADNOC, PUWER, NERC CIP, ISO 55001, and regional frameworks are generated from this local audit trail on demand, with no external data access required. Audit packages for statutory inspections assemble automatically in under 60 seconds.
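One common way to make a locally stored audit trail tamper-evident is to chain records together so that each signature covers the previous one. The sketch below demonstrates the idea with a keyed hash (HMAC-SHA256) standing in for a digital signature. The key, record fields, and function names are assumptions, and nothing here is taken from iFactory's actual implementation.

```python
import hashlib
import hmac
import json
from typing import Dict, List

SIGNING_KEY = b"demo-key-kept-on-local-hardware"  # placeholder; use a managed key in practice


def _sign(event: Dict, prev_sig: str) -> str:
    body = json.dumps({"event": event, "prev": prev_sig}, sort_keys=True)
    return hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).hexdigest()


def append_record(log: List[Dict], event: Dict) -> None:
    """Append an event whose signature also covers the previous record's signature."""
    prev_sig = log[-1]["signature"] if log else ""
    log.append({"event": event, "prev": prev_sig, "signature": _sign(event, prev_sig)})


def verify_chain(log: List[Dict]) -> bool:
    """Recompute every signature; an edited, removed, or reordered record breaks the chain."""
    prev_sig = ""
    for record in log:
        expected = _sign(record["event"], prev_sig)
        if record["prev"] != prev_sig or not hmac.compare_digest(expected, record["signature"]):
            return False
        prev_sig = record["signature"]
    return True


audit_log: List[Dict] = []
append_record(audit_log, {"type": "alert", "asset": "pump-7"})
append_record(audit_log, {"type": "work_order", "asset": "pump-7"})
intact = verify_chain(audit_log)

audit_log[0]["event"]["asset"] = "pump-9"  # simulate tampering with a stored record
tampered_detected = not verify_chain(audit_log)
```

Because each record's signature depends on the one before it, changing any historical entry invalidates that entry and everything recorded after it.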

iFactory's five-layer edge architecture delivers full GPU-accelerated predictive maintenance, automatic work order generation, and compliance audit trail capability with zero internet connectivity required. Air-gap deployment available for maximum-security OT environments.

iFactory vs Competing Edge AI and On-Premise Platforms

Most platforms marketed as "edge" still require cloud connectivity for model training, dashboard access, or alert notification. iFactory is the only manufacturing predictive analytics platform that runs all AI inference, training, dashboards, and compliance documentation fully on-premise without any cloud dependency. Book a demo to review iFactory's edge architecture against your current or planned platform.

| Capability | iFactory | TRACTIAN | Augury | Siemens Insights Hub | C3 AI Mfg | MaintainX | Fiix (Rockwell) | Limble CMMS |
|---|---|---|---|---|---|---|---|---|
| Edge and On-Premise Architecture | | | | | | | | |
| All AI inference runs on-premise (no cloud calls) | 100% local on NVIDIA DGX/EGX | Cloud required for AI | Cloud required for AI | Hybrid: edge preprocessing, cloud AI | Cloud required for AI | Cloud SaaS only | Cloud SaaS only | Cloud SaaS only |
| Full air-gap (zero external network interfaces) | Full air-gap available | Not available | Not available | Not available | Not available | Not available | Not available | Not available |
| AI inference latency | Under 10ms (GPU local) | 100ms-1s (cloud) | 100ms-1s (cloud) | 50-500ms (hybrid) | 200ms-2s (cloud) | Not applicable | Not applicable | Not applicable |
| On-premise AI model training (no cloud data upload) | Full local GPU training | Cloud training required | Cloud training required | Partial hybrid | Cloud training required | No AI models | No AI models | No AI models |
| Security and Compliance Architecture | | | | | | | | |
| IEC 62443 OT zone isolation by architecture | Zone 2 and 3 segmentation native | Cloud egress violates zones | Cloud egress violates zones | Partial (Siemens ecosystem) | Cloud egress violates zones | Requires OT firewall config | Requires OT firewall config | Requires OT firewall config |
| GDPR and data sovereignty satisfied by architecture | Zero data egress, fully on-premise | Cloud data transfer creates exposure | Cloud data transfer creates exposure | EU cloud region available | Cloud data transfer creates exposure | Data processing agreement required | Data processing agreement required | Data processing agreement required |
| NERC CIP Electronic Security Perimeter compliance | CIP-005 to CIP-013 by architecture | Cloud violates CIP-005 ESP | Cloud violates CIP-005 ESP | Customer-managed CIP | Cloud violates CIP-005 ESP | Customer-managed | Customer-managed | Customer-managed |

Based on publicly available documentation as of Q1 2025. Verify capabilities with each vendor before procurement decisions.

Regional Compliance: How On-Premise Edge AI Satisfies Every Major Requirement

Data sovereignty, OT cybersecurity, and critical infrastructure protection requirements across all major manufacturing jurisdictions are easier to satisfy with on-premise architecture than with cloud architecture. iFactory's edge deployment satisfies every framework below by architecture, without supplementary cloud compliance configuration.

| Region | Key OT and Data Compliance Standards | How On-Premise Edge AI Satisfies Requirements | Why Cloud AI Fails These Requirements |
|---|---|---|---|
| USA | NIST SP 800-82 (OT security) / NERC CIP-005 to CIP-013 (BES cyber) / CISA OT security guidance / ITAR (defense manufacturing) / EAR (controlled technology) | iFactory deploys inside NERC CIP Electronic Security Perimeter as EACMS. Read-only DCS. No internet. CIP-005 through CIP-013 satisfied. ITAR data never leaves facility. | Cloud-connected systems violate NERC CIP ESP isolation requirements. ITAR-controlled process data cannot be transmitted to cloud endpoints under 22 CFR 120. |
| UAE | UAE Data Protection Law (Federal Decree 45/2021) / ADNOC cybersecurity standards / NCA ECC-1 data localization / IEC 62443 / UAE Critical Information Infrastructure protection | All data remains in UAE facility. UAE data localization requirements satisfied without supplementary data residency agreements. NCA ECC-1 and ADNOC standards met by on-premise deployment. | Cloud providers require specific data residency configuration to comply with UAE data localization. ADNOC OT cybersecurity standards prohibit cloud data egress for process data. |
| UK | UK GDPR / UK NIS Regulations 2018 / NCSC OT Security guidance / DSPT (healthcare manufacturing) / UK Cyber Essentials / IEC 62443 | UK GDPR data minimization satisfied: zero data leaves facility. NIS Regulations OT security met by IEC 62443 zone isolation. NCSC OT guidance implemented by read-only DCS integration. | UK GDPR requires lawful basis and DPA for cloud processing. NCSC OT guidance recommends OT-IT network isolation that cloud connectivity undermines. |
| Canada | PIPEDA / Bill C-27 (CPPA) / CCCS OT security guidance / NERC CIP (Canadian BES) / Quebec Law 25 (Act 25) / CSA Z1000 | PIPEDA data minimization and purpose limitation satisfied: process data not collected beyond facility. Quebec Law 25 data residency satisfied. NERC CIP Canadian BES requirements met by on-premise architecture. | PIPEDA and Quebec Law 25 cross-border data transfer rules apply to cloud processing. NERC CIP Canadian BES cyber regulations mirror US CIP and prohibit cloud-connected OT systems on BES. |
| Germany / EU | EU GDPR / EU NIS2 Directive / BSI IT-Grundschutz / KRITIS regulation / IEC 62443 / EU AI Act (Article 6 high-risk AI in critical infrastructure) / BDSG | GDPR data minimization and purpose limitation: zero personal or process data egress. NIS2 essential entity OT security met by IEC 62443 zone architecture. EU AI Act high-risk AI governance satisfied by on-premise audit trail. | GDPR requires explicit legal basis and SCCs for non-EU cloud processing. NIS2 OT security requirements and KRITIS obligations make cloud-connected OT systems a compliance risk for German critical infrastructure operators. |
| Australia | SOCI Act 2018 (Critical Infrastructure) / Privacy Act 1988 / ASD Essential Eight / Australian Cyber Security Centre OT guidance / ASD ISM (Information Security Manual) | SOCI Act critical infrastructure obligations met by on-premise deployment: no data egress from regulated asset. ASD Essential Eight controls applied at edge server level. Privacy Act APP 8 cross-border disclosure rules satisfied. | SOCI Act regulated asset data egress to cloud requires specific risk assessment and notification. ASD ISM and Essential Eight OT guidance recommends network isolation that cloud monitoring systems undermine. |

iFactory's on-premise edge AI satisfies GDPR, PIPEDA, UAE data localization, NERC CIP, NIS2, SOCI, and ITAR requirements without a data residency agreement, supplementary cloud compliance configuration, or months of middleware documentation. Zero data egress means zero compliance exposure from data transfers.

Results: Manufacturing Plants Running iFactory Edge AI

GPU-accelerated local inference on NVIDIA DGX hardware processes multi-sensor data from all monitored assets simultaneously in under 10 milliseconds. Real-time fault detection is possible even for high-speed rotating equipment at 10 kHz+ vibration sampling rates.

Across all iFactory edge deployments, zero sensor data, process data, or operational records have been transmitted to any cloud endpoint. Every deployment operates entirely within the facility's OT network perimeter with zero internet connectivity required.
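A zero-egress claim of this kind can be spot-checked by auditing the addresses a deployment is configured to talk to. The sketch below uses Python's standard `ipaddress` module to flag any endpoint that is not in a private or otherwise non-public range. The endpoint list and function name are hypothetical, and a check like this complements firewall and network monitoring rather than replacing them.

```python
import ipaddress
from typing import Iterable, List


def external_endpoints(addresses: Iterable[str]) -> List[str]:
    """Return configured IPs that the ipaddress module does not classify as private."""
    return [a for a in addresses if not ipaddress.ip_address(a).is_private]


# Hypothetical endpoint list; the addresses are illustrative, not from a real deployment.
configured = ["10.20.0.15", "192.168.40.2", "172.16.8.9"]

violations = external_endpoints(configured)           # all plant-internal addresses
leaky = external_endpoints(configured + ["8.8.8.8"])  # one public address added
```

An empty result means every configured endpoint sits in a private range, while any returned address is a candidate egress path that needs review.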

A complete edge AI deployment, including NVIDIA server commissioning, OT network integration, AI baseline learning, and first predictive alert generation, is delivered within 6 to 8 weeks of project kickoff, without any production stoppage at any stage.

iFactory's read-only DCS and SCADA integration never creates a bidirectional connection or any path for external access to OT systems. All integration is strictly inbound data collection with no write capability and no external network connectivity.

Fault detection accuracy is measured across bearing, gear, thermal, pressure, and electrical fault types after the 21-day baseline learning period. On-premise model training on plant-specific operational data achieves higher accuracy than generic cloud models trained on cross-client datasets.
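The role of a baseline learning period can be shown with a minimal statistical example: learn the normal mean and spread of a signal, then flag readings that deviate too far from it. This z-score check is a simplified stand-in, not the multi-sensor models described in this article, and the temperature values, threshold, and names are assumptions.

```python
import statistics
from typing import List, Sequence


def anomalies(baseline: Sequence[float], readings: Sequence[float],
              z_threshold: float = 3.0) -> List[int]:
    """Return indices of readings more than z_threshold std devs from the baseline mean."""
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    return [i for i, x in enumerate(readings) if abs(x - mean) > z_threshold * stdev]


# Illustrative bearing temperatures (deg C) collected during a baseline period.
baseline_temps_c = [60.1, 59.8, 60.3, 60.0, 59.9, 60.2, 60.0, 59.7]
new_temps_c = [60.1, 60.4, 63.5, 59.9]

flagged = anomalies(baseline_temps_c, new_temps_c)
```

Because the thresholds come from the plant's own baseline data, a reading that is normal on one machine can still be flagged on another whose typical operating range is narrower.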

Most manufacturing plants achieve full edge AI deployment cost recovery within 60 days from prevented equipment failures and the immediate energy savings identified by compressed air and utility monitoring in the first weeks after commissioning.

iFactory's five-layer edge AI architecture delivers full GPU-accelerated predictive maintenance, automatic work order generation, and multi-jurisdiction compliance documentation with zero internet connectivity, zero data egress, and zero cloud dependency at any operational stage. Air-gap deployment available. First predictive alerts within 6 to 8 weeks of installation.